@KPS

Unfortunately, no. I just replicate and avoid migrating a live VM to the host that is the replication target.

Posts

-

RE: DUPLICATE_MAC_SEED

-

RE: VM backup retry - status failed despite it was done on second attempt

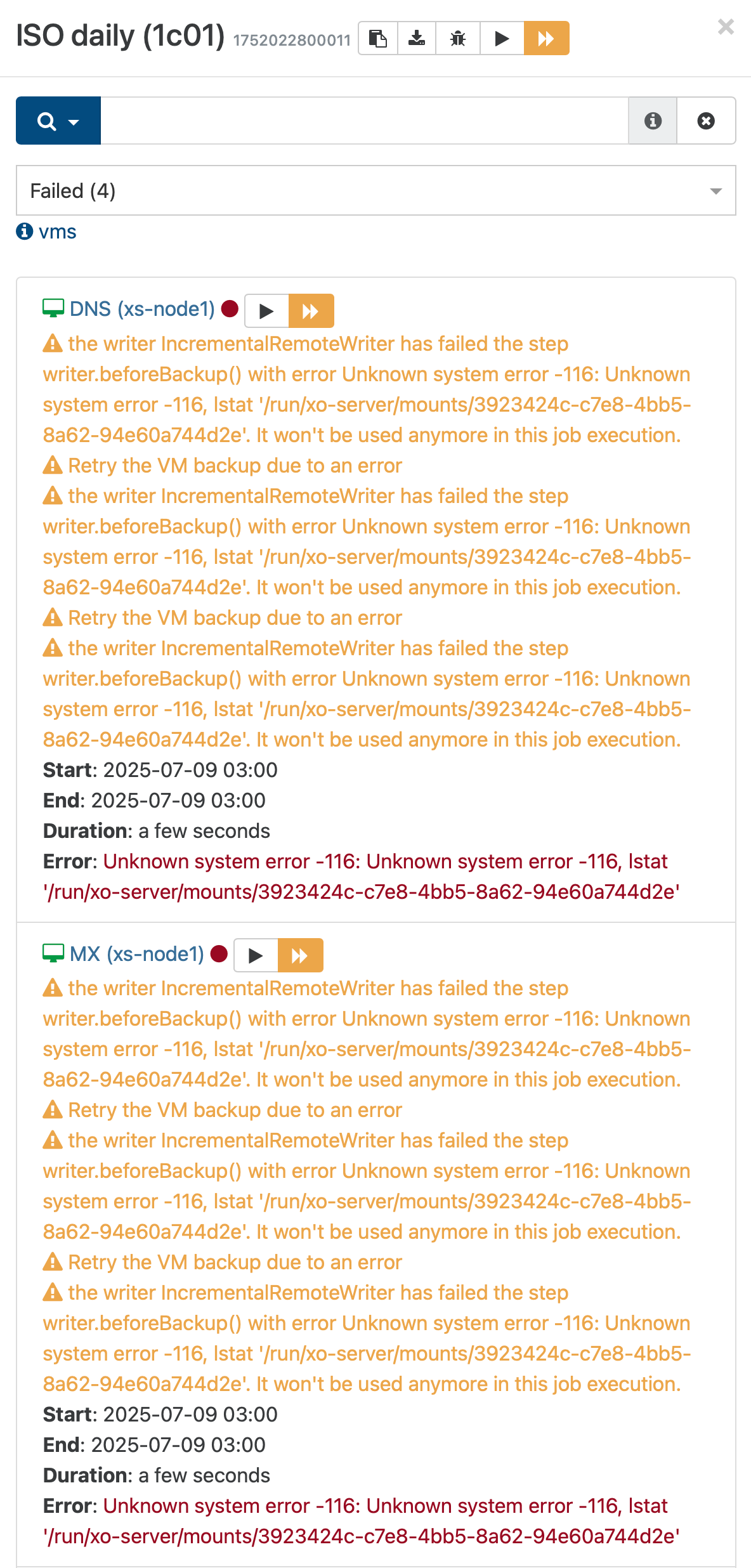

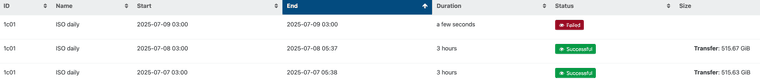

Since this morning...

Yesterday everything was OK.

-

RE: VM backup retry - status failed despite it was done on second attempt

@lsouai-vates

Sure, I understand that the issues might be related to changes in backup processing under the hood.

I hope my report helps with identifying the bugs.

Are the EEXIST errors also related to this?

-

VM backup retry - status failed despite it was done on second attempt

Hi,

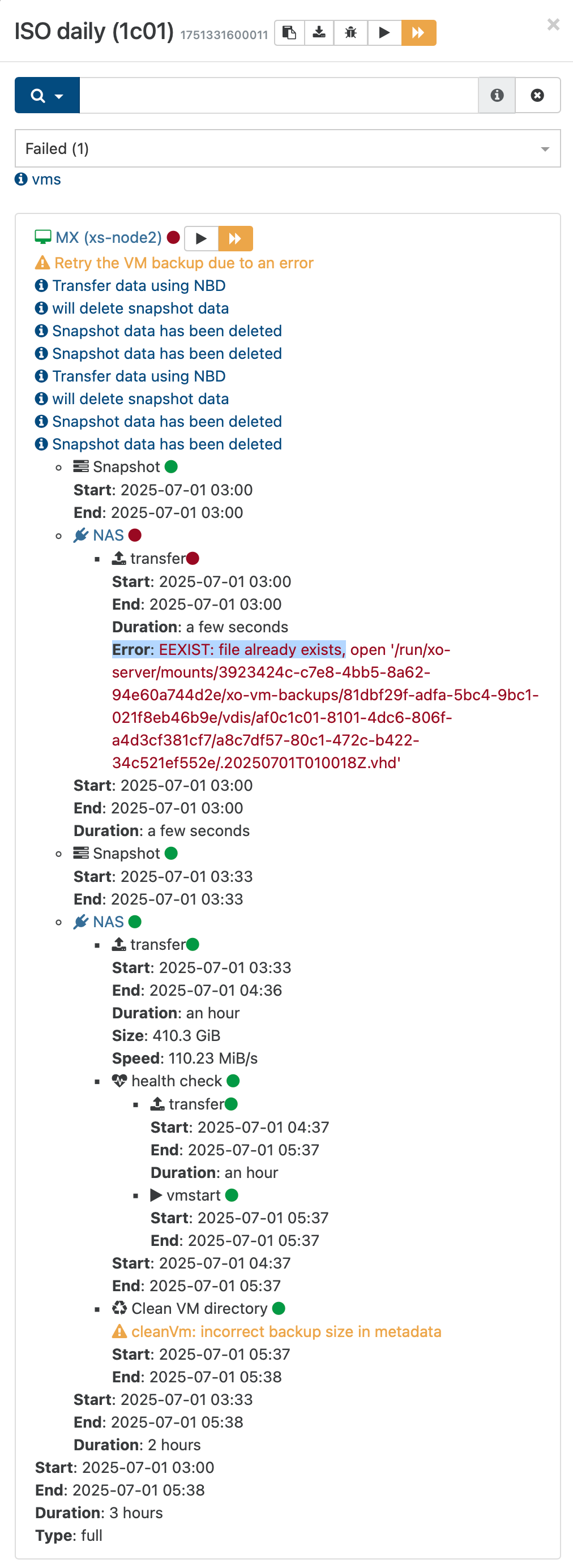

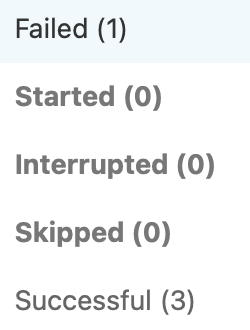

I see that the changes to the backup engine are causing a lot of new errors.

- There are many "Error: EEXIST: file already exists" errors, which never happened in the past. Restarting the same backup usually just works.

- Because of the first issue, I've added the "retry" option to each backup. Now, even when an error occurs and the second attempt succeeds, the overall status of the backup task is set to failed.

This is what it looks like.

This backup job is an old one which has been running in my environment for months, if not years.

Backups are stored on a NAS via NFS. The other VMs in this job are processed successfully.

Backup job logs attached.

2025-07-01T01_00_00.011Z - backup NG.json.txt

-

RE: DUPLICATE_MAC_SEED

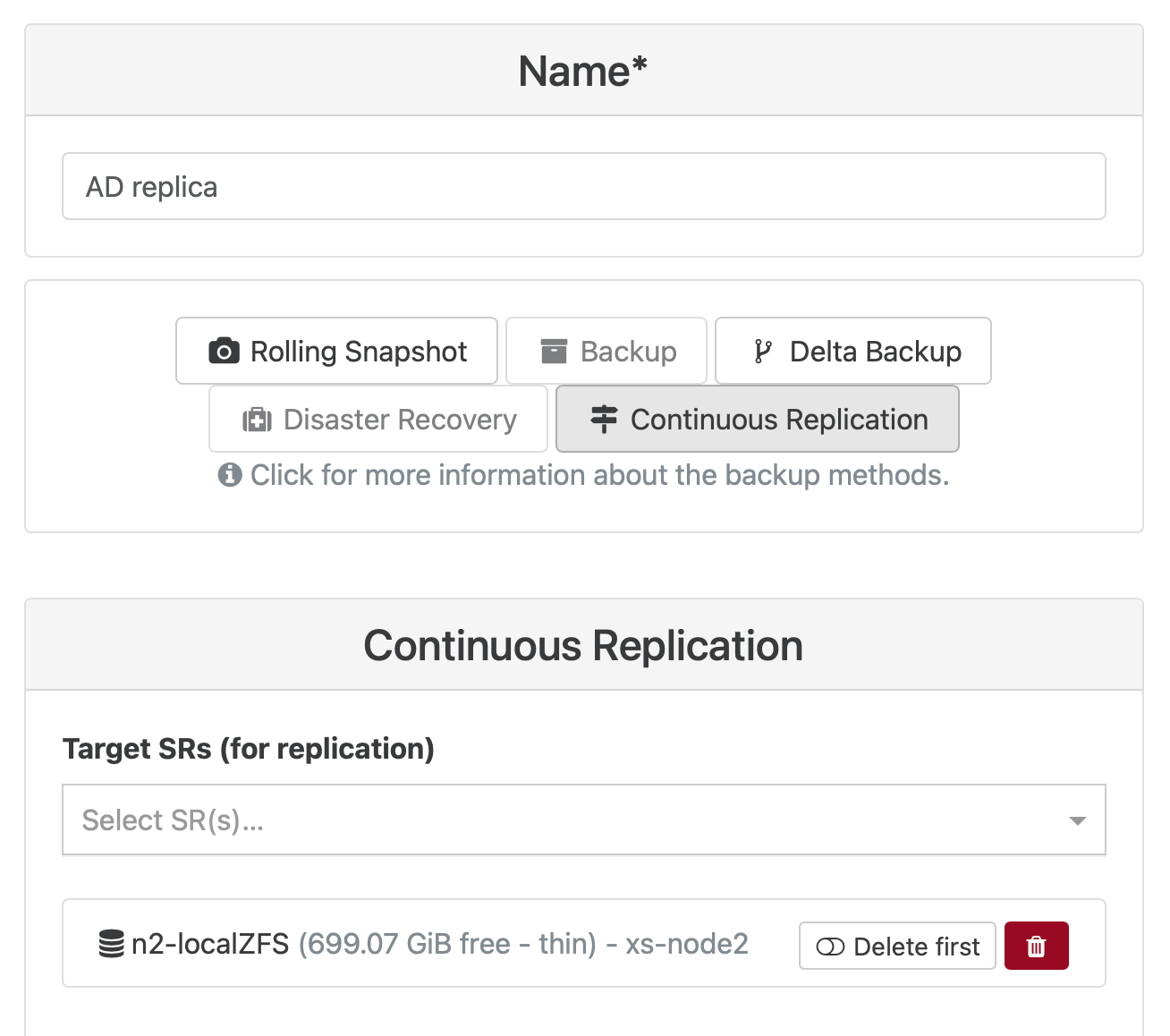

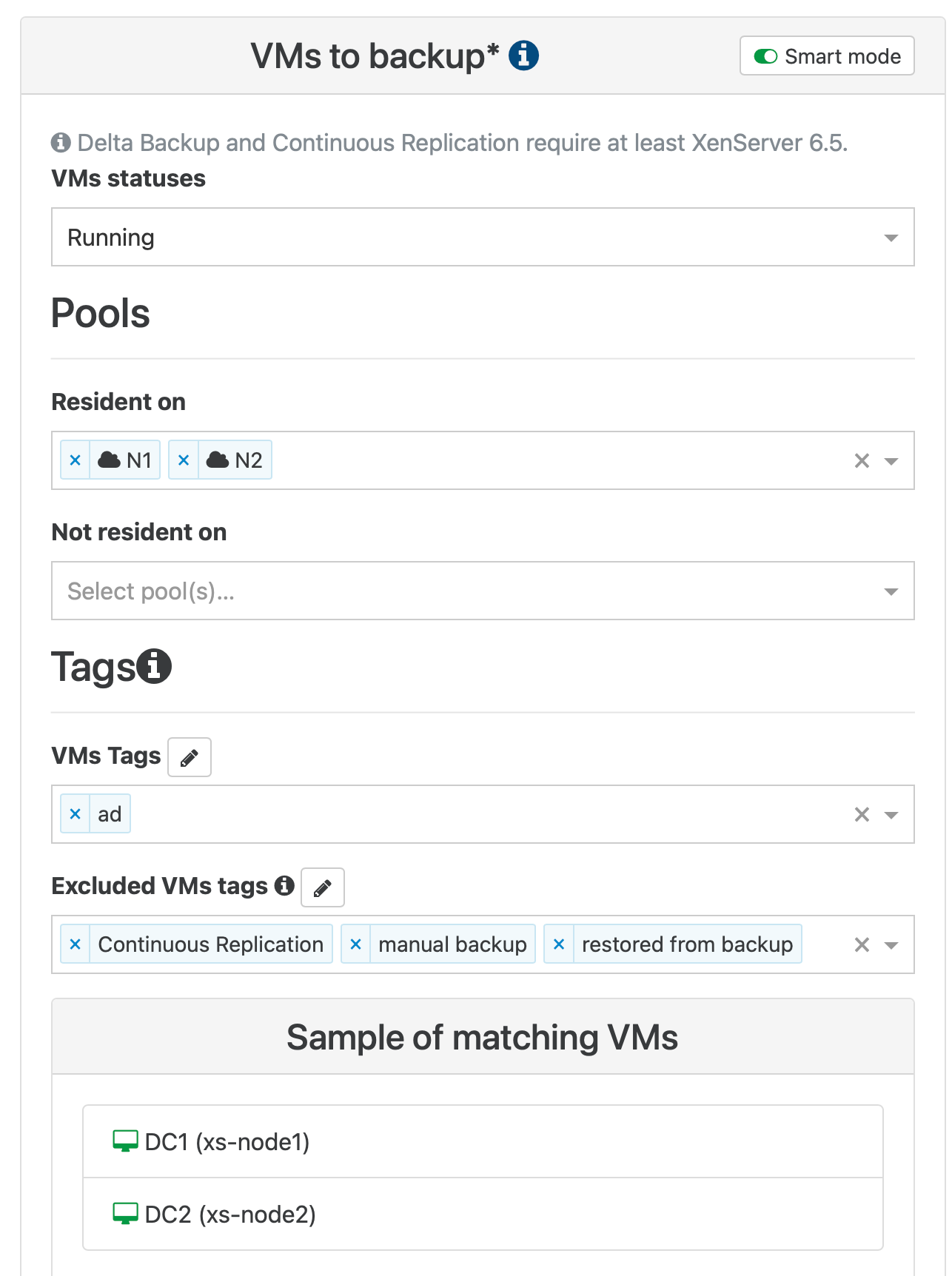

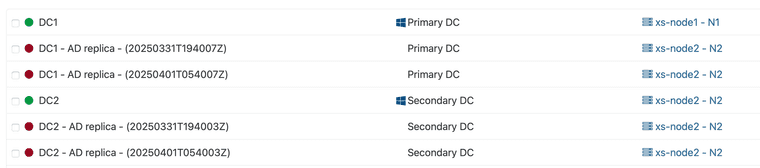

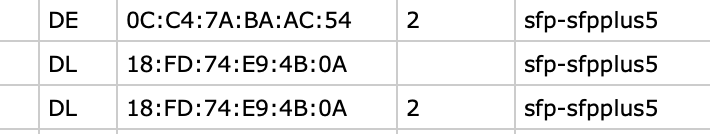

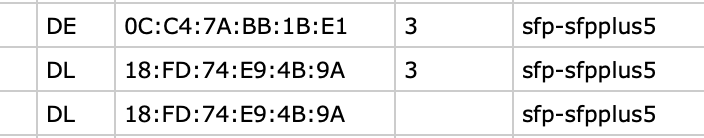

Look, I have xs-node1 and xs-node2. As you can see, DC1 runs on node1 and has a replica on a separate, replica-dedicated SR on node2... DC2 runs on node2 and also has a replica on this replica-dedicated SR.

Both xs-node1 and xs-node2 have only local NVMe storage for running VMs, and xs-node2 also has a ZFS pool for replicas.

When I try to migrate DC1 to the NVMe SR on xs-node2, I get this DUPLICATE_MAC_SEED error.

Only one DC1 VM is running across both xs-nodes...

-

RE: DUPLICATE_MAC_SEED

@DustinB The main reason is OS license costs... I agree that it would be easier to have a single pool.

-

DUPLICATE_MAC_SEED

I have 2 XCP-NG hosts: one is used to run VMs and the other is the target for VM replicas.

When I try to migrate a running VM to the other host, which is the target of backup replication and therefore already holds VM objects created during replication, I get the DUPLICATE_MAC_SEED error.

I need to move all running VMs to this second host to perform maintenance on the main one.

It was possible before.

Xen Orchestra, commit 6d993

Master, commit ec782

-

RE: CBT: the thread to centralize your feedback

@icompit

Xen Orchestra, commit a5967

Master, commit a5967

-

RE: CBT: the thread to centralize your feedback

@icompit Full backups of the same VMs are processed without a problem.

{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1728349200005", "jobId": "af0c1c01-8101-4dc6-806f-a4d3cf381cf7", "jobName": "ISO", "message": "backup", "scheduleId": "0e073624-a7eb-47ca-aca5-5f1cd0fc996b", "start": 1728349200005, "status": "success", "infos": [ { "data": { "vms": [ "81dbf29f-adfa-5bc4-9bc1-021f8eb46b9e", "fc1c7067-69f3-8949-ab68-780024a49a75", "07c32e8d-ea85-ffaa-3509-2dabff58e4af", "66949c31-5544-88fe-5e49-f0e0c7946347" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "81dbf29f-adfa-5bc4-9bc1-021f8eb46b9e", "name_label": "MX" }, "id": "1728349200726", "message": "backup VM", "start": 1728349200726, "status": "success", "tasks": [ { "id": "1728349200738", "message": "clean-vm", "start": 1728349200738, "status": "success", "end": 1728349200773, "result": { "merge": false } }, { "id": "1728349200944", "message": "snapshot", "start": 1728349200944, "status": "success", "end": 1728349202402, "result": "c9285da2-1b13-e8a7-7652-316fbfde76fd" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": true, "type": "remote" }, "id": "1728349202402:0", "message": "export", "start": 1728349202402, "status": "success", "tasks": [ { "id": "1728349202859", "message": "transfer", "start": 1728349202859, "status": "success", "end": 1728355287481, "result": { "size": 358491499520 } }, { "id": "1728355310406", "message": "health check", "start": 1728355310406, "status": "success", "tasks": [ { "id": "1728355311238", "message": "transfer", "start": 1728355311238, "status": "success", "end": 1728359446575, "result": { "size": 358491498496, "id": "17402cd8-bd52-98e1-7951-3d47a03dcbf4" } }, { "id": "1728359446575:0", "message": "vmstart", "start": 1728359446575, "status": "success", "end": 1728359477580 } ], "end": 1728359492584 }, { "id": "1728359492598", "message": "clean-vm", "start": 1728359492598, "status": "success", "end": 1728359492625, "result": { "merge": false } } ], "end": 1728359492627 } ], "infos": [ { 
"message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:dea183a0-abf2-422b-b7fd-4d0ed1d2ac12" }, "message": "Snapshot data has been deleted" }, { "data": { "vdiRef": "OpaqueRef:e1c325ac-e8c9-4a75-83d0-a0ee50289665" }, "message": "Snapshot data has been deleted" } ], "end": 1728359492628 }, { "data": { "type": "VM", "id": "fc1c7067-69f3-8949-ab68-780024a49a75", "name_label": "ISH" }, "id": "1728359492631", "message": "backup VM", "start": 1728359492631, "status": "success", "tasks": [ { "id": "1728359492636", "message": "clean-vm", "start": 1728359492636, "status": "success", "end": 1728359492649, "result": { "merge": false } }, { "id": "1728359492792", "message": "snapshot", "start": 1728359492792, "status": "success", "end": 1728359493650, "result": "8c4a61e7-9f4c-a58b-f1d7-e9edb86e73bb" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": true, "type": "remote" }, "id": "1728359493650:0", "message": "export", "start": 1728359493650, "status": "success", "tasks": [ { "id": "1728359494085", "message": "transfer", "start": 1728359494085, "status": "success", "end": 1728360044219, "result": { "size": 49962294784 } }, { "id": "1728360047118", "message": "health check", "start": 1728360047118, "status": "success", "tasks": [ { "id": "1728360047256", "message": "transfer", "start": 1728360047256, "status": "success", "end": 1728360544642, "result": { "size": 49962294272, "id": "3d7e38b1-2366-e657-884e-67f33bee9b56" } }, { "id": "1728360544642:0", "message": "vmstart", "start": 1728360544642, "status": "success", "end": 1728360567171 } ], "end": 1728360568598 }, { "id": "1728360568628", "message": "clean-vm", "start": 1728360568628, "status": "success", "end": 1728360568663, "result": { "merge": false } } ], "end": 1728360568667 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:18e03c56-b537-490c-8c1d-0f87fef61940" }, "message": "Snapshot data has been deleted" } ], "end": 1728360568667 }, { 
"data": { "type": "VM", "id": "07c32e8d-ea85-ffaa-3509-2dabff58e4af", "name_label": "VPS1" }, "id": "1728360568671", "message": "backup VM", "start": 1728360568671, "status": "success", "tasks": [ { "id": "1728360568677", "message": "clean-vm", "start": 1728360568677, "status": "success", "end": 1728360568702, "result": { "merge": false } }, { "id": "1728360568803", "message": "snapshot", "start": 1728360568803, "status": "success", "end": 1728360569642, "result": "eac56d7c-fa78-f6ae-41c3-d316fbc597a0" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": true, "type": "remote" }, "id": "1728360569643", "message": "export", "start": 1728360569643, "status": "success", "tasks": [ { "id": "1728360570055", "message": "transfer", "start": 1728360570055, "status": "success", "end": 1728360787031, "result": { "size": 15826942976 } }, { "id": "1728360788525", "message": "health check", "start": 1728360788525, "status": "success", "tasks": [ { "id": "1728360788592", "message": "transfer", "start": 1728360788592, "status": "success", "end": 1728360987674, "result": { "size": 15826942464, "id": "52962bc5-2ed4-c397-9131-8894f45523bd" } }, { "id": "1728360987675", "message": "vmstart", "start": 1728360987675, "status": "success", "end": 1728361007502 } ], "end": 1728361008575 }, { "id": "1728361008588", "message": "clean-vm", "start": 1728361008588, "status": "success", "end": 1728361008622, "result": { "merge": true } } ], "end": 1728361008636 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:00c688b0-555d-42bb-93e9-db8b09fea339" }, "message": "Snapshot data has been deleted" } ], "end": 1728361008636 }, { "data": { "type": "VM", "id": "66949c31-5544-88fe-5e49-f0e0c7946347", "name_label": "DNS" }, "id": "1728361008638", "message": "backup VM", "start": 1728361008638, "status": "success", "tasks": [ { "id": "1728361008643", "message": "clean-vm", "start": 1728361008643, "status": "success", "end": 1728361008669, 
"result": { "merge": false } }, { "id": "1728361008780", "message": "snapshot", "start": 1728361008780, "status": "success", "end": 1728361009626, "result": "20408aa8-5979-23a5-e8db-c32eb5ec52a8" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": true, "type": "remote" }, "id": "1728361009626:0", "message": "export", "start": 1728361009626, "status": "success", "tasks": [ { "id": "1728361010081", "message": "transfer", "start": 1728361010081, "status": "success", "end": 1728361179509, "result": { "size": 14742417920 } }, { "id": "1728361181070:0", "message": "health check", "start": 1728361181070, "status": "success", "tasks": [ { "id": "1728361181124", "message": "transfer", "start": 1728361181124, "status": "success", "end": 1728361321066, "result": { "size": 14742417408, "id": "cd3ce194-28ca-0c3f-8a78-6873ebac0da2" } }, { "id": "1728361321067", "message": "vmstart", "start": 1728361321067, "status": "success", "end": 1728361368268 } ], "end": 1728361369283 }, { "id": "1728361369296", "message": "clean-vm", "start": 1728361369296, "status": "success", "end": 1728361369327, "result": { "merge": true } } ], "end": 1728361369340 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:b6f2b416-83b1-4f50-84f1-662f03aa626d" }, "message": "Snapshot data has been deleted" } ], "end": 1728361369340 } ], "end": 1728361369340 } -

RE: CBT: the thread to centralize your feedback

I'm also facing "can't create a stream from a metadata VDI, fall back to a base" on delta backups after upgrading XO.
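Job logs of this shape (nested `tasks`, each with a `status` and, on failure, a `result.message`) are hard to read as raw JSON, so a short script can list only the failing tasks. This is a minimal sketch and not part of Xen Orchestra: the function name `failed_tasks` is hypothetical, and the inline `sample` is a hand-trimmed excerpt of the failed job log from this post.

```python
def failed_tasks(task, path=()):
    """Yield (task path, error message) for every task whose status is not "success"."""
    # Prefer the VM name when the task carries one, else the task message.
    label = task.get("data", {}).get("name_label") or task.get("message", "?")
    here = path + (label,)
    if task.get("status") != "success":
        result = task.get("result")
        # "result" is a dict for errors but a plain string for e.g. snapshots.
        message = result.get("message", "").strip() if isinstance(result, dict) else ""
        yield " > ".join(here), message
    for child in task.get("tasks", []):
        yield from failed_tasks(child, here)


# Hand-trimmed excerpt of the failed job log (structure only, one VM).
sample = {
    "message": "backup",
    "status": "failure",
    "tasks": [
        {
            "data": {"type": "VM", "name_label": "MX"},
            "message": "backup VM",
            "status": "failure",
            "tasks": [
                {
                    "message": "snapshot",
                    "status": "success",
                    "result": "21700914-d8d4-86a9-0035-409406b214e4",
                }
            ],
            "result": {
                "message": "can't create a stream from a metadata VDI, fall back to a base ",
                "name": "Error",
            },
        }
    ],
}

for where, why in failed_tasks(sample):
    print(f"{where}: {why or '(no message)'}")
```

For a real log, the dictionary can be loaded with `json.load` from the file downloaded from the backup job's log view and passed to `failed_tasks` in the same way.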

Below the logs from failed jobs:{ "data": { "mode": "delta", "reportWhen": "failure" }, "id": "1728295200005", "jobId": "af0c1c01-8101-4dc6-806f-a4d3cf381cf7", "jobName": "ISO", "message": "backup", "scheduleId": "340b6832-4e2b-485b-87b5-5d21b30b4ddf", "start": 1728295200005, "status": "failure", "infos": [ { "data": { "vms": [ "81dbf29f-adfa-5bc4-9bc1-021f8eb46b9e", "fc1c7067-69f3-8949-ab68-780024a49a75", "07c32e8d-ea85-ffaa-3509-2dabff58e4af", "66949c31-5544-88fe-5e49-f0e0c7946347" ] }, "message": "vms" } ], "tasks": [ { "data": { "type": "VM", "id": "81dbf29f-adfa-5bc4-9bc1-021f8eb46b9e", "name_label": "MX" }, "id": "1728295200766", "message": "backup VM", "start": 1728295200766, "status": "failure", "tasks": [ { "id": "1728295200804", "message": "clean-vm", "start": 1728295200804, "status": "success", "end": 1728295200839, "result": { "merge": false } }, { "id": "1728295200981", "message": "snapshot", "start": 1728295200981, "status": "success", "end": 1728295202424, "result": "21700914-d8d4-86a9-0035-409406b214e4" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": false, "type": "remote" }, "id": "1728295202425", "message": "export", "start": 1728295202425, "status": "success", "tasks": [ { "id": "1728295214099", "message": "clean-vm", "start": 1728295214099, "status": "success", "end": 1728295214130, "result": { "merge": false } } ], "end": 1728295214135 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:8eeda3b4-5eb9-4bf5-9d09-c76a3d729054" }, "message": "Snapshot data has been deleted" }, { "data": { "vdiRef": "OpaqueRef:5431ba31-88d3-4421-8f35-90c943f3b6eb" }, "message": "Snapshot data has been deleted" } ], "end": 1728295214135, "result": { "message": "can't create a stream from a metadata VDI, fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent 
(file:///etc/xen-orchestra/@xen-orchestra/xapi/vdi.mjs:251:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:56:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///etc/xen-orchestra/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:25:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } }, { "data": { "type": "VM", "id": "fc1c7067-69f3-8949-ab68-780024a49a75", "name_label": "ISH" }, "id": "1728295214142", "message": "backup VM", "start": 1728295214142, "status": "failure", "tasks": [ { "id": "1728295214147", "message": "clean-vm", "start": 1728295214147, "status": "success", "end": 1728295214171, "result": { "merge": false } }, { "id": "1728295214288", "message": "snapshot", "start": 1728295214288, "status": "success", "end": 1728295215179, "result": "b1e43127-c471-cf4b-bb96-d168b3d6cd18" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": false, "type": "remote" }, "id": "1728295215179:0", "message": "export", "start": 1728295215179, "status": "success", "tasks": [ { "id": "1728295215992", "message": "clean-vm", "start": 1728295215992, "status": "success", "end": 1728295216011, "result": { "merge": false } } ], "end": 1728295216012 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:e17c3e37-5e9d-4296-8108-642e7ec2c5e2" }, "message": "Snapshot data has been deleted" } ], "end": 1728295216012, "result": { "message": "can't create a stream from a metadata VDI, 
fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent (file:///etc/xen-orchestra/@xen-orchestra/xapi/vdi.mjs:251:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:56:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///etc/xen-orchestra/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:25:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } }, { "data": { "type": "VM", "id": "07c32e8d-ea85-ffaa-3509-2dabff58e4af", "name_label": "VPS1" }, "id": "1728295216015", "message": "backup VM", "start": 1728295216015, "status": "failure", "tasks": [ { "id": "1728295216019", "message": "clean-vm", "start": 1728295216019, "status": "success", "end": 1728295216054, "result": { "merge": false } }, { "id": "1728295216173", "message": "snapshot", "start": 1728295216173, "status": "success", "end": 1728295216808, "result": "3f1b009a-534b-cb40-b1d3-b8fad305bb73" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": false, "type": "remote" }, "id": "1728295216808:0", "message": "export", "start": 1728295216808, "status": "success", "tasks": [ { "id": "1728295217406", "message": "clean-vm", "start": 1728295217406, "status": "success", "end": 1728295217432, "result": { "merge": false } } ], "end": 1728295217434 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": 
"OpaqueRef:56a92275-7bbe-47d0-970e-f32c136fe870" }, "message": "Snapshot data has been deleted" } ], "end": 1728295217434, "result": { "message": "can't create a stream from a metadata VDI, fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent (file:///etc/xen-orchestra/@xen-orchestra/xapi/vdi.mjs:251:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:56:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///etc/xen-orchestra/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:25:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } }, { "data": { "type": "VM", "id": "66949c31-5544-88fe-5e49-f0e0c7946347", "name_label": "DNS" }, "id": "1728295217436", "message": "backup VM", "start": 1728295217436, "status": "failure", "tasks": [ { "id": "1728295217440", "message": "clean-vm", "start": 1728295217440, "status": "success", "end": 1728295217462, "result": { "merge": false } }, { "id": "1728295217599", "message": "snapshot", "start": 1728295217599, "status": "success", "end": 1728295218238, "result": "950ff33c-b7e9-b202-c2b3-53b2a8e8c416" }, { "data": { "id": "22d6a348-ae2b-4783-a77d-a456e508ba64", "isFull": false, "type": "remote" }, "id": "1728295218238:0", "message": "export", "start": 1728295218238, "status": "success", "tasks": [ { "id": "1728295218833", "message": "clean-vm", "start": 1728295218833, "status": "success", "end": 
1728295218862, "result": { "merge": false } } ], "end": 1728295218863 } ], "infos": [ { "message": "will delete snapshot data" }, { "data": { "vdiRef": "OpaqueRef:7907be71-6b11-4240-a2a7-6043aea4ee05" }, "message": "Snapshot data has been deleted" } ], "end": 1728295218864, "result": { "message": "can't create a stream from a metadata VDI, fall back to a base ", "name": "Error", "stack": "Error: can't create a stream from a metadata VDI, fall back to a base \n at Xapi.exportContent (file:///etc/xen-orchestra/@xen-orchestra/xapi/vdi.mjs:251:15)\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:56:32\n at async Promise.all (index 0)\n at async cancelableMap (file:///etc/xen-orchestra/@xen-orchestra/backups/_cancelableMap.mjs:11:12)\n at async exportIncrementalVm (file:///etc/xen-orchestra/@xen-orchestra/backups/_incrementalVm.mjs:25:3)\n at async IncrementalXapiVmBackupRunner._copy (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/IncrementalXapi.mjs:44:25)\n at async IncrementalXapiVmBackupRunner.run (file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/_vmRunners/_AbstractXapi.mjs:379:9)\n at async file:///etc/xen-orchestra/@xen-orchestra/backups/_runners/VmsXapi.mjs:166:38" } } ], "end": 1728295218864 } -

RE: PCIe NIC passing through - dropped packets, no communication

No, the logs from the hypervisor as well as from dom0 didn't lead me to this.

The guest OS logged errors while trying to use those NICs. Searching for them on the Internet led me to a RHEL support case where adding this parameter to the kernel was a confirmed solution. There was also a thread on the XCP-NG forum which mentioned this option for a passthrough device, but it was related to a GPU, if I recall correctly.

Anyway, this helped me with the Intel NICs.

Still no luck with the Marvell FastLinQ NICs, but there the problem is more related to the driver included in the guest OS kernel. I'm still trying to solve it.

I haven't tested this with Mellanox cards yet.

I'm trying to squeeze my lab environment as much as I can to save on electricity... the prices have gone crazy nowadays.

I'm trying to use StarWind VSAN (free license for 3 nodes and HA storage), and I needed to pass those NICs, together with a SATA controller and an NVMe SSD, through to the VM. Server nodes are so powerful today that even a single-socket server can handle quite a lot.

I did some tests today with two LUNs presented over multipath iSCSI, and this may work quite nicely.

One instance of StarWind VSAN on one XCP-NG node, one on the second, and communication over dedicated NICs... HA and replication on interfaces connected directly between the StarWind nodes, without a switch.

I don't need much in terms of computing power and storage space... It might be a perfect solution for two-node cluster setups, which may fit many small customers or labs like mine.

Of course this requires more testing... but my lab is the perfect place to experiment.

-

RE: PCIe NIC passing through - dropped packets, no communication

Problem solved by adding the kernel parameter with the command:

/opt/xensource/libexec/xen-cmdline --set-dom0 "pci=realloc=on"

-

RE: PCIe NIC passing through - dropped packets, no communication

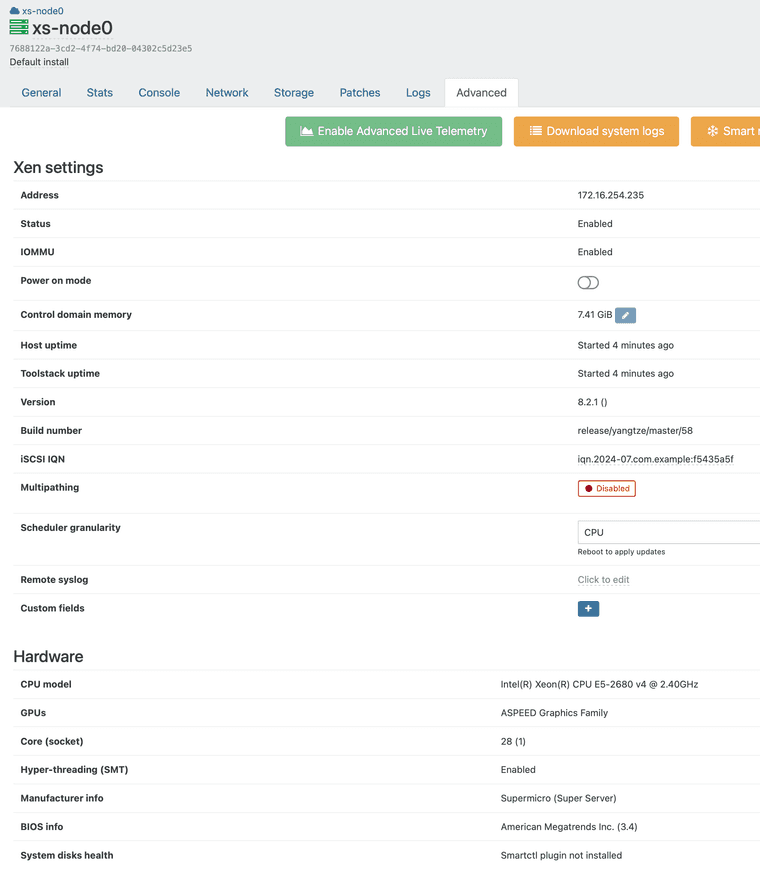

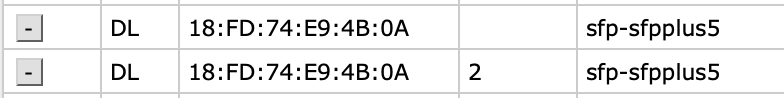

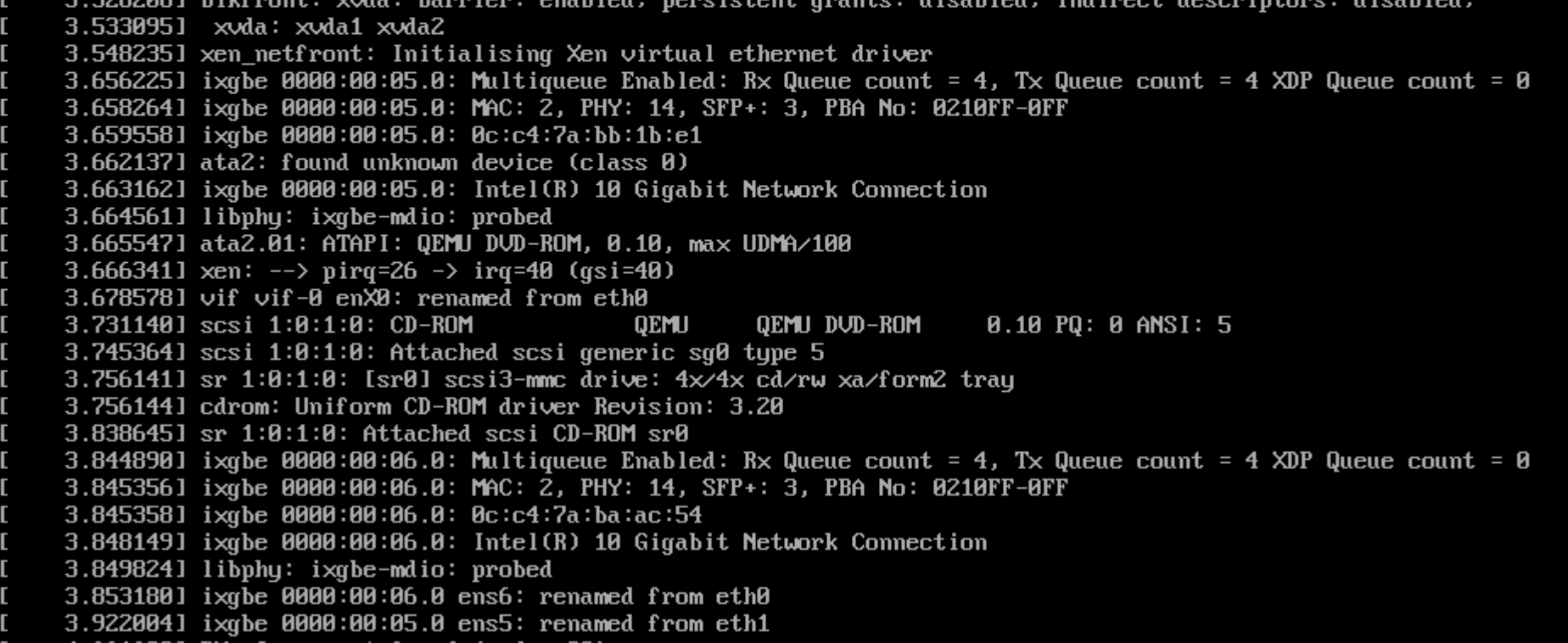

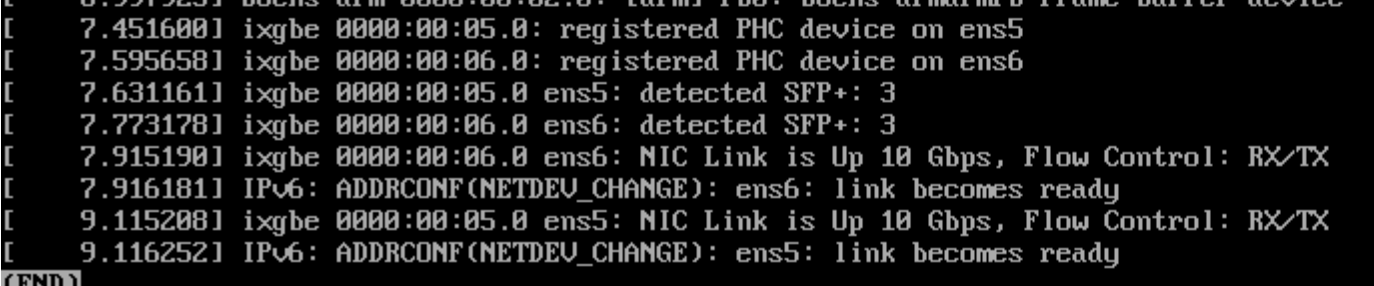

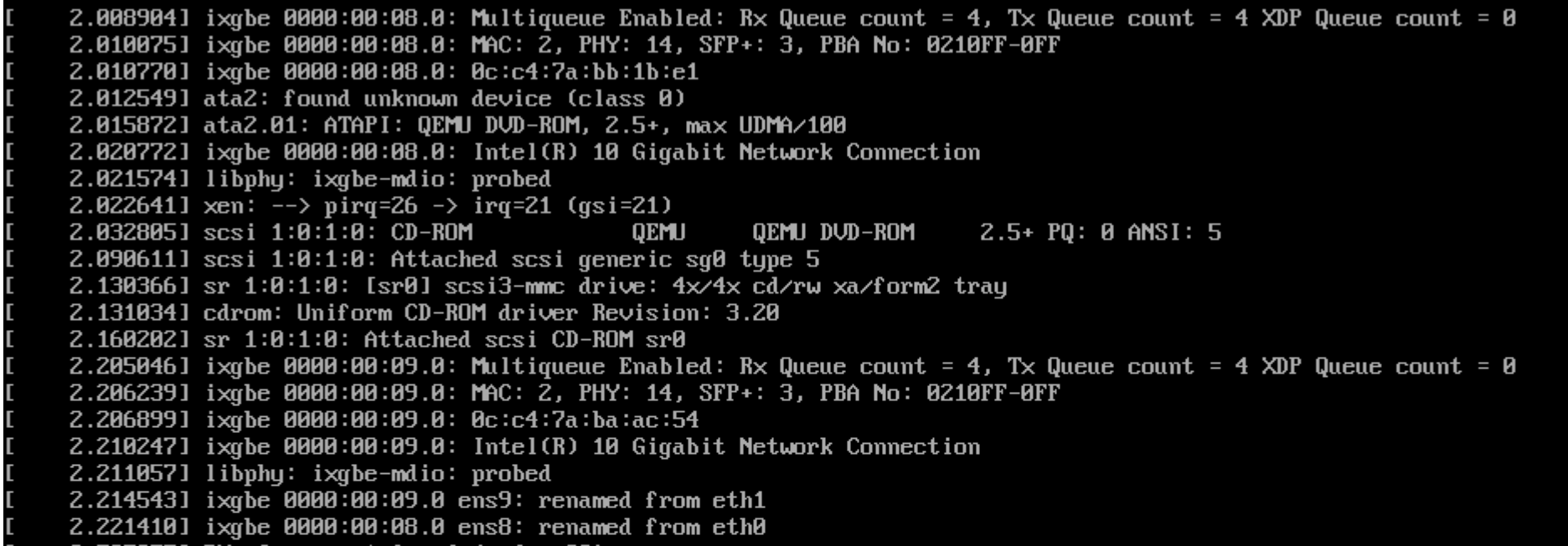

I've made a clean install of XCP-ng 8.2.1 and applied all the patches available to date, to collect all the details again.

After installation:

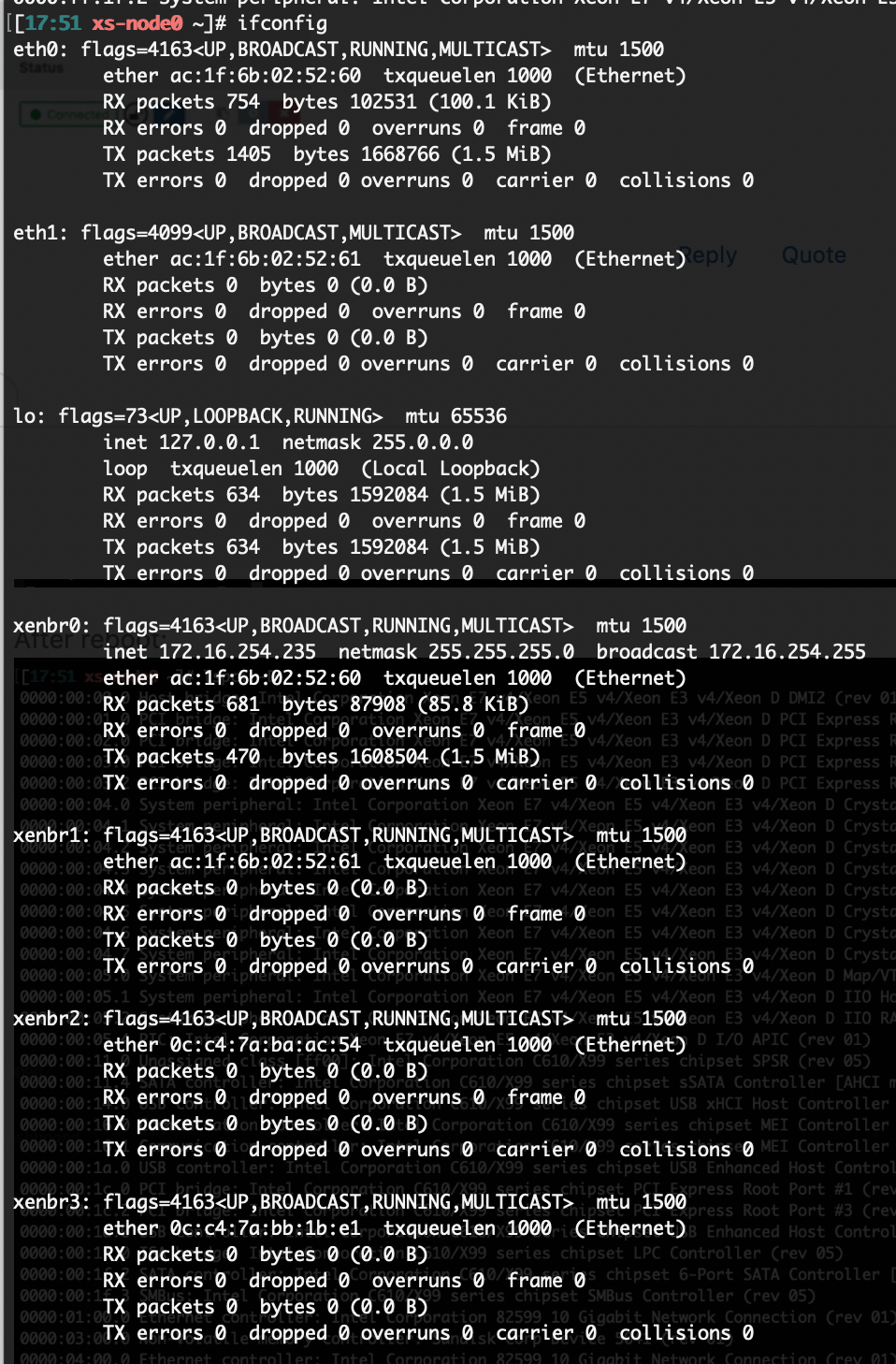

After reboot:

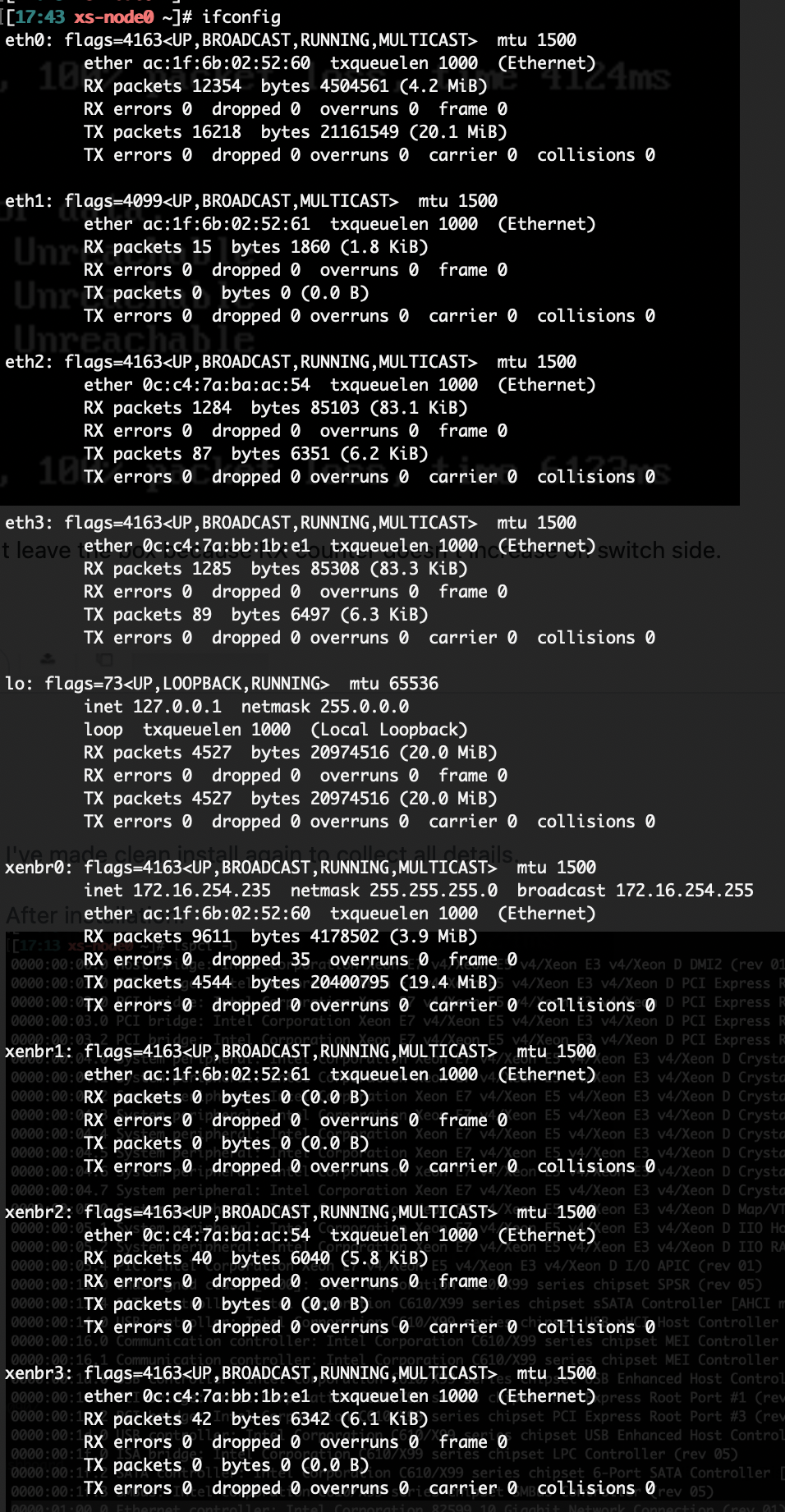

The NICs are hidden from dom0: the interfaces eth2 and eth3 are not visible, but xenbr2 and xenbr3 are still there and are operational.

This is a bit strange to me, as I thought those interfaces should not be present, and that both xenbr interfaces should at least be down... without an operational connection.

I see the MAC addresses for those interfaces on the switch side.

Anyway, both interfaces are available for passthrough.

I bonded eth1 and eth2 before setting up the VM that is the target for NIC passthrough.

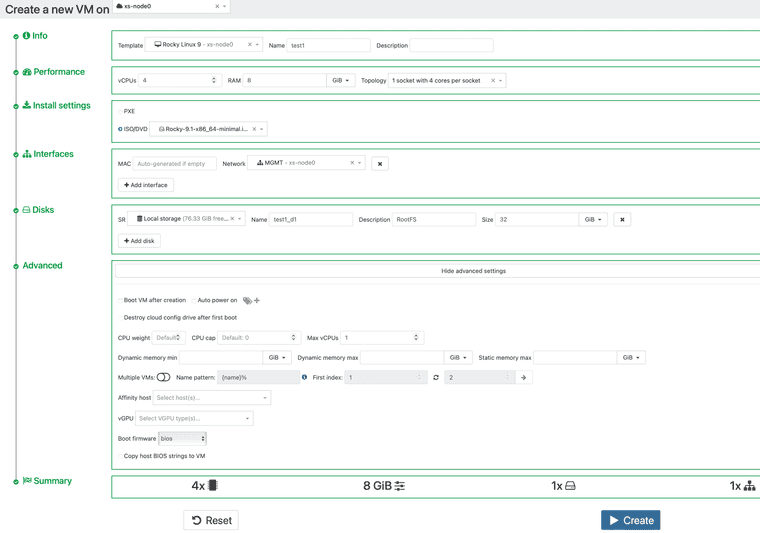

VM installation:

Before I start the VM and proceed with the installation, I add those cards.

I can see in XO that the NICs are assigned to the VM.

During installation the cards are available:

But what is worth mentioning is that on the switch side, apart from the MAC of the card, I'm also starting to see the MACs of the xenbr2 and xenbr3 interfaces.

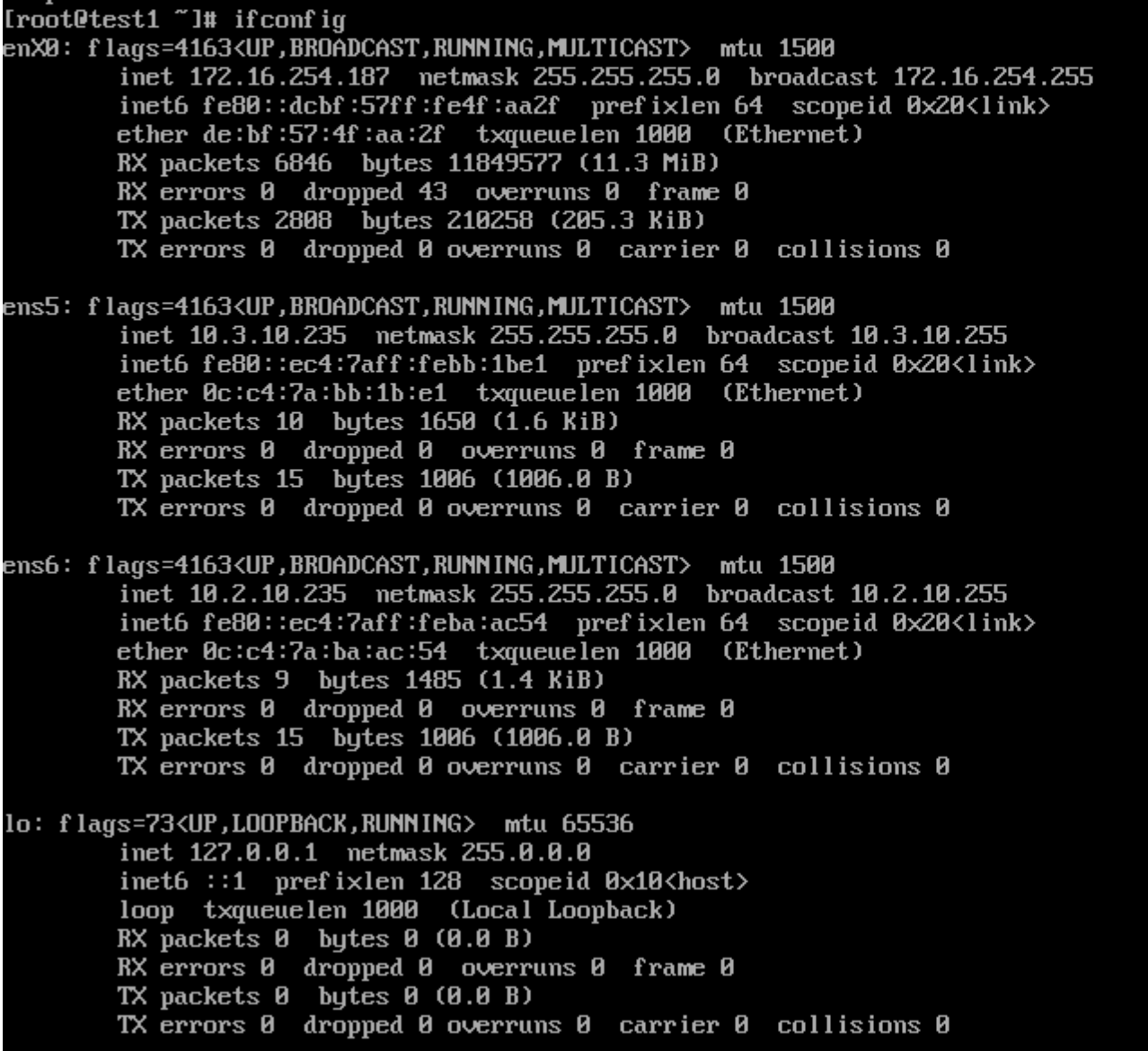

After starting VM:

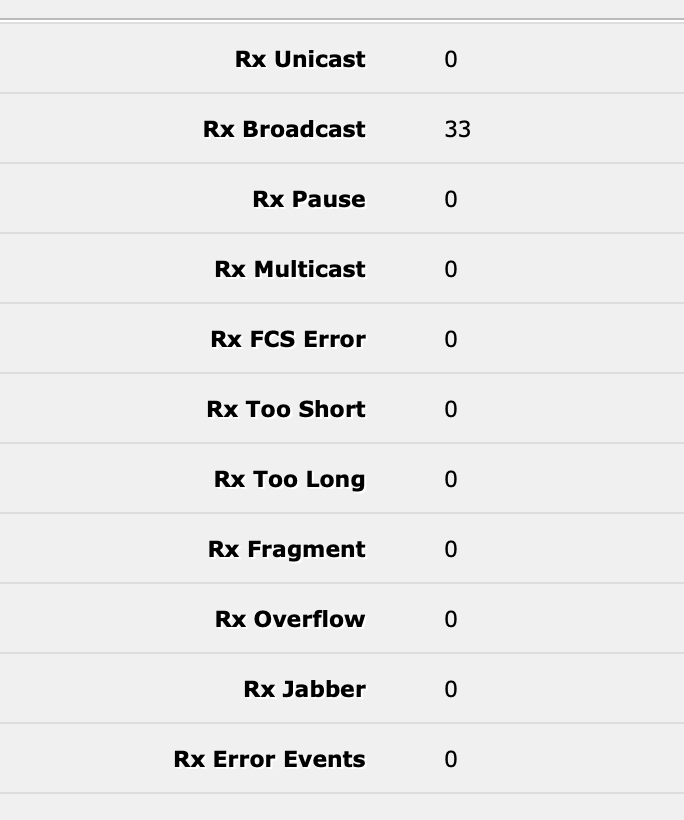

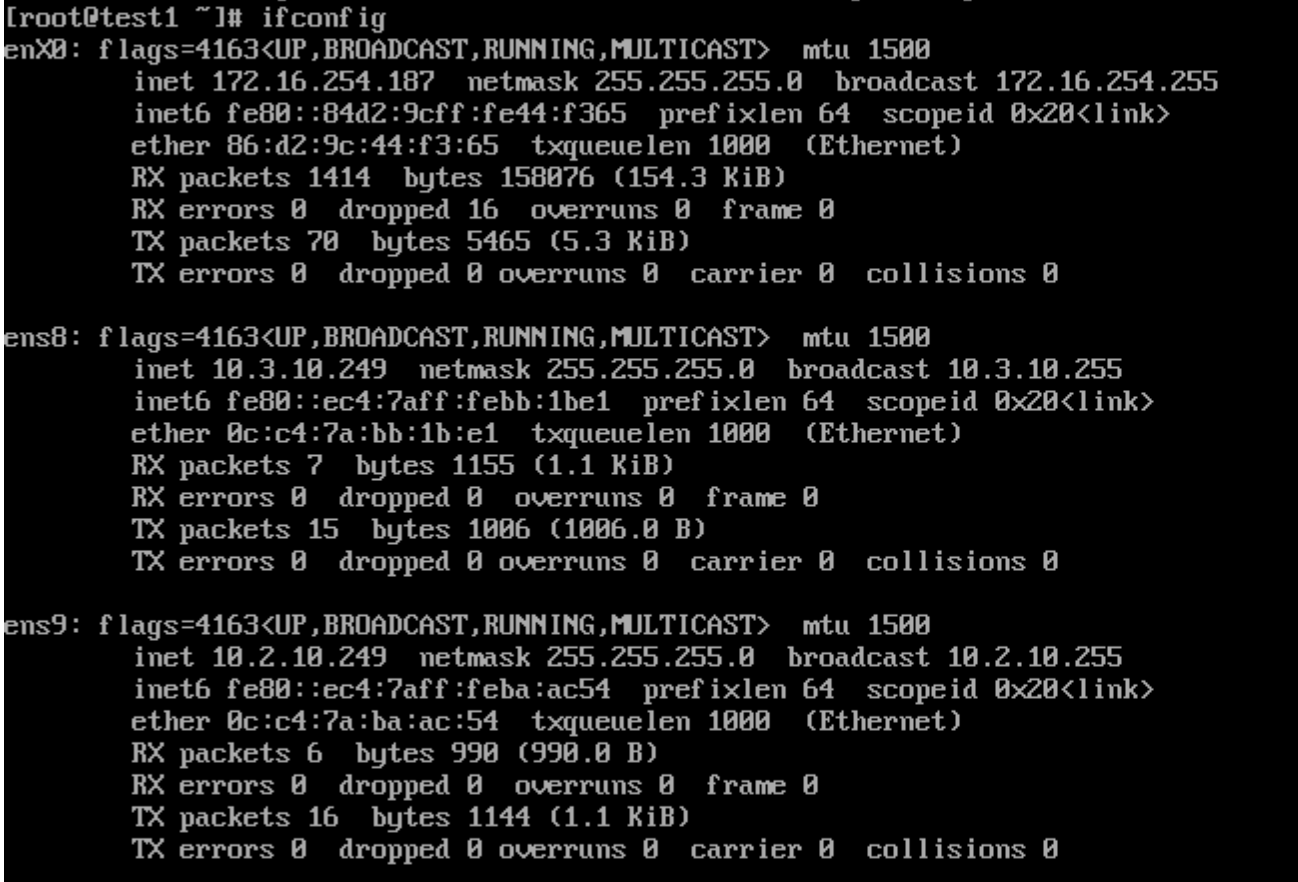

When I start pinging a host in the network connected to the passthrough NIC, on the switch side I can see only:

No packets are received at the VM level; the counters don't increase.

Is there something I've missed configuring at the hypervisor level?

-

RE: PCIe NIC passing through - dropped packets, no communication

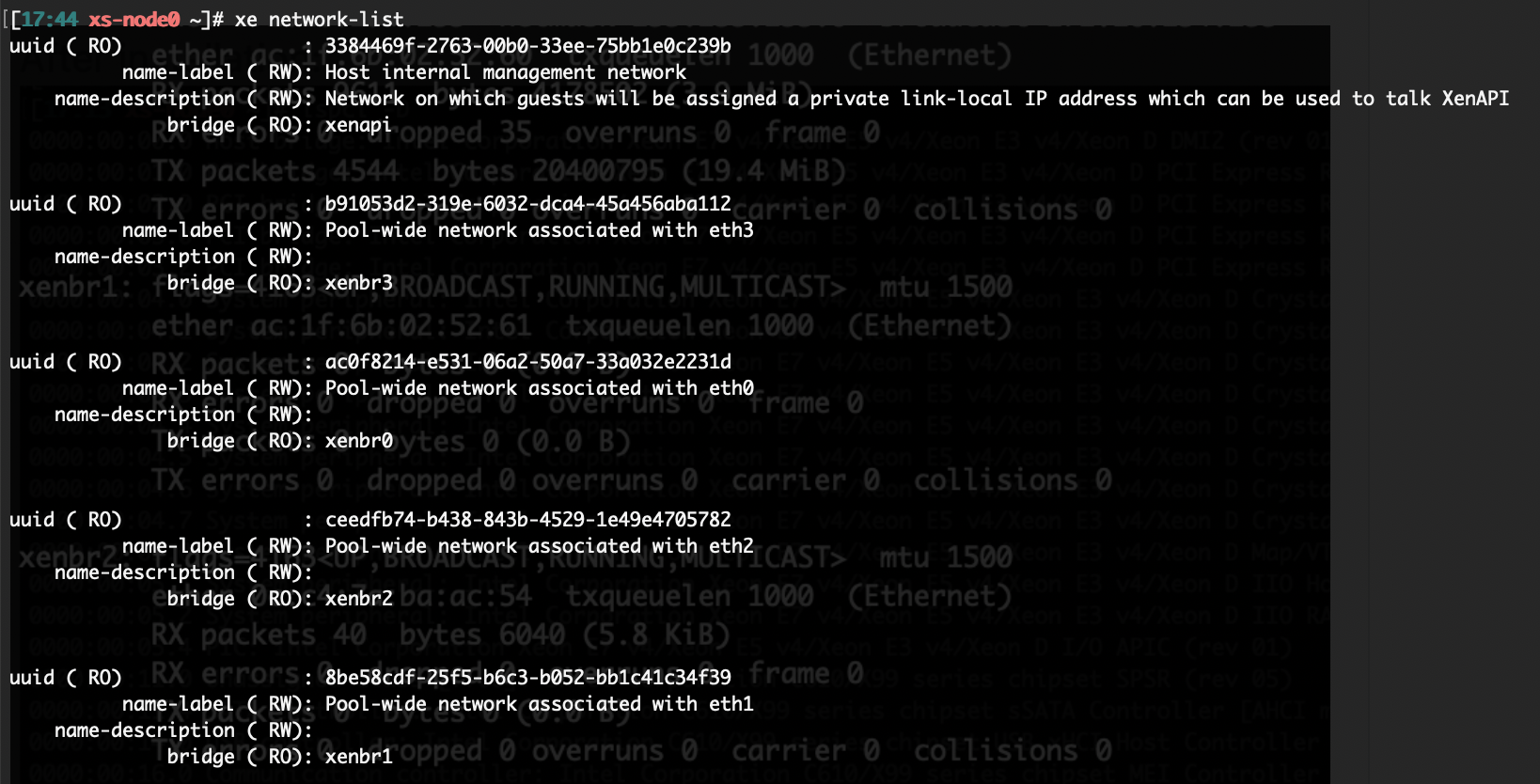

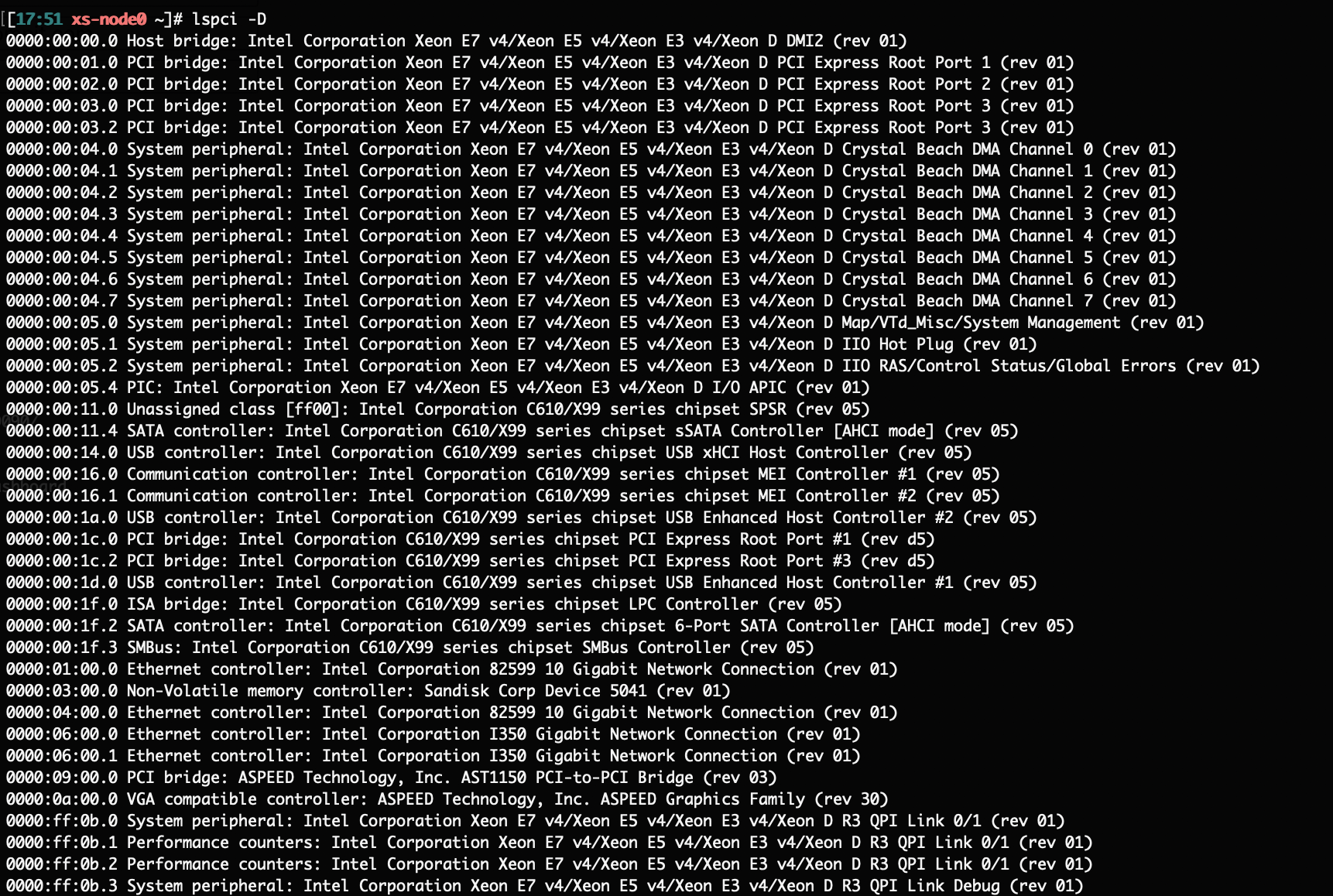

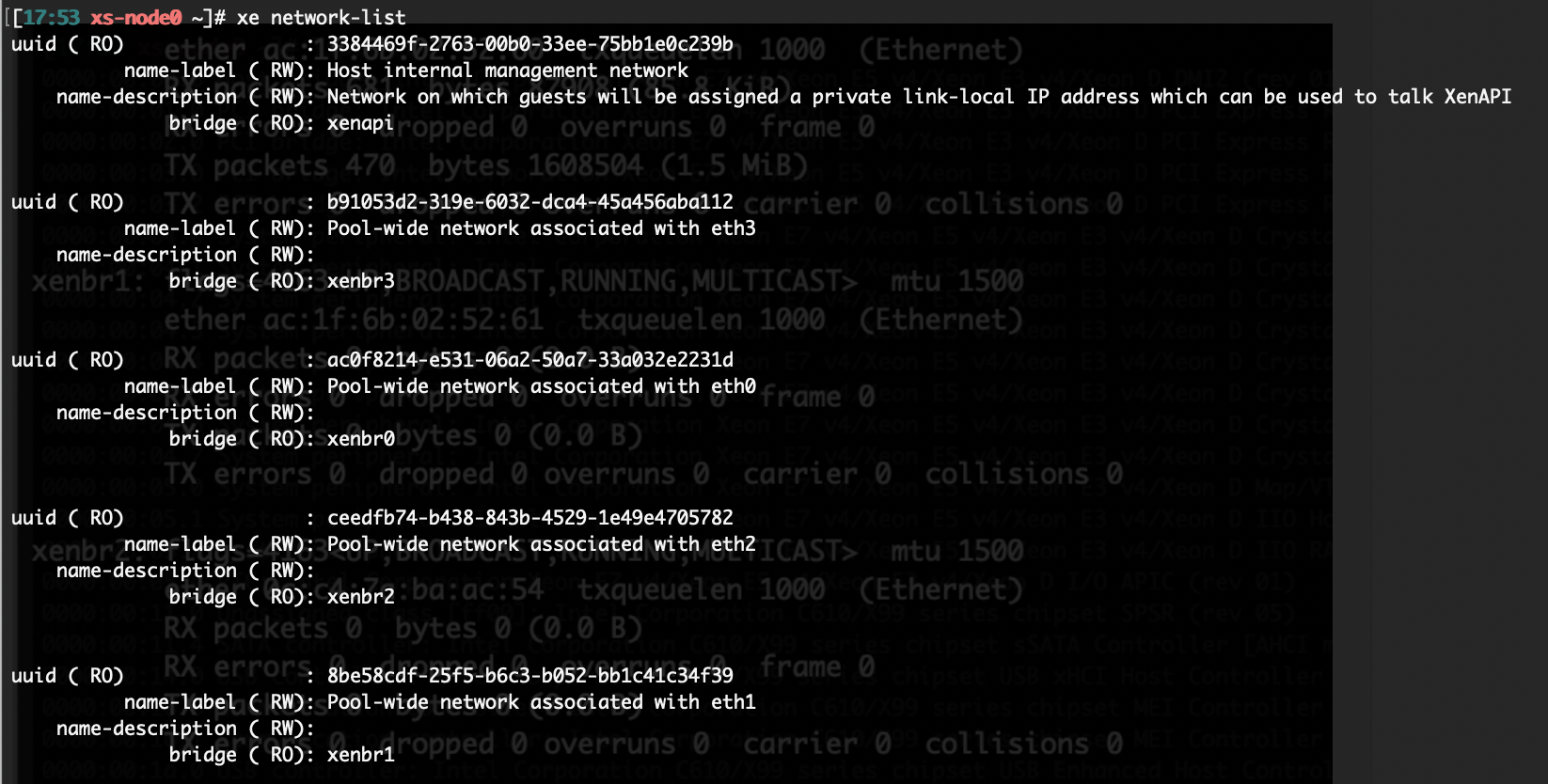

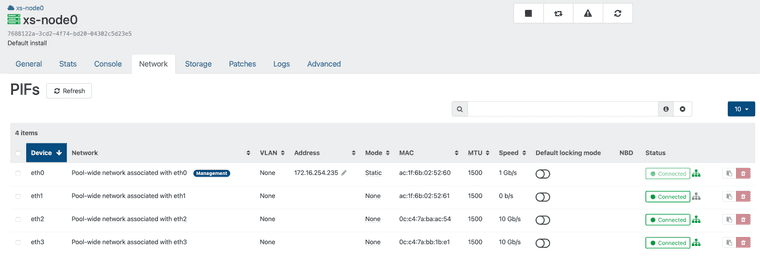

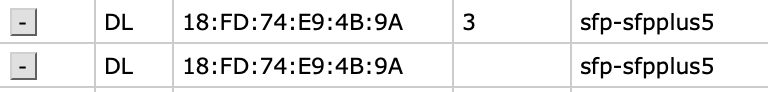

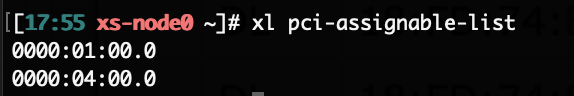

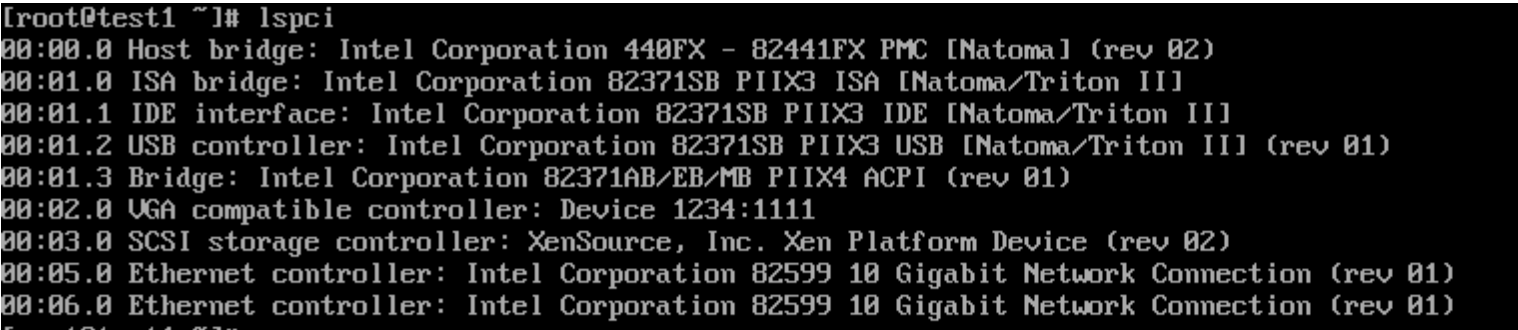

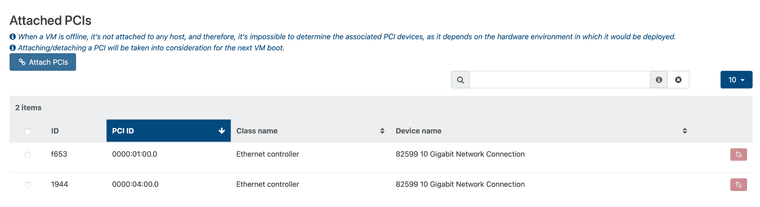

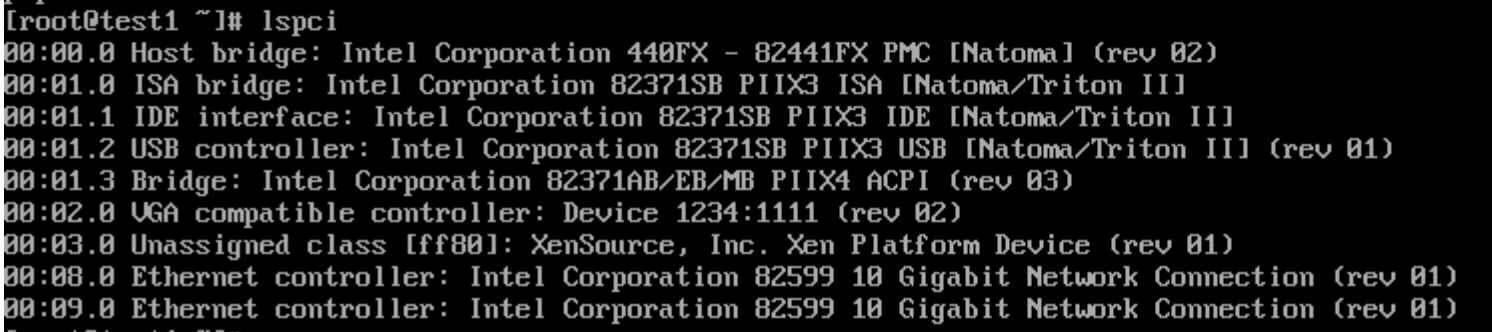

From the beginning:

- I have bare metal system with 2 NICs on motherboard and 2 NICs installed in PCIe slots

- I have installed XCP-NG on top of this hardware and I time to have 2 NICs from motherboard in use for hypervisor and two from PCIe slots passed through to VM.

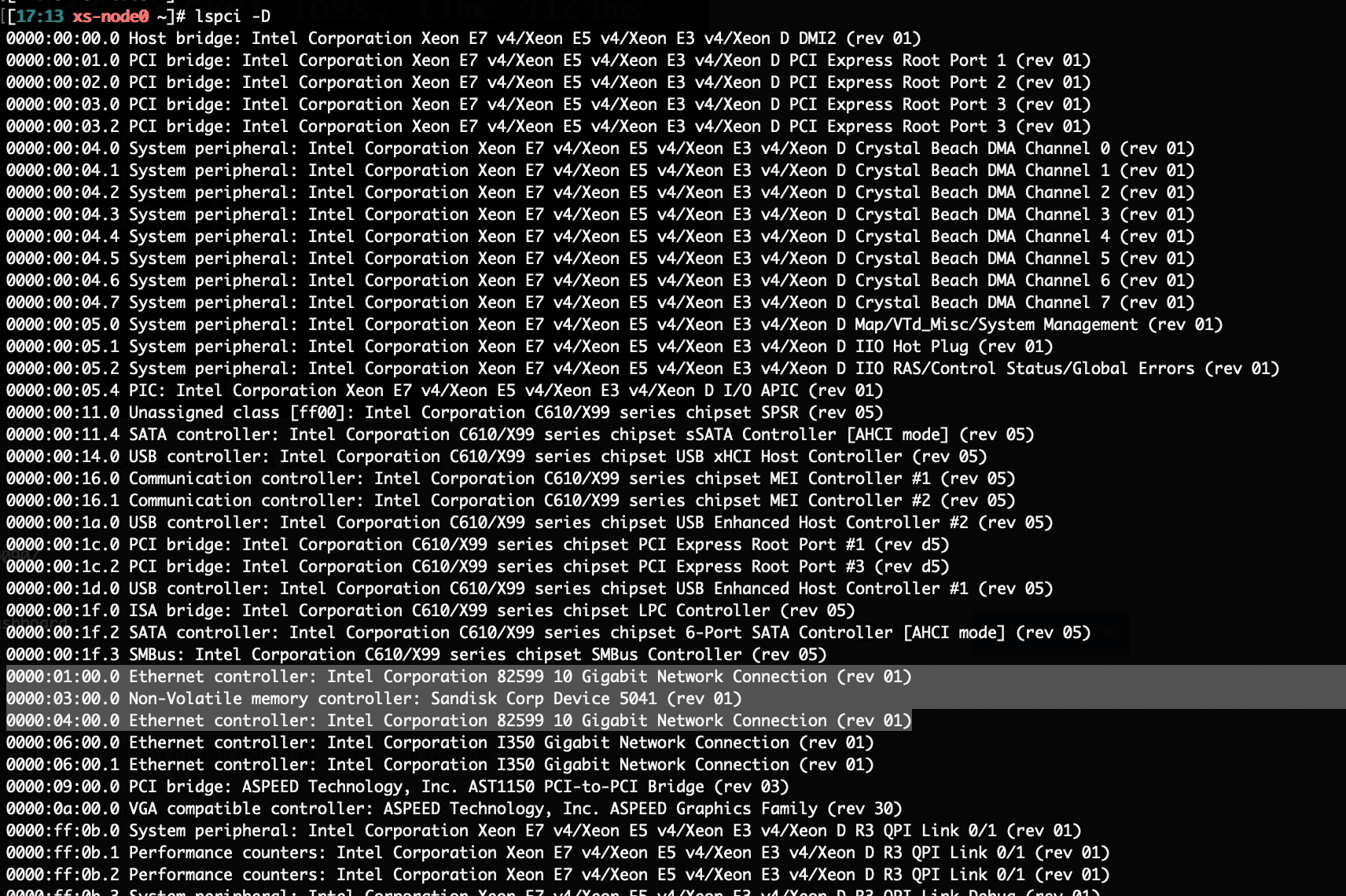

I'm using lspci -D to find devices which I need to passthrough at XCP-NG console

0000:01:00.0 Ethernet controller: Intel Corporation 82599 10 Gigabit Network Connection (rev 01)

0000:03:00.0 Non-Volatile memory controller: Sandisk Corp Device 5041 (rev 01)

0000:04:00.0 Ethernet controller: Intel Corporation 82599 10 Gigabit Network Connection (rev 01)

0000:06:00.0 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

0000:06:00.1 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

and with the command:

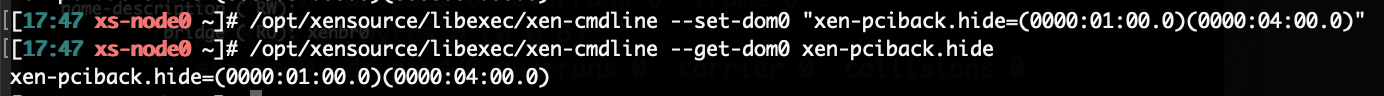

/opt/xensource/libexec/xen-cmdline --set-dom0 "xen-pciback.hide=(0000:01:00.0)(0000:04:00.0)"

I prepare them for passthrough.

After a reboot I check whether the devices are ready:

[00:12 xs-node0 ~]# xl pci-assignable-list

0000:01:00.0

0000:04:00.0

So from this side all looks OK.

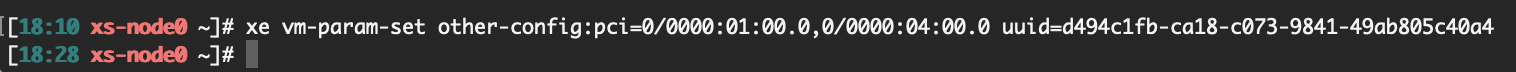

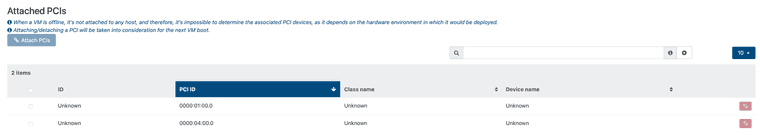

Now I'm adding those devices to the VM with the command:

xe vm-param-set other-config:pci=0/0000:01:00.0,0/0000:04:00.0 uuid=xxxx
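Both the `xen-pciback.hide` value and the `other-config:pci` value above are built from the same list of PCI addresses, so generating them together helps avoid typos between the two steps. The following is a minimal sketch: the helper names `pciback_hide` and `vm_pci_param` are hypothetical, and the code only builds the argument strings shown in this post; it does not call `xen-cmdline` or `xe`.

```python
import re

# Full PCI address as printed by `lspci -D`: domain:bus:device.function
BDF = re.compile(r"[0-9a-f]{4}:[0-9a-f]{2}:[0-9a-f]{2}\.[0-7]")


def _checked(addresses):
    """Return the addresses as a list, rejecting anything that is not a full BDF."""
    addresses = list(addresses)
    for address in addresses:
        if not BDF.fullmatch(address):
            raise ValueError(f"not a full PCI address: {address!r}")
    return addresses


def pciback_hide(addresses):
    """Build the dom0 kernel argument that hides the devices from dom0."""
    return "xen-pciback.hide=" + "".join(f"({a})" for a in _checked(addresses))


def vm_pci_param(addresses):
    """Build the other-config:pci value that assigns the devices to a VM."""
    return "other-config:pci=" + ",".join(f"0/{a}" for a in _checked(addresses))


nics = ["0000:01:00.0", "0000:04:00.0"]
print(pciback_hide(nics))
print(vm_pci_param(nics))
```

The two printed strings are the arguments passed to `xen-cmdline --set-dom0` and `xe vm-param-set` in the commands above.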

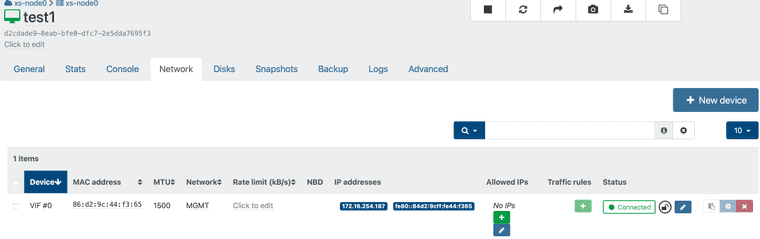

I can see in XO that both cards are configured for the VM

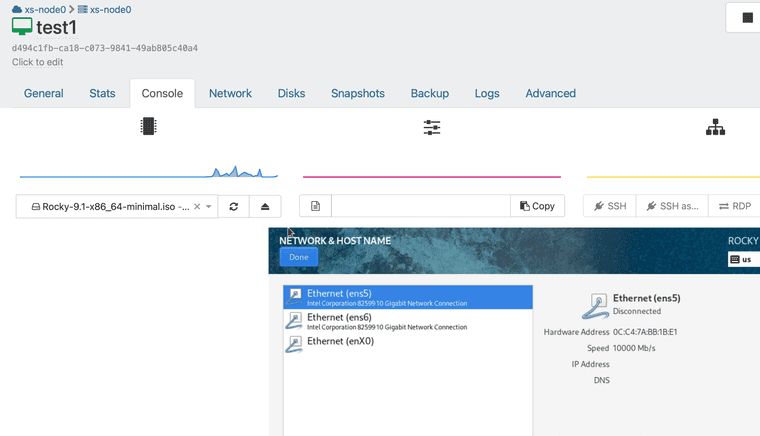

and when I start the VM I can see the cards:

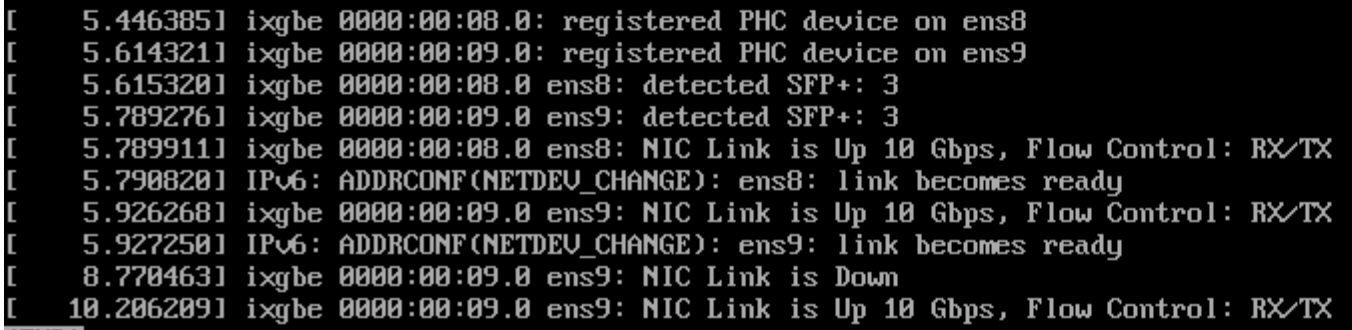

Both links are UP and at this point all looks OK.

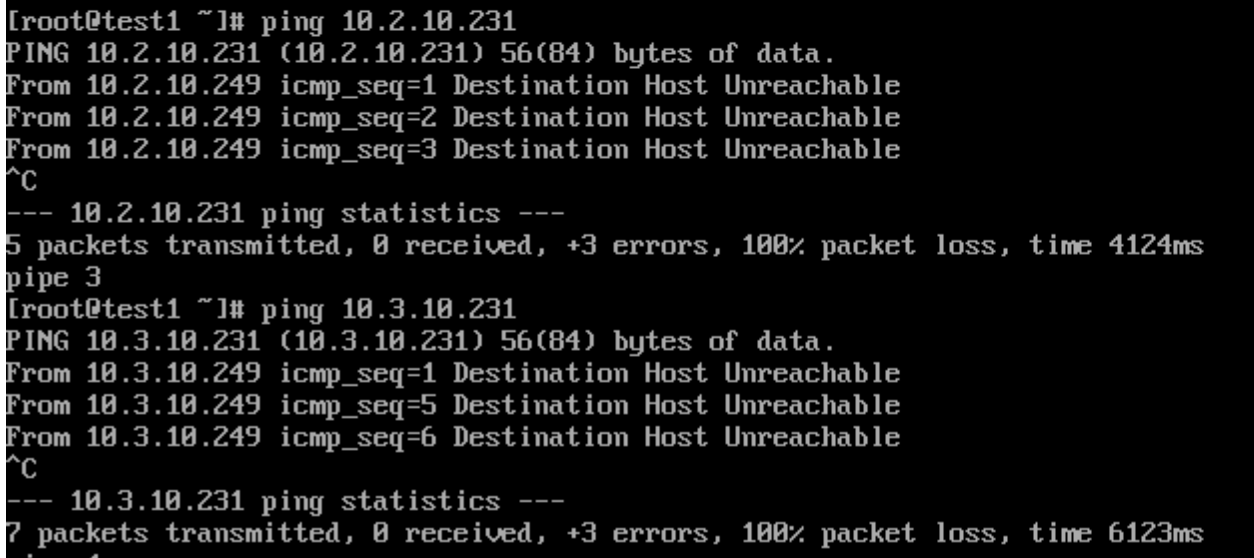

Unfortunately, when I try to ping a host on the same network the cards are connected to, I get:

When I ping, the TX counter on the VM interface increases... but it seems the packets don't leave the box, because the RX counter doesn't increase on the switch side.
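To compare the guest's counters before and after a ping run, the per-interface statistics that Linux exposes under `/sys/class/net/<iface>/statistics/` can be read directly. This is a minimal sketch for a Linux guest; the function names and the `sysfs` parameter (there only so the code can be pointed at a test directory) are hypothetical, and the interface name in the note below is an assumption.

```python
from pathlib import Path


def read_counters(iface, names=("rx_packets", "tx_packets", "rx_dropped"),
                  sysfs="/sys/class/net"):
    """Read the selected packet counters of one interface from sysfs."""
    stats = Path(sysfs) / iface / "statistics"
    return {name: int((stats / name).read_text()) for name in names}


def counter_delta(before, after):
    """Return how much each counter grew between two readings."""
    return {name: after[name] - before[name] for name in before}
```

Taking one reading with `read_counters("eth1")` before the ping and one after, then passing both to `counter_delta`, shows whether `tx_packets` grows while `rx_packets` stays flat, which is the symptom described here.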

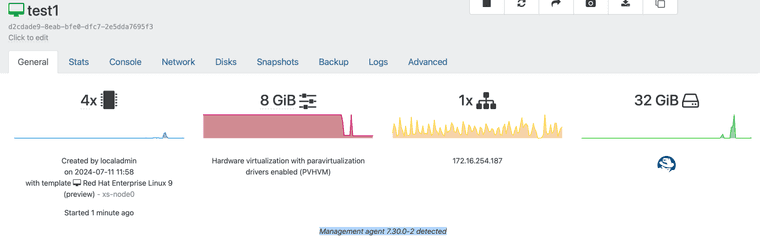

I can add that Management agent 7.30.0-2 has been installed on the VM.

The 3rd interface configured on this VM works OK.

-

PCIe NIC passing through - dropped packets, no communication

I'm looking for help with setting up PCIe NIC passthrough to a VM. I've tried several Linux distributions on clean XCP-NG 8.2 and 8.3 installs, with different 10GbE network cards (Intel/Emulex/Mellanox).

I've used Supermicro and Gigabyte server-class motherboards with Xeon and Ryzen CPUs.

The outcome is the same in each case. The device is passed to the VM cleanly and appears correctly, and apart from MSI and MSI-X interrupt-related errors displayed during boot, the device seems to be operational in the guest OS.

The main problem is that I can't communicate through those interfaces.

MAC addresses are visible on the switch, but all RX packets are dropped; at least this is what the interface counters in the guest OS report.

Additionally, despite the fact that I hid the NICs from dom0, the bridge interfaces related to those passthrough interfaces are still operational, and their MAC addresses are also visible on the switch side.

Can someone help me here?

Thanks in advance for sharing some of your wisdom.