XCP-ng 8.3 betas and RCs feedback 🚀

-

No UUID change if you upgraded; that's the beauty of it. It should be painless and transparent, without modifying the database for the most part.

-

@GravityMan You upgraded to the release candidate rather than the final release?

-

Just in case not everyone is aware: we published XCP-ng 8.3 officially on Monday.

Announcement: https://xcp-ng.org/blog/2024/10/07/xcp-ng-8-3/

Release notes: https://docs.xcp-ng.org/releases/release-8-3/

As you can read in the announcement, we really want to thank everyone who helped us with their feedback and other forms of contribution!

-

We also did a livestream yesterday; the replay is here: https://www.youtube.com/watch?v=YfyCxP0I6y8

-

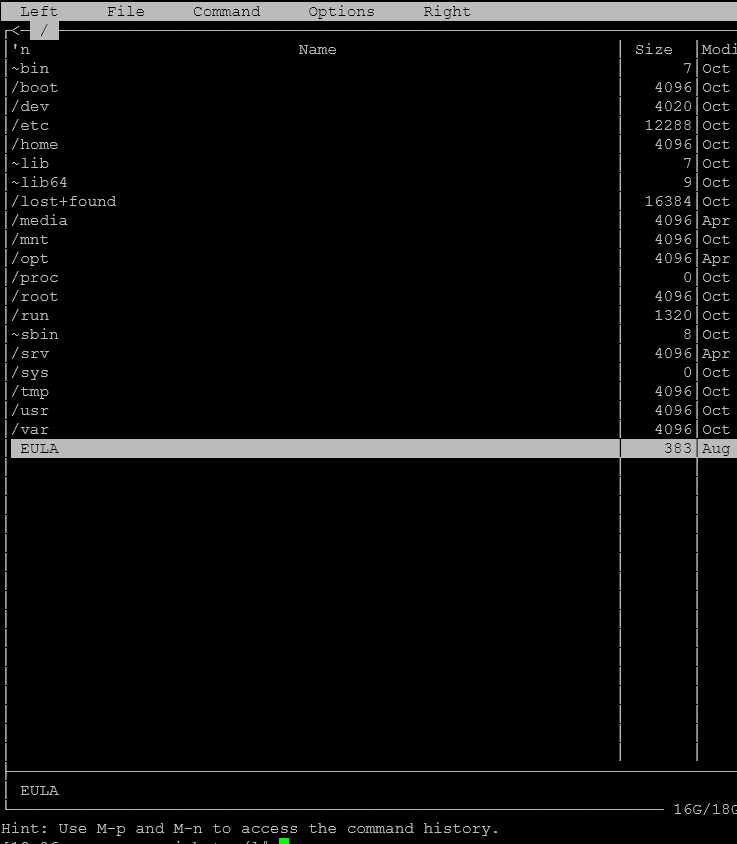

Not sure if it's a distro problem, but

`mc` is pretty broken on 8.3, including the release version.

Arrow-key navigation doesn't work; it types characters instead. That probably happens because of a missing ini file. https://github.com/microsoft/WSL/issues/26#issuecomment-210004998

-

@Tristis-Oris Indeed, I can confirm. I'm not sure about the config file, because it's the same version of `mc` as the one we have on 8.2.1, where it works. Maybe it needs a rebuild.

-

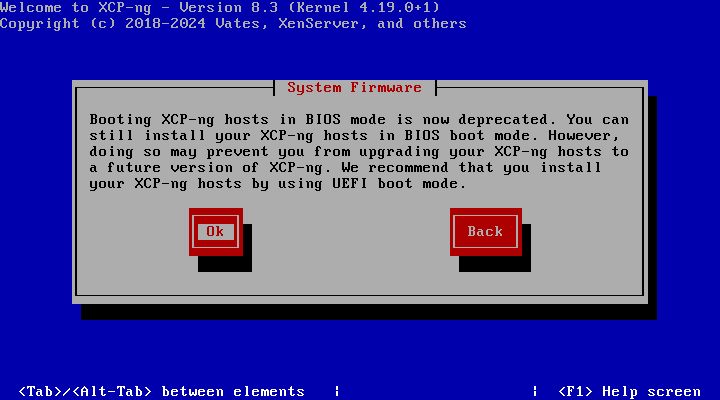

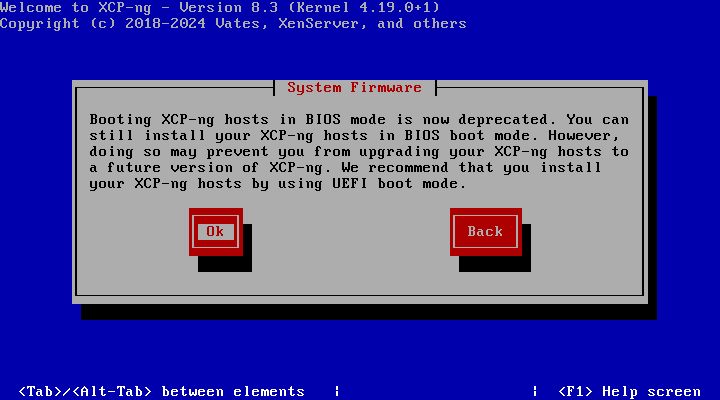

I noticed an error message while performing this upgrade. It says we should be using UEFI from this point forward. I didn't see that in the release notes, or didn't read that part very carefully. It lets you proceed, but mentions that later versions will not work in BIOS mode.

Here's a question that probably doesn't need to be worked out yet:

If we have old BIOS boot on 8.2.x and we go to upgrade to 8.x.x (the next LTS), will we be able to shut down, switch to UEFI, boot the installer, and upgrade? Or is this going to need a clean install?

-

@Greg_E said in XCP-ng 8.3 betas and RCs feedback:

> I noticed an error message while performing this upgrade... Says we should be using UEFI from this point forward. I didn't see that in the release notes, or didn't really read that part very well. It lets you go forward but mentions that higher revisions will not be able to work in BIOS mode.
>
> Here's a question that probably doesn't need to be worked out yet:
>
> If we have old BIOS boot for 8.2.x, and we go to upgrade to 8.x.x (next LTS), are we going to be able to switch to shutdown, switch to UEFI, boot the installer, and upgrade? Or is this going to need a clean install?

8.3 is the last release in the 8.x series. It is also a special release: at the moment it's a standard release, but at a later date it will become the LTS (the next one after 8.2).

The next version number after 8.3 is going to be 9.0 (https://xcp-ng.org/blog/2024/10/07/xcp-ng-8-3/ and https://docs.xcp-ng.org/releases/release-8-3/).

-

@john-c

The UEFI-only requirement is going to cut off a lot of older lab hardware; my HP DL360 Gen8 servers do not (cannot?) do UEFI. That also means Supermicro X9 is probably out; not sure about old Dell. It's progress, and we need to move with the times, but testing the 9.x betas is going to be a long way off for me due to hardware limitations.

-

@Greg_E said in XCP-ng 8.3 betas and RCs feedback:

> @john-c
>
> The UEFI only is going to cut off a bunch of older lab hardware, my HP DL360 gen 8 do not (can not?) have UEFI. That also means that Supermicro X9 is probably out, not sure about old Dell.It's progress and as such need to move with the times, but Beta 9 is going to be a long way off for my testing it due to hardware limitations.

If your old Dell machines are PowerEdge Gen 12 or later, they can use EFI (UEFI); you just need to enable it in the BIOS of those servers.

It may also be possible with your Supermicro X9, though a link to the model or its datasheet would help.

The HPE DL360 Gen8 is most likely out, unless a firmware update adds an EFI option. The HPE DL360 Gen9 or later will definitely work, though.

-

Weird that I got the warning even with UEFI enabled.
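When the warning appears despite UEFI being enabled in the firmware setup, it can help to confirm which mode the host actually booted in. On Linux, the kernel exposes `/sys/firmware/efi` only when it was started through EFI. The sketch below assumes that convention also holds in the XCP-ng dom0; under Xen this depends on the dom0 kernel's Xen EFI support, so treat a "BIOS" result with some caution. `boot_mode` is a hypothetical helper name.

```python
import os


def boot_mode(sysfs_root: str = "/sys") -> str:
    """Return "UEFI" if the EFI sysfs directory exists, else "BIOS".

    Assumption: on Linux, <sysfs>/firmware/efi exists only when the kernel
    was booted via EFI. Under Xen this also relies on dom0 EFI support.
    """
    efi_dir = os.path.join(sysfs_root, "firmware", "efi")
    return "UEFI" if os.path.isdir(efi_dir) else "BIOS"


if __name__ == "__main__":
    # Prints the detected mode of the machine this runs on.
    print(boot_mode())
```

The `sysfs_root` parameter only exists so the check can be pointed at a test directory; on a real host the default is what you want.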

-

@Greg_E All answers are actually in the release notes: https://docs.xcp-ng.org/releases/release-8-3/#legacy-bios

The deprecation decision comes from XenServer. Whether we'll follow the same schedule is yet to be decided, and will also depend on when they remove support completely.

-

@john-c No UEFI on HP DL Gen8... it's too bad, because they work just fine. HP DL Gen9 servers support UEFI boot. By the time XCP-ng 9.x rolls around it will be time to trash the G8 machines anyway. But the lack of BIOS boot will limit cheap lab/home machines...

-

@john-c

HP says Gen9 has UEFI, and those are probably now in the $200-shipped range with 40 threads and 128 GB of RAM, like the Gen8 were when I bought them.

I'll have to upgrade at some point, probably going to be a mini system next time.

As an FYI, I think vSphere 8 products require UEFI; I saw a notice about this in the instructions, but haven't installed it yet. Yes, I'm testing the competition, mostly for the experience of migrating off VMware.

-

@Greg_E said in XCP-ng 8.3 betas and RCs feedback:

> @john-c
>
> HP says Gen9 has UEFI, and probably those are now in the $200 shipped range with 40 threads and 128gb of RAM like the gen8 were when I bought them.
>
> I'll have to upgrade at some point, probably going to be a mini system next time.
>
> As an FYI, I think Vsphere8 products require UEFI, I saw a notice in the instructions about this, haven't installed yet. Yes testing the competition and mostly for experience migrating off of VMware.

Bargain Hardware carries a HPE DL360 Gen9 for only £80, a HPE DL380 Gen9 for £80 and a HPE DL560 Gen9 for £237. Check them out! https://www.bargainhardware.co.uk

I used them for my home lab's Dell PowerEdge servers, and they are really good. I haven't had problems with them or their products, and when I do, they are very good at helping out. They are also a good source of components and upgrades that are hard to find!

-

@Greg_E I saw a similar thing and dealt with it thusly:

1. Upgrade the master in BIOS mode to preserve the configuration.
2. Upgrade the second host in the pool (the only other one, in this case) using UEFI boot.
3. Migrate the 'master' role to the second host.
4. Reinstall the first host with UEFI and rejoin it to the pool.

Before doing this, take careful note of the `xe pool-enable-tls-verification` command mentioned in the release notes. You won't be able to join the cleanly reinstalled host without it.

Typing 'master' so many times has me wishing we had a better word for the pool coordinator.
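The rolling BIOS-to-UEFI procedure above can be written down as an ordered plan. This is a hedged Python sketch that only builds and prints the `xe` commands for a two-host pool; it never runs them. The UUIDs, address, and credentials are placeholders, `bios_to_uefi_plan` and `Step` are hypothetical names, and the `xe pool-eject` step is an assumption added here, not something the post mentions. Check each command with `xe help <command>` on your own hosts before running anything.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    description: str
    command: Optional[str]  # None means a manual step (installer, console)
    run_on: str             # which host the step is performed from


def bios_to_uefi_plan(old_master_uuid: str, second_host_uuid: str,
                      second_host_address: str) -> List[Step]:
    """Return the steps from the post, in order, as printable commands."""
    return [
        Step("Upgrade the current master to 8.3, still booting in BIOS mode",
             None, "old master"),
        Step("Upgrade the second host to 8.3, booting the installer in UEFI mode",
             None, "second host"),
        Step("Hand the master (pool coordinator) role to the second host",
             f"xe pool-designate-new-master host-uuid={second_host_uuid}",
             "old master"),
        # Assumption (not in the post): drop the old host's record from the
        # pool before wiping it, so no stale host entry is left behind.
        Step("Eject the old master from the pool",
             f"xe pool-eject host-uuid={old_master_uuid}", "new master"),
        Step("Reinstall the old master cleanly with UEFI boot",
             None, "old master"),
        # Per the post and the release notes, the join fails without this.
        Step("Enable TLS verification on the pool",
             "xe pool-enable-tls-verification", "new master"),
        Step("Rejoin the reinstalled host to the pool",
             f"xe pool-join master-address={second_host_address} "
             "master-username=<user> master-password=<password>",
             "reinstalled host"),
    ]


if __name__ == "__main__":
    plan = bios_to_uefi_plan("<old-master-uuid>", "<second-host-uuid>",
                             "<second-host-ip>")
    for i, step in enumerate(plan, 1):
        print(f"{i}. [{step.run_on}] {step.description}")
        if step.command:
            print(f"   $ {step.command}")
```

Keeping the plan as data rather than executing it makes the ordering constraints easy to review: the coordinator role moves before the old host is ejected, and TLS verification is enabled before the join.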

-

Thanks. I will eventually need to do this on my production system, thankfully it is new hardware and should be good until I retire (next guy's problem).

-

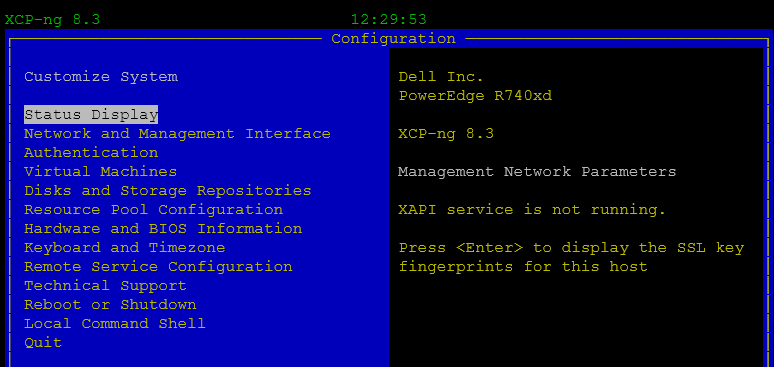

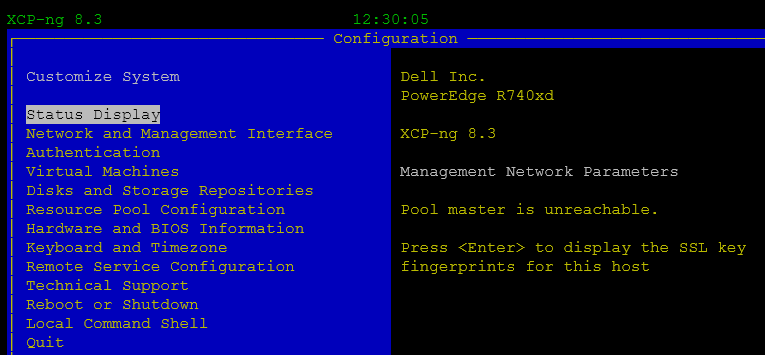

Not sure if it's an XO or a Xen issue.

8.3 release. I changed the pool master and now it's unavailable.

master

hosts

```
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] lock: opening lock file /var/lock/sm/5cceb02b-bda5-85d1-10c1-b5dfe4fbf66a/sr
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] Setting LVM_DEVICE to /dev/disk/by-scsid/36e43ec6100f01acf292713f000000006
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] lock: opening lock file /var/lock/sm/845ef0aa-7561-9253-b9fa-0b862c145c54/sr
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] LVMCache created for VG_XenStorage-845ef0aa-7561-9253-b9fa-0b862c145c54
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper acquired
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper sent '420301 - 77059.294148651hz#006��^?'
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] ['/sbin/vgs', '--readonly', 'VG_XenStorage-845ef0aa-7561-9253-b9fa-0b862c145c54']
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] pread SUCCESS
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper released
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] Entering _checkMetadataVolume
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] LVMCache: will initialize now
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] LVMCache: refreshing
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper acquired
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper sent '420301 - 77059.464449994hz#006��^?'
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] ['/sbin/lvs', '--noheadings', '--units', 'b', '-o', '+lv_tags', '/dev/VG_XenStorage-845ef0aa-7561-9253-b9fa-0b862c145c54']
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] pread SUCCESS
Oct 11 12:12:00 rpsrv-cas-caph fairlock[3895]: /run/fairlock/devicemapper released
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] Targets 1 and iscsi_sessions 1
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] lock: closed /var/lock/sm/5cceb02b-bda5-85d1-10c1-b5dfe4fbf66a/sr
Oct 11 12:12:00 rpsrv-cas-caph SM: [420301] lock: closed /var/lock/sm/845ef0aa-7561-9253-b9fa-0b862c145c54/sr
Oct 11 12:22:19 rpsrv-cas-caph SM: [423642] Raising exception [150, Failed to initialize XMLRPC connection]
Oct 11 12:22:19 rpsrv-cas-caph SM: [423642] Unable to open local XAPI session
```

-

- Is that on the released version of XCP-ng 8.3?

- What steps did you follow to change the pool master and why? Was it in the middle of the pool upgrade?

- Check `xensource.log` around the time XAPI starts up.
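Pulling out just the lines around XAPI startup is easier with a small filter than by scrolling. This is a minimal Python sketch, assuming the syslog-style `Mon DD HH:MM:SS` prefix used by the logs in this thread; because those lines carry no year, the year is taken from the time of interest you pass in. `parse_stamp` and `lines_around` are hypothetical helper names.

```python
import re
from datetime import datetime, timedelta
from typing import Iterable, Iterator, Optional

# Syslog-style prefix as seen in this thread, e.g. "Oct 11 13:29:38 host ...".
_STAMP = re.compile(r"^([A-Z][a-z]{2} +\d{1,2} \d{2}:\d{2}:\d{2}) ")


def parse_stamp(line: str, year: int) -> Optional[datetime]:
    """Return the line's timestamp, or None if it has no syslog prefix."""
    m = _STAMP.match(line)
    if m is None:
        return None
    # Syslog lines carry no year, so the caller supplies one explicitly.
    return datetime.strptime(f"{year} {m.group(1)}", "%Y %b %d %H:%M:%S")


def lines_around(lines: Iterable[str], center: datetime,
                 window: timedelta = timedelta(minutes=5)) -> Iterator[str]:
    """Yield lines stamped within +/- window of center (same year assumed)."""
    for line in lines:
        ts = parse_stamp(line, center.year)
        if ts is not None and abs(ts - center) <= window:
            yield line
```

For example, on the host itself you could pass `open("/var/log/xensource.log", errors="replace")` as `lines` and the time XAPI was restarted as `center`. Continuation lines without a timestamp are skipped, which keeps the output short but can hide multi-line messages.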

-

- Release version.

- Fresh pool installation, no tasks, just a master change via XO.

```
Oct 11 13:26:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC live_words = 1213142
Oct 11 13:26:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC heap_words = 1772032
Oct 11 13:26:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC free_words = 558699
Oct 11 13:29:38 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] client cert verification 172.29.1.153:443: SNI=pool path=/etc/stun$
Oct 11 13:29:38 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel: stunnel start\x0A
Oct 11 13:29:38 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] Started a client (pid:45316): -> 172.29.1.153:443
Oct 11 13:29:38 rpsrv-cas-navi xapi: [ info||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel disconnected fd_closed=true proc_quit=false
Oct 11 13:29:38 rpsrv-cas-navi xapi: [error||0 |bringing up management interface D:56aeb415b10f|master_connection] Caught Xapi_database.Master_connection.Goto_handler
Oct 11 13:29:38 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] Sleeping 256.000000 seconds before retrying master conne$
Oct 11 13:29:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC live_words = 854843
Oct 11 13:29:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC heap_words = 880128
Oct 11 13:29:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC free_words = 25284
Oct 11 13:32:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC live_words = 1571266
Oct 11 13:32:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC heap_words = 1772032
Oct 11 13:32:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC free_words = 200761
Oct 11 13:33:54 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] client cert verification 172.29.1.153:443: SNI=pool path=/etc/stun$
Oct 11 13:33:54 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel: stunnel start\x0A
Oct 11 13:33:54 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] Started a client (pid:46379): -> 172.29.1.153:443
Oct 11 13:33:54 rpsrv-cas-navi xapi: [ info||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel disconnected fd_closed=true proc_quit=false
Oct 11 13:33:54 rpsrv-cas-navi xapi: [error||0 |bringing up management interface D:56aeb415b10f|master_connection] Caught Xapi_database.Master_connection.Goto_handler
Oct 11 13:33:54 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] Sleeping 256.000000 seconds before retrying master conne$
Oct 11 13:35:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC live_words = 935099
Oct 11 13:35:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC heap_words = 1012224
Oct 11 13:35:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC free_words = 77123
Oct 11 13:38:10 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] client cert verification 172.29.1.153:443: SNI=pool path=/etc/stun$
Oct 11 13:38:10 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel: stunnel start\x0A
Oct 11 13:38:10 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|stunnel] Started a client (pid:47395): -> 172.29.1.153:443
Oct 11 13:38:10 rpsrv-cas-navi xapi: [ info||0 |bringing up management interface D:56aeb415b10f|master_connection] stunnel disconnected fd_closed=true proc_quit=false
Oct 11 13:38:10 rpsrv-cas-navi xapi: [error||0 |bringing up management interface D:56aeb415b10f|master_connection] Caught Xapi_database.Master_connection.Goto_handler
Oct 11 13:38:10 rpsrv-cas-navi xapi: [debug||0 |bringing up management interface D:56aeb415b10f|master_connection] Sleeping 256.000000 seconds before retrying master conne$
Oct 11 13:38:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC live_words = 1213031
Oct 11 13:38:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC heap_words = 1772032
Oct 11 13:38:52 rpsrv-cas-navi xcp-rrdd: [ info||7 ||rrdd_main] GC free_words = 558790
```
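The excerpt above repeats the same pattern every 256 seconds: stunnel starts a client towards 172.29.1.153:443, the connection is closed, and XAPI logs `Caught Xapi_database.Master_connection.Goto_handler` before sleeping and retrying. A minimal Python sketch to tally that pattern over a longer log could look like this. The match strings are copied from this excerpt only, so other XAPI versions may word these lines differently, and `summarize_master_retries` is a hypothetical helper.

```python
import re
from typing import Dict, Iterable, List

# Patterns taken verbatim from the log excerpt in this thread.
_CAUGHT = "Caught Xapi_database.Master_connection.Goto_handler"
_SLEEP = re.compile(r"Sleeping (\d+(?:\.\d+)?) seconds before retrying master")
_TARGET = re.compile(r"Started a client \(pid:\d+\): -> (\S+)")


def summarize_master_retries(lines: Iterable[str]) -> Dict[str, object]:
    """Count failed master connections, retry delays, and contacted targets."""
    failures = 0
    delays: List[float] = []
    targets = set()
    for line in lines:
        if _CAUGHT in line:
            failures += 1
        sleep = _SLEEP.search(line)
        if sleep:
            delays.append(float(sleep.group(1)))
        target = _TARGET.search(line)
        if target:
            targets.add(target.group(1))
    return {"failures": failures, "retry_delays": delays,
            "targets": sorted(targets)}
```

Run against `xensource.log` on the host that cannot reach the pool, this gives a quick count of failed attempts and the address it keeps contacting, which can then be compared with the management IP of the host that should now be the coordinator.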
