MAP_DUPLICATE_KEY error in XOA backup - VM's wont START now!

-

@jshiells said in MAP_DUPLICATE_KEY error in XOA backup - VM's wont START now!:

@tuxen no sorry, great idea but we are not seeing any errors like that in kern.log. this problem when it happens is across several xen hosts all at the same time. it would be wild if all of the xen hosts were having hardware issues during the small window of time this problem happened in. if it was one xen server then i would look at hardware but its all of them, letting me believe its XOA, a BUG in xcp-ng or a storage problem (even though we have seen no errors or monitoring blips at all on the truenas server)

In a cluster with shared resources, It only takes one unstable host or a crashed PID left with an open shared resource to cause some unclean state cluster-wide. If the hosts and VMs aren't protected by a HA/STONITH mechanism to automatically release the resources, a qemu crash might keep one or more VDIs in an invalid, blocked state and affect SR scans done by the master. Failed SR scans may prevent SR-dependent actions (eg. VM boot, snapshots, GC kicking etc).

I'm not saying there isn't a bug somewhere but running MEMTEST/CPU tests on the host that triggered the bad RIP error would be my starting point of investigation. Just to be sure.

-

After much digging I traced the following formal exceptions below from

XEN. For most of them, the "chop" error is burried in the bowels of XAPI.ie

Oct 18 21:46:29 fen-xcp-01 SMGC: [28249] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 18 21:46:29 fen-xcp-01 SMGC: [28249] raise Failure(result['ErrorDescription'])It's worth noting due to the time involved in this, the only things

considered in play where nfs-01/02 and lesser degree 03. The one XCP

pool (fen-xcp), and even lesser degree XOA itself.'I could not find any obvious issues with storage, neither hardware nor

data. Scrubs were fine, no cpu/hardware errors.I could not find any obvious issues with xcp hosts, neither hardware nor

data. No cpu/hardware errors.The only real change made was to correct the clock on nfs-01. I don't

see how that could affect this since most if not all locking is done

with flock.There is a valid argument to be made that Xen technically was responding

to an issue, though not entirely clear what nor how. Most of the other

errors / wtfbbq states are either directly related (in call path), or

indirectly (xen wanted a thing, didn't get it). Those are some deep

rabbit holes.There is more pre/post context to these, tried to include what I thought

made them a bit more easier to understand.

./SMlog.4.gz:Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] leaf-coalesce: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1774, in coalesceLeaf Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._coalesceLeaf(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2048, in _coalesceLeaf Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] if not self._snapshotCoalesce(vdi): Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2153, in _snapshotCoalesce Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._coalesce(tempSnap) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1962, in _coalesce Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) ./SMlog.4.gz:Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] leaf-coalesce: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1774, in coalesceLeaf Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._coalesceLeaf(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2048, in _coalesceLeaf Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] if not self._snapshotCoalesce(vdi): Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2153, in _snapshotCoalesce Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._coalesce(tempSnap) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1962, in _coalesce Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) ./SMlog.4.gz:Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] gc: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3388, in gc Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] _gc(None, srUuid, dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3273, in _gc Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] _gcLoop(sr, dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3214, in _gcLoop Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] sr.garbageCollect(dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1794, in garbageCollect Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self.deleteVDIs(vdiList) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2374, in deleteVDIs Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] SR.deleteVDIs(self, vdiList) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1808, in deleteVDIs Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] ./SMlog.5.gz:Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * E X C E P T I O N * Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] GC process exiting, no work left Oct 18 21:51:23 fen-xcp-01 SM: [30714] lock: released /var/lock/sm/0cff5362-5c89-2241-2207-a1d736d9ef5e/gc_active Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] In cleanup Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] SR 0cff ('fen-nfs-03 - DR (Diaster Recovery Storage ZFS/NFS)') (608 VDIs in 524 VHD trees): no changes Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~* Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *********************** Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * E X C E P T I O N * Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *********************** Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] gc: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3388, in gc Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] _gc(None, srUuid, dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3273, in _gc Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] _gcLoop(sr, dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3214, in _gcLoop Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] sr.garbageCollect(dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 1794, in garbageCollect Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self.deleteVDIs(vdiList) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2374, in deleteVDIs Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] SR.deleteVDIs(self, vdiList) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 1808, in deleteVDIs Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self.deleteVDI(vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self._checkSlaves(vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self._checkSlave(hostRef, vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] text = _host.call_plugin(*call) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] return self.__send(self.__name, args) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] raise Failure(result['ErrorDescription']) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~* Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * * * * * SR 0cff5362-5c89-2241-2207-a1d736d9ef5e: ERROR Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] Oct 18 21:51:28 fen-xcp-01 SM: [26746] lock: opening lock file /var/lock/sm/894e5d0d-c100-be00-4fc4-b0c6db478a26/sr -

Well, changing time on the NFS server could have an impact, since file access time attributes are used to do many checks in general. I'm not saying that's 100% the issue, but it tells how deep a small change could cause havoc.

-

Just an update on this:

we made sure all our server times were synced. issue happened again the next run.

just for shits and giggles we restarted toolstack on the all the hosts yesterday and the problem went away. no issues with the backup last night. maybe just a coincidence, we are continuing to monitor.

we also noticed that even though this CHOP error is coming up, snapshots are getting created

-

I have, what is hopefully a final update to this issue(s).

we upgraded to xoa version .99 a few weeks ago and the problem has now gone away. we suspect that some changes were made for timeouts in xoa that have resolved this , and a few other related problems.

-

O olivierlambert marked this topic as a question on

O olivierlambert marked this topic as a question on

-

O olivierlambert has marked this topic as solved on

O olivierlambert has marked this topic as solved on

-

This problem is back with vengeance. after almost a year of no issues, we have upgraded to version 109.2 of XOA and boom... probably is back

XCP-NG servers are on 8.2.1 May 6th update

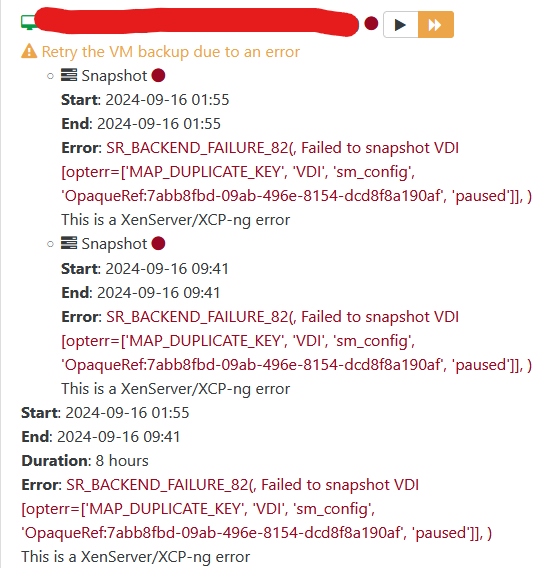

we get this following error in XOA

Error: SR_BACKEND_FAILURE_82(, Failed to snapshot VDI [opterr=failed to pause VDI 7d213264-1f89-488f-b273-eb91c186eaab], )

cant make snapshots of the effected VM anymore...

manual snapshots we get same error:

SR_BACKEND_FAILURE_82(, Failed to snapshot VDI [opterr=failed to pause VDI c51f22e2-ba5c-413b-8afc-9208a2b944ef],

but there is NO issues with the SR.failed 17 backups last night,

failed 3 backups tonight.

across 3 different storage arraysall those VM's we had to shut down and fix.

tapdisk connections to the disk have to be manually killed.we will double check all our interfaces again but i suspect it will be the same as last time:, no errors on networking or storage side.

something has changed, reverted.

-

It's hard to tell how it's triggered. Sometimes there are false correlations. It could be also a load on the SR, some concurrency/race conditions etc.

However, if you can reproduce the issue 100% of the time, then it's different. Those problems are really hard to pinpoint.

-

@olivierlambert the 3 truenas servers are very bored

we be lucking to be hitting 10% of there abilities even during the backups.

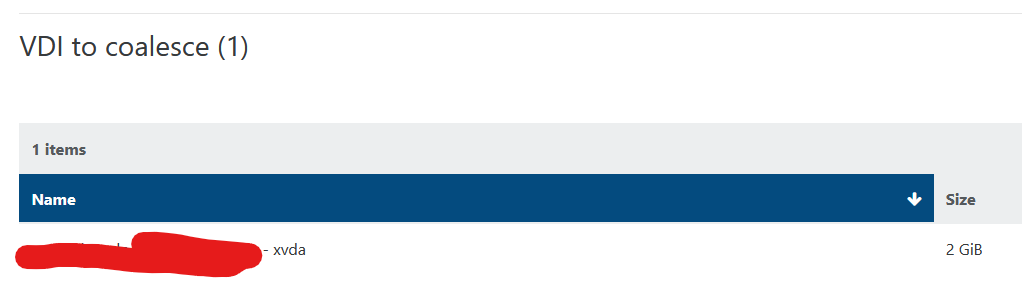

we be lucking to be hitting 10% of there abilities even during the backups.i think i have stumbled across something that maybe a bug or the cause:

lets say we have 5 servers, VM getting this error during backups is running on server3, When this happens we power it down on server3 and clear the stuck tapdisk connections a log entry shows up saying a snapshot link on server5 was also cleared....

example: "Cleared RW for 7d213264-1f89-488f-b273-eb91c186eaab on host 14c98969-ae12-4d7f-9c42-d7656aae01e5 on server5"

... but the VM was running on server3.....so HERE is what i think is happening... just a theory... I think the load balance plugin is moving VMs around during the backup process. could that be possible?? i feel based on what i am seeing this could be the cause.

-

Hmmm that's a possibility. An interesting lead to explore. Ie trying to do it yourself to see if the result if the same?

-

@olivierlambert it has happened again

"XXXXX-nfs-02 (FAST all Flash Storage ZFS/NFS): failed to unpause tapdisk","Failed to unpause tapdisk for VDI ab867371-7a74-4a9f-88a9-9fb89e7dd3de, VMs using this tapdisk have lost access to the corresponding disk(s)","XXXXX XXXX-xcp Pool","Oct 23, 2025 8:18 PM",""

VM for this VDI had been running on host XXX-xcp-03 but there were attachments on the vdi from host XXX-xcp-07 .

we did not manually move this VM but it has moved.

I really think this problem is getting caused by load balancer moving things around while delta backups are running.

can we look into having XOA LB plugin NOT move VM's getting touched by the XOA backup processes?

-

Question for @pierrebrunet I assume

-

Hi @jshiells,

Sorry for not answering earlier, Pierre wasn't working during the past weeks.

I'll soon investigate on how the load balancer interacts with backups.

-

Hi @jshiells,

After some tests, I don't think it can be caused by the load balancer.

If the load balancer tries to migrate a VM that is being backed up, the migration instantly fails and nothing happens.

Reversely, if a backup job starts when a VM is being migrated by the load balancer, the backup will fail for that VM with error "cannot backup a VM currently being migrated".

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login