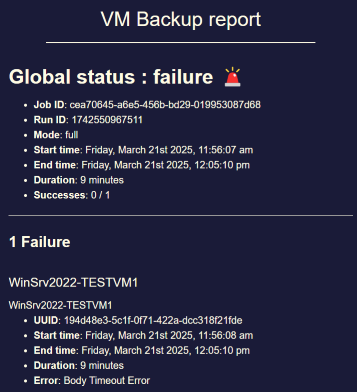

Potential bug with Windows VM backup: "Body Timeout Error"

-

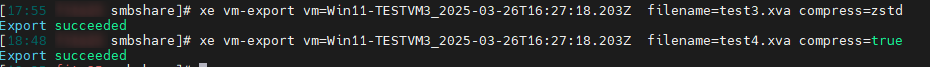

xe vm-export filename=export_filename compress=true(for gzip, otherwise usezstdfor Zstd compression)Note: make sure to mount a share with enough space and not export directly on the dom0 root, otherwise you'll fill it.

-

@olivierlambert

I have tested several scenarios on 1TB test VM.

Mounted share from same storage where backups from XO are stored.- Export of shutdown VM with zstd - succeeded

- Export of snapshot while VM is running with zstd - succeeded

- Export of snapshot while VM is running with gzip - succeeded

-

That's a very interesting result

It means the problem is either an interaction between XO and XAPI, or on XO's side, but not simply an XCP-ng issue as we could have thought initially

It means the problem is either an interaction between XO and XAPI, or on XO's side, but not simply an XCP-ng issue as we could have thought initially

Can you check if the XVA file seems to work when importing it? (it case

xefails silently). Usexe vm-import. -

@olivierlambert

Tested on one of the previous exports and import with " xe vm-import" was successful. VM Windows OS starts normally. -

-

Boosting this because it looks like I have a Windows Server 2022 that is going to keep failing. It also has more then 150GB of free space and I was thinking of shrinking it down (if only I could pull a good backup in case it breaks). I no longer need that much space.

That said, a Linux VM with more free space went zooming right along, way faster than the Server 2022 that I was also backing up at the same time. This other Server 2022 succeeded, but I'll want to try a second on all my Windows backups to make sure they work before starting them on a schedule.

I saw a Delta style mentioned above, mine fails with a Delta too. The snapshot is created, then the file compression and file copy starts, and this is where things fail.

Writing out to an NFS share, but I might try backing up across my router to my lab which has an SMB share for backup testing.

I'm using XCP-ng 8.2.x for and XO from sources with commit d7e64.

I'm migrating that VM from one storage device to another to see if that might be part of the issue, once it is done I'll give this backup another try.

-

Not sure if this helps, I was able to get this VM to backup using no compression. Now I'm going to make the drive smaller to remove most of the free space and see if compression works.

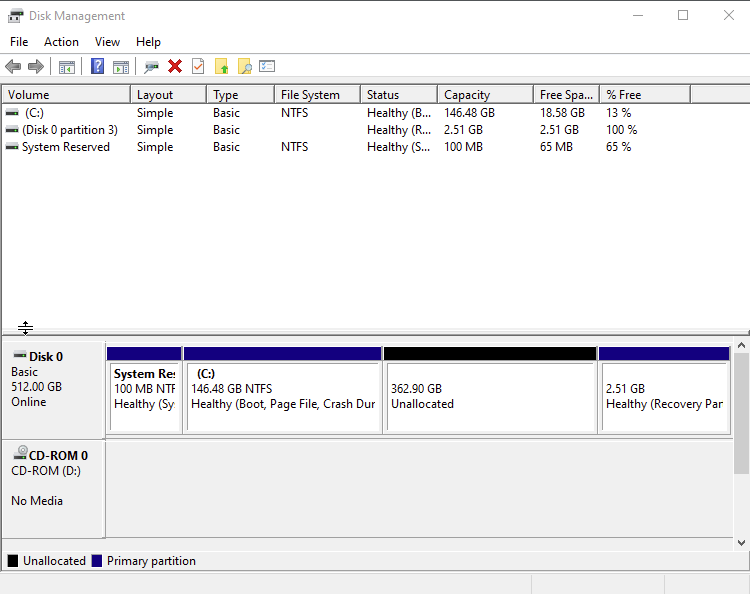

This VM had almost 400GB of free space, and I no longer need this much since Microsoft deprecated a feature I was using after win10, all my clients have been moved to win11.

I have one more "big" Windows VM that probably has a bunch of space I can reclaim, or I'll just go without compression for that one.

And this is only a Windows issue, my biggest Linux VM also has a lot of free space to hold disk images for deployment, and it was FAST compared to a windows backup.

[late edit] I forgot that this is a process. The Recovery partition sits at the end of disk space, so shrinking the main partition will leave uncommitted space between them, and not shrink anything at all. What I've done in the past was to boot to a Linux disk and use Gparted to move the Recovery where it needed to be. This machine is one of my domain controllers and will need to wait until I have "idle" time on the system to shut it down and do this, maybe tomorrow if I'm lucky. Since I have more than one, I generally shouldn't need to worry, but I still try to work around other users.

-

My previous ping didn't work so I will try my luck with @lsouai-vates

-

@olivierlambert transfered

-

I backed up another Windows Server 2022 that had a lot of free space, setting no compression is the workaround right now. I'll have to get both of these shrunk down to reasonable and see if compression starts working. That's and after lunch task for the second "big" VM. I'll report back after performing the shrink steps on the one I can reboot today.

I agree with the working theory way up at the top... The process is still going, counting each empty "block" and "compressing" it, but with no data moving for over 5 minutes, it errors out. And 120-150GB worth of empty space in a Windows VM is enough to hit that timer.

Why the Linux machines don't do this? Might be because all of mine are done in less than 10 minutes total, which doesn't leave a lot of time where that timer can run. 3 of my linux with "large" disk went just fine, a couple only took 3 minutes to compress and copy to the remote share.

[edit] After shrinking and moving the partitions, I'm finding that XO is not allowed to decrease the size of a "disk", so I might just be stuck with no compression on these two VMs.

-

@florent can you help him?

-

Hey,

I am experiencing the same issue using XO from sources (commit 4d77b79ce920925691d84b55169ea3b70f7a52f6), Node version 22, Debian 13.

I have multiple backup jobs and only one which is a full backup job is giving me issues.

Most VMs can be backed up by this full backup job just fine but some error out with "body timeout error", e.g.:

{ "id": "1762017810483", "message": "transfer", "start": 1762017810483, "status": "failure", "end": 1762018134258, "result": { "name": "BodyTimeoutError", "code": "UND_ERR_BODY_TIMEOUT", "message": "Body Timeout Error", "stack": "BodyTimeoutError: Body Timeout Error\n at FastTimer.onParserTimeout [as _onTimeout] (/etc/xen-orchestra/node_modules/undici/lib/dispatcher/client-h1.js:646:28)\n at Timeout.onTick [as _onTimeout] (/etc/xen-orchestra/node_modules/undici/lib/util/timers.js:162:13)\n at listOnTimeout (node:internal/timers:588:17)\n at process.processTimers (node:internal/timers:523:7)" } }XO from sources VM has 8 vCPU and 8GB RAM.

Link speed of the XCP-ng hosts is 50 Gbit/s.

XO VM can reach 20 Gbit/s to the NAS in iperf.Zstd is enabled for this backup job.

It appears that only big VMs (as in disk size) have this issue.

The VMs that have this issue on the full backup job can be backed up just fine via delta backup job.I read in another thread that this issue can be caused by dom0 hardware constrains but dom0 has 16 vCPU and is at ~40% CPU usage while backups are running.

RAM usage sits at 2GB out of 8GB used.I changed my full backup job to GZIP compression and will see if this helps.

Will report back.

I really need compression due to the large virtual disks of some VMs...Best regards

MajorP -

@MajorP93 im seeing this as well, I think the issue is related to communication between XO and XCP-NG.

I noticed that it doesn't seem to depend on the vdi size in our case, but rather latency between XO and XCP-NG, which are on different sites and connected via IPSEC VPN. -

@nikade Hmm I have a hard time understanding what might cause this issue in my case since all of our 5 XCP-ng hosts are on the same site. They can talk on layer 2 with each other and have 2x 50 Gbit/s LACP bond each...

The XO VM is running on the pool master itself.

Some of the VMs that threw this error are also even running on the pool master itself.

So I would expect that the traffic does not even have to exit the physical host in this case...

Latency should be perfectly fine in this case...All XCP-ng hosts, XO VM and NAS (backup remote) can ping each other at below 1ms latency...

Really weird.

If anyone has an idea regarding what could possibly cause this I would be grateful.

As I said before I want to test Gzip instead of Zstd but I have to wait until this backup job finished.

It has ~40TB of data to backup in total

-

-

@olivierlambert said in Potential bug with Windows VM backup: "Body Timeout Error":

I think we have a lead, I've seen a discussion between @florent and @dinhngtu recently about that topic

Sounds good!

So there is a fix currently being worked on? -

I think we have a lead to explore, we'll keep you posted when we have a branch to test

-

@olivierlambert said in Potential bug with Windows VM backup: "Body Timeout Error":

I think we have a lead to explore, we'll keep you posted when we have a branch to test

Sure! Thank you very much.

When there is a branch available I will be happy to compile, test and provide any information / log needed. -

I did 2 more tests.

- using full backup with encryption disabled on the remote (had it enabled before) --> same issue

- switching from zstd to gzip --> same issue

-

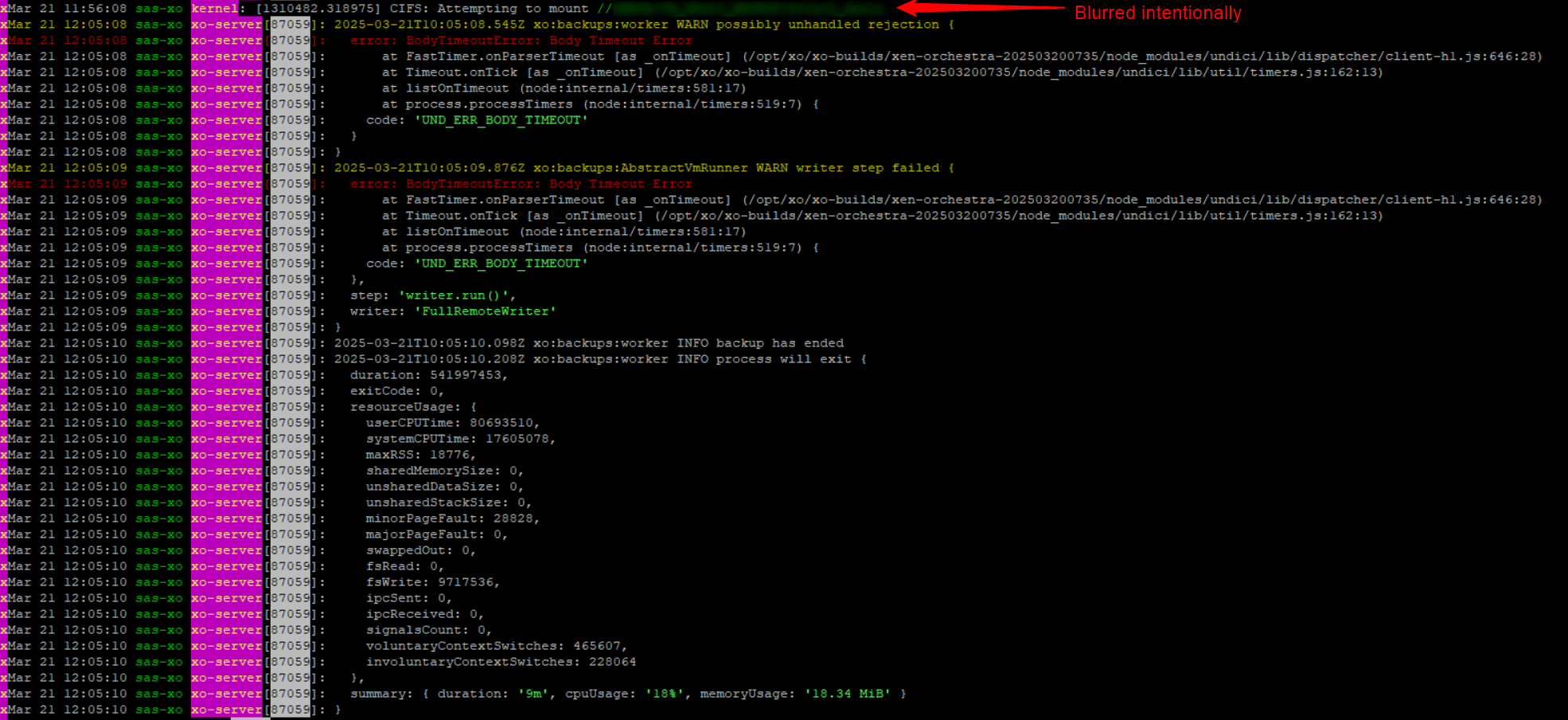

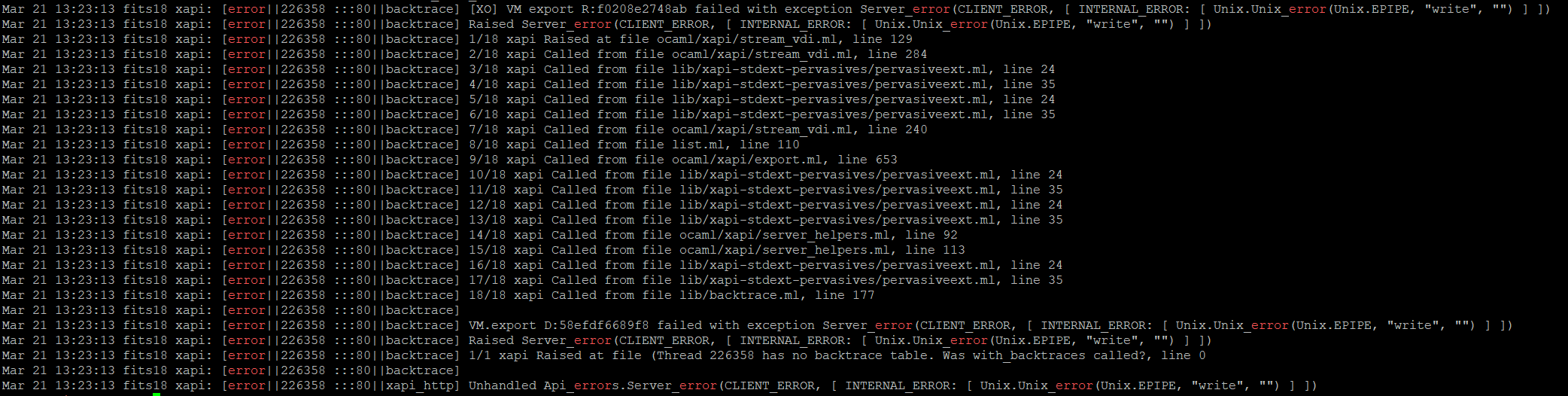

Hey, I did some more digging.

I found some log entries that were written at the moment the backup job threw the "body timeout error". (Those blocks of log entries appear for all VMs that show this problem).grep -i "VM.export" xensource.log | grep -i "error"

Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 HTTPS 192.168.60.30->:::80|[XO] VM export R:edfb08f9b55b|xapi_compression] nice failed to compress: exit code 70 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] [XO] VM export R:edfb08f9b55b failed with exception Server_error(CLIENT_ERROR, [ INTERNAL_ERROR: [ Unix.Unix_error(Unix.EPIPE, "write", "") ] ]) Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] Raised Server_error(CLIENT_ERROR, [ INTERNAL_ERROR: [ Unix.Unix_error(Unix.EPIPE, "write", "") ] ]) Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 1/16 xapi Raised at file ocaml/xapi/stream_vdi.ml, line 127 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 2/16 xapi Called from file ocaml/xapi/stream_vdi.ml, line 307 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 3/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 24 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 4/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 39 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 5/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 24 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 6/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 39 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 7/16 xapi Called from file ocaml/xapi/stream_vdi.ml, line 263 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 8/16 xapi Called from file list.ml, line 110 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 9/16 xapi Called from file ocaml/xapi/export.ml, line 707 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 10/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 24 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 11/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 39 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 12/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 24 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 13/16 xapi Called from file ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml, line 39 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 14/16 xapi Called from file ocaml/xapi/server_helpers.ml, line 75 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 15/16 xapi Called from file ocaml/xapi/server_helpers.ml, line 97 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] 16/16 xapi Called from file ocaml/libs/log/debug.ml, line 250 Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|VM.export D:6f2ee2bc66b8|backtrace] Nov 4 08:02:33 dat-xcpng01 xapi: [error||141766 :::80|handler:http/get_export D:b71f8095b88f|backtrace] VM.export D:6f2ee2bc66b8 failed with exception Server_error(CLIENT_ERROR, [ INTERNAL_ERROR: [ Unix.Unix_error(Unix.EPIPE, "write", "") ] ])daemon.log shows:

Nov 4 08:02:31 dat-xcpng01 forkexecd: [error||0 ||forkexecd] 135416 (/bin/nice -n 19 /usr/bin/ionice -c 3 /usr/bin/zstd) exited with code 70Maybe this gives some insights

To me it appears that the issue is caused by the compression (I had zstd enabled during the backup run).Best regards

//EDIT: This is what ChatGPT thinks about this (I know AI responses have to be taken with a grain of salt):

Why would there be long “no data” gaps? With full exports, XAPI compresses on the host side. When it encounters very large runs of zeros / sparse/unused regions, compression may yield almost nothing for long stretches. If the stream goes quiet longer than Undici’s bodyTimeout (default ~5 minutes), XO aborts. This explains why it only hits some big VMs and why delta (NBD) backups are fine.