@stormi Installed on our 2 production pools, DR and remote sites, 46 hosts total ranging from Dell, Lenovo, HP, and Supermicro servers, no issues to report!

Best posts made by flakpyro

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual selection of hosts. (A mixture of AMD and Intel hosts, SuperMicro, Asus, and Minisforum). No issues after a reboot, PCI Passthru, backups, etc continue to work smoothly. Also installed on a HP GL325 Gen 10 with no issues after reboot.

-

RE: XCP-ng 8.3 updates announcements and testing

@gduperrey Updated my usual test hosts (Minisforum and Supermicro X11), as well as two 2-host AMD pools (one pool of HP DL320 Gen10s and another of Asus Epyc servers of some sort), and lastly a Dell R360, all without issue.

-

RE: XCP-ng 8.3 updates announcements and testing

@gduperrey Installed on my usual round of test hosts. No issues to report so far! With such a small change I wasn't expecting anything to go wrong!

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual selection of hosts. (A mixture of AMD and Intel hosts, SuperMicro, Asus, and Minisforum). No issues after a reboot, PCI Passthru, backups, etc continue to work smoothly

-

RE: log_fs_usage / /var/log directory on pool master filling up constantly

One of our pools (5 hosts, 6 NFS SRs) had this issue when we first deployed it. I engaged with support from Vates and they changed a setting that reduced the frequency of the SR.scan job from every 30 seconds to every 2 minutes. This totally fixed the issue for us, and it is still fixed going on a year and a half later.

I dug back in our documentation and found the command they gave us:

xe host-param-set other-config:auto-scan-interval=120 uuid=<Host UUID>

Where the host UUID is that of your pool master.
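For anyone who wants to script this, here is a minimal sketch of the same change wrapped in small shell functions. It assumes it is run in dom0 on a pool member where the `xe` CLI is available. The function names are my own and purely illustrative; the 120 second default is just the value from the command above, not a general recommendation.

```shell
#!/bin/sh
# Sketch only: helper names below are hypothetical, not part of XCP-ng.

# Print the UUID of the current pool master.
pool_master_uuid() {
    xe pool-list params=master --minimal
}

# Set the SR auto-scan interval (seconds) on a host.
# $1 = host UUID, $2 = interval in seconds (defaults to 120, as in the post).
set_auto_scan_interval() {
    host_uuid=$1
    interval=${2:-120}
    xe host-param-set uuid="$host_uuid" other-config:auto-scan-interval="$interval"
}

# Read the stored value back to confirm the key was written.
get_auto_scan_interval() {
    xe host-param-get uuid="$1" param-name=other-config param-key=auto-scan-interval
}
```

Typical use would be `master=$(pool_master_uuid)`, then `set_auto_scan_interval "$master" 120`, then `get_auto_scan_interval "$master"` to check the value that was stored.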

-

RE: XCP-ng 8.3 updates announcements and testing

@stormi Installed on my usual test hosts (Intel Minisforum MS-01, and Supermicro running a Xeon E-2336 CPU). Also installed onto a 2-host AMD Epyc pool. Updates went smoothly, backups continue to function as before.

3 Windows 11 VMs had secure boot enabled. In XOA I clicked "Copy pool's default UEFI certificates to the VM" after the update was complete. The VMs continued to boot without issue afterwards.

-

RE: XCP-ng 8.3 updates announcements and testing

installed on 2 test machines

Machine 1:

Intel Xeon E-2336

SuperMicro board.

Machine 2:

Minisforum MS-01

i9-13900H

32 GB Ram

Using Intel X710 onboard NIC

Both machines installed fine and all VMs came up without issue after. My one test backup job also seemed to run without any issues.

-

RE: XCP-ng 8.3 updates announcements and testing

@gduperrey installed on 2 test machines

Machine 1:

Intel Xeon E-2336

SuperMicro board.

Machine 2:

Minisforum MS-01

i9-13900H

32 GB Ram

Using Intel X710 onboard NIC

Both machines installed fine and all VMs came up without issue after.

I ran a backup job after to test snapshot coalesce, no issues there.

-

RE: XCP-ng 8.3 updates announcements and testing

@stormi Updated a test machine running only a couple of VMs. Everything installed fine and rebooted without issue.

Machine is:

Intel Xeon E-2336

SuperMicro board.

One VM happens to be Windows-based with an Nvidia GPU passed through to it, running Blue Iris using the MSR fix found elsewhere on these forums. The fix continues to work with this version of Xen.

Latest posts made by flakpyro

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual batch of test hosts, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual batch of test hosts, so far so good.

-

RE: XCP-ng 8.3 updates announcements and testing

@Andrew I have experienced this twice so far as well.

-

RE: XCP-ng 8.3 updates announcements and testing

@stormi Updates deployed, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Updates deployed, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual test hosts without issues. However, I am not using the qcow2 disk format anywhere yet.

-

RE: Xen Orchestra 6.3.2 Random Replication Failure

@florent Yes, I am using NBD for all backups, but I am not purging snapshots or using CBT.

It's so rare, in fact, that I haven't had it happen since I made this post last week (when I had it happen twice in 2 days). It has occurred on our production XOA, so I won't be able to test a branch, and I have never seen it happen on my sources install at home.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on a handful of test machines. Not as many as usual, as I'm being very cautious with this one for now. Everything rebooted and VMs started OK after. Using VHD for everything currently.

-

RE: Xen Orchestra 6.3.2 Random Replication Failure

@pierrebrunet Thanks for the update. Glad to know it's not something unique to our environment and you were able to track down the cause!

-

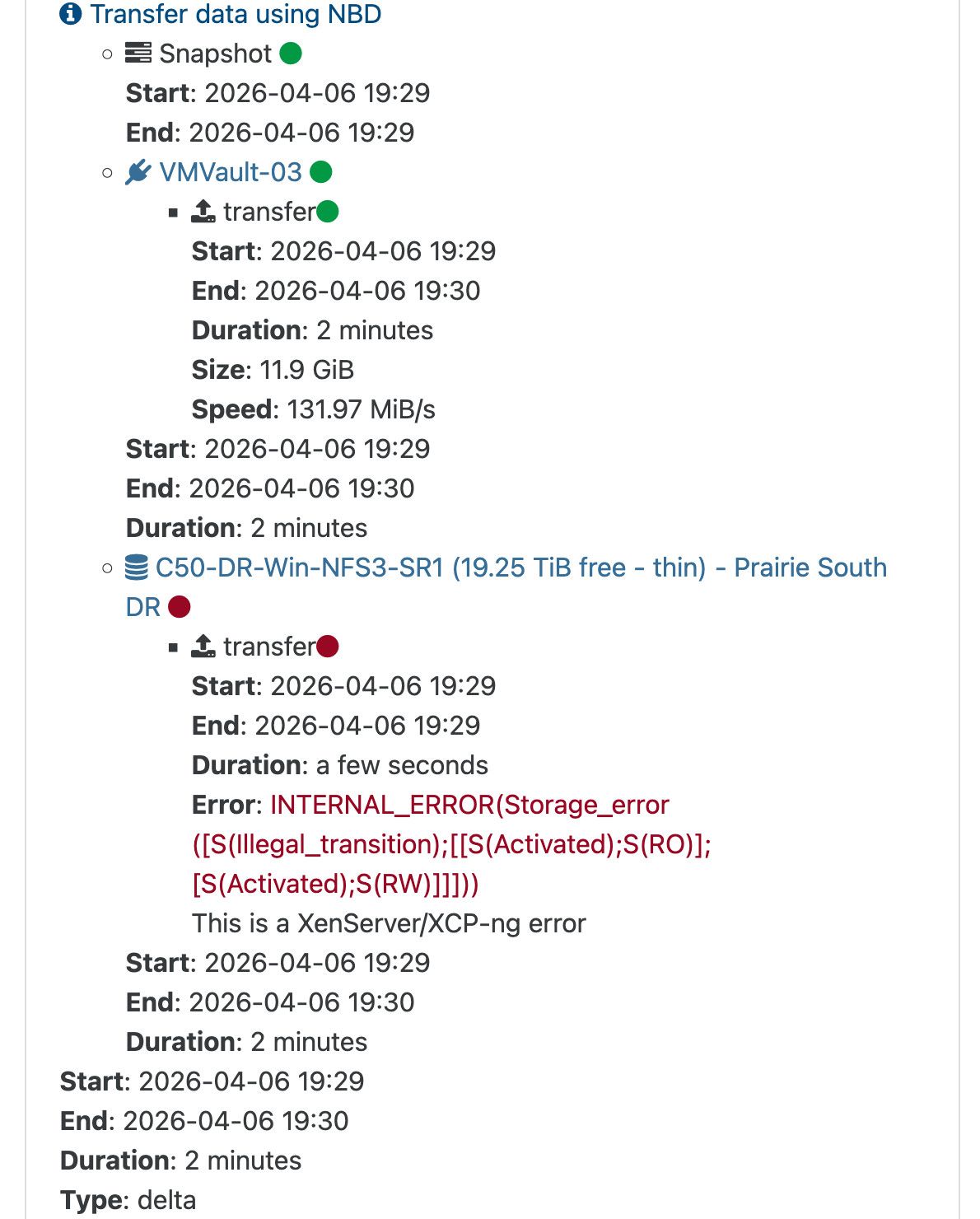

Xen Orchestra 6.3.2 Random Replication Failure

Since the XOA 6.3 release I have had a few random backup errors in an environment that has otherwise had fairly flawless backup performance for the last year. I cannot make out what exactly the error means, but retrying the job allows it to succeed without issue. It is also very intermittent.

Log attached. 2026-04-07T01_00_03.075Z - backup NG.txt

If the issue persists I will submit a ticket to dive into it further, but I have only had it happen 3 times since the release of the 6.3.x update, so it's hard to reproduce.

Replication target storage is a Pure C50R4 with NFS3 exports.