Installed on my usual hosts, one of which has an E810 NIC using the ice driver. No issues so far; however, I am not using LACP bonding on that host.


-

RE: XCP-ng 8.3 updates announcements and testing

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual batch of test hosts, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual batch of test hosts, so far so good.

-

RE: XCP-ng 8.3 updates announcements and testing

@Andrew I have experienced this twice so far as well.

-

RE: XCP-ng 8.3 updates announcements and testing

@stormi Updates deployed, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Updates deployed, no issues so far.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on my usual test hosts without issues. However, I am not using the qcow2 disk format anywhere yet.

-

RE: Xen Orchestra 6.3.2 Random Replication Failure

@florent Yes, I am using NBD for all backups, but I am not purging snapshots/using CBT.

It's so rare, in fact, that I haven't had it happen since I made this post last week (when it happened twice in 2 days). It has occurred on our production XOA, so I won't be able to test a branch, and I have never seen it happen on my from-sources install at home.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed on a handful of test machines; not as many as usual, as I'm being very cautious with this one for now. Everything rebooted and the VMs started OK afterwards. I'm using VHD for everything currently.

-

RE: Xen Orchestra 6.3.2 Random Replication Failure

@pierrebrunet Thanks for the update. Glad to know it's not something unique to our environment and that you were able to track down the cause!

-

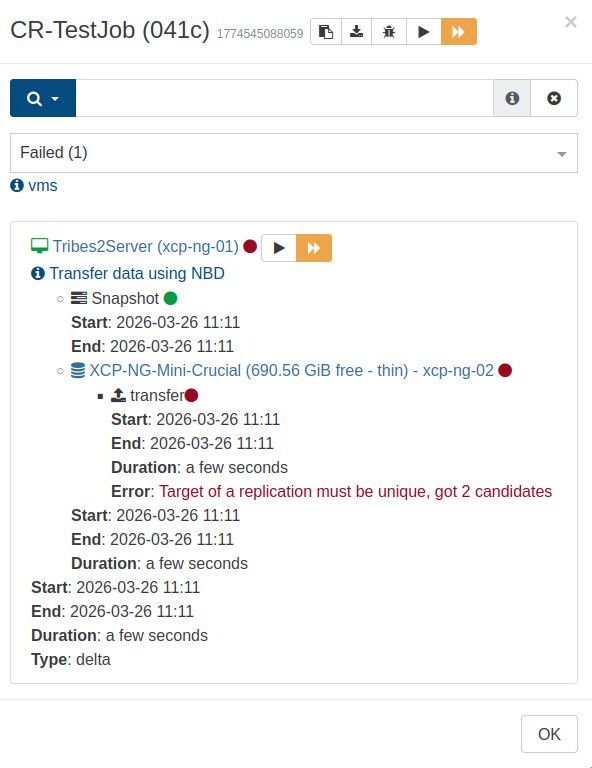

Xen Orchestra 6.3.2 Random Replication Failure

Since the XOA 6.3 release I have had a few random backup errors in an environment that has otherwise had near-flawless backup performance for the last year. I cannot make out what exactly the error means, but retrying the job allows it to succeed without issue. It is also very intermittent.

Log attached: 2026-04-07T01_00_03.075Z - backup NG.txt

If the issue persists I will submit a ticket to dig into it further, but it has only happened 3 times since the release of the 6.3.x update, so it's hard to reproduce.

Replication target storage is a Pure C50R4 with NFS3 exports.

-

RE: XOA 6.1.3 Replication fails with "VTPM_MAX_AMOUNT_REACHED(1)"

@florent I can confirm that this fixes the issue!

-

RE: XOA 6.1.3 Replication fails with "VTPM_MAX_AMOUNT_REACHED(1)"

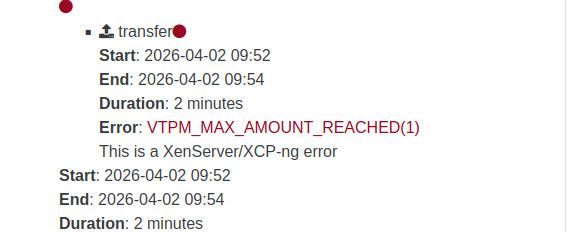

I created a brand-new Windows 11 VM with a VTPM and Secure Boot enabled, and I can reproduce this on a freshly created VM.

- Initial replication will work.

- Any follow-up replications will fail with Error: VTPM_MAX_AMOUNT_REACHED(1)

- Retrying the job after the failure will succeed.

-

RE: XOA 6.1.3 Replication fails with "VTPM_MAX_AMOUNT_REACHED(1)"

I tried removing the replica chain and let it start from scratch. The initial full replica was a success but unfortunately running a follow up incremental replication job results in the same error.

The entire transfer succeeds; it only seems to fail at the very end.

I have other VMs (Server 2022) with VTPMs that do not have this issue. The VM that is failing is Windows 11, and is the only Windows 11 VM we have running.

-

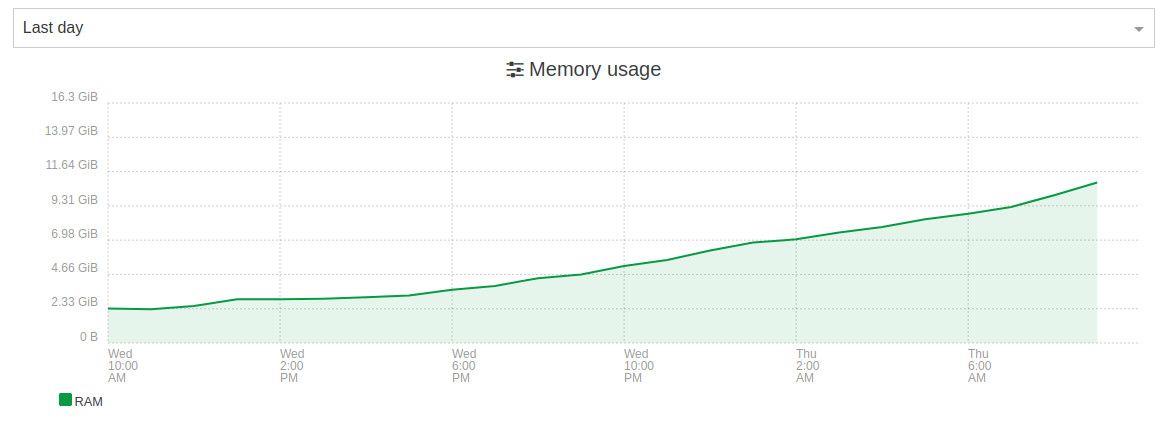

RE: XOA - Memory Usage

Looks like the issue still persists in 6.3.1?

Here is the usage since installing the latest update yesterday:

-

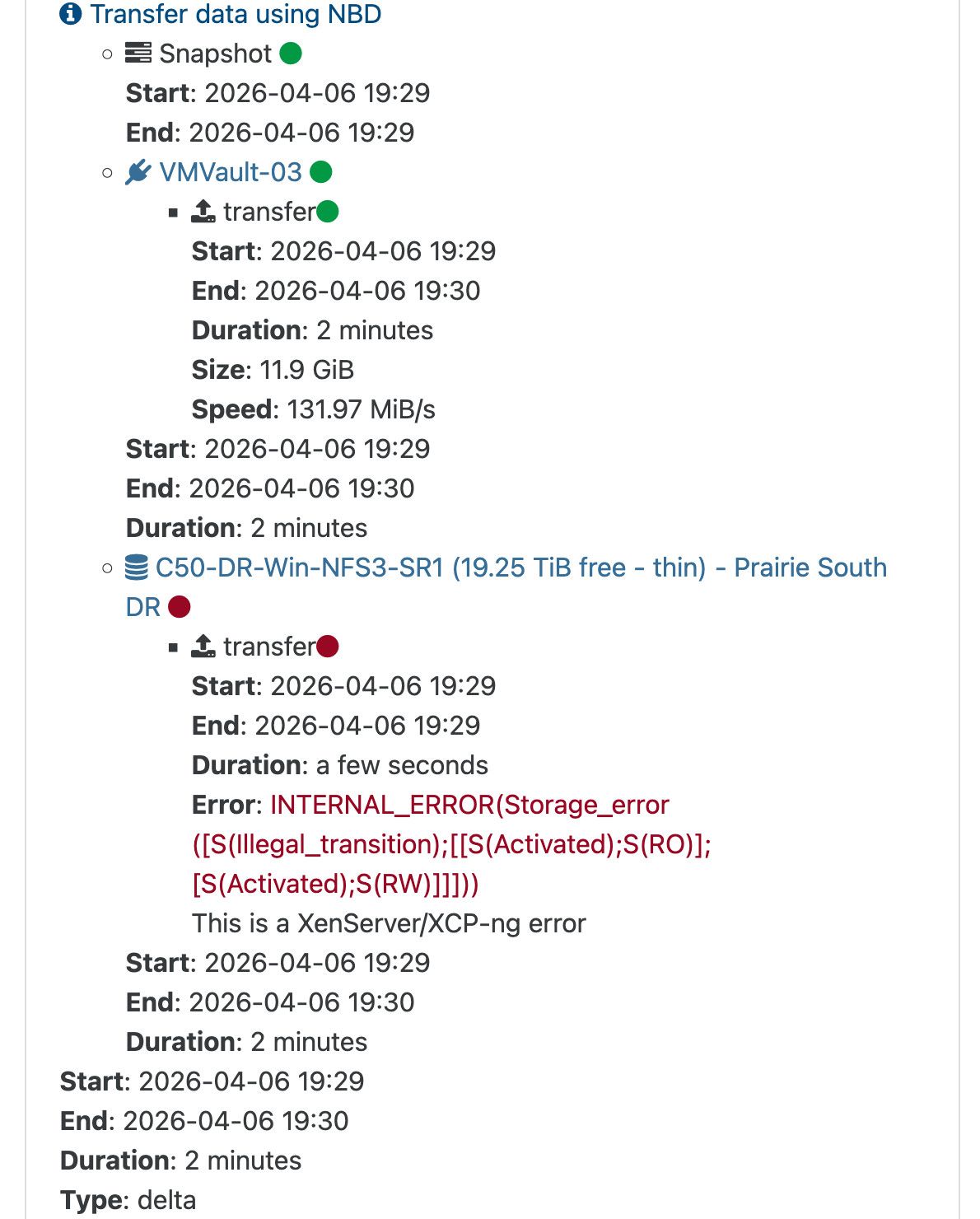

XOA 6.1.3 Replication fails with "VTPM_MAX_AMOUNT_REACHED(1)"

Since updating to XOA 6.1.3, I have a VM with Secure Boot enabled that fails to replicate with the error:

"VTPM_MAX_AMOUNT_REACHED(1)"

Retrying the backup allows it to complete.

```
"data": {
  "id": "69d826f4-383c-f163-b59a-8f3ea5132fd1",
  "isFull": false,
  "name_label": "C50-DR-Win-NFS3-SR1",
  "type": "SR"
},
"id": "1775101037617",
"message": "export",
"start": 1775101037617,
"status": "failure",
"tasks": [
  {
    "id": "1775101039730",
    "message": "transfer",
    "start": 1775101039730,
    "status": "failure",
    "end": 1775101063330,
    "result": {
      "code": "VTPM_MAX_AMOUNT_REACHED",
      "params": [ "1" ],
      "call": {
        "duration": 3,
        "method": "VTPM.create",
        "params": [
          "* session id *",
          "OpaqueRef:5263e3da-0772-f8c5-5344-32e81c08c37a",
          false
        ]
      },
      "message": "VTPM_MAX_AMOUNT_REACHED(1)",
      "name": "XapiError",
      "stack": "XapiError: VTPM_MAX_AMOUNT_REACHED(1)\n at XapiError.wrap (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_XapiError.mjs:16:12)\n at file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/transports/json-rpc.mjs:38:21\n at process.processTicksAndRejections (node:internal/process/task_queues:95:5)"
    }
  }
],
"end": 1775101063749
}
```

It appears to be trying to create a new TPM on the replica when one already exists?

I'm not sure why it fails during the job run but completes on a retry, but the behaviour is consistent.
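For anyone digging through these exported backup logs, here is a minimal Python sketch that walks the nested `tasks` tree and lists every failed task whose result carries a XAPI call (here, `VTPM.create`). It assumes the full exported log is valid JSON and relies only on the field names visible in the excerpt above (`tasks`, `status`, `message`, `result.code`, `result.params`, `result.call.method`). The function name `failed_calls` is hypothetical and is not part of Xen Orchestra.

```python
import json
import sys
from typing import Any, Iterator, Tuple


def failed_calls(task: dict) -> Iterator[Tuple[str, str, Any, list]]:
    """Yield (task message, XAPI method, error code, error params) for each
    failed task in an XO backup log tree whose result includes a XAPI call.

    Hypothetical helper; field names are taken from the log excerpt above.
    """
    result = task.get("result")
    if not isinstance(result, dict):
        # Successful tasks may have non-dict results (or none at all).
        result = {}
    call = result.get("call")
    if task.get("status") == "failure" and isinstance(call, dict):
        yield (
            task.get("message", ""),
            call.get("method", ""),
            result.get("code"),
            list(result.get("params", [])),
        )
    # Recurse into nested sub-tasks (e.g. export -> transfer).
    for child in task.get("tasks", []):
        yield from failed_calls(child)


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], encoding="utf-8") as fh:
        log = json.load(fh)
    for message, method, code, params in failed_calls(log):
        print(f"{message}: {method} -> {code}{params}")
```

Run against the excerpt above, the outer `export` task is skipped (it failed but has no `call` of its own) and only the `transfer` task is reported, as `VTPM.create` failing with `VTPM_MAX_AMOUNT_REACHED` and params `["1"]`. Whether the attached `.txt` log can be fed in directly depends on it containing the raw JSON, which is an assumption.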

-

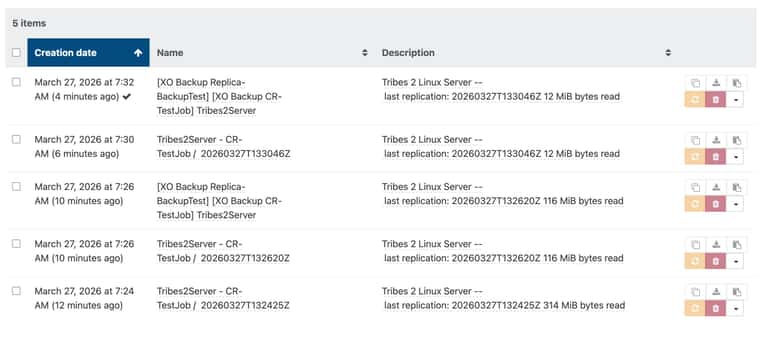

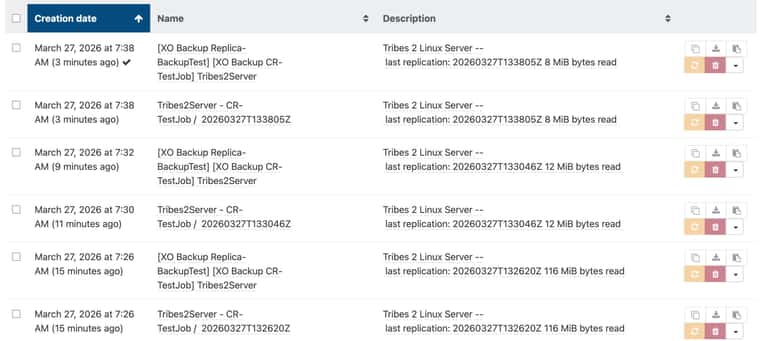

RE: Backing up from Replica triggers full backup

Happy to report from my limited testing that as of this morning this appears to be fixed in master.

-

RE: Backing up from Replica triggers full backup

@florent Since both jobs were test jobs that I have been running manually, each had an unnamed, disabled schedule, and the two did look identical, so I unintentionally did have multiple jobs on the same schedule. I have since named the schedule within my test job so that each is unique.

Updating to "f445b" shows improvement:

I was able to replicate from pool A to pool B, then run a backup job, which was incremental.

I then ran the replication job again, which was incremental and did not create a new VM!

Unfortunately, after this I ran the backup job again, which resulted in a full backup from the replica rather than a delta; I'm not sure why. The snapshot from the first backup run was also not removed, leaving 2 snapshots behind, one from each backup run.

I then tried the process again. I ran the CR job, which was a delta (this part seems fixed!), then ran the backup job after it. Same behaviour: a full backup ran instead of a delta, and the previous backup snapshot was left behind, leaving the VM looking like:

So it seems one problem is solved but another remains.

-

RE: Backing up from Replica triggers full backup

Retrying the job after the above failure results in a full replication and a new VM being created just as before.

-

RE: Backing up from Replica triggers full backup

@florent Just gave this branch a shot. Running the replication job after a backup job no longer results in a full replication, but it now just fails with: