https://access.redhat.com/solutions/5347091

System fails to mount filesystem on multipath partition during boot time in RHEL8

Solution Verified - Updated May 31 2021 at 2:25 PM - English

Environment

Red Hat Enterprise Linux 8

dracut-049-27.git20190906.el8.x86_64

Issue

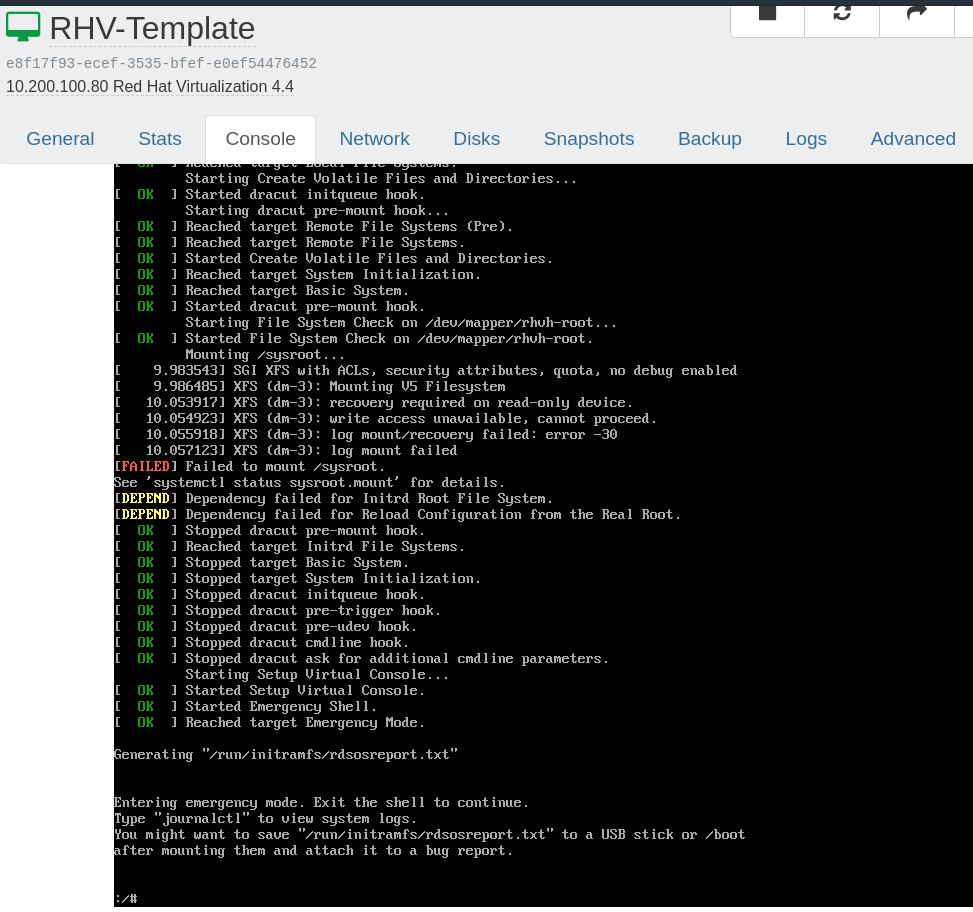

The system fails to mount a filesystem created on a multipath partition at boot time in RHEL 8.

[ 235.191955] testsystem systemd[1]: Mounting /sysroot...

[ 235.398136] testsystem kernel: SGI XFS with ACLs, security attributes, no debug enabled

[ 235.398913] testsystem mount[2922]: mount: /sysroot: /dev/sdb4 already mounted or mount point busy.

[ 235.399207] testsystem systemd[1]: sysroot.mount: Mount process exited, code=exited status=32

[ 235.399259] testsystem systemd[1]: sysroot.mount: Failed with result 'exit-code'.

[ 235.399480] testsystem systemd[1]: Failed to mount /sysroot.

Resolution

Upgrade the dracut package to dracut-049-135.git20210121.el8, as per RHBA-2021:1661.

As a workaround, rebuild the initramfs with /lib/udev/rules.d/11-dm-parts.rules included.

dracut -f -v --include /lib/udev/rules.d/11-dm-parts.rules /lib/udev/rules.d/11-dm-parts.rules

Alternatively, create /etc/dracut.conf.d/part.conf with the following line and rebuild the initramfs image.

# vi /etc/dracut.conf.d/part.conf

install_items+=" /lib/udev/rules.d/11-dm-parts.rules "
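The configuration-file workaround can also be scripted. The following is a minimal sketch, not an official procedure: the helper name `write_part_conf` is hypothetical, and the quoted value with surrounding spaces follows the convention shown in dracut.conf(5), so that the entry stays separate from any items already in the list.

```shell
#!/bin/sh
# Hypothetical helper: write the part.conf drop-in into the given
# dracut.conf.d directory (passed as an argument so it can be tried safely).
write_part_conf() {
    conf_dir=$1
    mkdir -p "$conf_dir" &&
    printf 'install_items+=" %s "\n' /lib/udev/rules.d/11-dm-parts.rules \
        > "$conf_dir/part.conf"
}

# On the affected system (as root) the steps would be along these lines:
#   write_part_conf /etc/dracut.conf.d
#   dracut -f -v        # rebuild the initramfs for the running kernel
```

Passing the directory as a parameter lets the helper be exercised against a scratch directory before touching /etc/dracut.conf.d.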

Root Cause

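dracut does not install /lib/udev/rules.d/11-dm-parts.rules into the initramfs. This rule changes the link priority for kpartx partition devices so that the /dev/disk/by-uuid links point to the multipath devices instead of the underlying SCSI path devices. Without it, the UUID link can resolve to a SCSI path device such as /dev/sdb4, and the mount fails because that device is already in use.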
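To see which kind of device each by-uuid link currently resolves to, a check along the following lines may help. It is only a sketch: the helper name `classify_dev` is hypothetical, and it assumes that device-mapper nodes (which include multipath partitions, but also LVM volumes) are named dm-N and that SCSI path devices are named sdX.

```shell
#!/bin/sh
# Hypothetical helper: classify a resolved device node by its kernel name.
# Assumption: device-mapper nodes are dm-N, SCSI path devices are sdX.
classify_dev() {
    case "$(basename "$1")" in
        dm-*) echo "device-mapper" ;;
        sd*)  echo "scsi" ;;
        *)    echo "other" ;;
    esac
}

# Resolve every by-uuid link and report what it points to.
for link in /dev/disk/by-uuid/*; do
    [ -e "$link" ] || continue          # skip if the glob matched nothing
    target=$(readlink -f "$link")
    printf '%s -> %s (%s)\n' "$link" "$target" "$(classify_dev "$target")"
done
```

A link for a filesystem on a multipath partition that reports `scsi` rather than `device-mapper` is consistent with the behaviour described above.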

Diagnostic Steps

Check the boot logs and verify whether the root filesystem was mounted using an sdX device or an mpath device.

[ 235.185721] testsystem systemd[1]: Starting File System Check on /dev/disk/by-uuid/3a9fc23b-e95d-44a2-b95c-9651799f8c77...

[ 235.190597] testsystem systemd-fsck[2920]: fsck.none doesn't exist, not checking file system on /dev/disk/by-uuid/3a9fc23b-e95d-44a2-b95c-9651799f8c77.

[ 235.191192] testsystem systemd[1]: Started File System Check on /dev/disk/by-uuid/3a9fc23b-e95d-44a2-b95c-9651799f8c77.

[ 235.191955] testsystem systemd[1]: Mounting /sysroot...

[ 235.398913] testsystem mount[2922]: mount: /sysroot: /dev/sdb4 already mounted or mount point busy.
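The device named in the mount error can be pulled out of a log with a short filter. This is an illustrative sketch: `busy_devices` is a hypothetical helper name, and the pattern assumes the message format shown in the log excerpt above.

```shell
#!/bin/sh
# Hypothetical helper: read log lines on stdin and print the device named in
# "already mounted or mount point busy" errors from mount.
busy_devices() {
    sed -n 's|.*mount: [^ ]*: \(/dev/[^ ]*\) already mounted or mount point busy.*|\1|p'
}

# Example with the log line from this article. On a live system the input
# could instead come from the boot log, for example `journalctl -b`.
printf '%s\n' \
  '[ 235.398913] testsystem mount[2922]: mount: /sysroot: /dev/sdb4 already mounted or mount point busy.' \
  | busy_devices
```

For the sample line this prints `/dev/sdb4`, an sdX path device rather than a multipath device.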

Verify whether the /lib/udev/rules.d/11-dm-parts.rules rule is present in the initramfs image. A count of 0 means the rule is missing.

# lsinitrd initramfs-4.18.0-193.el8.x86_64.img | grep dm-parts | wc -l

0
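The same check can be wrapped in a small script that reports the result in words. This is a sketch under assumptions: `has_dm_parts_rule` is a hypothetical helper, and the image for the running kernel is assumed to be at /boot/initramfs-$(uname -r).img.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the file listing on stdin mentions the rule.
has_dm_parts_rule() {
    grep -q '11-dm-parts\.rules'
}

# Assumption: default image location for the running kernel.
img="/boot/initramfs-$(uname -r).img"

# Run the check only where lsinitrd and a readable image are available.
if command -v lsinitrd >/dev/null 2>&1 && [ -r "$img" ]; then
    if lsinitrd "$img" | has_dm_parts_rule; then
        echo "11-dm-parts.rules is present in $img"
    else
        echo "11-dm-parts.rules is missing from $img"
    fi
fi
```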

Rebuild the initramfs image with /usr/lib/udev/rules.d/11-dm-parts.rules, then confirm that the rule is now listed in the image.

# lsinitrd initramfs-4.18.0-193.el8.x86_64.img | grep dm-parts

Arguments: -f -v -a 'multipath' --include '/usr/lib/udev/rules.d/11-dm-parts.rules' '/usr/lib/udev/rules.d/11-dm-parts.rules'

-rw-r--r-- 1 root root 1442 Dec 17 2019 usr/lib/udev/rules.d/11-dm-parts.rules