MAP_DUPLICATE_KEY error in XOA backup - VM's wont START now!

-

still doing more digging on our side too

the issue seems to be around here:

Sep 16 11:33:40 fen-xcp-01 SM: [24503] Paused or host_ref key found [{'paused': 'true', 'read-caching-enabled-on-b3a38eb3-bdad-47f6-92c0-15c11fa4125e': 'true', 'vhd-parent': '93c10df0-ba2a-4553-8e3e-43eb9752fba1', 'read-caching-enabled-on-c305769c-bb8a-43f4-88ff-be7d9f88ad1bc305769c-bb8a-43f4-88ff-be7d9f88ad1b': 'true', 'vhd-blocks': 'eJxjYBhYAAAAgAAB'}]

b3a38eb3-bdad-47f6-92c0-15c11fa4125e is fen-xcp-01

c305769c-bb8a-43f4-88ff-be7d9f88ad1b is fen-xcp-08I can't really see what 93c10df0-ba2a-4553-8e3e-43eb9752fba1 is; I would think a vdi, but it's not showing as so. no tap-disk process attached to anything with that in the name,

-

My first feeling is a I/O issue causing the process to hang, because the device

tdlisn't responding anymore. Reasons could be numerous, network (packet loss or whatever), process issue on the NFS server but as a result, the underlying process attached to this VDI was dead.To answer your question, 93c10 is the parent VHD (in a snapshot chain). You need the parent to be reachable to coalesce.

-

... that helps in that I know that 93c10 should be findable; but if it's not , it's not immediately apparent what to do.

I suppose that I could look to try and see any tap disk that we don't know what it might be attached to.

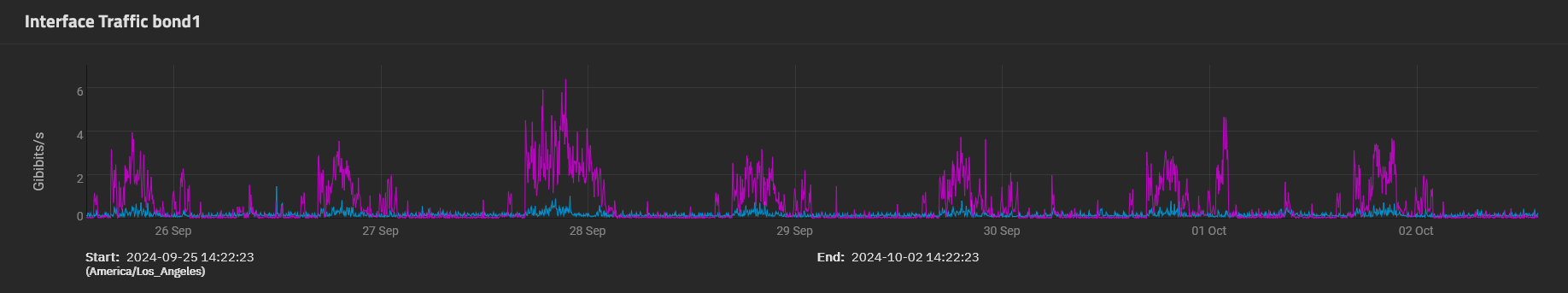

we just double checked our storage network. Monitoring software (snmpc and zabbix) show there have been no connectivity issues over the past 6 months. no traps , no issues, steady numbers on bandwidth used.

running a constant ping shows no drops. everything storage network is layer2 connectivity. NFS are connected via redundant 40gb/s to redundant 40gb/s switches. no routers involved.

387 VM's backed up correctly, 7 failed with this error that we cant resolve.

we are not seeing any other IO issues on VM's

i can create new snapshots in the pool with no issues on other VM', backups for other VM's did complete with no errors last night except for the 7 we are having problems with.

my storage guy has finished combing through the logs on all 3 NFS servers and not finding any signs of problems with drives, network, ZFS.

also note: the issue with the 7 vm's having problems exist on across different xen hosts AND different storage NFS servers.

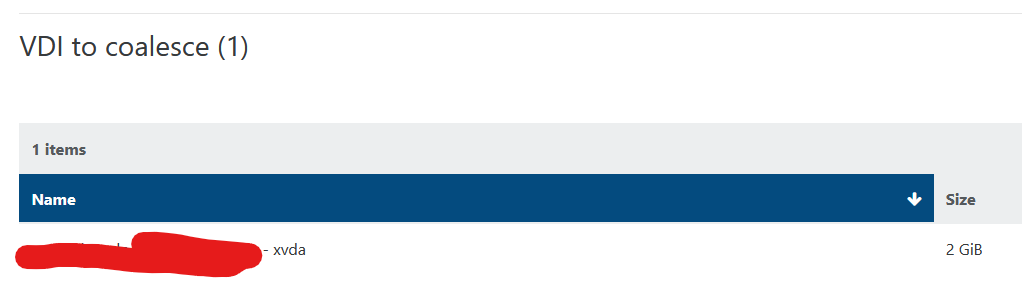

all 7 vm's are stuck with at least one disk in VDI to coalesce , same error/issue on all of them

cat SMlog | grep "Leaf-coalesce failed"

Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping

Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping

Sep 16 10:43:08 fen-xcp-01 SMGC: [1761] Leaf-coalesce failed on 3a4bdcc6(2.000G/8.500K), skipping

Sep 16 11:22:38 fen-xcp-01 SMGC: [26946] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping

Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping

Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping[13:15 fen-xcp-01 log]# cat SMlog | grep "4a2f04ae"

Sep 16 01:55:21 fen-xcp-01 SM: [7227] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 01:55:21 fen-xcp-01 SM: [7227] vdi_snapshot {'sr_uuid': 'ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1', 'subtask_of': 'DummyRef:|477538e7-9035-4d41-9318-dfeb69248734|VDI.snapshot', 'vdi_ref': 'OpaqueRef:5527b552-e1ec-449a-912e-4eb2fe440554', 'vdi_on_boot': 'persist', 'args': [], 'o_direct': False, 'vdi_location': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b', 'host_ref': 'OpaqueRef:a34e39e4-b42d-48d3-87a9-6eec76720259', 'session_ref': 'OpaqueRef:62704cca-7fb4-4110-a84c-b7fe0f0275f2', 'device_config': {'server': '10.20.86.80', 'options': '', 'SRmaster': 'true', 'serverpath': '/mnt/srv/databases', 'nfsversion': '4.1'}, 'command': 'vdi_snapshot', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:1d92d8fc-8c8f-4bdc-9de8-6eccf499d65a', 'driver_params': {'epochhint': '475c2776-2553-daa0-00e9-a95cf0cd0ee4'}, 'vdi_uuid': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b'} Sep 16 01:55:21 fen-xcp-01 SM: [7227] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 01:55:52 fen-xcp-01 SMGC: [3077] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 01:55:52 fen-xcp-01 SMGC: [3077] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 01:55:52 fen-xcp-01 SMGC: [3077] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 01:55:52 fen-xcp-01 SMGC: [3077] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 01:55:52 fen-xcp-01 SM: [3077] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 01:55:52 fen-xcp-01 SMGC: [3077] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SM: [3077] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Leaf-coalescing 4a2f04ae(60.000G/128.000K) -> *1869c2bd(60.000G/1.114G) Sep 16 01:55:53 fen-xcp-01 SM: [3077] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Removed leaf-coalesce from 4a2f04ae(60.000G/128.000K) Sep 16 01:55:53 fen-xcp-01 SMGC: [3077] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping Sep 16 04:08:52 fen-xcp-01 SMGC: [27850] 4a2f04ae(60.000G/128.000K) Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 04:23:04 fen-xcp-01 SM: [27850] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 04:23:04 fen-xcp-01 SMGC: [27850] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 04:23:05 fen-xcp-01 SM: [27850] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Leaf-coalescing 4a2f04ae(60.000G/128.000K) -> *1869c2bd(60.000G/1.114G) Sep 16 04:23:05 fen-xcp-01 SM: [27850] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Removed leaf-coalesce from 4a2f04ae(60.000G/128.000K) Sep 16 04:23:05 fen-xcp-01 SMGC: [27850] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping Sep 16 09:41:02 fen-xcp-01 SM: [973] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 09:41:02 fen-xcp-01 SM: [973] vdi_snapshot {'sr_uuid': 'ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1', 'subtask_of': 'DummyRef:|6677fd65-d9b2-4f85-a6ad-c2871f013368|VDI.snapshot', 'vdi_ref': 'OpaqueRef:5527b552-e1ec-449a-912e-4eb2fe440554', 'vdi_on_boot': 'persist', 'args': [], 'o_direct': False, 'vdi_location': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b', 'host_ref': 'OpaqueRef:a34e39e4-b42d-48d3-87a9-6eec76720259', 'session_ref': 'OpaqueRef:776ddeef-9758-4885-93a4-32e7fa0d2473', 'device_config': {'server': '10.20.86.80', 'options': '', 'SRmaster': 'true', 'serverpath': '/mnt/srv/databases', 'nfsversion': '4.1'}, 'command': 'vdi_snapshot', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:1d92d8fc-8c8f-4bdc-9de8-6eccf499d65a', 'driver_params': {'epochhint': 'b31e09c1-c512-f823-1a3e-fa37586fe962'}, 'vdi_uuid': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b'} Sep 16 09:41:02 fen-xcp-01 SM: [973] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 11:17:33 fen-xcp-01 SM: [26745] ['vhd-util', 'key', '-p', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] 4a2f04ae(60.000G/128.000K) Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 11:17:37 fen-xcp-01 SM: [26946] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 11:17:37 fen-xcp-01 SMGC: [26946] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SM: [26946] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SM: [26946] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 11:22:37 fen-xcp-01 SMGC: [26946] Leaf-coalescing 4a2f04ae(60.000G/128.000K) -> *1869c2bd(60.000G/1.114G) Sep 16 11:22:38 fen-xcp-01 SM: [26946] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 11:22:38 fen-xcp-01 SMGC: [26946] Removed leaf-coalesce from 4a2f04ae(60.000G/128.000K) Sep 16 11:22:38 fen-xcp-01 SMGC: [26946] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping Sep 16 11:55:55 fen-xcp-01 SM: [23125] ['vhd-util', 'key', '-p', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] 4a2f04ae(60.000G/128.000K) Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 11:55:59 fen-xcp-01 SM: [23306] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 11:55:59 fen-xcp-01 SMGC: [23306] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 11:56:00 fen-xcp-01 SM: [23842] ['vhd-util', 'key', '-p', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:56:31 fen-xcp-01 SM: [26211] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:56:31 fen-xcp-01 SM: [26211] vdi_update {'sr_uuid': 'ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1', 'subtask_of': 'DummyRef:|efb1535c-5bf0-454c-a213-7a2be0f0a177|VDI.stat', 'vdi_ref': 'OpaqueRef:5527b552-e1ec-449a-912e-4eb2fe440554', 'vdi_on_boot': 'persist', 'args': [], 'o_direct': False, 'vdi_location': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b', 'host_ref': 'OpaqueRef:a34e39e4-b42d-48d3-87a9-6eec76720259', 'session_ref': 'OpaqueRef:aa44b900-f6a7-4ee2-aab0-504a8b11cddc', 'device_config': {'server': '10.20.86.80', 'options': '', 'SRmaster': 'true', 'serverpath': '/mnt/srv/databases', 'nfsversion': '4.1'}, 'command': 'vdi_update', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:1d92d8fc-8c8f-4bdc-9de8-6eccf499d65a', 'vdi_uuid': '4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b'} Sep 16 11:56:31 fen-xcp-01 SM: [26211] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 11:56:58 fen-xcp-01 SM: [27737] ['vhd-util', 'key', '-p', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SM: [23306] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SM: [23306] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Leaf-coalescing 4a2f04ae(60.000G/128.000K) -> *1869c2bd(60.000G/1.114G) Sep 16 12:01:00 fen-xcp-01 SM: [23306] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Removed leaf-coalesce from 4a2f04ae(60.000G/128.000K) Sep 16 12:01:00 fen-xcp-01 SMGC: [23306] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skipping Sep 16 13:04:29 fen-xcp-01 SM: [23853] ['vhd-util', 'key', '-p', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] 4a2f04ae(60.000G/128.000K) Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 13:04:33 fen-xcp-01 SM: [24023] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 13:04:33 fen-xcp-01 SMGC: [24023] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 13:09:33 fen-xcp-01 SM: [24023] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 13:09:33 fen-xcp-01 SMGC: [24023] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Got on-boot for 4a2f04ae(60.000G/128.000K): 'persist' Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Got allow_caching for 4a2f04ae(60.000G/128.000K): False Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Got other-config for 4a2f04ae(60.000G/128.000K): {} Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Removed vhd-blocks from 4a2f04ae(60.000G/128.000K) Sep 16 13:09:34 fen-xcp-01 SM: [24023] ['/usr/bin/vhd-util', 'read', '--debug', '-B', '-n', '/var/run/sr-mount/ba6c1ba6-c6c8-caef-5d30-7951f50bbbc1/4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b.vhd'] Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Set vhd-blocks = eJztwQENAAAAwqD3T20PBxQAAAD8Gw8AAAE= for 4a2f04ae(60.000G/128.000K) Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Leaf-coalesce candidate: 4a2f04ae(60.000G/128.000K) Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Leaf-coalescing 4a2f04ae(60.000G/128.000K) -> *1869c2bd(60.000G/1.114G) Sep 16 13:09:34 fen-xcp-01 SM: [24023] Pause request for 4a2f04ae-6a1d-4bbe-bbc0-2f1a0c4db37b Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Removed leaf-coalesce from 4a2f04ae(60.000G/128.000K) Sep 16 13:09:34 fen-xcp-01 SMGC: [24023] Leaf-coalesce failed on 4a2f04ae(60.000G/128.000K), skippingcat SMlog | grep "3a4bdcc6"

Sep 16 11:33:51 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:52 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:52 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:52 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:53 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:53 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:53 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:54 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:54 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:54 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:55 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:55 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:55 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:56 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:56 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:56 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:57 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:57 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:57 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:58 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:58 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:58 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:59 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:33:59 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:33:59 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:00 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:00 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:00 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:01 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:01 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:01 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:02 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:02 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:02 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:03 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:03 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:03 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:04 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:04 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:04 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:05 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:05 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:05 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:06 fen-xcp-01 SM: [24503] lock: acquired /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:06 fen-xcp-01 SM: [24503] Adding tag to: 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 Sep 16 11:34:06 fen-xcp-01 SM: [24503] lock: released /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi Sep 16 11:34:07 fen-xcp-01 SM: [24503] ***** vdi_activate: EXCEPTION <class 'util.SMException'>, VDI 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 locked Sep 16 11:34:07 fen-xcp-01 SM: [24503] Raising exception [46, The VDI is not available [opterr=VDI 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 locked]] Sep 16 11:34:07 fen-xcp-01 SM: [24503] ***** NFS VHD: EXCEPTION <class 'SR.SROSError'>, The VDI is not available [opterr=VDI 3a4bdcc6-ce4c-4c00-aab3-2895061229f2 locked] Sep 16 11:34:08 fen-xcp-01 SM: [1804] ['/usr/sbin/td-util', 'query', 'vhd', '-vpfb', '/var/run/sr-mount/cfda3fef-663a-849b-3e47-692607e612e4/3a4bdcc6-ce4c-4c00-aab3-2895061229f2.vhd'] Sep 16 11:34:08 fen-xcp-01 SM: [1804] vdi_detach {'sr_uuid': 'cfda3fef-663a-849b-3e47-692607e612e4', 'subtask_of': 'DummyRef:|255a4ea2-036b-4b2c-8cfe-ee66144b5286|VDI.detach', 'vdi_ref': 'OpaqueRef:7abb8fbd-09ab-496e-8154-dcd8f8a190af', 'vdi_on_boot': 'persist', 'args': [], 'o_direct': False, 'vdi_location': '3a4bdcc6-ce4c-4c00-aab3-2895061229f2', 'host_ref': 'OpaqueRef:a34e39e4-b42d-48d3-87a9-6eec76720259', 'session_ref': 'OpaqueRef:433e29ff-9a31-497e-a831-19ef6e7478d8', 'device_config': {'server': '10.20.86.80', 'options': '', 'SRmaster': 'true', 'serverpath': '/mnt/srv/xcp_lowIO', 'nfsversion': '4.1'}, 'command': 'vdi_detach', 'vdi_allow_caching': 'false', 'sr_ref': 'OpaqueRef:795adcc6-8c21-4853-9c39-8b1b1b095abc', 'vdi_uuid': '3a4bdcc6-ce4c-4c00-aab3-2895061229f2'} Sep 16 11:34:08 fen-xcp-01 SM: [1804] lock: opening lock file /var/lock/sm/3a4bdcc6-ce4c-4c00-aab3-2895061229f2/vdi -

Again as I said, for such advanced investigation, you should open a support ticket. Hopefully, all your involved hosts are under support so our storage team could investigate more in depth what's going on.

-

Was a solution to this issue found? I am not running into the same thing in my home lab after my nightly delta and monthly full backup ended up running at the same time. i have 2 VM's each with a disk in the same state as the original poster.

-

@SeanMiller Have you tried running the

resetvdis.pycommand on the affected VDIs? You can read more about it here. -

@Danp sure did, it was no help. we ended up having to restore the effected VM's from backup

there was no sign of any storage network issues during the time this started, no RT,RX errors anywhere, no traps, nothing in the zfs server logs.. nothing.... we have hundreds of VM's running , only 7 were effected and at random times during the backup process. its a little concerning..

-

-

@SeanMiller no sorry, we ended up having to restore the VDI's form a known good backup.

-

Im restoring from backup.

This does have me a bit concerned, This type of issue should not be caused by a backup..

-

The root cause is not clear, I don't think it's the backup directly. It might be the generation of snapshots and reading them that cause a lot of sudden I/O on the storage.

This would require some intensive investigations, gather all the logs of all hosts and inspecting what's going on. Each installation is unique (hardware, network, disks, CPUs, memory, there's an infinite number of combinations), so finding a solution on a forum without spending days asking tons of questions seems complicated. What's concerning to me is that type of infrastructure should not be unsupported, because spending time to understand the problem is part of what we do. I'm not saying we'll find magically a solution or the root cause, but at least it could be investigated in details and compare if we have similar reports to go deeper.

-

@olivierlambert I can provide information on infrastructure if it would help. the issue has not happened again though , and i cannot re create the issue in my lab. even when backups are running we are REALLY under utilizing our NFS/ZFS NVME/SSD based storage with 40gb/s links. i'm not saying its not possible, but i would be shocked. we have no recorded tx/rx errors on truenas/swtich/hosts and no record of any snmp traps coming in for any issues with all the equipment involved.

-

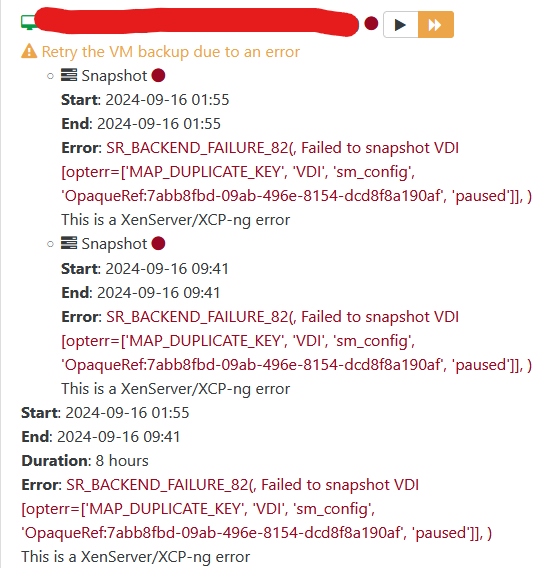

and its happening again tonight.

I have 55 failed backups tonight with a mix of the following errors.

Error: INTERNAL_ERROR(Invalid argument: chop)

Error: SR_BACKEND_FAILURE_82(, Failed to snapshot VDI [opterr=failed to pause VDI 346298a8-cfad-4e9b-84fe-6185fd5e7fbb], )Zero TX/RX errors on SFP's at on XEN hosts and Storage

ZERO TX/RX errors on switch ports

no errors on the ZFS/NFS storage devices

no traps in monitoring for any networking or storage issues. -

Well, obviously something is wrong somewhere, otherwise you wouldn't have this error. I think I never saw

Error: INTERNAL_ERROR(Invalid argument: chop)in my life.There's something triggering storage issues, not having TX/RX errors doesn't save you for other problems, like MTU problems, bad switch config or simply latency issue from the Dom0 perspective, you name it. I'd love to get it reproducible at some point but as you can see, there's "something" that makes it happen, now the question is "what". Those problems are really hard to diagnose because it's often a combo of environment and tipping points from timeouts or similar things.

-

@jshiells Did you also check

/var/log/kern.logfor hardware errors? I'm seeing qemu process crashing with bad RIP (Instruction pointer) value which screams for a hardware issue, IMO. Just a 1-bit flipping in memory is enough to cause unpleasant surprises. I hope the servers are using ECC memory. I'd run a memtest and some CPU stress test on that server.Some years ago, I had a two-socket Dell server with one bad core (no errors reported at boot). When the Xen scheduler ran a task on that core... Boom. Host crash.

-

@olivierlambert digging more into this today, we did find this error in xensource.log related to that "CHOP" message

xensource.log.11.gz:Oct 20 10:22:08 xxx-xxx-01 xapi: [error||115 pool_db_backup_thread|Pool DB sync D:d79f115776bd|pool_db_sync] Failed to synchronise DB with host OpaqueRef:a87f2682-dd77-4a2d-aa1a-b831b1d5107f: Server_error(INTERNAL_ERROR, [ Invalid argument: chop ])

xensource.log.22.gz:Oct 19 06:06:03 fen-xcp-01 xapi: [error||27967996 :::80||backtrace] host.get_servertime D:61ad83a0cd72 failed with exception (Invalid_argument chop)

-

@tuxen no sorry, great idea but we are not seeing any errors like that in kern.log. this problem when it happens is across several xen hosts all at the same time. it would be wild if all of the xen hosts were having hardware issues during the small window of time this problem happened in. if it was one xen server then i would look at hardware but its all of them, letting me believe its XOA, a BUG in xcp-ng or a storage problem (even though we have seen no errors or monitoring blips at all on the truenas server)

-

@jshiells said in MAP_DUPLICATE_KEY error in XOA backup - VM's wont START now!:

@tuxen no sorry, great idea but we are not seeing any errors like that in kern.log. this problem when it happens is across several xen hosts all at the same time. it would be wild if all of the xen hosts were having hardware issues during the small window of time this problem happened in. if it was one xen server then i would look at hardware but its all of them, letting me believe its XOA, a BUG in xcp-ng or a storage problem (even though we have seen no errors or monitoring blips at all on the truenas server)

In a cluster with shared resources, It only takes one unstable host or a crashed PID left with an open shared resource to cause some unclean state cluster-wide. If the hosts and VMs aren't protected by a HA/STONITH mechanism to automatically release the resources, a qemu crash might keep one or more VDIs in an invalid, blocked state and affect SR scans done by the master. Failed SR scans may prevent SR-dependent actions (eg. VM boot, snapshots, GC kicking etc).

I'm not saying there isn't a bug somewhere but running MEMTEST/CPU tests on the host that triggered the bad RIP error would be my starting point of investigation. Just to be sure.

-

After much digging I traced the following formal exceptions below from

XEN. For most of them, the "chop" error is burried in the bowels of XAPI.ie

Oct 18 21:46:29 fen-xcp-01 SMGC: [28249] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 18 21:46:29 fen-xcp-01 SMGC: [28249] raise Failure(result['ErrorDescription'])It's worth noting due to the time involved in this, the only things

considered in play where nfs-01/02 and lesser degree 03. The one XCP

pool (fen-xcp), and even lesser degree XOA itself.'I could not find any obvious issues with storage, neither hardware nor

data. Scrubs were fine, no cpu/hardware errors.I could not find any obvious issues with xcp hosts, neither hardware nor

data. No cpu/hardware errors.The only real change made was to correct the clock on nfs-01. I don't

see how that could affect this since most if not all locking is done

with flock.There is a valid argument to be made that Xen technically was responding

to an issue, though not entirely clear what nor how. Most of the other

errors / wtfbbq states are either directly related (in call path), or

indirectly (xen wanted a thing, didn't get it). Those are some deep

rabbit holes.There is more pre/post context to these, tried to include what I thought

made them a bit more easier to understand.

./SMlog.4.gz:Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] leaf-coalesce: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1774, in coalesceLeaf Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._coalesceLeaf(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2048, in _coalesceLeaf Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] if not self._snapshotCoalesce(vdi): Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2153, in _snapshotCoalesce Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._coalesce(tempSnap) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1962, in _coalesce Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:19:36 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) ./SMlog.4.gz:Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] leaf-coalesce: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1774, in coalesceLeaf Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._coalesceLeaf(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2048, in _coalesceLeaf Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] if not self._snapshotCoalesce(vdi): Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2153, in _snapshotCoalesce Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._coalesce(tempSnap) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1962, in _coalesce Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:22:04 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) ./SMlog.4.gz:Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] * E X C E P T I O N * Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] gc: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3388, in gc Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] _gc(None, srUuid, dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3273, in _gc Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] _gcLoop(sr, dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 3214, in _gcLoop Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] sr.garbageCollect(dryRun) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1794, in garbageCollect Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self.deleteVDIs(vdiList) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2374, in deleteVDIs Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] SR.deleteVDIs(self, vdiList) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 1808, in deleteVDIs Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self.deleteVDI(vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self._checkSlaves(vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] self._checkSlave(hostRef, vdi) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] text = _host.call_plugin(*call) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] return self.__send(self.__name, args) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] raise Failure(result['ErrorDescription']) Oct 19 00:22:11 fen-xcp-01 SMGC: [3729] ./SMlog.5.gz:Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * E X C E P T I O N * Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] GC process exiting, no work left Oct 18 21:51:23 fen-xcp-01 SM: [30714] lock: released /var/lock/sm/0cff5362-5c89-2241-2207-a1d736d9ef5e/gc_active Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] In cleanup Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] SR 0cff ('fen-nfs-03 - DR (Diaster Recovery Storage ZFS/NFS)') (608 VDIs in 524 VHD trees): no changes Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~* Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *********************** Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * E X C E P T I O N * Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *********************** Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] gc: EXCEPTION <class 'XenAPI.Failure'>, ['INTERNAL_ERROR', 'Invalid argument: chop'] Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3388, in gc Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] _gc(None, srUuid, dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3273, in _gc Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] _gcLoop(sr, dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 3214, in _gcLoop Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] sr.garbageCollect(dryRun) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 1794, in garbageCollect Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self.deleteVDIs(vdiList) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2374, in deleteVDIs Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] SR.deleteVDIs(self, vdiList) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 1808, in deleteVDIs Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self.deleteVDI(vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2469, in deleteVDI Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self._checkSlaves(vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2482, in _checkSlaves Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] self._checkSlave(hostRef, vdi) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/opt/xensource/sm/cleanup.py", line 2491, in _checkSlave Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] text = _host.call_plugin(*call) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 264, in __call__ Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] return self.__send(self.__name, args) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 160, in xenapi_request Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] result = _parse_result(getattr(self, methodname)(*full_params)) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] File "/usr/lib/python2.7/site-packages/XenAPI.py", line 238, in _parse_result Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] raise Failure(result['ErrorDescription']) Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] *~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~*~* Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] * * * * * SR 0cff5362-5c89-2241-2207-a1d736d9ef5e: ERROR Oct 18 21:51:23 fen-xcp-01 SMGC: [30714] Oct 18 21:51:28 fen-xcp-01 SM: [26746] lock: opening lock file /var/lock/sm/894e5d0d-c100-be00-4fc4-b0c6db478a26/sr -

Well, changing time on the NFS server could have an impact, since file access time attributes are used to do many checks in general. I'm not saying that's 100% the issue, but it tells how deep a small change could cause havoc.

Hello! It looks like you're interested in this conversation, but you don't have an account yet.

Getting fed up of having to scroll through the same posts each visit? When you register for an account, you'll always come back to exactly where you were before, and choose to be notified of new replies (either via email, or push notification). You'll also be able to save bookmarks and upvote posts to show your appreciation to other community members.

With your input, this post could be even better 💗

Register Login