@Pilow Yeah, I'd run iostat to see how the resources are being limited. Something like "iostat -dtkx 10" gives you extended stats every 10 seconds during that replication process, so you can look at the wait times, queue sizes, etc. to see if that helps identify any bottlenecks.
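If it helps to sift through the output afterwards, here is a minimal Python sketch that parses an extended-stats device table and flags devices that look saturated. The sample text is made up and trimmed to a few columns for illustration (real output has more, and column names such as "aqu-sz" versus "avgqu-sz" differ between sysstat versions). The function names and the thresholds are my own assumptions, not recommended values.

```python
# Illustrative, hand-written sample; not real measurements.
SAMPLE = """\
Device            r/s     w/s     rkB/s     wkB/s   aqu-sz  %util
sda              1.00   250.00     16.00  98000.00     4.20  97.50
sdb              0.50     3.00      8.00     40.00     0.01   1.20
"""


def parse_iostat_block(text):
    """Parse device tables into a list of dicts keyed by column name."""
    rows = []
    header = None
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            header = None  # a blank line ends the current table
            continue
        if fields[0].rstrip(":") == "Device":
            header = fields[1:]  # column names after the device column
            continue
        # Only accept rows that match the current header's width.
        if header and len(fields) == len(header) + 1:
            row = {"device": fields[0]}
            for name, raw in zip(header, fields[1:]):
                row[name] = float(raw)
            rows.append(row)
    return rows


def busy_devices(rows, util_threshold=80.0, queue_threshold=1.0):
    """Return device names whose utilization or queue size exceeds a threshold."""
    flagged = []
    for row in rows:
        util = row.get("%util", 0.0)
        # Newer sysstat calls it aqu-sz, older versions avgqu-sz.
        queue = row.get("aqu-sz", row.get("avgqu-sz", 0.0))
        if util >= util_threshold or queue >= queue_threshold:
            flagged.append(row["device"])
    return flagged


if __name__ == "__main__":
    print(busy_devices(parse_iostat_block(SAMPLE)))
```

You would feed it the captured iostat text instead of SAMPLE; timestamp lines from the -t option are skipped because they do not match the table width.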

Posts

-

RE: Continuous Replication Speed

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

@poddingue Thank you kindly! Honestly, whatever organizational structure you think is best is fine by me.

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

@poddingue I am confused about how your updated articles can be accessed from my main Github page. I only see them if I follow your "pull" link. What needs to be done to commit them?

Thank you!

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

@pierrebrunet Yes, that looks very good. Some of the images are stacked instead of side-by-side, but who cares?

Thanks ever so much again!

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

@poddingue Wow, fantastic! Your time and efforts are most appreciated. And I'd be more than willing to have the contents put on the docs.xcp-ng.org site. I'm just pleased that the information can still be found useful and will be able to serve the community for some time to come.

My only question is whether, with these additions, it is possible to view the full articles similar to how the PDF and improved Word documents I hacked render that first article. I'm afraid I'm not savvy enough about Github to understand this. Many thanks again!

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

Folks, I made the effort to convert the first blog article from PDF to MS Word .docx format, where I was able to edit it and clean it up much more easily. It's now there along with the messier PDF version on the Github site. When I get a chance, I plan to tackle the other two articles. Thank you for your patience. Note that to see the Word doc, you have to download it, as Github itself doesn't render it.

-

RE: GPU share to more Windows VMs on same XCP-NG node

@Aleksander I fear that all these -- even now the RTX cards from NVIDIA -- generally require licensing when run on servers, whether for pass-through or assigned to individual VMs. As well, installing an NVIDIA license server is then necessary. They have become more strict about this over the past several years, as RTX cards used to be exempt.

There are, however, some exceptions, but it's complicated and confusing! For details, consult NVIDIA documents such as this one: https://docs.nvidia.com/vgpu/latest/grid-licensing-user-guide/index.html

P.S. Unfortunately, since retirement a few years ago, I no longer have any hands-on equipment at my disposal.

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

@poddingue Good evening. Honestly, I'd be totally fine with your taking a full copy of everything over to XCP-ng and augmenting it over time as new information becomes available. It'd stand a better chance of longevity there than in my hands, and all I'd ask for is acknowledgement for the original work. There's no real need for me to keep a totally separate copy of the materials, which would only lead to confusion as things likely would diverge over time.

As mentioned above, there are more forum articles and such out there, and when I have time, I'll see if I can hunt some more of those down, as well.

I really appreciate what you folks are doing at XCP-ng and the time and effort you're putting into your products. Above all, the feedback and communication have been better than pretty much anyone else I've ever dealt with in the IT industry.

P.S. @johnnezero did a super summarizing job with his "Series Overview & Quick Reference" that includes some of the most useful commands in addition to a synopsis of each of the articles. Very impressive!

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

I tried adding the HTML file bundles and made a horrible mess, which took forever for me to clean up. The on-line instructions were not very helpful. What I saved as files also seemed to contain way more stuff than it should have, some seemingly unrelated to the original blog. Sigh. I'm no Github expert, that's for sure.

-

RE: Server Admin Guide: A Tale of Two Servers: BIOS, GPU, and NUMA Tuning for XCP-ng: Preserving the valuable work done by Tobias Kreidl (@tjkreidl)

This is great to see, thank you for taking the time to rescue this; and thanks to @john.c for the recovery work and to @tjkreidl for writing it in the first place.

I went looking, and there is a small XCP-ng-specific piece on this in the official docs under NUMA affinity (https://docs.xcp-ng.org/compute#numa-affinity), but it's nothing like the depth of the Tale of Two Servers series, so having the originals archived is genuinely useful.

I won't pretend to judge how much of the 2019 BIOS and GPU-scheduler guidance still maps cleanly onto current hardware and XCP-ng versions; others here will know where it's aged and where it hasn't.

I'll make sure this is on our radar on the docs side, because it keeps coming up. Really appreciate you keeping this from disappearing.

Thank you kindly for your positive comments and appreciation. To me, it's amazing how some information can stay relevant for long periods of time, even given the rapid state of evolution in the technology sectors. I will try to get the full HTML docs uploaded soon, as well. There are a number of other XenServer articles I discovered a while back on a Polish server, and I will see what else I can retrieve.

My original avocation for 15 years was that of an astronomer, so research is in my blood and diving into specific issues and doing extensive testing have always been a big part of my motivation to better understand as well as share knowledge.

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@johnnezero The full HTML versions will render much better. The PDF conversion is less than perfect. I'll try to get those uploaded, as well.

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@johnnezero Thanks, man! I hope you and others find it and the related other two articles useful.

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@johnnezero Cool -- you guys probably have a way better idea of a proper layout and organization of the materials. Thank you!

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

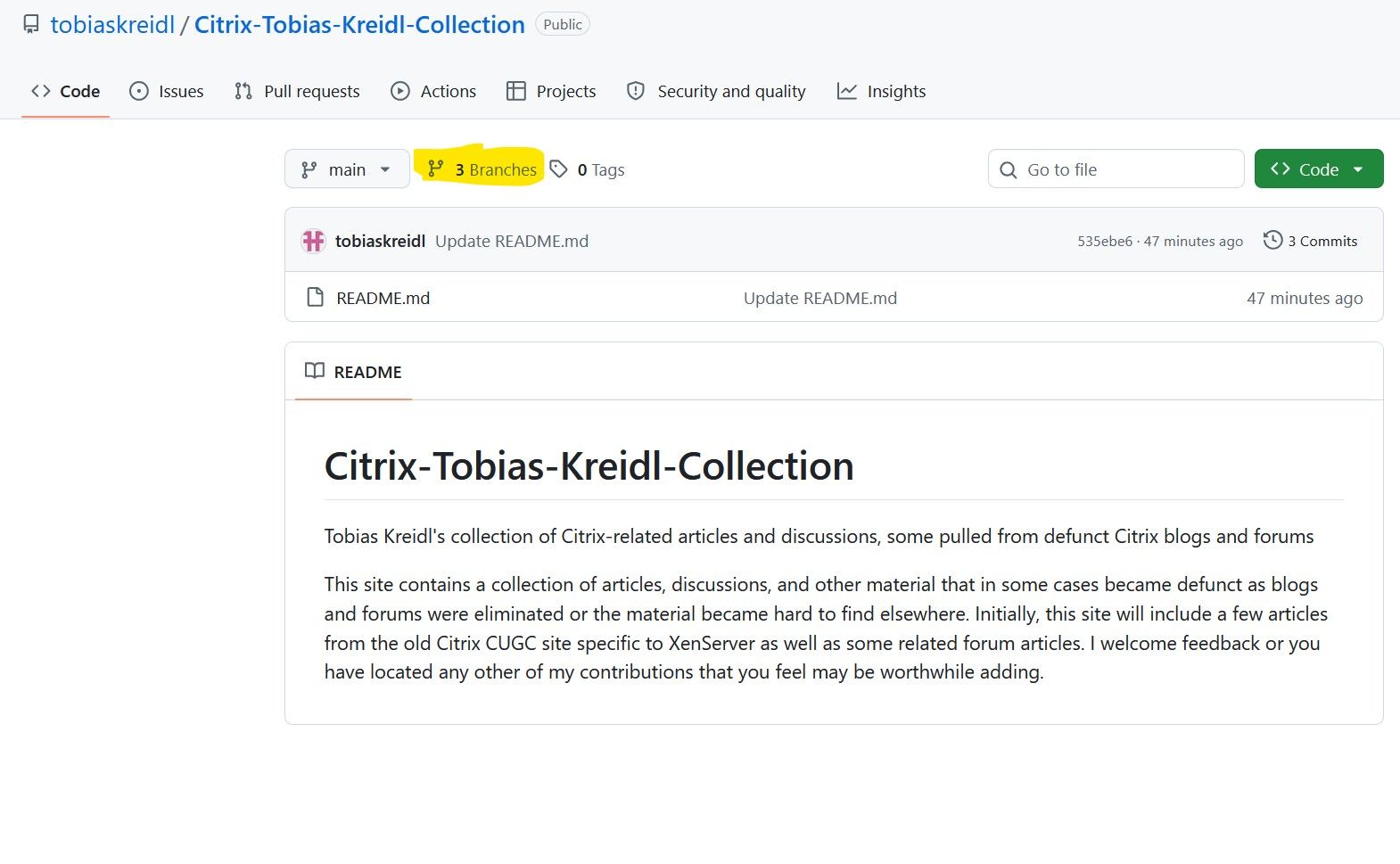

@john.c They are under two branches ... do you not see them?

XenServer-Articles and XenServer-Forum-Threads -- they landed under the "Branches" section ...

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@john.c @johnnezero Well, the Github repository is in place already! Access it at:

https://github.com/tobiaskreidl/Citrix-Tobias-Kreidl-Collection

Feel free to find more material to add, as will I, and thank you kindly for the suggestions and support.

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@john.c Wow, that was amazing -- not sure why my searches were unsuccessful, but many thanks! I think Github might be a good option for putting these on-line as a more reliable spot. And, yes, preserving images is always a challenge.

I do hope some of that information may be useful to you and thanks much again for all your efforts, John!

P.S. Seems the easiest way to get the full content is to save the Web page as a PDF file, which I have since done. You can, of course, also download the whole package to an HTML folder but that output looks a bit messy, though it does split things out nicely. I'll consider one or the other or both for putting up somewhere, like on Github, but at least I have them now!

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@john.c Not found with the Wayback Machine, alas. Still not finding it anywhere else, but will keep looking!

It's a crying shame Citrix didn't preserve the treasure trove of old community blogs.

-

RE: Tag-Based Automation: Manage VM CPU Priority via assigned tag.

@johnnezero It would also be interesting to take UMA/NUMA into account, as VMs -- in particular, VMs with vGPUs -- can run much more efficiently if they do not cross memory bank boundaries that span more than one CPU instance. On some Linux systems -- not sure about the one hosting XCP-ng -- you can even disable NUMA. Just an additional thought here. A number of years ago I published a three-part series, "A Tale of Two Servers", discussing a number of related optimizations, but alas, the Citrix blogs were eliminated and I'm not sure where copies of these articles exist anymore. But there are plenty of articles on this, in particular by Frank Denneman, and also ones like the following:

https://indico.cern.ch/event/304944/contributions/1672535/attachments/578723/796898/numa.pdf

https://docs.xenserver.com/en-us/xenserver/9/numa.html

-

RE: Too many snapshots

@Pilow Agree.... have to be sure that garbage collection is completed or it'll never catch up if backups continue to be run without the coalesce completing.

-

RE: Too many snapshots

@Pilow The other thing to consider is being cognizant of how long your backups typically take (or even planning for a worst-case condition) and defining the backup intervals accordingly.

In other words, if you know you cannot consistently finish your incremental backups in less than an hour, schedule them 90 minutes or two hours apart. It's better IMO to have a solid backup less frequently than to have them fail on a regular basis.
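As a rough illustration of that rule of thumb, here is a minimal Python sketch that pads a worst-case backup duration and rounds it up to a scheduling step. The function name, the 50% margin, and the 30-minute step are my own assumptions for illustration, not settings from any backup tool.

```python
import math
from datetime import timedelta


def safe_backup_interval(worst_case, margin=0.5, step=timedelta(minutes=30)):
    """Pad the worst-case duration by `margin`, then round up to a multiple of `step`.

    margin=0.5 means 50% headroom on top of the worst case (an assumed value).
    """
    padded = worst_case * (1 + margin)
    steps = math.ceil(padded / step)  # number of whole steps needed
    return steps * step


if __name__ == "__main__":
    # Worst-case incremental backup of one hour.
    print(safe_backup_interval(timedelta(hours=1)))
    # More cautious: 100% headroom.
    print(safe_backup_interval(timedelta(hours=1), margin=1.0))
```

With a one-hour worst case this gives 90 minutes at the default margin and two hours at 100% headroom, which lines up with the intervals suggested above.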