XCP-ng 8.3 updates announcements and testing

-

@MajorP93 You replied to my message and not to stormi's, so I just wanted to clear up any misunderstanding.

-

@manilx No, I did not. I replied to this thread in general, not to your post. Looking at the answers to your specific post where you reported the RPU issue, I can only see one reply, which was written by Stormi (asking for logs).

Going forward: you can identify replies to your posts by looking for posts that either quote your message or tag your username using the @ character.

-

Yes, new update candidates, again!

This is, in theory, the very last round of fixes before QCOW2 support comes as an official update!

What changed

Storage

sm + blktap:

- Fix a never-ending coalesce task and an associated tapdisk crash which would leave the QCOW2 VDI corrupted. Thanks @emerson for reporting the issue!

- Attempting to migrate a QCOW2 disk to an SR that supports QCOW2 but prefers VHD will now automatically create a QCOW2 disk at the destination if the disk is bigger than 2 TiB. Previously, it was documented as a known issue that it would attempt to create a VHD and fail.

- Another known issue fixed: attempting to resize a QCOW2 VDI with a snapshot on an LVM-based SR no longer fails.

xapi:

- Prevent long migrations from failing due to an expiring XAPI session.

- More security fixes related to XSA-489.

Versions:

- blktap: 3.55.5-6.6.xcpng8.3 -> 3.55.5-6.7.xcpng8.3

- sm: 3.2.12-17.6.xcpng8.3 -> 3.2.12-17.7.xcpng8.3

- xapi: 26.1.3-1.9.xcpng8.3 -> 26.1.3-1.10.xcpng8.3

Test on XCP-ng 8.3

If you are using XOSTOR, please refer to our documentation for the update method.

    yum clean metadata --enablerepo=xcp-ng-testing,xcp-ng-candidates
    yum update --enablerepo=xcp-ng-testing,xcp-ng-candidates
    reboot

The usual update rules apply: pool coordinator first, etc.
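After the reboot, it can help to confirm that a host really picked up the candidate builds. The following is only a sketch, not an official procedure: the expected strings are copied from the Versions list above, `check_versions` is a hypothetical helper name, and it assumes the xapi update shows up under the `xapi-core` package.

```shell
#!/bin/sh
# Expected name-version-release strings, copied from the "Versions" list.
# Assumption: the xapi update is visible through the xapi-core package.
EXPECTED="blktap-3.55.5-6.7.xcpng8.3
sm-3.2.12-17.7.xcpng8.3
xapi-core-26.1.3-1.10.xcpng8.3"

# check_versions (hypothetical helper) reads "name-version-release" lines on
# stdin, one per package, and prints OK or MISSING for each expected entry.
# It returns non-zero if at least one expected entry is absent.
check_versions() {
    installed=$(cat)
    rc=0
    for want in $EXPECTED; do
        if printf '%s\n' "$installed" | grep -qxF "$want"; then
            echo "OK      $want"
        else
            echo "MISSING $want"
            rc=1
        fi
    done
    return $rc
}

# On a host, the input could come from rpm, for example:
#   rpm -q --qf '%{NAME}-%{VERSION}-%{RELEASE}\n' blktap sm xapi-core | check_versions
```

Running this on every pool member after the update gives a quick view of hosts that were missed.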

What to test

Anything related to storage, be it with VHD or QCOW2 disks.

Test window before official release of the updates

~3 days

-

@stormi Updates deployed, no issues so far.

-

I could reproduce a bug: cloning a VDI (fast and slow) from SR-1 to SR-2 does not work.

I have a pool running the current QCOW2 packages (xcp-ng-testing, xcp-ng-candidates), a template (VM), and two SRs attached via FC HBA. Everything else works fine.

    id "0moocnjj4-jmge69z174"
    properties
      method "vm.create"
      params
        acls []
        clone false
        existingDisks
          0
            name_label "xcpwin-vm-200.lab.testing.company.net C"
            name_description "Created by XO"
            size 64424509440
            $SR "e69a6183-dc17-5b0e-0794-50ee8f57c521"
        name_label "xcpwin-vm-200.lab.testing.company.net"
        template "671e59e0-f59d-fd96-7426-9886ad9b8ee3"
        VDIs []
        VIFs
          0
            network "261a821c-a373-ba36-2c44-487ed0e1a202"
            allowedIpv4Addresses []
            allowedIpv6Addresses []
        CPUs 4
        cpusMax 4
        cpuWeight null
        cpuCap null
        name_description "Test VM"
        memory 4294967296
        bootAfterCreate true
        copyHostBiosStrings false
        createVtpm false
        destroyCloudConfigVdiAfterBoot false
        secureBoot false
        share false
        coreOs false
        tags []
        hvmBootFirmware "uefi"
      name "API call: vm.create"
      userId "5f5f1e9c-c928-4898-ab88-62b201698476"
      type "api.call"
    start 1777726828192
    status "failure"
    updatedAt 1777727473136
    end 1777727473136
    result
      code "INTERNAL_ERROR"
      params
        0 "Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))])"
      task
        uuid "cc16d4ba-ce10-58ef-10c0-808872ec30ca"
        name_label "Async.VDI.pool_migrate"
        name_description ""
        allowed_operations []
        current_operations {}
        created "20260502T13:08:44Z"
        finished "20260502T13:10:25Z"
        status "failure"
        resident_on "OpaqueRef:6cac1d11-e91e-5288-8ec8-3d84e6288952"
        progress 1
        type "<none/>"
        result ""
        error_info
          0 "INTERNAL_ERROR"
          1 "Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))])"
        other_config {}
        subtask_of "OpaqueRef:NULL"
        subtasks []
        backtrace "(((process xapi)(filename ocaml/xapi/xapi_vm_migrate.ml)(line 1815))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/xapi_vm_migrate.ml)(line 2105))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/xapi_vm_migrate.ml)(line 2095))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 141))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/rbac.ml)(line 228))((process xapi)(filename ocaml/xapi/rbac.ml)(line 238))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 78)))"
      message "INTERNAL_ERROR(Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))]))"
      name "XapiError"
      stack "XapiError: INTERNAL_ERROR(Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))]))\n at Function.wrap (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_XapiError.mjs:16:12)\n at default (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_getTaskResult.mjs:13:29)\n at Xapi._addRecordToCache (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1078:24)\n at file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1112:14\n at Array.forEach (<anonymous>)\n at Xapi._processEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1102:12)\n at Xapi._watchEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1275:14)"

-

@IgorGlock Thanks for the report. Can you elaborate on the exact steps required to reproduce this? Also, are you running XOA or XO from sources? Which version or commit?

-

@Danp

I have an XOA appliance with a trial license for our lab.

Release channel: latest

Current version: 6.4.0

I removed the two SRs and recreated them, using QCOW2 again. VM migration from SR-1 to SR-2 failed too.

    id "0mopksrzz-rnu1secaodl"
    properties
      method "vm.migrate"
      params
        vm "64b89180-1f63-3625-4f92-efb5cc15b9d6"
        sr "81a41e32-49e5-0f5f-7692-2fc49ec875a2"
        targetHost "253ea664-09bf-4212-a3b8-f1588406190e"
      name "API call: vm.migrate"
      userId "5f5f1e9c-c928-4898-ab88-62b201698476"
      type "api.call"
    start 1777800975551
    status "failure"
    updatedAt 1777801141331
    infos
      0
        message "migration with storage motion"
    warnings
      0
        data
          host "253ea664-09bf-4212-a3b8-f1588406190e"
          sr "81a41e32-49e5-0f5f-7692-2fc49ec875a2"
        message "host have no PBD in the SR"
      1
        data
          host "253ea664-09bf-4212-a3b8-f1588406190e"
          sr "81a41e32-49e5-0f5f-7692-2fc49ec875a2"
        message "host have no PBD in the SR"
    end 1777801141331
    result
      code "INTERNAL_ERROR"
      params
        0 "Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))])"
      task
        uuid "af35352c-cdbc-0567-8ccc-167d636bff11"
        name_label "Async.VM.migrate_send"
        name_description ""
        allowed_operations []
        current_operations {}
        created "20260503T09:36:15Z"
        finished "20260503T09:39:01Z"
        status "failure"
        resident_on "OpaqueRef:0d7b2e70-c99b-cf1c-7a54-c98d1916af44"
        progress 1
        type "<none/>"
        result ""
        error_info
          0 "INTERNAL_ERROR"
          1 "Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))])"
        other_config {}
        subtask_of "OpaqueRef:NULL"
        subtasks []
        backtrace "(((process xapi)(filename ocaml/xapi/xapi_vm_migrate.ml)(line 1815))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 141))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 24))((process xapi)(filename ocaml/libs/xapi-stdext/lib/xapi-stdext-pervasives/pervasiveext.ml)(line 39))((process xapi)(filename ocaml/xapi/message_forwarding.ml)(line 2650))((process xapi)(filename ocaml/xapi/rbac.ml)(line 228))((process xapi)(filename ocaml/xapi/rbac.ml)(line 238))((process xapi)(filename ocaml/xapi/server_helpers.ml)(line 78)))"
      message "INTERNAL_ERROR(Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))]))"
      name "XapiError"
      stack "XapiError: INTERNAL_ERROR(Storage_error ([S(Internal_error);S(Storage_error ([S(Migration_preparation_failure);S(Storage_error ([S(Backend_error);[S(SR_BACKEND_FAILURE_1200);[S();S(list index out of range);S()]]]))]))]))\n at Function.wrap (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_XapiError.mjs:16:12)\n at default (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/_getTaskResult.mjs:13:29)\n at Xapi._addRecordToCache (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1078:24)\n at file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1112:14\n at Array.forEach (<anonymous>)\n at Xapi._processEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1102:12)\n at Xapi._watchEvents (file:///usr/local/lib/node_modules/xo-server/node_modules/xen-api/index.mjs:1275:14)"

-

@stormi Installed the updates. Did some backups and VM migrations between various storages (VHD and QCOW2); looks good so far.

-

@IgorGlock Are you able to open the support tunnel for us to investigate? You can either post the tunnel ID here or open a support ticket.

-

@IgorGlock Hello,

Could you share the exception that should be in /var/log/SMlog?

-

Hello,

I deleted the entire XOA, downloaded a fresh image, and recreated the configuration and a blank SR.

The issue is gone. I will test some VDI migrations this week.

There is some performance impact, along with timeouts, when creating 100-200 VMs on a single 10 TB SR. I use an xo-cli vm.create script. See you later.

-

Congrats to everyone on those. It was a huge amount of work and testing, with great community feedback as usual!

-

Hello, I just wanted to let you know that I got this error message on one of my servers (the others worked fine) when installing the last 25 updates manually via "yum update":

    [10:39 xen1 ~]# yum update
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Resolving Dependencies
    --> Running transaction check
    ---> Package blktap.x86_64 0:3.55.5-6.3.xcpng8.3 will be updated
    ---> Package blktap.x86_64 0:3.55.5-6.7.xcpng8.3 will be an update
    ---> Package forkexecd.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package forkexecd.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package kernel.x86_64 0:4.19.19-8.0.46.1.xcpng8.3 will be updated
    ---> Package kernel.x86_64 0:4.19.19-8.0.46.2.xcpng8.3 will be an update
    ---> Package message-switch.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package message-switch.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package qcow-stream-tool.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package qcow-stream-tool.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package rrdd-plugins.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package rrdd-plugins.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package sm.x86_64 0:3.2.12-17.1.xcpng8.3 will be updated
    ---> Package sm.x86_64 0:3.2.12-17.7.xcpng8.3 will be an update
    ---> Package sm-cli.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package sm-cli.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package sm-fairlock.x86_64 0:3.2.12-17.1.xcpng8.3 will be updated
    ---> Package sm-fairlock.x86_64 0:3.2.12-17.7.xcpng8.3 will be an update
    ---> Package squeezed.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package squeezed.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package varstored-guard.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package varstored-guard.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package vhd-tool.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package vhd-tool.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package wsproxy.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package wsproxy.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-core.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-core.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-nbd.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-nbd.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-rrd2csv.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-rrd2csv.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-storage-script.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-storage-script.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-tests.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-tests.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xapi-xe.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xapi-xe.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xcp-networkd.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xcp-networkd.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xcp-ng-pv-tools.noarch 0:8.3-16.xcpng8.3 will be updated
    ---> Package xcp-ng-pv-tools.noarch 0:8.3-17.xcpng8.3 will be an update
    ---> Package xcp-rrdd.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xcp-rrdd.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xenopsd.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xenopsd.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xenopsd-cli.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xenopsd-cli.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package xenopsd-xc.x86_64 0:26.1.3-1.9.xcpng8.3 will be updated
    ---> Package xenopsd-xc.x86_64 0:26.1.3-1.10.xcpng8.3 will be an update
    ---> Package zabbix-agent2.x86_64 0:7.0.25-release1.el7 will be updated
    ---> Package zabbix-agent2.x86_64 0:7.0.26-release1.el7 will be an update
    ---> Package zabbix-agent2-plugin-ember-plus.x86_64 0:7.0.25-release1.el7 will be updated
    ---> Package zabbix-agent2-plugin-ember-plus.x86_64 0:7.0.26-release1.el7 will be an update
    ---> Package zabbix-agent2-plugin-mongodb.x86_64 0:7.0.25-release1.el7 will be updated
    ---> Package zabbix-agent2-plugin-mongodb.x86_64 0:7.0.26-release1.el7 will be an update
    ---> Package zabbix-agent2-plugin-mssql.x86_64 0:7.0.25-release1.el7 will be updated
    ---> Package zabbix-agent2-plugin-mssql.x86_64 0:7.0.26-release1.el7 will be an update
    ---> Package zabbix-agent2-plugin-postgresql.x86_64 0:7.0.25-release1.el7 will be updated
    ---> Package zabbix-agent2-plugin-postgresql.x86_64 0:7.0.26-release1.el7 will be an update
    --> Finished Dependency Resolution

    Dependencies Resolved

    ==========================================================================================
     Package                          Arch    Version                    Repository      Size
    ==========================================================================================
    Updating:
     blktap                           x86_64  3.55.5-6.7.xcpng8.3        xcp-ng-updates  772 k
     forkexecd                        x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  2.5 M
     kernel                           x86_64  4.19.19-8.0.46.2.xcpng8.3  xcp-ng-updates  29 M
     message-switch                   x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  4.6 M
     qcow-stream-tool                 x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  1.4 M
     rrdd-plugins                     x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  9.8 M
     sm                               x86_64  3.2.12-17.7.xcpng8.3       xcp-ng-updates  391 k
     sm-cli                           x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  4.8 M
     sm-fairlock                      x86_64  3.2.12-17.7.xcpng8.3       xcp-ng-updates  52 k
     squeezed                         x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  1.9 M
     varstored-guard                  x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  4.9 M
     vhd-tool                         x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  4.9 M
     wsproxy                          x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  1.1 M
     xapi-core                        x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  30 M
     xapi-nbd                         x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  3.1 M
     xapi-rrd2csv                     x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  3.0 M
     xapi-storage-script              x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  2.5 M
     xapi-tests                       x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  5.0 M
     xapi-xe                          x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  1.5 M
     xcp-networkd                     x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  4.8 M
     xcp-ng-pv-tools                  noarch  8.3-17.xcpng8.3            xcp-ng-updates  16 M
     xcp-rrdd                         x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  3.6 M
     xenopsd                          x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  1.4 M
     xenopsd-cli                      x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  2.0 M
     xenopsd-xc                       x86_64  26.1.3-1.10.xcpng8.3       xcp-ng-updates  5.3 M
     zabbix-agent2                    x86_64  7.0.26-release1.el7        zabbix          6.6 M
     zabbix-agent2-plugin-ember-plus  x86_64  7.0.26-release1.el7        zabbix          1.6 M
     zabbix-agent2-plugin-mongodb     x86_64  7.0.26-release1.el7        zabbix          3.8 M
     zabbix-agent2-plugin-mssql       x86_64  7.0.26-release1.el7        zabbix          2.7 M
     zabbix-agent2-plugin-postgresql  x86_64  7.0.26-release1.el7        zabbix          3.1 M

    Transaction Summary
    ==========================================================================================
    Upgrade  30 Packages

    Total size: 162 M
    Is this ok [y/d/N]: y
    Downloading packages:
    Running transaction check
    Running transaction test

    Transaction check error:
      file /usr/bin/qemu-img from install of blktap-3.55.5-6.7.xcpng8.3.x86_64 conflicts with file from package qemu-img-10:1.5.3-175.el7_9.6.x86_64

    Error Summary
    -------------

    [10:39 xen1 ~]#

As a consequence, the update simply seems to fail. I am aware that the manually installed zabbix packages are not "official" packages. However, from my current understanding, the error does not look zabbix-related. I am puzzled, since I have another, exactly identical server which updated fine. If you need any further information, just let me know.

-

Sorry about this. I think that when migrating PV VMs from a previous system I had issues and "needed" to install "qemu-img", and I did not remember doing so. Removing it solved the problem, so it was all my fault. Sorry about this message!
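For anyone who hits a similar "conflicts with file from package" error, the culprit can be read straight out of the yum output. The following is only a rough sketch: `conflicting_files` and `conflicting_packages` are hypothetical helper names, and the `sed` patterns assume the error line has the same wording as in the output above.

```shell
#!/bin/sh
# conflicting_files (hypothetical helper): read yum output on stdin and print
# each file path named in a "conflicts with file from package" line.
conflicting_files() {
    sed -n 's|.*file \(/[^ ]*\) from install of .* conflicts with file from package .*|\1|p' | sort -u
}

# conflicting_packages (hypothetical helper): read yum output on stdin and
# print each already-installed package that owns a conflicting file.
conflicting_packages() {
    sed -n 's|.*conflicts with file from package \([^ ]*\).*|\1|p' | sort -u
}

# On the affected host, the owner of the file can also be asked directly:
#   rpm -qf /usr/bin/qemu-img
```

Fed with the output above, the helpers point at /usr/bin/qemu-img and the manually installed qemu-img package, which is the one that had to be removed here.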

-

Ran the 2 updates released today and...

Back to only showing one VM in Backup/Restore, as it did a month or two ago.

Ran the replication job and all VMs showed up in Backup/Restore again. (XO5)

-

Does anyone know if applying these two patches requires rebooting?

-

@marcoi According to the Vates blog post, no.

I did it anyway.

-

Indeed, no reboot required if those are the only patches that you are applying, as indicated in the blog post.

-

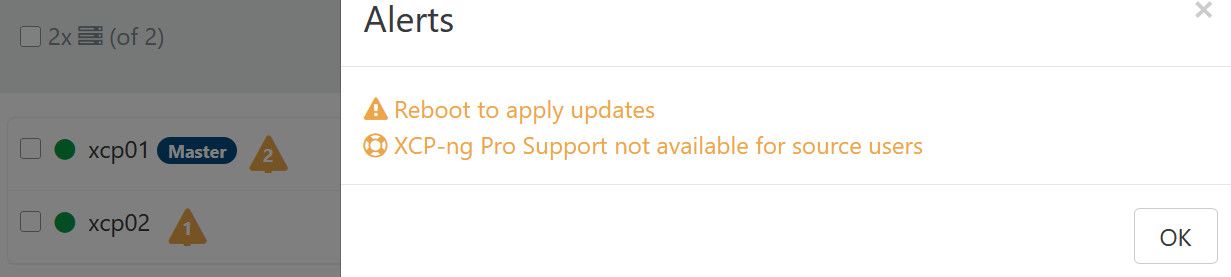

@stormi I'm getting a prompt to reboot after applying the updates to the master.
