@tsukraw said:

Hey guys,

I'm looking for some help understanding auto power on, as the documentation looks like it may need refreshing.

https://docs.xcp-ng.org/guides/autostart-vm/

In the Xen Orchestra section, the guide does not mention the pool level; only the CLI section does.

Just looking for some clarification.

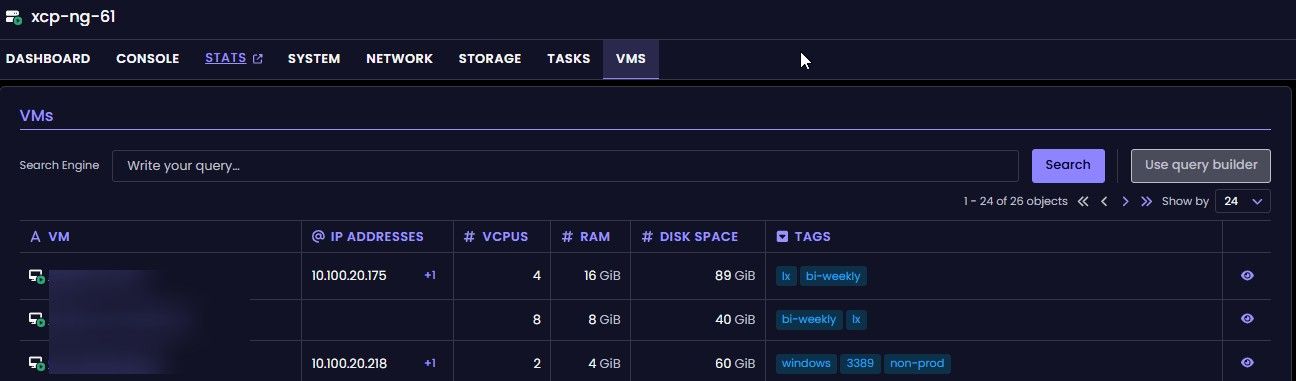

For auto power on to work, we need to enable it at the pool level and then per VM, correct?

If auto power on is enabled at the VM level but disabled at the pool level, the VM-level setting is ignored, correct?

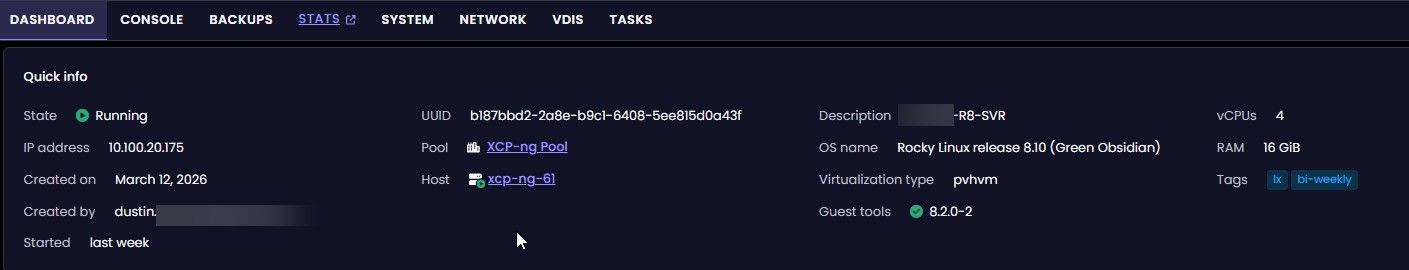

What is actually triggering the power on?

Is it XOA or the host itself?

I am assuming the host itself. To clarify: if the XOA VM was among the systems that went offline, should we expect it to power back on automatically if it is set to auto power on?

What determines the start order for auto power on when you have multiple VMs on a host?

Thank you!!

Yes, it needs to be enabled at both the pool level and the VM level. I once tried enabling it only at the VM level and the VM didn't auto power on, because it wasn't enabled at the pool level. It started auto powering on once it was enabled at both levels.

The toggle sets a XAPI value, so the power-on happens at the Xen level (the virtualisation server). Xen Orchestra just exposes this value through a convenient UI toggle.
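If it helps to see the two levels side by side, here is a rough Python sketch that only builds the `xe` commands for the pool and the VM (it does not run them) and encodes the "both must be on" behaviour I described. The function names and the placeholder UUIDs are made up for illustration, and the `other-config:auto_poweron` key is what I understand the CLI section of the linked guide to use, so please check it there before running anything.

```python
import shlex
from typing import List

# Assumption: this is the other-config key used by the CLI section of the guide.
AUTO_POWERON_KEY = "other-config:auto_poweron"


def pool_autostart_cmd(pool_uuid: str, enabled: bool = True) -> List[str]:
    """Build (not run) the xe command that sets auto power on for a pool."""
    value = "true" if enabled else "false"
    return ["xe", "pool-param-set", f"uuid={pool_uuid}", f"{AUTO_POWERON_KEY}={value}"]


def vm_autostart_cmd(vm_uuid: str, enabled: bool = True) -> List[str]:
    """Build (not run) the xe command that sets auto power on for one VM."""
    value = "true" if enabled else "false"
    return ["xe", "vm-param-set", f"uuid={vm_uuid}", f"{AUTO_POWERON_KEY}={value}"]


def expected_to_autostart(pool_enabled: bool, vm_enabled: bool) -> bool:
    """Rule of thumb from my own test: the VM starts only if both flags are on."""
    return pool_enabled and vm_enabled


if __name__ == "__main__":
    # POOL_UUID and VM_UUID are placeholders; substitute your real UUIDs.
    for cmd in (pool_autostart_cmd("POOL_UUID"), vm_autostart_cmd("VM_UUID")):
        print(shlex.join(cmd))
```

Running the printed commands on a host (with real UUIDs) should be equivalent to flipping both toggles in Xen Orchestra, assuming the key above is the right one.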