Posts

-

RE: Create a new SR: qcow2 failure

Also, do you have a reverse proxy / HTTP proxy in front of XO? It can block larger uploads by default.

-

RE: Create a new SR: qcow2 failure

@nasheayahu qcow2 disk import has been merged recently (https://github.com/vatesfr/xen-orchestra/pull/9817), but there is a caveat:

The clusters in the disk must be in order for the import to work. This is generally the case for a disk exported by any system, but not for a disk used in production that you want to import.

Where does this disk come from? If you have access to your SR from the outside, you can also put the qcow2 file there directly, by renaming it to a valid UUID (I think uuidgen can do this) and placing it directly in the SR/uuid repository.
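A minimal sketch of the renaming step, in case uuidgen is not at hand. The SR directory and the source file name are placeholders, and keeping the `.qcow2` extension on the renamed file is an assumption, so check how the existing disks in your SR are named first:

```python
import uuid
from pathlib import Path


def uuid_target_path(sr_dir: str, source_file: str) -> Path:
    """Build a destination path named after a fresh random UUID.

    Assumption: the renamed file keeps the extension of the source file.
    """
    new_name = f"{uuid.uuid4()}{Path(source_file).suffix}"
    return Path(sr_dir) / new_name


# Placeholder paths, for illustration only.
target = uuid_target_path("/path/to/sr", "exported-disk.qcow2")
print(target)
```

This only computes the new name; copying the file into place is left to you.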

-

RE: Too many snapshots

@poddingue this is something that was hidden with the previous system (same disk chains, but not shown as snapshots).

@julienxovates are you OK with excluding the VMs tagged as replication from this check?

-

RE: Slow Backups | XOA Performance Test – Upgrading from 2 vCPU to 4 vCPU / 8GB RAM

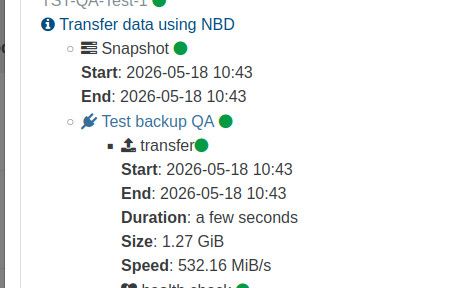

The last rewrite of the stream processing (spring 2025) focused on stability and memory footprint, and, on a standard CPU, it tops out at around 300MB/s per backup job. Your benchmarks are very interesting, and they confirm most of it.

This limit was not really an issue since, in most cases, the XAPI was limiting transfers to around 100MB/s per disk, but it will become a more and more visible limit.
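To make the interaction between the two limits concrete, here is a deliberately simplified model. It assumes that the disks of a job are transferred in parallel and that their throughput adds up linearly until the per-job ceiling is reached, which real backups will only approximate. The function name and defaults are illustrative; the two figures are the rough numbers quoted above.

```python
def effective_rate_mb_s(disks: int, per_disk_mb_s: float = 100.0,
                        job_cap_mb_s: float = 300.0) -> float:
    """Estimated job throughput: the sum of per-disk rates, capped per job."""
    return min(disks * per_disk_mb_s, job_cap_mb_s)


# With the per-disk limit at 100MB/s, the job ceiling only matters
# once three or more disks are transferred at the same time.
for disks in (1, 2, 4):
    print(disks, effective_rate_mb_s(disks))

# If the per-disk limit rises, a single disk already hits the job ceiling.
print(effective_rate_mb_s(1, per_disk_mb_s=400.0))
```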

Note that master has some fixes on the memory usage (not related to backups).

That's why we have started an internal task force focused on performance, with all the teams from the kernel to the backups, including storage, network and XAPI.

If I can brag a little :

i9, NVMe disk, backup to an NVMe disk in passthrough; XOA and the VM are on the same host, so it's quite far from real-world conditions, but it shows where the limit is.

-

RE: V2V Migration | Mixed Volumes VHD and QCOW

@tsukraw I am taking the ticket and will keep you informed as soon as possible

-

RE: Xen Orchestra has stopped updating commits

@ducatijosh did you do a yarn build ?

-

RE: "app.getLicenses is not a function" when I try to add a node to my pool

@bvivi57 XO does check the license if you have XOSTOR installed, since it needs some magic to work at a lot of steps (like the rolling pool updates).

this is the expected behavior with a manually installed xostor ( cc @julienxovates for information )

-

RE: XOA - Memory Usage

@acebmxer not yet

(we are a little underwater with the release patch, but we will do it)

-

RE: REST - Reversed query?

@DustyArmstrong for now the REST API doesn't support server-side sorting (we don't have the memory budget to do this)

it will be improved in the future
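In the meantime, sorting (or reversing) can be done on the client once the records have been fetched. A minimal sketch, assuming the response has already been decoded into a list of dictionaries; the field names `name` and `created` are made up for the example and are not taken from the REST API:

```python
from typing import Any


def sort_records(records: list[dict[str, Any]], key: str,
                 reverse: bool = False) -> list[dict[str, Any]]:
    """Return a new list sorted by `key`; records lacking the key go last."""
    present = [r for r in records if key in r]
    missing = [r for r in records if key not in r]
    return sorted(present, key=lambda r: r[key], reverse=reverse) + missing


# Hypothetical records, standing in for a decoded API response.
records = [
    {"name": "web", "created": 1700000300},
    {"name": "db", "created": 1700000100},
    {"name": "template"},
]
newest_first = sort_records(records, "created", reverse=True)
print([r["name"] for r in newest_first])
```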

-

RE: Backup Job Fails with "timeout while getting the remote" - but Remote is Reachable

Is it possible that your NFS needs some specific options (like the NFS version)?

-

RE: XOA - Memory Usage

I didn't set up SSH on the work XOA; I just set the password, but it needs a reboot for it to work. The tunnel is still open if you don't mind doing it; otherwise I will need to reboot XOA to get in myself.

I can do it on Monday

-

RE: XOA - Memory Usage

@acebmxer

If you are OK with it, you can do a heap memory export (note that you will probably have to restart the XOA afterwards to free the memory). If you can do it, we will collect the memory file on Monday (or when you are ready) and see whether this is a new cause or the return of an old one.

-

RE: Error while scanning disk

@poddingue there is a cache system that keeps the disk open for a few minutes. We will rework it in the near future; it should improve the situation with these errors (at the cost of being a little slower).

-

RE: Xen Orchestra has stopped updating commits

ping @julienxovates I think we can change this behavior (accepting an xo-server start even if a zombie process is still running)

-

RE: CR backup with retention > 4

florent said:

@McHenry yes

Your replicated VM may be a little slower, and removing these snapshots can lead to a big and slow coalesce, but these tradeoffs can be worth it for replication.

We will try to clarify it for the 6.5 version (end of May).

ping @julienxovates

-

RE: Xen Orchestra has stopped updating commits

@ducatijosh my bet is that xo-server was still not completely stopped, and thus was serving the old code. As strange as it seems, if you start a second xo-server, it won't fail, but only show a warning in the logs.

A full reboot really does restart xo-server.
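Before resorting to a reboot, you can check whether an old process is still around. A minimal sketch for Linux that scans `/proc` for command lines containing a given string; matching on the substring `xo-server` is an assumption about how the process was started, so adjust it to your install:

```python
from pathlib import Path


def matching_processes(needle: str, proc_root: str = "/proc") -> list[tuple[int, str]]:
    """List (pid, command line) for processes whose command line contains `needle`."""
    matches: list[tuple[int, str]] = []
    root = Path(proc_root)
    if not root.is_dir():
        return matches  # no /proc available (not Linux)
    for entry in root.iterdir():
        if not entry.name.isdigit():
            continue  # only numeric entries are processes
        try:
            raw = (entry / "cmdline").read_bytes()
        except OSError:
            continue  # process exited meanwhile, or is not readable
        # Arguments in /proc/<pid>/cmdline are separated by NUL bytes.
        cmdline = raw.replace(b"\0", b" ").decode(errors="replace").strip()
        if needle in cmdline:
            matches.append((int(entry.name), cmdline))
    return sorted(matches)


for pid, cmdline in matching_processes("xo-server"):
    print(pid, cmdline)
```

More than one match after a stop/start would suggest that the old process is still running.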

-

RE: CR backup with retention > 4

@McHenry yes

Your replicated VM may be a little slower, and removing these snapshots can lead to a big and slow coalesce, but these tradeoffs can be worth it for replication.

We will try to clarify it for the 6.5 version (end of May).

-

RE: CR backup with retention > 4

Too many snapshots

The warning limit is 3.

This makes sense for a VM used for daily production, but maybe we should not apply the same limit to replicated VMs.