Hi,

I installed the update candidates within my test environment.

Updates installed fine, and after the reboot everything looks good so far.

No apparent issues can be seen.

@pierrebrunet Thanks for fixing this critical issue!

Do you have any plans regarding internal automated tests that would ensure that critical issues like these (deletion / corruption of backups etc.) do not get shipped?

Best regards

Yes, we have been using them in production for more than a year without any issues.

One caveat: they only work in environments where the DMC (Dynamic Memory Control) feature of XCP-ng is not being used.

Reason: the Rust-based Xen guest agent currently has no ballooning driver implemented, whereas the Citrix Xen guest agent does, as confirmed by an XCP-ng developer here: https://xcp-ng.org/forum/topic/11955/memory-ballooning-dmc-broken-since-xcp-ng-8.3-january-2026-patches/13?_=1779445511424

@poddingue Hello, while I, as a user, appreciate that you coordinate these bug reports, I do wonder: is the GitHub issues section of Xen Orchestra really the preferred way of reporting issues?

This is also valuable information for me personally.

In the past I felt that issues in the XO GitHub repository often did not get the same level of attention from the developers as issues reported here on the forums.

I opened some XO GitHub issues in the past as well and noticed that some never got any response or update.

Reporting these things here on the forums mostly felt more "productive".

Looking forward to getting some insights in this regard.

Thanks and best regards

@manilx No, I did not. I replied to this thread in general, not to your post. Looking at the answers to your specific post, where you reported the RPU issue, I can only see one reply, written by Stormi (asking for logs).

Going forward: you can identify replies to your posts by either looking at posts that quoted your message or tagged your username using the @ character.

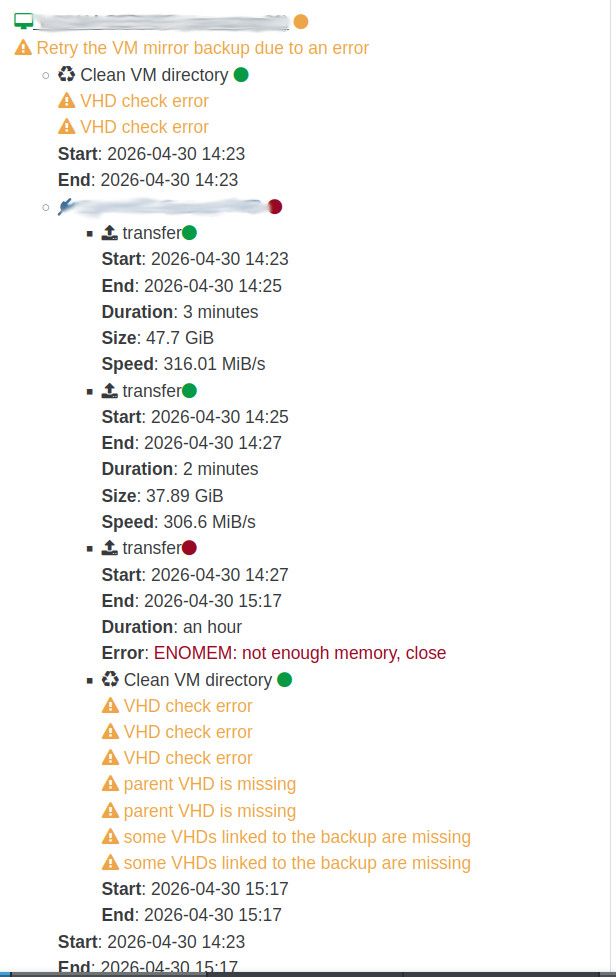

@florent Unfortunately the Xen Orchestra RAM usage issue is back on my end.

During delta backups everything looked quite good, but yesterday full backups were due according to our schedule.

The initial full backup succeeded, but the RAM issue was triggered during the mirror job, which a sequence starts once the delta job has finished.

We are using SMB backup remotes, by the way.

The XO VM has 10GB of RAM in total, plenty of which is free during backups. Still, the XO backup failed with ENOMEM.

I can see in dmesg output on the Xen Orchestra VM:

[71584.204045] kworker/u37:2: page allocation failure: order:4, mode:0x40d40(GFP_NOFS|__GFP_ZERO|__GFP_COMP), nodemask=(null),cpuset=/,mems_allowed=0

[71584.204069] CPU: 4 UID: 0 PID: 75928 Comm: kworker/u37:2 Not tainted 6.18.25 #1 PREEMPT(lazy)

[71584.204075] Hardware name: Xen HVM domU, BIOS 4.17 04/21/2026

[71584.204077] Workqueue: writeback wb_workfn (flush-cifs-2)

[71584.204087] Call Trace:

[71584.204091] <TASK>

[71584.204096] dump_stack_lvl+0x61/0x80

[71584.204103] warn_alloc+0x151/0x1c0

[71584.204109] ? psi_memstall_leave+0x83/0xa0

[71584.204116] __alloc_pages_slowpath+0xdf2/0xe90

[71584.204124] __alloc_frozen_pages_noprof+0x2bb/0x350

[71584.204129] alloc_pages_mpol+0x107/0x190

[71584.204135] ___kmalloc_large_node+0x46/0x100

[71584.204140] __kmalloc_large_node_noprof+0x1d/0xd0

[71584.204144] __kmalloc_noprof+0x358/0x5e0

[71584.204150] ? crypt_message+0x50b/0x940 [cifs]

[71584.204259] crypt_message+0x50b/0x940 [cifs]

[71584.204365] smb3_init_transform_rq+0x278/0x310 [cifs]

[71584.204458] smb_send_rqst+0x120/0x1c0 [cifs]

[71584.204551] cifs_call_async+0x2b8/0x370 [cifs]

[71584.204632] ? __pfx_smb2_writev_callback+0x10/0x10 [cifs]

[71584.204714] smb2_async_writev+0x405/0x600 [cifs]

[71584.204800] netfs_advance_write+0xcd/0xf0 [netfs]

[71584.204827] netfs_write_folio+0x509/0x7c0 [netfs]

[71584.204850] netfs_writepages+0x28f/0x440 [netfs]

[71584.204872] do_writepages+0xdc/0x1f0

[71584.204878] ? update_curr+0x2f/0x180

[71584.204884] __writeback_single_inode+0x3e/0x320

[71584.204889] writeback_sb_inodes+0x274/0x4a0

[71584.204901] __writeback_inodes_wb+0x9a/0xf0

[71584.204906] wb_writeback+0x15b/0x300

[71584.204913] wb_workfn+0x3af/0x590

[71584.204918] process_scheduled_works+0x1d7/0x420

[71584.204923] worker_thread+0x21a/0x2f0

[71584.204927] ? __pfx_worker_thread+0x10/0x10

[71584.204930] kthread+0x201/0x260

[71584.204937] ? __pfx_kthread+0x10/0x10

[71584.204941] ret_from_fork+0x107/0x210

[71584.204946] ? __pfx_kthread+0x10/0x10

[71584.204950] ret_from_fork_asm+0x1a/0x30

[71584.204956] </TASK>

[71584.205026] Mem-Info:

[71584.205031] active_anon:288751 inactive_anon:14365 isolated_anon:0

active_file:1227362 inactive_file:783958 isolated_file:0

unevictable:768 dirty:32364 writeback:1024

slab_reclaimable:113003 slab_unreclaimable:26821

mapped:47649 shmem:936 pagetables:6024

sec_pagetables:0 bounce:0

kernel_misc_reclaimable:0

free:40796 free_pcp:5103 free_cma:0

[71584.205041] Node 0 active_anon:1155004kB inactive_anon:57460kB active_file:4909448kB inactive_file:3135832kB unevictable:3072kB isolated(anon):0kB isolated(file):0kB mapped:190596kB dirty:129456kB writeback:4096kB shmem:3744kB shmem_thp:0kB shmem_pmdmapped:0kB anon_thp:522240kB kernel_stack:7600kB pagetables:24096kB sec_pagetables:0kB all_unreclaimable? no Balloon:0kB

[71584.205050] Node 0 DMA free:13312kB boost:0kB min:100kB low:124kB high:148kB reserved_highatomic:0KB free_highatomic:0KB active_anon:0kB inactive_anon:0kB active_file:0kB inactive_file:0kB unevictable:0kB writepending:0kB zspages:0kB present:15996kB managed:15360kB mlocked:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB

[71584.205060] lowmem_reserve[]: 0 3720 9925 9925 9925

[71584.205068] Node 0 DMA32 free:108944kB boost:0kB min:25084kB low:31352kB high:37620kB reserved_highatomic:0KB free_highatomic:0KB active_anon:527548kB inactive_anon:0kB active_file:73972kB inactive_file:2908844kB unevictable:0kB writepending:133552kB zspages:0kB present:3915188kB managed:3809364kB mlocked:0kB bounce:0kB free_pcp:16856kB local_pcp:3944kB free_cma:0kB

[71584.205078] lowmem_reserve[]: 0 0 6205 6205 6205

[71584.205085] Node 0 Normal free:43900kB boost:0kB min:42392kB low:52988kB high:63584kB reserved_highatomic:2048KB free_highatomic:2048KB active_anon:627456kB inactive_anon:57460kB active_file:4835476kB inactive_file:226988kB unevictable:3072kB writepending:0kB zspages:0kB present:6545408kB managed:6353952kB mlocked:0kB bounce:0kB free_pcp:664kB local_pcp:248kB free_cma:0kB

[71584.205094] lowmem_reserve[]: 0 0 0 0 0

[71584.205101] Node 0 DMA: 0*4kB 0*8kB 0*16kB 0*32kB 0*64kB 0*128kB 0*256kB 0*512kB 1*1024kB (U) 2*2048kB (UM) 2*4096kB (M) = 13312kB

[71584.205120] Node 0 DMA32: 1553*4kB (UME) 1096*8kB (UME) 822*16kB (UME) 671*32kB (UM) 236*64kB (UM) 104*128kB (UM) 34*256kB (UM) 2*512kB (UM) 3*1024kB (M) 7*2048kB (M) 2*4096kB (M) = 113348kB

[71584.205194] Node 0 Normal: 71*4kB (UME) 998*8kB (UM) 1524*16kB (UE) 280*32kB (UE) 11*64kB (UE) 0*128kB 0*256kB 0*512kB 0*1024kB 1*2048kB (H) 0*4096kB = 44364kB

[71584.205216] Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=1048576kB

[71584.205219] Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=2048kB

[71584.205221] 2012251 total pagecache pages

[71584.205223] 2 pages in swap cache

[71584.205225] Free swap = 7294960kB

[71584.205226] Total swap = 7294968kB

[71584.205228] 2619148 pages RAM

[71584.205229] 0 pages HighMem/MovableOnly

[71584.205231] 74479 pages reserved

[71584.205232] 0 pages hwpoisoned

My XO from sources instance is running the 6.4.0 release commit.

Best regards

//EDIT: maybe the issue that I am experiencing from time to time is related to the SMB remote?

Maybe backups to an SMB remote use a different code path than, e.g., NFS?
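A note on reading the dump above: `order:4` means the kernel asked for 2^4 = 16 physically contiguous pages, i.e. 64 kB with 4 kB pages. The per-zone free lists in the log show how many free blocks of each size exist. Below is a minimal Python sketch that sums the free memory held in blocks large enough for a given order. The function names `parse_buddy_line` and `free_kb_at_or_above` are made up for illustration, and 4 kB pages are assumed.

```python
import re

def parse_buddy_line(line: str) -> dict[int, int]:
    """Map block size in kB to the number of free blocks, from one dmesg free-list line."""
    return {int(size): int(count) for count, size in re.findall(r"(\d+)\*(\d+)kB", line)}

def free_kb_at_or_above(blocks: dict[int, int], order: int, page_kb: int = 4) -> int:
    """Free memory (kB) held in blocks of at least 2**order contiguous pages."""
    min_block_kb = page_kb << order  # assumption: 4 kB pages unless told otherwise
    return sum(size * count for size, count in blocks.items() if size >= min_block_kb)

# "Node 0 Normal" free-list line copied from the log above
normal = ("Node 0 Normal: 71*4kB (UME) 998*8kB (UM) 1524*16kB (UE) 280*32kB (UE) "
          "11*64kB (UE) 0*128kB 0*256kB 0*512kB 0*1024kB 1*2048kB (H) 0*4096kB = 44364kB")

blocks = parse_buddy_line(normal)
total_kb = sum(size * count for size, count in blocks.items())
print(total_kb)                        # all free memory in the zone, in kB
print(free_kb_at_or_above(blocks, 4))  # part of it that sits in blocks of 64 kB or more
```

For this line the total matches the 44364kB printed by the kernel, while only 2752 kB sits in blocks of 64 kB or larger, and the single 2048 kB block carries the (H) high-atomic marker. That would be consistent with fragmentation of the Normal zone rather than a general lack of RAM, but it is only one possible reading of the log, not a confirmed diagnosis.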

@manilx It's absolutely clear to me that the method of installing updates and the changes provided by the updated packages are 2 different things.

I was not commenting on your message but rather sharing my own experience with this round of patches as this thread is generally related to XCP-ng patches.

I updated both my test and production XCP-ng environments.

No issues during updates on all 6 hosts.

I noticed the same: VMs that use UEFI take much longer to boot than VMs in BIOS mode.

I noticed that VMs that have UEFI enabled show some "installing Xen timer" and "spinlock" events in the kernel log:

Apr 27 11:35:06 xo01 kernel: Xen: using vcpuop timer interface

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 0

Apr 27 11:35:06 xo01 kernel: smpboot: CPU0: AMD EPYC 7702P 64-Core Processor (family: 0x17, model: 0x31, stepping: 0x0)

Apr 27 11:35:06 xo01 kernel: cpu 0 spinlock event irq 52

Apr 27 11:35:06 xo01 kernel: Performance Events: PMU not available due to virtualization, using software events only.

Apr 27 11:35:06 xo01 kernel: signal: max sigframe size: 1776

Apr 27 11:35:06 xo01 kernel: rcu: Hierarchical SRCU implementation.

Apr 27 11:35:06 xo01 kernel: rcu: Max phase no-delay instances is 1000.

Apr 27 11:35:06 xo01 kernel: Timer migration: 1 hierarchy levels; 8 children per group; 1 crossnode level

Apr 27 11:35:06 xo01 kernel: NMI watchdog: Perf NMI watchdog permanently disabled

Apr 27 11:35:06 xo01 kernel: smp: Bringing up secondary CPUs ...

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 1

Apr 27 11:35:06 xo01 kernel: smpboot: x86: Booting SMP configuration:

Apr 27 11:35:06 xo01 kernel: .... node #0, CPUs: #1

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 2

Apr 27 11:35:06 xo01 kernel: #2

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 3

Apr 27 11:35:06 xo01 kernel: #3

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 4

Apr 27 11:35:06 xo01 kernel: #4

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 5

Apr 27 11:35:06 xo01 kernel: #5

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 6

Apr 27 11:35:06 xo01 kernel: #6

Apr 27 11:35:06 xo01 kernel: installing Xen timer for CPU 7

Apr 27 11:35:06 xo01 kernel: #7

Apr 27 11:35:06 xo01 kernel: cpu 1 spinlock event irq 81

Apr 27 11:35:06 xo01 kernel: cpu 2 spinlock event irq 82

Apr 27 11:35:06 xo01 kernel: cpu 3 spinlock event irq 83

Apr 27 11:35:06 xo01 kernel: cpu 4 spinlock event irq 84

Apr 27 11:35:06 xo01 kernel: cpu 5 spinlock event irq 85

Apr 27 11:35:06 xo01 kernel: cpu 6 spinlock event irq 86

Apr 27 11:35:06 xo01 kernel: cpu 7 spinlock event irq 87

Those events seem to be what is slowing down boot.

I have been able to observe this behavior ever since I started using XCP-ng.

Once the VM is fully booted performance seems to be normal.

Maybe worth investigating at some point.

Best regards

I updated my test environment and performed a few tests:

All tests worked fine so far. The only thing I noticed: when converting VHD-based VMs to QCOW2 format, I was not able to storage migrate more than 2 VMs at a time. XO said something about "not enough memory". That might be because dom0 in my test environment only has 4GB of RAM, and it may not be related to the VHD to QCOW2 migration path at all. I never saw this error in my live environment, where dom0 on every node has 8GB of RAM.

Update candidate looks good so far from my point of view.

@florent Thanks!

I have not had time (yet) to look into the heap export because the weekend was in between.

I will update to current master and provide you with the heap export in the following days.

Best regards

@florent Yes I am using XO from sources:

Currently on the branch that @bastien-nollet asked me to test.

Yeah sure I can export a memory heap. Can you give me instructions for that?

Also: currently I have one backup job running. Is it better to take the memory heap snapshot while backup is running or afterwards?

Best regards

Hello @MathieuRA , @florent

Moving the discussion to this thread as it seems to be tackling the same issue: https://xcp-ng.org/forum/topic/11892/xoa-memory-usage/28?_=1776334896974

I reported my findings so far over there.

Best regards

Hello @bastien-nollet @florent

Moving the discussion from this thread (link) to this one, as it seems to be tackling the same issue.

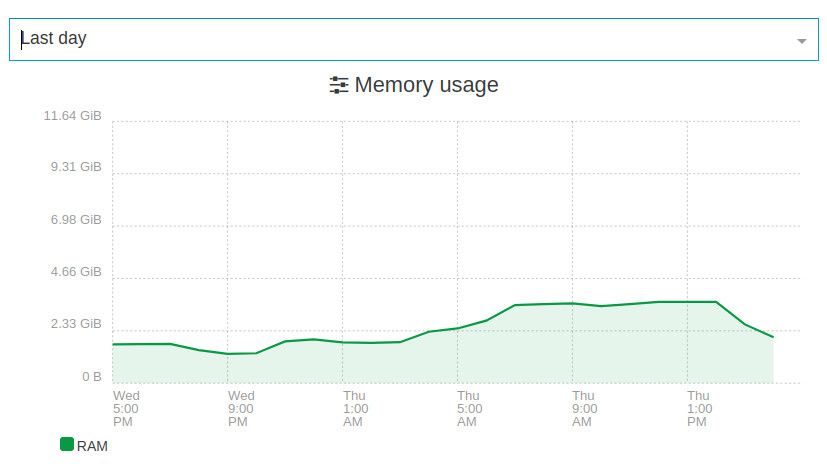

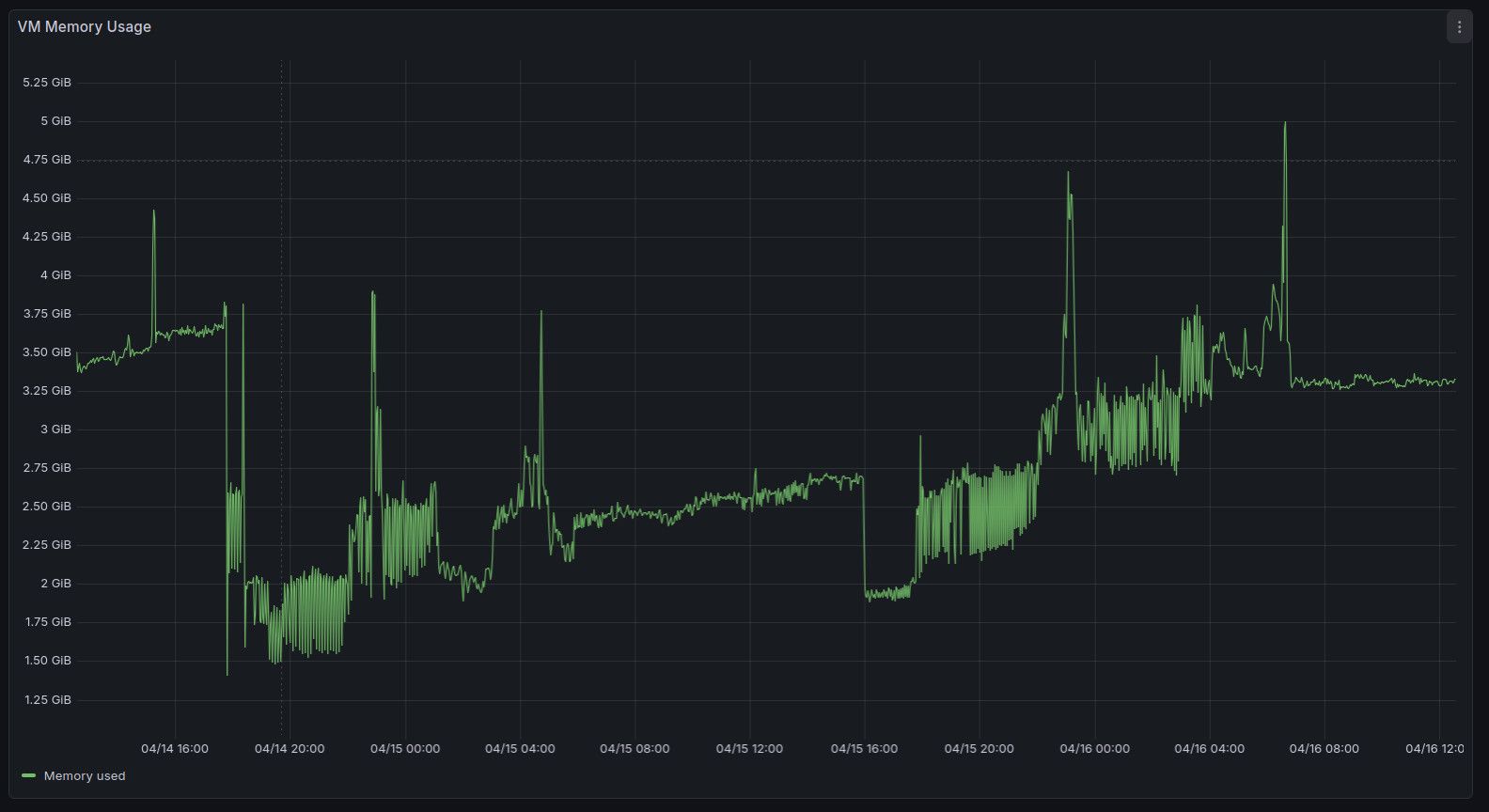

This is the RAM usage of my Xen Orchestra instance before applying "fix(rest-api): fix memory-leak on subscription"

Between 04/07 and 04/10 the system was not rebooted. As shown in the screenshot, RAM usage increased step by step with the backup runs: each run increased RAM usage, and it did not go back to where it was before the run.

This is the RAM usage after applying said fix via branch "mra-fix-rest-memory-leak".

Between 04/14 and 04/16 the VM was not restarted.

Backup jobs are scheduled to run every day.

RAM usage still increased a bit with each backup run, but less than before.

Looks like an improvement (which is great!) but maybe the issue is not fully fixed yet.

I cannot tell for sure yet, as I need to keep monitoring this a bit longer.

I will keep an eye on this and report back.
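To make "increases with each backup run" comparable between the two branches, one could record the idle RAM usage after each run and look at the difference from run to run. A minimal Python sketch follows; the function name `growth_per_run` is hypothetical and the sample values are placeholders, not real measurements.

```python
def growth_per_run(idle_usage_mb: list[float]) -> list[float]:
    """Difference in idle RAM usage between consecutive backup runs."""
    return [after - before for before, after in zip(idle_usage_mb, idle_usage_mb[1:])]

# Placeholder values only: MB of used RAM once each backup run has finished
samples = [2000.0, 2300.0, 2650.0, 2900.0]

deltas = growth_per_run(samples)
print(deltas)                     # growth contributed by each run
print(sum(deltas) / len(deltas))  # average growth per run
```

If the average growth per run stays clearly above zero over several days without a restart, that would suggest memory is still not being released after backups.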

Thanks for working on this and best regards.

I updated to branch "mra-fix-rest-memory-leak".

I will look at backup job results tomorrow and report back.

@MathieuRA Sounds promising! Once my backup job has finished, I will update my XO instance and start testing. Thanks for the heads up and for working on this.

Best regards

@simonp Hello! Sorry for the delay. I had some time to test the branch that you mentioned.

I can say that the backup job took way less time to finish after you applied those fixes.

In my case it came down from 2 days to 10 hours.

I wanted to compare all of the backup runs of the last few weeks and give you some real data, but unfortunately the backup history in my Xen Orchestra does not seem to show the runs that took place before I updated to your branch.

I still feel like backups take a little bit longer compared to before the merge-refactor, but it is only a small difference.

Anyway, it is a big improvement and the jobs finish in reasonable time again. Thank you!

@simonp I'll have to wait for the currently running backup job to finish.

I will update my XO instance afterwards and re-test.

I can probably give you feedback on this tomorrow or the day after at the latest.

Thanks and best regards

@Pilow I noticed the same thing.

After upgrading my XO instance, my backup jobs started to take way longer to finish.

I also noticed that the clean-vm / merging seems to slow down the backup jobs quite heavily.

@simonp

Maybe it would be a good idea to test these kinds of things before releasing. Maybe by adding time thresholds to the unit tests, as in "if step a or b takes longer than x seconds, it is considered failed"?
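A rough sketch of what such a threshold check could look like. It is written in Python only for illustration (XO itself is JavaScript/TypeScript), and `assert_completes_within` and `fake_merge_step` are made-up names, not part of any existing test suite.

```python
import time
from typing import Callable

def assert_completes_within(step: Callable[[], object], max_seconds: float) -> float:
    """Run `step` and fail if it takes longer than `max_seconds`; return the elapsed time."""
    start = time.perf_counter()
    step()
    elapsed = time.perf_counter() - start
    assert elapsed <= max_seconds, f"step took {elapsed:.2f}s, limit is {max_seconds:.2f}s"
    return elapsed

def fake_merge_step() -> None:
    """Stand-in for a real step such as a merge; it only sleeps briefly."""
    time.sleep(0.05)

# Passes, because the stand-in step finishes well under the 1 second limit
assert_completes_within(fake_merge_step, max_seconds=1.0)
```

Wall-clock limits like this tend to be flaky on shared CI machines, so the thresholds would probably need to be generous and aimed at catching order-of-magnitude regressions rather than small slowdowns.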