The past year 2022 was again an exciting year with numerous innovations in XCP-ng and Xen Orchestra as well as good and helpful discussions in the forum. I am excited about what the new year brings and wish everyone a good start for 2023.

Best posts made by gskger

-

A wonderful new year for everybody

-

RE: Can I just say thanks?

I second that! While not a commercial user, I really like the community and the active participation of the Vates team, helping novice homelab users with patience and commercial users with in-depth knowledge alike. Keep rocking!

-

RE: Backup reports on Microsoft Teams

You could at least send the backup reports (requires the `backup-reports` and `transport-email` plugins on XOA) to a Microsoft Teams channel of your choice (Channel - More options - Get email address).

-

RE: XCP-ng 8.2 updates announcements and testing

gduperrey Updated my two host playlab without a problem. Installing and/or updating the guest tools (now reporting `7.30.0-11`) on some mainstream Linux distros worked as well as the usual VM operations in the pool. Looks good.

-

RE: XCP-ng 8.2 updates announcements and testing

bleader Update worked well on my two node homelab and everything looks and works normally after reboot. I did some basic stuff like VM and storage migration, but nothing in depth. Let's see how things work out.

-

RE: XCP-ng 8.2 updates announcements and testing

bleader Updated my homelab without any issues

-

RE: XCP-ng 8.2 updates announcements and testing

stormi Did not even know the problem existed. Anyway, added a new (second) DNS server (9.9.9.9) to the DNS server list via `xsconsole` and rebooted the host (XCP-ng 8.2.0 fully patched).

Before update: DNS 9.9.9.9 did not persist, only the previous settings are shown

After update: DNS 9.9.9.9 did persist the reboot and is listed together with the previous settings

Deleting DNS 9.9.9.9 worked as well, so the `xsconsole` update worked for me.

-

RE: XCP-ng 8.2 updates announcements and testing

stormi Updated my two host playlab (8.2.0 fully patched, the third host currently serves as a Covid-19 homeoffice workstation) with no error. Rebooted and ran the usual tests (create, live migrate, copy and delete a Linux and a Windows 10 VM as well as create / revert snapshot (with/without RAM)). Fooled myself with a `VM_LACKS_FEATURE` error on the Windows 10 VM until I realized that I forgot to install the guest tools - I need more sleep. Will try a restore after tonight's backup.

Edit: restore from backup worked as well

-

A great and happy new year

A great and happy new year 2022 to everybody! Another year has passed and the XCP-ng community continues to rock! Stay optimistic and healthy (never hurts).

-

Nvidia P40s with XCP-ng 8.3 for inference and light training

Being curious about Large Language Models (LLMs) and machine learning, I wanted to add GPUs to my XCP-ng homelab. Finding the right GPU in June 2024 was not easy, given the numerous options and constraints, including a limited budget. My primary setup consists of two HP ProDesk 600 G6 running my 24/7 XCP-ng cluster with shared storage. My secondary setup features a set of Dell R210 II and Dell R720 servers, which I use for memory-intensive tasks. The Dell R720 can hold up to two full-sized GPUs, which led to my first requirement: a GPU must fit into the Dell R720.

With R720 compatibility as a requirement, gaming GPUs (RTX 2080, 3080/3090, 4080/4090) were not an option. Additionally, they are expensive and do not all come with a lot of VRAM. But I admit that they are more powerful than what I came up with.

Since I want to test different LLMs with various parameter sizes, my minimum memory size requirement was 24GB VRAM. That clearly reduced the GPU options again, even for compatible low power (~70W) GPUs like the Nvidia RTX A2000 (12GB) or the Nvidia Tesla T4 (16GB).

My budget limit for getting started was around €300 for one GPU. That narrowed down my search to the Nvidia Tesla P40, a Pascal architecture GPU that, when released in 2016, cost around $5,699. In Europe, the P40 is available on Ebay for around €300 to €500, and I was very lucky to get two P40s for around €510 in total. Seeing two P40s on Ebay in the US for $299 with no delivery option for Europe was a painful experience though.

However, I had to make two compromises with the P40, which may be a problem in the future. First, the P40 lacks Tensor Cores, which speed up mixed-precision deep learning training considerably compared to plain FP32 training. Additionally, the P40 is limited by its CUDA compute capability of 6.1, which is lower than that of newer GPUs like the H100 (9.0). At some point, software tools might stop supporting the P40.

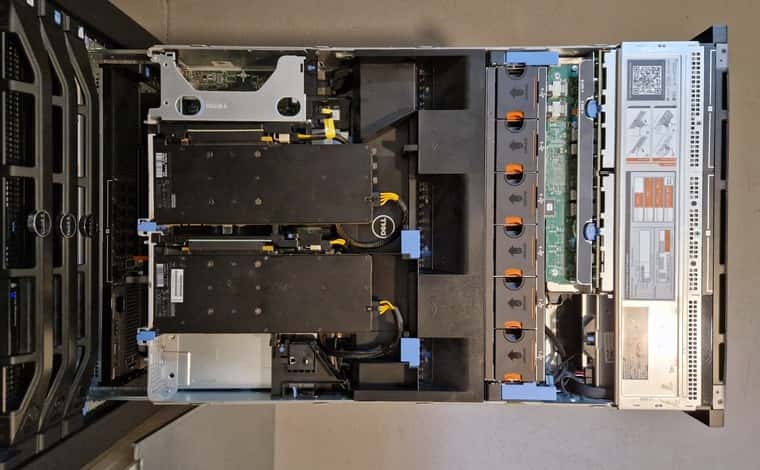

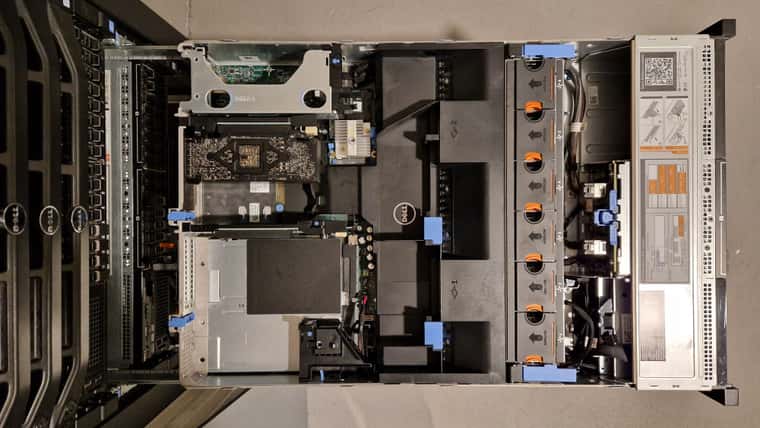

To install a second GPU, I had to swap the Dell R720 riser card #3 from the version with 2 PCIe x8 slots and a 150W power connector to the one with 1 PCIe x16 slot and a 225W power connector. Like the K80/M40/M60/P100, the P40 has an 8-pin EPS connector, so you need a special power cable that can be sourced from Ebay. Using the standard Dell general-purpose GPU cable risks damaging the GPU or motherboard. Yesterday, the last part arrived, so today is install day.

The process of swapping riser #3, installing the two P40 GPUs, and connecting the power cables was straightforward. During boot, the server checks PCI devices and updates the inventory, which might take some minutes. After the initial fan ramp-up, the fan speed dropped back to normal and the Dell R720 idles at about 126W with both GPUs installed.

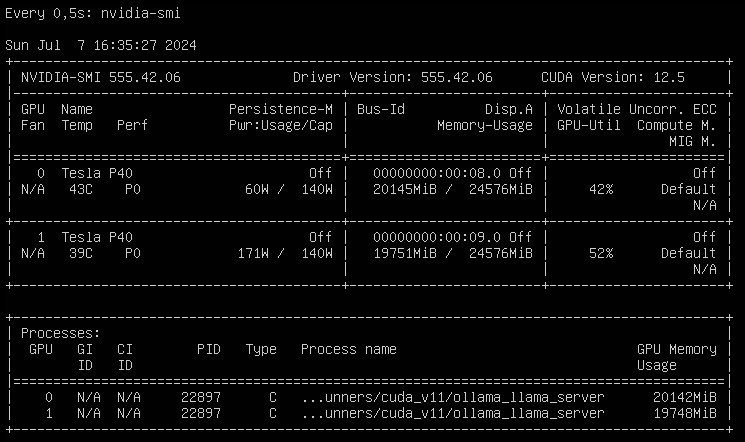

The next step was installing and updating XCP-ng 8.3 beta, which was as easy as installing the GPUs. Adding the host to XO from source and activating the PCI pass-through in the host's advanced view required a reboot, but after that I could set up an Ollama VM to run LLMs and another Open WebUI VM to chat with the LLMs. With 48 GB of VRAM, I can run `llama3-70b` with some headroom and about 6 tokens/sec, while `llama3-8b` is much smaller and answers with 23 tokens/sec on this setup.
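For anyone who wants to reproduce tokens/sec figures like these, here is a minimal sketch that queries Ollama's HTTP API and derives the rate from the timing fields of the reply. It assumes Ollama listens on its default port on the same machine. The URL and function names are illustrative and not part of the setup described above.

```python
import json
import urllib.request

# Assumption: Ollama runs locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def tokens_per_second(result: dict) -> float:
    """Generation speed from the timing fields of a non-streamed reply.

    eval_count is the number of generated tokens, eval_duration the
    time spent generating them in nanoseconds.
    """
    return result["eval_count"] / result["eval_duration"] * 1e9


def generate(model: str, prompt: str, url: str = OLLAMA_URL) -> dict:
    """Send one non-streamed prompt and return the parsed JSON reply."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    request = urllib.request.Request(
        url,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Usage (needs a running Ollama instance, so it is left commented out):
# reply = generate("<model tag as shown by `ollama list`>", "Why is the sky blue?")
# print(reply["response"])
# print(f"{tokens_per_second(reply):.1f} tokens/sec")
```

The rate depends on prompt length, quantization and context size, so a single request is only a rough indicator.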

So what are the next steps? On one hand, I want to set up a development environment for Python and API based usage of LLMs (not only local LLMs, but also cloud based LLMs like ChatGPT or Claude). That will be fun, since I have zero experience with that. On the other hand, I will set up more GPU supported services like Perplexica or AUTOMATIC1111 or whisper. Apart from that, I will also try to improve my prompt engineering skills and learn about LLM multi agent frameworks.

The best thing about this setup is that XCP-ng 8.3 beta provides a robust foundation for running Large Language Models (LLMs) and other AI workloads on one machine. Looking forward to the release candidate!

Latest posts made by gskger

-

RE: XCP-ng 8.3 updates announcements and testing

Greg_E You need to update all members of a pool, starting with the pool coordinator (including reboot if needed), followed by each member.

-

RE: XCP-ng 8.3 updates announcements and testing

gduperrey Updated two Dell R720 servers (dual E5-2640v2, 128GB RAM, with GPUs) and a Dell R730 server (dual E5-2690v4, 512GB RAM, with GPUs) without issues. The services (VMs) are working as expected, so we'll see how the systems perform over the next few days

-

RE: XCP-ng 8.3 updates announcements and testing

gduperrey Installed on some R720s with GPUs and everything works as expected. Looks good

Edit: also installed the update on my playlab hosts and a Dell PowerEdge R730. Again no issues and all hosts and VMs are up again.

-

RE: Some questions about vCPUs and Topology

jasonnix Not an expert on this topic, but my understanding is that 1 vCPU can be understood as 1 CPU core or - when available - 1 CPU thread. Since vCPUs are used for resource allocation by the hypervisor, vCPU overprovisioning can make things more complicated.

VMs or memory-sensitive applications sometimes make memory locality (NUMA) decisions based on the topology of the vCPUs (sockets/cores) presented by the hypervisor, which is why you can choose different topologies. You can read more on NUMA affinity in the XCP-ng documentation. In a homelab, you rarely have to worry about this and, as a rule of thumb, you can use virtual sockets with 1 core each for the number of vCPUs you need (which is the standard for XCP-ng and VMware ESXi).

Sometimes it still makes sense to keep the number of sockets low and the number of cores high, as sockets or cores can be a licensing metric that determines license costs. However, most manufacturers already take this into account in their terms and conditions.

In a somewhat (over-) simplified form: socket/core topologies can be used in some special scenarios to optimize memory efficiency and performance or to optimize licensing costs.
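To illustrate what "different topologies" means in practice, here is a small sketch that lists every sockets/cores split for a given vCPU count. It only assumes that sockets times cores per socket must equal the number of vCPUs. The function name is made up for this example.

```python
def topologies(vcpus: int) -> list:
    """All (sockets, cores_per_socket) pairs whose product equals vcpus."""
    if vcpus < 1:
        raise ValueError("vcpus must be at least 1")
    return [
        (vcpus // cores, cores)
        for cores in range(1, vcpus + 1)
        if vcpus % cores == 0
    ]


# For 8 vCPUs this starts with the default-style layout (8 sockets with
# 1 core each) and ends with the licensing-friendly one (1 socket, 8 cores).
for sockets, cores in topologies(8):
    print(f"{sockets} socket(s) x {cores} core(s)")
```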

-

RE: How to Migrate VMs Seamlessly in Xen Orchestra ??

danieeljosee If your XCP-ng pool is set up correctly, live migration with Xen Orchestra works straight away. Compatibility is key in a pool (CPU manufacturer, CPU architecture, XCP-ng version, network cards/ports). There is a lot of leeway for CPU architecture, but I would recommend not mixing new and older CPU generations.

For the network settings, it depends on your network architecture. You can run all network traffic via one NIC or you can separate the network traffic from management, VM migration, VM and/or storage/backup using more NICs. In a homelab, one NIC is usually sufficient.

While shared storage is very helpful for VM live migration, you can also live migrate the VM with its disks attached on local storage. Just select the destination host and destination storage repository. For local storage, VMs and attached disks must of course be located on the same destination host.

Check out Tom's YT channel as ph7 suggested.

-

RE: Nvidia P40s with XCP-ng 8.3 for inference and light training

Vinylrider Cool modification, but definitely not for the faint-hearted. Is it difficult to cut through the metal? Interestingly, in your setup, the end with the 4x black wires connects to the riser card. With my setup / cable, it's the 5x black wire end that plugs into the riser card. Mh, I saw that on youtube but never gave it much thought until now.

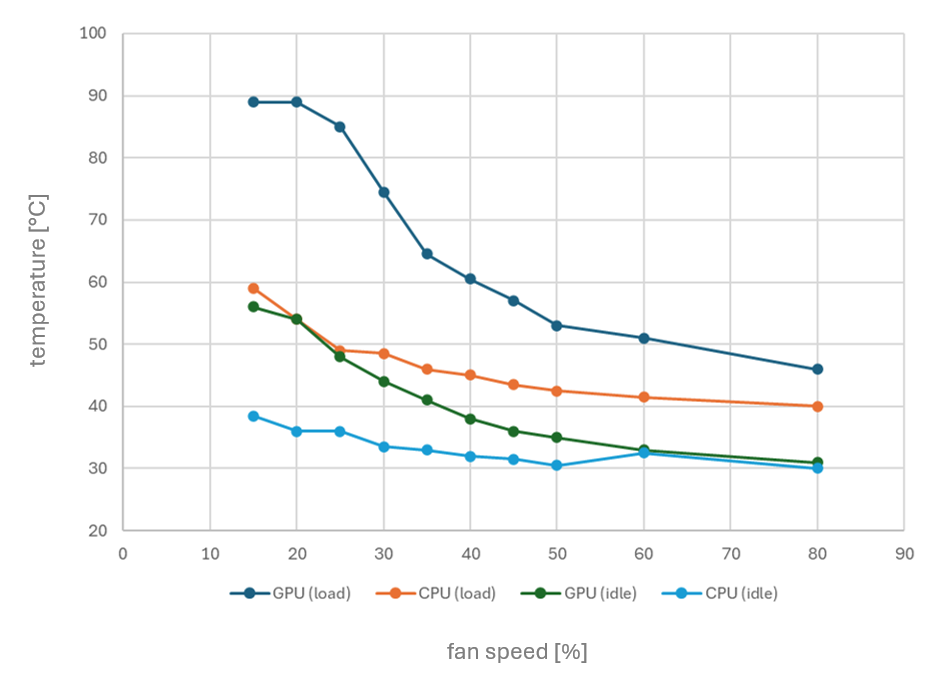

Since my R720s can’t natively monitor the temperatures of these GPUs to adjust fan speeds, I’m planning to create a script that reads both CPU and GPU temperatures and dynamically controls the fan speed through iDRAC. My R720s, equipped with two Intel Xeon CPU E5-2640 v2 processors (TDP of 95W), don’t typically run too hot though, even under load.

Here’s a quick temperature chart I’ve put together for my setup, showing CPU and GPU temperatures at various fan speeds (adjusted via iDRAC) - both at idle and under load:

With the automatic fan control at 45%, both CPU and GPU temperatures remain comfortably below 60°C, even under load.
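A rough sketch of how such a fan script could look, not the finished thing. The temperature thresholds are made-up placeholders, and the raw IPMI bytes are the ones commonly shared in the community for 12th/13th generation PowerEdge servers, so treat them as an assumption to verify against your own iDRAC firmware. With PCI pass-through, `nvidia-smi` has to run inside the VM that owns the GPUs.

```python
import subprocess

# Assumption: illustrative curve of (upper temperature limit in °C, fan %).
FAN_CURVE = [(50, 20), (60, 30), (70, 45), (80, 70)]
FULL_SPEED = 100


def fan_percent(cpu_temp: float, gpu_temp: float) -> int:
    """Pick a fan duty cycle based on the hotter of the two readings."""
    hottest = max(cpu_temp, gpu_temp)
    for limit, percent in FAN_CURVE:
        if hottest < limit:
            return percent
    return FULL_SPEED


def gpu_temperatures() -> list:
    """Read all GPU temperatures in °C via nvidia-smi."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=temperature.gpu",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [int(value) for value in out.split()]


def set_fan_command(idrac_host: str, user: str, password: str, percent: int) -> list:
    """Build the ipmitool call that sets a fixed fan speed.

    Assumption: `raw 0x30 0x30 0x02 0xff <speed>` sets the speed once
    manual mode was enabled with `raw 0x30 0x30 0x01 0x00`.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return [
        "ipmitool", "-I", "lanplus", "-H", idrac_host,
        "-U", user, "-P", password,
        "raw", "0x30", "0x30", "0x02", "0xff", f"0x{percent:02x}",
    ]
```

A loop would call `fan_percent()` every few seconds and pass the result of `set_fan_command()` to `subprocess.run()`. Handing control back to iDRAC on exit is a sensible safeguard.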

-

RE: NVIDIA Tesla M40 for AI work in Ubuntu VM - is it a good idea?

CodeMercenary Did some tests with the A2000 and as expected, the 12GB VRAM is the biggest limitation. Used vanilla installations of Ollama and ComfyUI with no tweaking or optimization. Especially in stable diffusion, the A2000 is about three times faster compared to the P40, but that is to be expected. I have added some results below.

Stable Diffusion tests

| GPU | Settings | Speed |
| --- | --- | --- |
| A2000 | 1024x1024, batch 1, iterations 30, cfg 4.0, euler | 1.4s/it |
| A2000 | 1024x1024, batch 4, iterations 30, cfg 4.0, euler | 2.5s/it = 0.6s/it |
| P40 | 1024x1024, batch 1, iterations 30, cfg 4.0, euler | 2.8s/it |
| P40 | 1024x1024, batch 4, iterations 30, cfg 4.0, euler | 12.1s/it = 3s/it |

Inference tests

| Model | A2000 | P40 |
| --- | --- | --- |
| qwen2.5:14b | 21 token/sec | 17 token/sec |
| qwen2.5-coder:14b | 21 token/sec | 17 token/sec |
| llama3.2:3b-Q8 | 50 token/sec | 40 token/sec |
| llama3.2:3b-Q4 | 60 token/sec | 48 token/sec |

During heavy testing, the A2000 reached 70°C and the P40 reached 60°C, both with the Dell R720 set to automatic fan control.
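The Stable Diffusion timings above can be turned into per-image figures and a speedup factor with a few lines of arithmetic. This sketch only uses the numbers from this post. The A2000 comes out roughly 2x faster at batch 1 and close to 5x faster at batch 4.

```python
# Seconds per iteration from the tests above, keyed by GPU and batch size.
RESULTS = {
    "A2000": {1: 1.4, 4: 2.5},
    "P40": {1: 2.8, 4: 12.1},
}


def per_image(gpu: str, batch: int) -> float:
    """Seconds per iteration divided by the number of images in the batch."""
    return RESULTS[gpu][batch] / batch


def speedup(batch: int) -> float:
    """How many times faster the A2000 is than the P40 at this batch size."""
    return RESULTS["P40"][batch] / RESULTS["A2000"][batch]


for batch in (1, 4):
    print(
        f"batch {batch}: "
        f"A2000 {per_image('A2000', batch):.2f} s/it per image, "
        f"P40 {per_image('P40', batch):.2f} s/it per image, "
        f"A2000 is {speedup(batch):.1f}x faster"
    )
```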

-

RE: XCP-ng 8.3 updates announcements and testing

gduperrey Updated some Dell R720s with GPUs and a Dell R730. The update worked without any problem and the VMs operate as expected. Will update this post if that changes during day-to-day operation. Great work!

-

RE: NVIDIA Tesla M40 for AI work in Ubuntu VM - is it a good idea?

CodeMercenary I got my hands on an Nvidia RTX A2000 12GB (around 310€ used on Ebay) which might be an option, depending on what you want to do. It is a dual slot low profile GPU with 12GB VRAM, a max power consumption of 70W and active cooling. With a compute capability of 8.6 (P40: 6.1, M40: 5.2) it is fully supported by `ollama`. While 12GB is only 50% of one P40 with 24GB VRAM, it runs small LLMs nicely and with a high token per second rate. It can almost run the Llama3.2 11b vision model (`11b-instruct-q4_K_M`) entirely on the GPU, with only 3% offloaded to the CPU. I will start testing this card during the weekend and can share some results if that would help.

-

RE: NVIDIA Tesla M40 for AI work in Ubuntu VM - is it a good idea?

CodeMercenary I didn't know there was a midboard hard drive option for the R730xd - cool. You could always install a couple Nvidia T4s, but they only come with 16GB of VRAM and are much more expensive compared to the P40s. For reference, I've added a top view of the R720 with two P40s installed.