@fdhabhar XO Lite isn't meant to be the complete tool to restore a VM from. It appears it does allow you to create a snapshot, but snapshots aren't a whole backup.

Is there a reason you're unable to use XO (Community or Appliance)?

@acebmxer I may just need to re-evaluate our backup strategy and adjust it so there is more time for the backups. I could also just run the daily deltas. The main issue is the weekly fulls that I run as a precaution: I'm always paranoid about something happening with the daily delta chain and leaving me with an unusable backup, so I also pull dedicated weekly full backups, which take a lot of time to run.

I've also considered running the full backups on different days to spread them out more. Sounds like one of those is my best option rather than adding more cost/complexity.

I would absolutely change this backup plan to run monthly full backups (weekly full backups are overkill for most).

The backup mechanism in XO has improved a ton since launch. Without more detail (types of VMs, workloads, etc.) it's really difficult for anyone to offer a perfect answer, but most people here would likely agree that weekly fulls aren't a benefit here.

Changing the window on your backups is also an option as you mentioned, but that is only shifting when the work is being performed, not the type of work performed.

If you have a 1 TB server and you're backing that up daily with deltas and weekly with full backups, you're backing up something like 1300 GB every week (of course this depends on how much data changes between deltas).
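To put rough numbers on that, here's a quick sketch of the arithmetic. The 50 GB per delta is purely an assumption picked so the weekly total lands on the 1300 GB mentioned above; plug in your own change rate. The function name and parameters are made up for illustration, this isn't anything XO provides.

```python
def backup_volume_gb(days, full_every_days, full_gb, delta_gb):
    """Total data written over `days` when a full runs every
    `full_every_days` days and a delta runs on every other day."""
    fulls = days // full_every_days
    deltas = days - fulls
    return fulls * full_gb + deltas * delta_gb

FULL_GB = 1000   # the 1 TB server
DELTA_GB = 50    # assumed daily change, not a measured value

one_week = backup_volume_gb(7, 7, FULL_GB, DELTA_GB)
four_weeks_weekly_fulls = backup_volume_gb(28, 7, FULL_GB, DELTA_GB)
four_weeks_monthly_full = backup_volume_gb(28, 28, FULL_GB, DELTA_GB)

print(one_week)                 # 1 full + 6 deltas
print(four_weeks_weekly_fulls)  # 4 fulls + 24 deltas
print(four_weeks_monthly_full)  # 1 full + 27 deltas
```

Under those assumptions, four weeks of weekly fulls moves a bit more than twice the data of a single monthly full plus daily deltas.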

Is the recommended approach for finding HCL equipment still to look at Citrix's HCL? Specifically, we're looking at adding GPUs to our pool and want to confirm that we won't have any issues passing these through to our VMs for use in Kubernetes.

TIA

Hi there!

I'm using XO from source.

I can't see how to apply patches to a host from the GUI in XO-6; it reports that there are patches, but there's no option to apply them. I have to switch to XO-5, or use the command line.

Has this made it into the UI yet and I'm just missing it?

(FWIW, this is a home lab, and I have only a single host in a single pool. I don't know if the GUI has safety checks to prevent patching a machine with running VMs.)

At the moment you can't; this is a WIP. You'll need to swap over to the v5 interface and perform your patching there. Otherwise you'd have to log in to your pool master, manually patch and reboot it, and then manually patch the remaining hosts in the pool.

@DustinB but this is an impossible scenario. CR requires a VM SR, not a backup one. Weird option.

You're still replicating a VM to a storage repository.

Maybe the system will allow you to create a more flexible CR job using different hosts, rather than 1:1 . . . ?

Still not clear what it does.

"one backup archive of each VM"... on the first remote? On the second? Alternating?

You get to add multiple backup repos to your XO instance. If your backup SR becomes full, XO starts using the second, third, etc. storage repo so you don't have to lift and shift your backups.

My understanding is this should only be happening during backups, not a CR run though.

CR is a form of backup.

https://xen-orchestra.com/blog/xen-orchestra-6-2/

The blog post above explains the feature that @ph7 referenced.

Another suggestion: collapse non-running VMs into a single group, rather than having them listed individually.

https://feedback.vates.tech/posts/50/collapse-non-running-vms-into-group

@john.c This is already viewable under each host in the pool.

@olivierlambert https://feedback.vates.tech/posts/49/pool-view-rather-than-host-view

So you're interested in having it work a bit more like VMware vSphere with regard to VMs, hosts and pools? That could work, but it would still need a host view for each member of the pool. The host view needs to show which VMs are running on that host.

It also needs to be compatible with https://github.com/vatesfr/xen-orchestra/issues/9430. If not already synced with NetBox, the pool and hosts should be linked by UUID when synced. In other words, the pool is linked by its UUID to each host's UUID, and the same goes for VMs: each VM is linked by its UUID to the host's UUID.

Nothing in my post would restrict the view to only seeing VMs within a specific pool; the view should be flexible enough to show VMs in a pool.

As it is now, you see all VMs that are running under a given host and then pool.

This in and of itself could become tedious to manage/dig through.

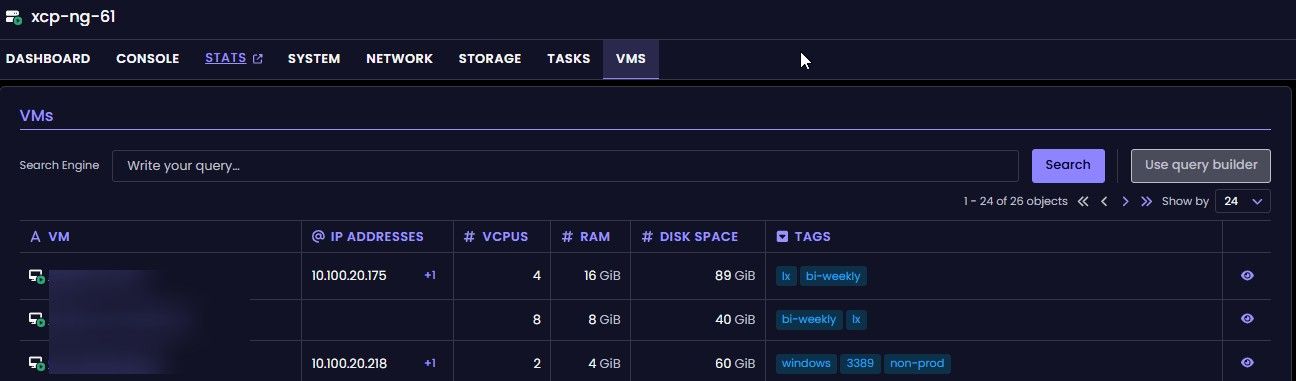

The potential remedy here would be to show all VMs in a pool, and on the given VM show the host where it's running.
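As a rough illustration of what that pool-level view could look like as data, here's a small sketch. Everything in it (the `Host` and `VM` classes, the `pool_view` function, the sample UUIDs and names) is hypothetical and only meant to show the idea; it isn't XO's data model or API.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Host:
    uuid: str
    name: str
    pool_uuid: str

@dataclass
class VM:
    uuid: str
    name: str
    pool_uuid: str
    host_uuid: Optional[str] = None  # None when the VM is not running

def pool_view(pool_uuid, hosts, vms):
    """Return (vm_name, host_name) rows for every VM in the pool,
    instead of nesting VMs under each host."""
    host_names = {h.uuid: h.name for h in hosts if h.pool_uuid == pool_uuid}
    rows = []
    for vm in vms:
        if vm.pool_uuid != pool_uuid:
            continue
        rows.append((vm.name, host_names.get(vm.host_uuid, "(not running)")))
    return sorted(rows)

# Made-up sample data
hosts = [Host("h1", "host-a", "p1"), Host("h2", "host-b", "p1")]
vms = [
    VM("v1", "web", "p1", "h1"),
    VM("v2", "db", "p1", "h2"),
    VM("v3", "old-test", "p1"),  # halted, so no host
]

for vm_name, host_name in pool_view("p1", hosts, vms):
    print(f"{vm_name:10} {host_name}")
```

The point is just that the VM list is flat per pool and the host becomes a column on each VM, so you aren't digging through each host to find something.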

I just tried with XCP-ng 8.3 and it's exactly the same, just spinning wheels, nothing ever opens, same in Chrome.

I wonder how anyone even got this to work at all?

Or maybe I just chose some unlucky commit? I just built from the latest master commit.

You would download the ISO to install XCP-ng to your hardware. Are you specifically talking about Xen Orchestra?

https://xcp-ng.org/forum/topic/11967/gracefull-shutdown-of-host-when-ups-battery-runs-low

Not a great response. If this is really a need, paying customers should go and put a request in on the ticketing side.

Yes, this "extra" tool was not (never?) supported.

NUT has never been supported in XCP-ng. It has been asked about for a long time though, and a lot of people treat XCP-ng not as an appliance but like any other server/desktop system, and install packages because they aren't blocked from doing so.

@acebmxer Using performance mode should migrate your VMs so all systems are equally "performant". The different modes are outlined here: https://docs.xcp-ng.org/management/vm-load-balancing/

As to why your VMs did not migrate, I would only be guessing. My guess is that it only polls the system every so often, and if your systems are performing well enough nothing gets moved...

@firefly because the underlying hardware that the VM has registered has likely changed, maybe substantially.

A sysprep has the Windows system go through and validate what its hardware is. Namely, it removes hardware-specific drivers, but it does other stuff too.

Sysprep on Windows is also sometimes required, but it will require that you reactivate the OS with its product key.

Why would it take 30 minutes to boot a Windows ESXi 8.0.3 imported VM in XOA on the first boot?

Did you remove the ESXi drivers from the VM before migrating it to XCP-ng?

And to really round this out, the MTBF for any of these is in the millions of hours (1.2-3M), which is a use time of about 136.99 to 342.47 years respectively (at 8,760 hours per year).
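For anyone who wants to check the math, the conversion is just hours divided by hours per year. A minimal sketch, assuming a 365-day year (8,760 hours); the function name is only for illustration:

```python
HOURS_PER_YEAR = 24 * 365  # 8,760; ignores leap years

def mtbf_years(mtbf_hours):
    """Convert an MTBF rating in hours to years of continuous use."""
    return mtbf_hours / HOURS_PER_YEAR

for hours in (1_200_000, 3_000_000):
    print(f"{hours:,} hours = {mtbf_years(hours):.2f} years")
```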

Basically, if a drive dies, just replace it no matter what, but in the end the reliability of these drives is meant to outlast all of us.

Unless you actually need some specific function provided in some form-factor or model, don't bother.