XCP-ng 8.3 updates announcements and testing

-

Sorry about this. I think that when migrating PV VMs from a previous system, I ran into issues and "needed" to install "qemu-img", and then forgot about it. Removing it solved the problem, so it was all my fault. Sorry about this message!

-

Ran the 2 updates released today and...

Back to only showing one VM in Backup/Restore, as it did a month or two ago.

Ran the replication job and all VMs showed up in Backup/Restore again (XO5).

-

Does anyone know if applying these two patches requires a reboot?

-

@marcoi According to the Vates blog post, no.

Did it anyway.

-

Indeed, no reboot required if those are the only patches that you are applying, as indicated in the blog post.

-

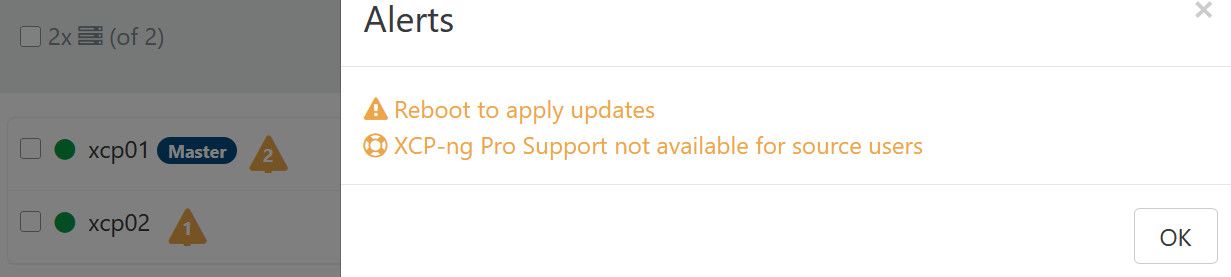

@stormi I'm getting a prompt to reboot after applying them to the master.

-

XO isn't subtle: it asks for a reboot regardless of the type of update, because for now there's no way for it to know whether a reboot is required.
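As a rough illustration of the distinction XO cannot make automatically yet, here is a minimal Python sketch that classifies a list of updated package names. The package-to-action mapping is an assumption made for illustration (kernel, Xen hypervisor and microcode needing a host reboot; dom0 toolstack packages needing only a toolstack restart); it is not an official XCP-ng or XO rule, and the function names are hypothetical.

```python
"""Hypothetical heuristic: what an update needs before it takes effect.

Not an XO or XCP-ng rule; XO itself always asks for a reboot.
"""

# Assumption: packages whose new code only runs after a host reboot.
REBOOT_PACKAGES = {"kernel", "xen-hypervisor", "intel-microcode"}

# Assumption: dom0 toolstack packages whose new code runs after a toolstack restart.
TOOLSTACK_PREFIXES = ("xapi", "xenopsd", "xcp-networkd", "xcp-rrdd",
                      "squeezed", "message-switch", "forkexecd")


def _matches(name, prefix):
    """True if `name` is `prefix` itself or a sub-package such as `prefix-cli`."""
    return name == prefix or name.startswith(prefix + "-")


def required_action(packages):
    """Return 'reboot', 'toolstack-restart' or 'none' for updated package names."""
    names = list(packages)
    if any(name in REBOOT_PACKAGES for name in names):
        return "reboot"
    if any(_matches(name, p) for name in names for p in TOOLSTACK_PREFIXES):
        return "toolstack-restart"
    return "none"


if __name__ == "__main__":
    print(required_action(["xo-lite"]))                   # none
    print(required_action(["xapi-core", "xenopsd-xc"]))   # toolstack-restart
    print(required_action(["kernel", "xapi-core"]))       # reboot
```

The most disruptive action wins: a single kernel or hypervisor package in the batch means a reboot, whatever else is included.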

-

@olivierlambert lol okay got it

-

I got the results. Feel free to access our lab; nothing there is in production. Support ID: 40797

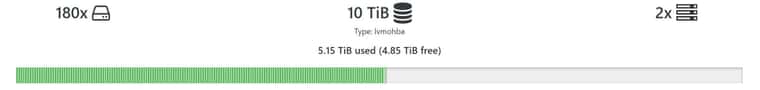

A high count of VDIs/VMs on a single SR makes the entire storage slow.

Migration fails because of:

```
[21:52 xcp-ng-1 ~]# xe sr-scan uuid=43dbbe8e-039a-e66c-f6cb-d88de4f4d962
Error code: SR_BACKEND_FAILURE_1200
Error parameters: , list index out of range,
```

Log (59 lines):

```
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ['/sbin/lvchange', '-an', '/dev/VG_XenStorage-43dbbe8e-039a-e66c-f6cb-d88de4f4d962/QCOW2-ffcf4c40-0d81-4da7-9908-478629c88358']
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] pread SUCCESS
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper released
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper acquired
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper sent '56171 - 832.417448266'
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ['/sbin/dmsetup', 'status', 'VG_XenStorage--43dbbe8e--039a--e66c--f6cb--d88de4f4d962-QCOW2--ffcf4c40--0d81--4da7--9908--478629c88358']
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] pread SUCCESS
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper released
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] lock: released /var/lock/sm/lvm-43dbbe8e-039a-e66c-f6cb-d88de4f4d962/ffcf4c40-0d81-4da7-9908-478629c88358
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper acquired
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper sent '56171 - 832.433077125'
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ['/sbin/vgs', '--noheadings', '--nosuffix', '--units', 'b', 'VG_XenStorage-43dbbe8e-039a-e66c-f6cb-d88de4f4d962']
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] pread SUCCESS
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper released
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper acquired
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper sent '56171 - 832.64258113'
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ['/sbin/vgs', '--readonly', 'VG_XenStorage-43dbbe8e-039a-e66c-f6cb-d88de4f4d962']
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] pread SUCCESS
May 8 21:40:34 xcp-ng-2 fairlock[8031]: /run/fairlock/devicemapper released
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] lock: released /var/lock/sm/43dbbe8e-039a-e66c-f6cb-d88de4f4d962/sr
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ***** generic exception: sr_scan: EXCEPTION <class 'IndexError'>, list index out of range
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 113, in run
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return self._run_locked(sr)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 163, in _run_locked
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     rv = self._run(sr, target)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 377, in _run
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return sr.scan(self.params['sr_uuid'])
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMoHBASR", line 163, in scan
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     LVMSR.LVMSR.scan(self, sr_uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMSR.py", line 822, in scan
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     new_vdi = self.vdi(cbt_uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMoHBASR", line 230, in vdi
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return LVMoHBAVDI(self, uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/VDI.py", line 100, in __init__
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     self.load(uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMSR.py", line 1375, in load
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     size = int(self.sr.srcmd.params['args'][0])
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] ***** LVM over FC: EXCEPTION <class 'IndexError'>, list index out of range
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 392, in run
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     ret = cmd.run(sr)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 113, in run
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return self._run_locked(sr)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 163, in _run_locked
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     rv = self._run(sr, target)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/SRCommand.py", line 377, in _run
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return sr.scan(self.params['sr_uuid'])
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMoHBASR", line 163, in scan
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     LVMSR.LVMSR.scan(self, sr_uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMSR.py", line 822, in scan
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     new_vdi = self.vdi(cbt_uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMoHBASR", line 230, in vdi
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     return LVMoHBAVDI(self, uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/VDI.py", line 100, in __init__
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     self.load(uuid)
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]   File "/opt/xensource/sm/LVMSR.py", line 1375, in load
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]     size = int(self.sr.srcmd.params['args'][0])
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread]
May 8 21:40:34 xcp-ng-2 SM: [56171][MainThread] lock: closed /var/lock/sm/lvm-43dbbe8e-039a-e66c-f6cb-d88de4f4d962/030878c2-49fd-4b0f-a971-9dfa121249a3
```

Best regards,

Igor
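The traceback ends in `LVMSR.load` evaluating `self.sr.srcmd.params['args'][0]` while being reached from `sr_scan` (via `self.vdi(cbt_uuid)` in `LVMSR.scan`). Presumably an `sr-scan` call carries no positional `args`, so the list is empty and indexing it raises `IndexError: list index out of range`. The minimal sketch below reproduces only that failure mode; `FakeSrCmd`, `load_size` and `load_size_or_none` are hypothetical stand-ins, not the real SM code, and the guarded variant is not the patch Vates is preparing.

```python
"""Hypothetical reproduction of the IndexError in the traceback above.

FakeSrCmd stands in for SM's SRCommand; this is not the real sm code or its fix.
"""


class FakeSrCmd:
    """Hypothetical stand-in: only carries the `params` dict seen in the traceback."""

    def __init__(self, args):
        self.params = {"args": args}


def load_size(srcmd):
    # Same expression as the failing line in LVMSR.py (line 1375 in the traceback).
    return int(srcmd.params["args"][0])


def load_size_or_none(srcmd):
    # Hypothetical defensive variant: no size argument -> None instead of raising.
    args = srcmd.params.get("args") or []
    return int(args[0]) if args else None


if __name__ == "__main__":
    print(load_size(FakeSrCmd(["1024"])))      # 1024
    print(load_size_or_none(FakeSrCmd([])))    # None
    try:
        load_size(FakeSrCmd([]))
    except IndexError as exc:
        print("IndexError:", exc)              # IndexError: list index out of range
```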

-

@olivierlambert I'm seeing some failures/errors on CR jobs. They leave VDIs attached to the Control Domain... The next run normally works. I hadn't seen this error before the current sm update. Running XO (commit 7e144).

```
"message": "INTERNAL_ERROR(Storage_error ([S(Illegal_transition); [[S(Activated);S(RO)];[S(Activated);S(RW)]]]))",
"name": "XapiError",
"stack": "XapiError: INTERNAL_ERROR(Storage_error ([S(Illegal_transition);[[S(Activated);S(RO)];[S(Activated);S(RW)]]]))\n at XapiError.wrap (file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/_XapiError.mjs:16:12)\n at default (file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/_getTaskResult.mjs:13:29)\n at Xapi._addRecordToCache (file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/index.mjs:1078:24)\n at file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/index.mjs:1112:14\n at Array.forEach (<anonymous>)\n at Xapi._processEvents (file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/index.mjs:1102:12)\n at Xapi._watchEvents (file:///opt/xo/xo-builds/xen-orchestra-202605050700/packages/xen-api/index.mjs:1275:14)\n at process.processTicksAndRejections (node:internal/process/task_queues:104:5)"
```

-

Ping @Team-Storage

-

Hello @igorglock, Damien is on holiday this week, but he has identified the issue, and a patch should be tested for the next SM release.

-

I get this in dmesg after the latest updates:

```
[ 54.673443] python3[3691]: segfault at 200000 ip 00007f16eb8eca9f sp 00007ffdb84e9ff0 error 4 in libpython3.6m.so.1.0[7f16eb804000+28d000]
[ 54.673450] Code: 01 00 00 8d 5f ff 48 8d 2d de 3a 3c 00 c1 eb 03 44 8d 24 1b 4e 8b 44 e5 00 49 8b 70 10 49 39 f0 74 5f 49 8b 40 08 41 83 00 01 <48> 8b 38 48 85 ff 49 89 78 08 74 0d 48 83 c4 10 5b 5d 41 5c c3 0f
[ 84.587661] xapi[3697]: segfault at 7f28cacaea40 ip 00007f28c6df0ec2 sp 00007f289a5b8af0 error 6 in libjemalloc.so.2[7f28c6d85000+85000]
[ 84.587669] Code: 48 2b 73 08 44 8b 4d 84 ba 01 00 00 00 49 83 c2 01 49 0f af f1 4c 8d 0d ac 72 42 00 48 89 f1 48 c1 ee 26 48 c1 e9 20 48 d3 e2 <48> 31 54 f3 40 48 8b 8d 58 ff ff ff 48 8b 33 48 8d be 00 00 00 10
```

-

@Andrew I have experienced this twice so far as well.

-

@stormi I'm also getting errors on some VMs when trying to export a disk, and even when trying to start some VMs on NFS that were fine before.

```
xo-server[565]: 2026-05-13T02:53:15.746Z xo:api WARN admin | vm.start(...) [2s] =!> XapiError: INTERNAL_ERROR(xenopsd internal error: Storage_error ([S(Illegal_transition);[[S(Activated);S(RO)];[S(Activated);S(RW)]]]))
xo-server[565]: 2026-05-13T02:53:40.652Z xo:api WARN admin | vm.start(...) [3s] =!> XapiError: SR_BACKEND_FAILURE_46(, The VDI is not available [opterr=VDI 399734eb-5965-4799-ac36-f6dd774db867 not detached cleanly], )
```

-

Ping @Team-Storage again, about the last comment.

-

New security update candidates for XCP-ng 8.3 LTS (kernel, xen, intel-microcode)

This release batch contains a security fix for the kernel, version updates, and some bug fixes.

What changed

Virtualization & System

kernel: Update to 4.19.19-8.0.46.3
- Fixes CVE-2026-43284 (used by the DirtyFrag and CopyFail2 exploits)

intel-microcode: Update to 20260416-1
- Improves Intel support and security (INTEL-SA-01420)

xen: Update to 4.17.6-8.1
- Minor bug fixes for x86 systems, including calibration of various timers and handling of PCI devices when disabling SR-IOV

Control plane

xapi: Update to 26.1.4
- Minor NUMA fixes

UI

xo-lite: Update to 0.21.0
- chore: upgrade dependencies with known security vulnerabilities (#9640)
- These vulnerabilities are not believed to affect XO Lite itself; they are fixed as defence-in-depth.

Versions:

- gpumon: 24.1.0-83.2.xcpng8.3 -> 24.1.0-84.1.xcpng8.3
- intel-microcode: 20260115-1.xcpng8.3 -> 20260416-1.xcpng8.3
- kernel: 4.19.19-8.0.46.2.xcpng8.3 -> 4.19.19-8.0.46.3.xcpng8.3
- xapi: 26.1.3-1.10.xcpng8.3 -> 26.1.4-3.1.xcpng8.3
- xcp-featured: 1.1.8-6.xcpng8.3 -> 1.2.1-1.xcpng8.3
- xen: 4.17.6-6.2.xcpng8.3 -> 4.17.6-8.1.xcpng8.3
- xo-lite: 0.20.0-1.xcpng8.3 -> 0.21.0-1.xcpng8.3

Test on XCP-ng 8.3

```
yum clean metadata --enablerepo=xcp-ng-testing,xcp-ng-candidates
yum update --enablerepo=xcp-ng-testing,xcp-ng-candidates
reboot
```

The usual update rules apply: pool coordinator first, etc.
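After updating, a tester may want to confirm that a host really runs the candidate versions listed above. The sketch below is a hypothetical helper, not an XCP-ng tool: it parses the output of `rpm -q --qf '%{NAME} %{VERSION}-%{RELEASE}\n' gpumon intel-microcode kernel xapi-core xcp-featured xen-hypervisor xo-lite` and reports differences. The announcement lists `xapi` and `xen` by source package; this sketch assumes the binary packages `xapi-core` and `xen-hypervisor`, which carry the same versions in the yum transaction posted later in this thread.

```python
"""Hypothetical helper: compare installed versions with the announced candidates."""

# Expected versions from the announcement; xapi/xen mapped to binary packages (assumption).
EXPECTED = {
    "gpumon": "24.1.0-84.1.xcpng8.3",
    "intel-microcode": "20260416-1.xcpng8.3",
    "kernel": "4.19.19-8.0.46.3.xcpng8.3",
    "xapi-core": "26.1.4-3.1.xcpng8.3",
    "xcp-featured": "1.2.1-1.xcpng8.3",
    "xen-hypervisor": "4.17.6-8.1.xcpng8.3",
    "xo-lite": "0.21.0-1.xcpng8.3",
}


def parse_rpm_output(text):
    """Parse 'NAME VERSION-RELEASE' lines; other lines (e.g. 'package X is not installed') are skipped."""
    installed = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2:
            name, version = parts
            installed[name] = version  # if a name appears twice, the last line wins
    return installed


def mismatches(installed, expected=EXPECTED):
    """Return {name: (installed_version_or_None, expected_version)} for differing packages."""
    return {
        name: (installed.get(name), want)
        for name, want in expected.items()
        if installed.get(name) != want
    }


if __name__ == "__main__":
    sample = "kernel 4.19.19-8.0.46.3.xcpng8.3\nxo-lite 0.20.0-1.xcpng8.3\n"
    for name, (have, want) in sorted(mismatches(parse_rpm_output(sample)).items()):
        print(f"{name}: installed {have}, expected {want}")
```

An empty result means every listed package matches the candidate version; anything else points at a host that was skipped or only partially updated.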

What to test

As usual, normal use and anything else you want to test.

Test window before official release of the updates

~1 day

We would like to thank users who reported feedback since our last call for testing:

@Andrew, @FritzGerald, @IgorGlock, @bufanda, @flakpyro, @manilx, @marcoi, @ovicz, @ph7.

-

@rzr There's other stuff in the updates too. Are these also expected?

```
 forkexecd            x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   2.5 M
 message-switch       x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.6 M
 qcow-stream-tool     x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.4 M
 rrdd-plugins         x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   9.8 M
 sm-cli               x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.8 M
 squeezed             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.9 M
 varstored-guard      x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.9 M
 vhd-tool             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.9 M
 wsproxy              x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.1 M
 xenopsd              x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.4 M
 xenopsd-cli          x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   2.0 M
 xenopsd-xc           x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   5.3 M
```

-

While updating my host, I see 30 updates...

```
=====================================================================================
 Package              Arch    Version                     Repository       Size
=====================================================================================
Updating:
 forkexecd            x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   2.5 M
 gpumon               x86_64  24.1.0-84.1.xcpng8.3        xcp-ng-testing   1.6 M
 intel-microcode      noarch  20260416-1.xcpng8.3         xcp-ng-testing    14 M
 kernel               x86_64  4.19.19-8.0.46.3.xcpng8.3   xcp-ng-testing    29 M
 message-switch       x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.6 M
 qcow-stream-tool     x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.4 M
 rrdd-plugins         x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   9.8 M
 sm-cli               x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.8 M
 squeezed             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.9 M
 varstored-guard      x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.9 M
 vhd-tool             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.9 M
 wsproxy              x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.1 M
 xapi-core            x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing    30 M
 xapi-nbd             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   3.1 M
 xapi-rrd2csv         x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   3.0 M
 xapi-storage-script  x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   2.5 M
 xapi-tests           x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   5.0 M
 xapi-xe              x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.5 M
 xcp-featured         x86_64  1.2.1-1.xcpng8.3            xcp-ng-testing   1.5 M
 xcp-networkd         x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   4.8 M
 xcp-rrdd             x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   3.6 M
 xen-dom0-libs        x86_64  4.17.6-8.1.xcpng8.3         xcp-ng-testing   703 k
 xen-dom0-tools       x86_64  4.17.6-8.1.xcpng8.3         xcp-ng-testing   2.0 M
 xen-hypervisor       x86_64  4.17.6-8.1.xcpng8.3         xcp-ng-testing   2.4 M
 xen-libs             x86_64  4.17.6-8.1.xcpng8.3         xcp-ng-testing    65 k
 xen-tools            x86_64  4.17.6-8.1.xcpng8.3         xcp-ng-testing    46 k
 xenopsd              x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   1.4 M
 xenopsd-cli          x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   2.0 M
 xenopsd-xc           x86_64  26.1.4-3.1.xcpng8.3         xcp-ng-testing   5.3 M
 xo-lite              noarch  0.21.0-1.xcpng8.3           xcp-ng-testing   2.6 M

Transaction Summary
=====================================================================================
Upgrade  30 Packages

Total download size: 152 M
```

-

I'm seeing 30 updates as well, but they installed fine and seem to be working on my test systems.
