ph7 said:

Local VMs with scheduled Incremental Backup and CR are affected

NFS VMs with scheduled Incremental Backup are affected

Incremental -> Incremental OR full

@florent

fix_flr commit b7230

Restored files from Debian 12 on local ext

and from Linux Mint 22.3 on NFS

both with tgz and zip

Local VMs with scheduled Incremental or full Backup and CR are affected

NFS VMs with scheduled Incremental or full Backup are affected

NFS VMs with CR not affected

@julienXOvates

The faulty ones are on local SR

If I take a manual snapshot on a NFS VM, I see the correct value

Will test a scheduled one

@julienXOvates

Well, not in my daefc, which is newer than 6.5.1

ph@romy:~$ df -h

Filesystem Size Used Avail Use% Mounted on

udev 1,9G 0 1,9G 0% /dev

tmpfs 392M 916K 391M 1% /run

/dev/xvda2 93G 6,3G 82G 8% /

tmpfs 2,0G 0 2,0G 0% /dev/shm

efivarfs 128K 52K 72K 42% /sys/firmware/efi/efivars

tmpfs 5,0M 0 5,0M 0% /run/lock

tmpfs 1,0M 0 1,0M 0% /run/credentials/systemd-journald.service

tmpfs 2,0G 4,0K 2,0G 1% /tmp

/dev/xvda1 975M 8,8M 966M 1% /boot/efi

tmpfs 1,0M 0 1,0M 0% /run/credentials/getty@tty1.service

tmpfs 1,0M 0 1,0M 0% /run/credentials/serial-getty@hvc0.service

tmpfs 392M 68K 392M 1% /run/user/105

tmpfs 392M 68K 392M 1% /run/user/1000

seems more like 8% to me
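For what it's worth, the 8% can be checked from the used and available columns of the `/` row. A minimal sketch, assuming df reports the percentage as used / (used + available) rounded up to a whole percent, and using the rounded `-h` values from the output above, so it is only approximate. The function name is just for illustration.

```python
import math


def use_percent(used_gib: float, avail_gib: float) -> int:
    """Return used / (used + available) as a percentage, rounded up."""
    return math.ceil(100 * used_gib / (used_gib + avail_gib))


# Values from the /dev/xvda2 row: 6,3G used and 82G available.
root_pct = use_percent(6.3, 82)
print(root_pct)
```

Note that the size column (93G) is not the denominator here, since part of the filesystem is reserved and counts as neither used nor available.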

@rzr

It seems to be working

edit: the update that is.

In the back of my head I knew there was a thread and I found it

https://xcp-ng.org/forum/topic/12040/restore-only-showing-1-vm/21?_=1780396449511

Maybe this should be under XO/Backup

Anyhow.

ph7 said:

Ran the 2 updates released today and...

Back to only showing one VM in Backup/Restore as it did a month or 2 ago.

Ran the replication job and all VMs showed up in Backup/Restore again. (XO5)

It occurred again today, only showing 1 VM in restore

XO daefc

Host updated 2 weeks ago.

After 1 scheduled CR job ran all VMs showed up again

@olivierlambert

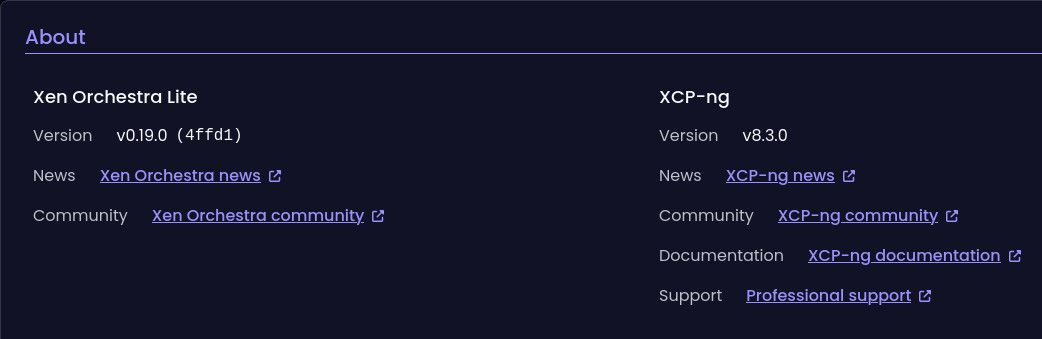

No, both my XO 2b887 and dec0c are 0.19.0

Updated XO from script and was trying to check the version but could not get into the settings.

Did Fn+F5 and rebooted, and then found out I was back to 0.19???

edit: Ctrl+F5

@rzr

Seems to be OK

ran a few backups and installed a new VM

XO-lite was already on 0.21.0 (13f98) after the update 2 weeks ago. Don't know if it's the same.

Ran the 2 updates released today and...

Back to only showing one VM in Backup/Restore as it did a month or 2 ago.

Ran the replication job and all VMs showed up in Backup/Restore again. (XO5)

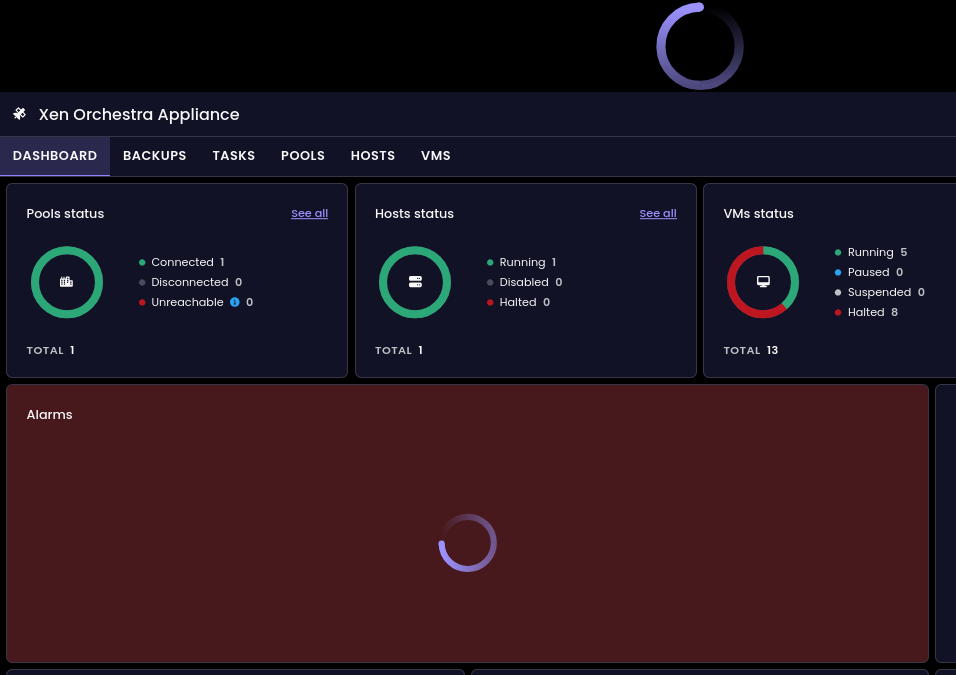

[17:00 30] xoa@xoa:~$ sudo journalctl -u xo-server -f

Apr 30 16:23:13 xoa xo-server[569]: 2026-04-30T14:23:13.264Z xo:main INFO Setting up / → /usr/local/lib/node_modules/@xen-orchestra/web/dist

Apr 30 16:26:27 xoa xo-server[569]: 2026-04-30T14:26:27.942Z xo:rest-api:error-handler INFO [GET] /users/370331c9-fe77-49db-b2a0-e38f776607bd (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.549Z xo:rest-api:error-handler INFO [GET] /pools (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.555Z xo:rest-api:error-handler INFO [GET] /hosts (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.563Z xo:rest-api:error-handler INFO [GET] /vms (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.627Z xo:rest-api:error-handler INFO [GET] /tasks (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.812Z xo:rest-api:error-handler INFO [GET] /alarms (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.812Z xo:rest-api:error-handler INFO [GET] /vm-controllers (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.813Z xo:rest-api:error-handler INFO [GET] /vdis (403)

Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.813Z xo:rest-api:error-handler INFO [GET] /srs (403)
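To see at a glance which endpoints come back with 403, the journal lines can be tallied. A rough sketch, assuming the line format shown above (`[METHOD] /path (status)` at the end of each error-handler line). The regex and the function name are my own and not part of xo-server.

```python
import re
from collections import Counter

# Matches the "[GET] /pools (403)" tail of an xo:rest-api:error-handler line.
# The "[569]" PID in "xo-server[569]" is skipped because it is not uppercase letters.
LINE_RE = re.compile(
    r"\[(?P<method>[A-Z]+)\]\s+(?P<path>/\S*)\s+\((?P<status>\d{3})\)"
)


def count_by_status(lines):
    """Count occurrences of each (status, path) pair in the given log lines."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match:
            counts[(match["status"], match["path"])] += 1
    return counts


# Three lines copied from the journal output above.
sample = [
    "Apr 30 16:23:13 xoa xo-server[569]: 2026-04-30T14:23:13.264Z xo:main INFO "
    "Setting up / → /usr/local/lib/node_modules/@xen-orchestra/web/dist",
    "Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.549Z "
    "xo:rest-api:error-handler INFO [GET] /pools (403)",
    "Apr 30 16:26:28 xoa xo-server[569]: 2026-04-30T14:26:28.555Z "
    "xo:rest-api:error-handler INFO [GET] /hosts (403)",
]

counts = count_by_status(sample)
for (status, path), n in sorted(counts.items()):
    print(status, path, n)
```

Piping the full journal into it instead of the sample would show whether every REST endpoint is refused or only some of them.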

@Baronvaile

Started my 6.4.0 test VM and got the same

@Baronvaile

Have you tested with Ctrl+F5 to refresh your browser?

just found out that it's the same on XO5

I removed 1 host from the pool and the local storage from that host didn't get purged