I was going to suggest it might be an AMD issue. I can try later on work hosts that are Intel.

Posts

-

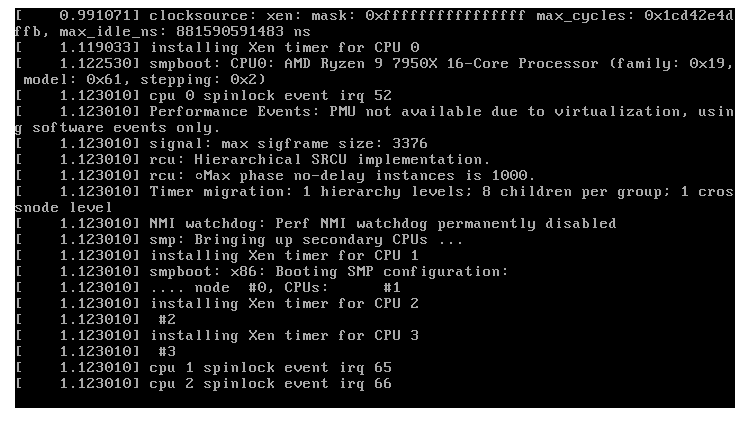

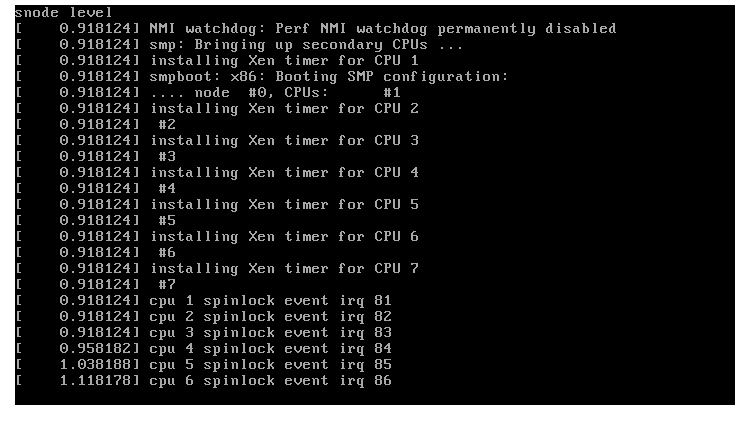

RE: Slow boot on rocky linux 10 latest kernel

-

RE: Slow boot on rocky linux 10 latest kernel

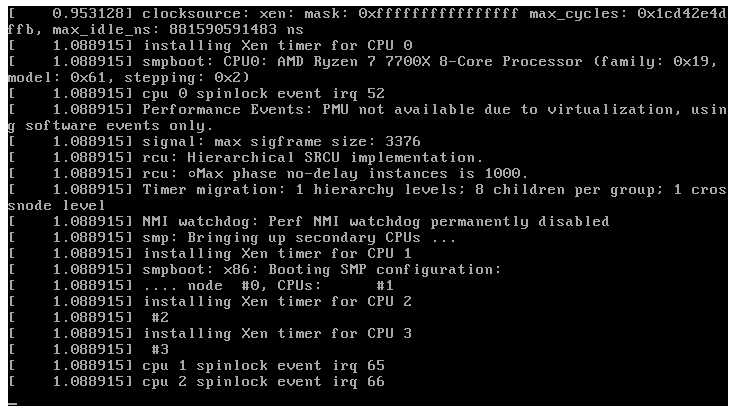

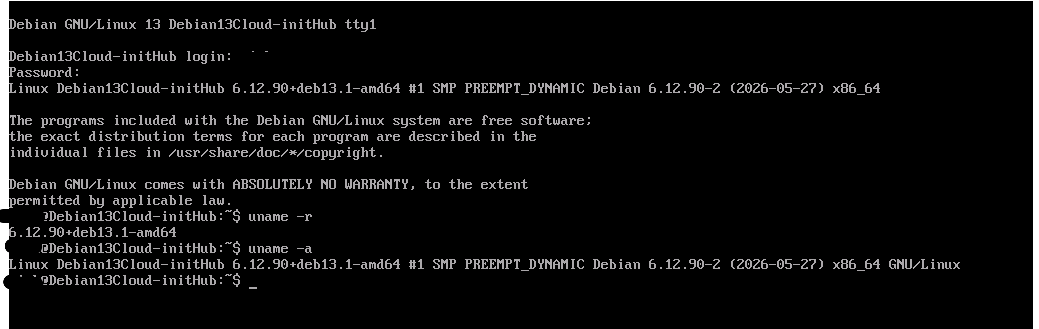

This is on a fresh install of Debian 13 deployed from XO Hub - 6.12.38+deb13-amd64. I do not see this behavior on Ubuntu.

After updating to 6.12.90+deb13.1-amd64, it still happens.

-

RE: XCP-ng 8.3 updates announcements and testing

Installed updates on home lab. No issues to report initially, other than nslookup still being an issue.

    [10:54 xcp-ng-haznrrtw ~]# nslookup vates.com 8.8.8.8
    Server: 8.8.8.8
    Address: 8.8.8.8#53
    Non-authoritative answer:
    Name: vates.com
    Address: 104.21.52.238
    Name: vates.com
    Address: 172.67.205.118
    openssl_link.c:132: INSIST(dst__memory_pool != ((void *)0)) failed, back trace
    #0 0x7f163cd960e7 in ??
    #1 0x7f163cd9603a in ??
    #2 0x7f163d9a3780 in ??
    #3 0x7f163c1aedf6 in ??
    #4 0x7f163c1f5464 in ??
    #5 0x7f163c1f5732 in ??
    #6 0x7f163c1f4b8d in ??
    #7 0x7f163a95fbd9 in ??
    #8 0x7f163a95fc27 in ??
    #9 0x7f163a94844c in ??
    #10 0x405818 in ??
    Aborted (core dumped)
    [12:50 xcp-ng-haznrrtw ~]#

-

RE: Trying to enable v2v and difficulty adding nbdinfo on xo 6

@CGB Hi, are you running XO from source with root or another user?

If it is another user, it may not be accessible from it.

That is what I was able to determine is going on with my script. - https://xcp-ng.org/forum/post/105965

When using the root user, the button works just fine. With a non-root user the button will never work, as it assumes root will use it. I am not sure how other scripts handle this issue.

-

RE: Install XO from sources.

After building new xo with root and more testing, I have come to this conclusion...

Both things are true, and they're in tension

The official docs prefer non-root for the long-running service — that's a least-privilege hardening recommendation for the daemon. Normal XO (UI, backups, hosts, VMs, NFS/CIFS remotes) works fine non-root.

But several XO features assume root anyway. The ESXi/VMware import "install from source" buttons are hard-coded to refuse unless id -u == 0. You already hit this same pattern once before — the credential-encryption/XenStore work (commit 5e8b7fd) existed precisely because non-root broke that too.

So "everything fails non-root" isn't quite it. What fails is the specific subset of features XO wrote assuming it runs as root. Each one needs a separate workaround. The import button is one that cannot be worked around for a non-root process: it's a uid check on the running daemon, full stop.

The honest trade-off

You can pick at most two of these three:

1. Service runs non-root (docs' preference)
2. In-app "install nbd from source" button works
3. Script doesn't pre-install packages

The button (#2) requires the daemon to be uid 0. So:

- Want the button to work → run that box as SERVICE_USER=root. Simplest, everything XO ships just works, zero manual steps. You give up the non-root hardening.
- Want to stay non-root → the button is permanently dead; the only way to get import working is the binaries being placed by root once (script or by hand). The binaries run fine as non-root; only their installation needs root.
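The uid check described above can be illustrated with a minimal shell sketch. The function name is hypothetical, and XO's actual check lives in its own JavaScript code, so this only mirrors the idea (compare the effective uid with 0) rather than reproducing it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds only when the given uid is 0 (root).
# The uid is an optional argument so the check can be tried without being root.
is_root_uid() {
    local uid="${1:-$(id -u)}"
    [ "$uid" -eq 0 ]
}

if is_root_uid; then
    echo "uid 0: an installer gated on root can run"
else
    echo "uid $(id -u): an installer gated on root will refuse"
fi
```

Running this as the service user shows quickly which side of the trade-off a given box is on.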

My recommendation

Use SERVICE_USER=root on this box. XO's own codebase keeps assuming root (import, and you already saw it with encryption/XenStore), so non-root is a recurring fight against upstream for marginal hardening. Root is fully supported, it's what the official XO appliance ships, and it makes the buttons you want work with no manual package steps. Keep non-root only if hardening that box is a hard requirement and you're fine never using the in-app import installer.

-

RE: Install XO from sources.

While looking into the following issue - https://xcp-ng.org/forum/topic/11976/trying-to-enable-v2v-and-difficulty-adding-nbdinfo-on-xo-6/10

I found a few issues with my install script... Looking for recommendations on the fix approach.

I am noticing some confusion or inconsistencies in the documentation: some areas suggest using root and others a non-root account. Part of these issues would not exist if the account used were root or had root access.

The two failures

Reason 1 — privileges (SERVICE_USER=xo-service, non-root):

XO's button runs apt-get install ..., make install, and ldconfig with no sudo (confirmed in esxi.mjs source). As xo-service, those commands are permission-denied: it can't apt-install, can't write to /usr/local/..., can't run ldconfig. XO's own dependency checker even says V2V requires root (UID 0), which is why your sample-xo-config.cfg:56 already warns "V2V import requires root."

So yes, your instinct is correct. With SERVICE_USER=xo-service, the button cannot succeed no matter what.

But Reason 2 — build tools absent:

Even if you set SERVICE_USER=root and click the button, it will then try apt-get install -y git dh-autoreconf pkg-config make libxml2-dev ocaml libc-bin and compile from GitLab. That will work if the box has internet and apt is healthy, but it's a multi-minute source compile pulling ~hundreds of MB (ocaml!) every time, done live inside the web request. Pre-staging those packages via the installer makes the button fast and reliable instead of a long live compile.

So, is the fix "just set SERVICE_USER=root"?

To make the button function: yes, that is the necessary fix. Pre-installing build deps is an optional reliability/speed improvement on top.

Given your answers, here's my refined proposal (minimal, docs-aligned):

Proposed changes

- Add INSTALL_V2V_DEPS=false opt-in to sample-xo-config.cfg. When true, the installer pre-stages XO's exact build-dep list (git dh-autoreconf pkg-config make libxml2-dev ocaml libc-bin) on apt systems only, so the button compiles quickly instead of installing them live. Default false → no ocaml bloat on normal installs.

- Root guard (your "Warn only" preference): When INSTALL_V2V_DEPS=true and SERVICE_USER is non-root, print a prominent warning:

  "VMware V2V import requires xo-server to run as root (per XO docs). The in-XO 'install nbdinfo' button runs apt-get/make install without sudo and will fail as user '$SERVICE_USER'. Set SERVICE_USER=root to use V2V import."

  …and continue (don't abort).

- RHEL/dnf: if INSTALL_V2V_DEPS=true on a non-apt system, log "VMware V2V import is only officially supported on Debian 12/13 per XO docs - skipping V2V dependency setup." and do nothing.

- Keep the existing /usr/local/lib/vddk dir creation (it's correct: XO untars VDDK there), and don't touch APT contrib/multiverse or pre-compile nbdkit (XO compiles from source itself; distro packages are never used by the button).

- Docs: README row for INSTALL_V2V_DEPS + a sharpened note in sample-config that V2V needs SERVICE_USER=root, linking the official guide.

This stays strictly within what XO's own code does, scopes the heavy deps behind opt-in, and surfaces the real root-user requirement you correctly identified.
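As a rough illustration of the proposal, here is a minimal sketch of how the opt-in, the warn-only root guard, and the non-apt skip could fit together. INSTALL_V2V_DEPS and SERVICE_USER come from the proposal; the function names, the package-manager detection, and the message wording are assumptions, and the install step is only printed so the sketch is safe to run.

```shell
#!/usr/bin/env bash
# Sketch only: function names are hypothetical, not taken from the install script.
INSTALL_V2V_DEPS="${INSTALL_V2V_DEPS:-false}"
SERVICE_USER="${SERVICE_USER:-xo-service}"

# Build-dependency list quoted in the proposal.
V2V_BUILD_DEPS="git dh-autoreconf pkg-config make libxml2-dev ocaml libc-bin"

# Decide what to do; prints one of: skip, unsupported, install.
v2v_plan() {
    local enabled="$1" pkg_manager="$2"
    if [ "$enabled" != "true" ]; then
        echo "skip"
    elif [ "$pkg_manager" != "apt" ]; then
        echo "unsupported"
    else
        echo "install"
    fi
}

# Warn on stderr, but never abort, when the service user is not root.
v2v_root_warning() {
    local user="$1"
    if [ "$user" != "root" ]; then
        echo "WARNING: V2V import needs xo-server running as root; the in-XO installer will fail as user '$user'." >&2
    fi
}

# Assumption: the presence of apt-get is enough to call the system an apt system.
detect_pkg_manager() {
    if command -v apt-get >/dev/null 2>&1; then echo "apt"; else echo "other"; fi
}

main() {
    case "$(v2v_plan "$INSTALL_V2V_DEPS" "$(detect_pkg_manager)")" in
        skip) ;;
        unsupported)
            echo "V2V import is only officially supported on Debian 12/13; skipping V2V dependency setup." ;;
        install)
            v2v_root_warning "$SERVICE_USER"
            # Printed rather than executed so the sketch has no side effects.
            echo "would run: apt-get install -y $V2V_BUILD_DEPS" ;;
    esac
}

main
```

Keeping the decision in one small function makes the three outcomes easy to cover in bats tests without touching apt.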

Shall I implement this? If yes, I'll make the edits and run shellcheck/bats afterward to verify nothing breaks. (I have not edited anything yet.)

Prior to looking into this issue, I was trying to switch from a standard user to root, but that broke a lot of things and will need rework. I don't believe it would be an issue if root were used to initially deploy XO.

-

RE: Trying to enable v2v and difficulty adding nbdinfo on xo 6

@CGB

Are you using XOA or XO from sources? If from sources, whose script did you use to deploy? Also, if from source, what commit are you on?

-

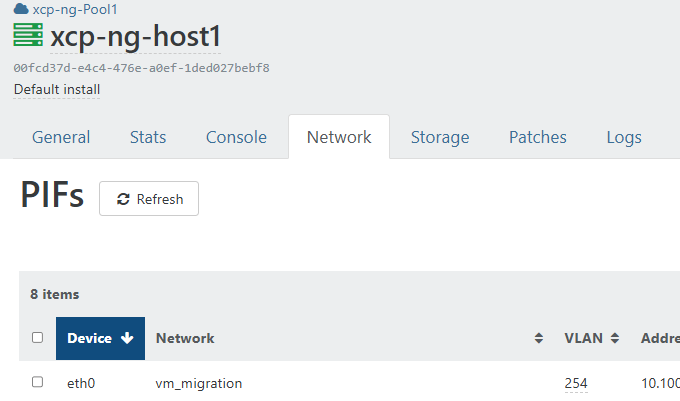

RE: XCP-ng 8.3 updates announcements and testing

Applied patches at work. 3 pools updated with zero issues.

-

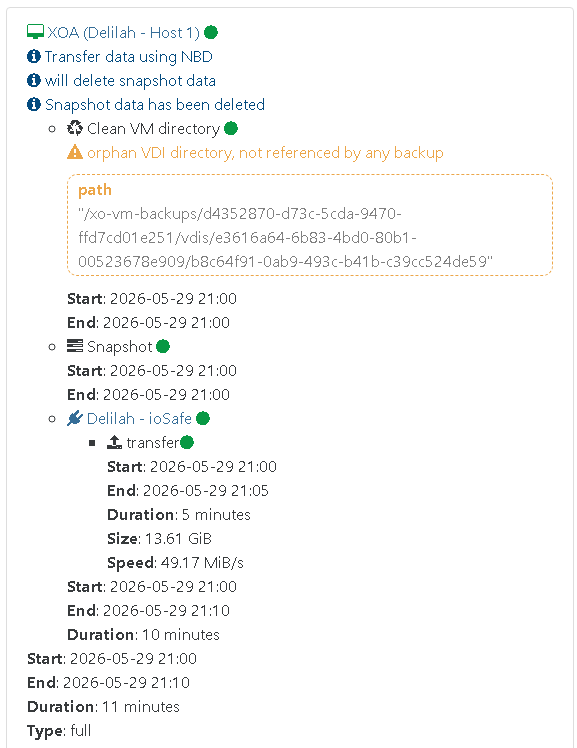

RE: broken backup in XOA 6.5 ? orphan VDI Directory, not referenced by any backup

I have updated my XOA and proxies. I did not see the warning on the next round of backups. Will continue to monitor now that patches are installed.

-

RE: XCP-ng 8.3 updates announcements and testing

    [09:43 xcp-ng-haznrrtw ~]# nslookup vates.com 8.8.8.8
    -bash: nslookup: command not found
    [10:21 xcp-ng-haznrrtw ~]# yum install bind-utils -y
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    Resolving Dependencies
    --> Running transaction check
    ---> Package bind-utils.x86_64 32:9.9.4-63.1.xcpng8.3 will be installed
    --> Processing Dependency: bind-libs = 32:9.9.4-63.1.xcpng8.3 for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: libbind9.so.90()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: libdns.so.100()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: libisc.so.95()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: libisccc.so.90()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: libisccfg.so.90()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Processing Dependency: liblwres.so.90()(64bit) for package: 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64
    --> Running transaction check
    ---> Package bind-libs.x86_64 32:9.9.4-63.1.xcpng8.3 will be installed
    --> Finished Dependency Resolution
    Dependencies Resolved
    =========================================================================================================================================
     Package Arch Version Repository Size
    =========================================================================================================================================
    Installing:
     bind-utils x86_64 32:9.9.4-63.1.xcpng8.3 xcp-ng-updates 126 k
    Installing for dependencies:
     bind-libs x86_64 32:9.9.4-63.1.xcpng8.3 xcp-ng-updates 948 k
    Transaction Summary
    =========================================================================================================================================
    Install 1 Package (+1 Dependent package)
    Total download size: 1.0 M
    Installed size: 3.0 M
    Downloading packages:
    (1/2): bind-libs-9.9.4-63.1.xcpng8.3.x86_64.rpm | 948 kB 00:00:00
    (2/2): bind-utils-9.9.4-63.1.xcpng8.3.x86_64.rpm | 126 kB 00:00:01
    -----------------------------------------------------------------------------------------------------------------------------------------
    Total 855 kB/s | 1.0 MB 00:00:01
    Running transaction check
    Running transaction test
    Transaction test succeeded
    Running transaction
     Installing : 32:bind-libs-9.9.4-63.1.xcpng8.3.x86_64 1/2
     Installing : 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64 2/2
     Verifying : 32:bind-libs-9.9.4-63.1.xcpng8.3.x86_64 1/2
     Verifying : 32:bind-utils-9.9.4-63.1.xcpng8.3.x86_64 2/2
    Installed:
     bind-utils.x86_64 32:9.9.4-63.1.xcpng8.3
    Dependency Installed:
     bind-libs.x86_64 32:9.9.4-63.1.xcpng8.3
    Complete!
    [10:22 xcp-ng-haznrrtw ~]# nslookup vates.com 8.8.8.8
    Server: 8.8.8.8
    Address: 8.8.8.8#53
    Non-authoritative answer:
    Name: vates.com
    Address: 172.67.205.118
    Name: vates.com
    Address: 104.21.52.238
    openssl_link.c:132: INSIST(dst__memory_pool != ((void *)0)) failed, back trace
    #0 0x7f419d8790e7 in ??
    #1 0x7f419d87903a in ??
    #2 0x7f419e486780 in ??
    #3 0x7f419cc91df6 in ??
    #4 0x7f419ccd8464 in ??
    #5 0x7f419ccd8732 in ??
    #6 0x7f419ccd7b8d in ??
    #7 0x7f419b442bd9 in ??
    #8 0x7f419b442c27 in ??
    #9 0x7f419b42b44c in ??
    #10 0x405818 in ??
    Aborted (core dumped)

Edit - No further issues to report at this time.

-

RE: XCP-ng 8.3 updates announcements and testing

Updated both hosts at home with no issues. Will continue to test.

    Dependency Installed:
     libtasn1-tools.x86_64 0:4.21.0-1.xcpng8.3
    Updated:
     blktap.x86_64 0:3.55.5-9.1.xcpng8.3
     dmidecode.x86_64 1:3.6-3.xcpng8.3
     edk2.x86_64 0:20220801-1.7.11.1.xcpng8.3
     forkexecd.x86_64 0:26.1.4-3.2.xcpng8.3
     fuse-libs.x86_64 0:2.9.2-10.1.xcpng8.3
     ipxe.noarch 0:20121005-1.0.8.xcpng8.3
     kernel.x86_64 0:4.19.19-8.0.46.5.xcpng8.3
     libtasn1.x86_64 0:4.21.0-1.xcpng8.3
     libtasn1-devel.x86_64 0:4.21.0-1.xcpng8.3
     message-switch.x86_64 0:26.1.4-3.2.xcpng8.3
     openssh.x86_64 0:9.8p1-1.2.4.xcpng8.3
     openssh-clients.x86_64 0:9.8p1-1.2.4.xcpng8.3
     openssh-server.x86_64 0:9.8p1-1.2.4.xcpng8.3
     qcow-stream-tool.x86_64 0:26.1.4-3.2.xcpng8.3
     qemu.x86_64 2:4.2.1-5.2.18.1.xcpng8.3
     rrdd-plugins.x86_64 0:26.1.4-3.2.xcpng8.3
     slang.x86_64 0:2.3.2-11.1.xcpng8.3
     sm-cli.x86_64 0:26.1.4-3.2.xcpng8.3
     squeezed.x86_64 0:26.1.4-3.2.xcpng8.3
     systemtap-runtime.x86_64 0:4.0-5.3.xcpng8.3
     varstored.x86_64 0:1.3.2-2.1.xcpng8.3
     varstored-guard.x86_64 0:26.1.4-3.2.xcpng8.3
     varstored-tools.x86_64 0:1.3.2-2.1.xcpng8.3
     vhd-tool.x86_64 0:26.1.4-3.2.xcpng8.3
     wsproxy.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-core.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-nbd.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-rrd2csv.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-storage-script.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-tests.x86_64 0:26.1.4-3.2.xcpng8.3
     xapi-xe.x86_64 0:26.1.4-3.2.xcpng8.3
     xcp-networkd.x86_64 0:26.1.4-3.2.xcpng8.3
     xcp-rrdd.x86_64 0:26.1.4-3.2.xcpng8.3
     xenopsd.x86_64 0:26.1.4-3.2.xcpng8.3
     xenopsd-cli.x86_64 0:26.1.4-3.2.xcpng8.3
     xenopsd-xc.x86_64 0:26.1.4-3.2.xcpng8.3

-

RE: broken backup in XOA 6.5 ? orphan VDI Directory, not referenced by any backup

Maybe because my backup was a full vs delta?

-

RE: broken backup in XOA 6.5 ? orphan VDI Directory, not referenced by any backup

I just noticed something similar on my side, with only 2 VMs. Both VMs are on the main pool with XOA. Pools connected via proxy don't show this error.

I still have a support tunnel open for an active support ticket. Home lab does not seem to have this issue currently.

-

RE: XO-Lite back to 0.19

I just re-ran on main host...

    [12:27 xcp-ng-haznrrtw ~]# yum clean metadata
    Loaded plugins: fastestmirror
    Cleaning repos: xcp-ng-base xcp-ng-updates
    6 metadata files removed
    6 sqlite files removed
    0 metadata files removed
    [12:29 xcp-ng-haznrrtw ~]# yum update
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-base: mirrors.xcp-ng.org
    Excluding mirror: updates.xcp-ng.org
     * xcp-ng-updates: mirrors.xcp-ng.org
    xcp-ng-base/signature | 473 B 00:00:00
    xcp-ng-base/signature | 3.0 kB 00:00:00 !!!
    xcp-ng-updates/signature | 473 B 00:00:00
    xcp-ng-updates/signature | 3.0 kB 00:00:00 !!!
    (1/2): xcp-ng-updates/primary_db | 1.4 MB 00:00:00
    (2/2): xcp-ng-base/primary_db | 3.9 MB 00:00:01
    No packages marked for update
    [12:29 xcp-ng-haznrrtw ~]# reboot

XO-CE - Commit dec0c

XO-Lite still v0.19.0 (4ffd1)

-

RE: Some dashboard loading issues with v6

@acebmxer Hi,

Thanks to your help we were able to identify an issue with Redis that we think is the source of the v6 dashboard loading issue.

Could you try and checkout the fix_redis_encryption_issue branch, rebuild xo and restart? This should solve the 401 issues.

Switched back to Master branch and made some changes to my install script.

- add diagnostics for missing XO 6 web UI build artifacts

- Plain bash [[ -f ]] fails silently on unreadable paths owned by SERVICE_USER, causing false-positive missing-artifact warnings. Switch all file/dir tests and grep calls to use sudo.
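To illustrate the second change, here is a minimal sketch of a file test that does not mistake "unreadable" for "missing". It is a variation on the change described above, not the script's actual code: instead of sending every test through sudo, it falls back to sudo only when the parent directory cannot be searched. The function name is hypothetical, and passwordless sudo for the calling user is an assumption (sudo -n fails instead of prompting when it is not configured).

```shell
#!/usr/bin/env bash
# Hypothetical helper. Usage: path_test -f /some/file   or   path_test -d /some/dir
path_test() {
    local flag="$1" path="$2" parent
    # Fast path: the plain test already succeeds.
    test "$flag" "$path" && return 0
    # If the parent directory is searchable, the negative answer is trustworthy.
    parent="$(dirname -- "$path")"
    [ -x "$parent" ] && return 1
    # Otherwise the failure may be a permission artifact; ask again as root.
    sudo -n test "$flag" "$path"
}

# Example with a placeholder path (not a real XO location):
if path_test -f "/path/to/build/artifact"; then
    echo "artifact present"
else
    echo "artifact missing"
fi
```

The trade-off is that most checks never invoke sudo, while paths hidden behind SERVICE_USER-only directories still get a reliable answer.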

    [SUCCESS] Xen Orchestra built successfully
    [INFO] Build verification passed: dist — all JS chunks present.
    [INFO] Build verification passed: dist — all JS chunks present.
    [INFO] Creating systemd service...
    [SUCCESS] Systemd service created and enabled
    [INFO] Configuring sudo for xo-service (mount/umount/findmnt)...
    [SUCCESS] Sudo configured for xo-service (mount, umount, findmnt)
    [INFO] Applying security hardening...
    [INFO] Starting xo-server service...
    [INFO] Waiting for Xen Orchestra to become ready (up to 60s)...
    [INFO] Not ready yet (attempt 1/10), retrying in 6s...
    [SUCCESS] Xen Orchestra is ready (HTTPS on port 443)
    [SUCCESS] Update completed successfully!
    [INFO] New commit: 0f29421627c7

v6 Dashboard still loading correctly. Thank you for the fix.
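For anyone curious about the readiness wait in that log (10 attempts, 6s apart), a loop like the following would produce similar messages. This is only a sketch under assumptions: the function names and the probed URL are hypothetical, and the real script may check readiness differently.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command until it succeeds or attempts run out.
# Usage: wait_until_ready ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_until_ready() {
    local attempts="$1" delay="$2" i=1
    shift 2
    while [ "$i" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        if [ "$i" -lt "$attempts" ]; then
            echo "[INFO] Not ready yet (attempt $i/$attempts), retrying in ${delay}s..."
            sleep "$delay"
        fi
        i=$((i + 1))
    done
    return 1
}

# Example probe. The URL is an assumption; -k skips certificate checks,
# which a self-signed setup would need.
probe_xo() { curl -ksSf -o /dev/null "https://localhost/"; }

# Usage (commented out so the sketch has no side effects):
# wait_until_ready 10 6 probe_xo && echo "[SUCCESS] Xen Orchestra is ready"
```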

-

RE: XOA - Memory Usage

@acebmxer back to work

Thank you for your patience and help on this. I feel that it's not the same issue, with the abrupt increase. We will try our best to also fix this one.

Yes, I replied to the ticket also. Yes, you can do what is needed to XOA.

Just looked at memory and it dropped....