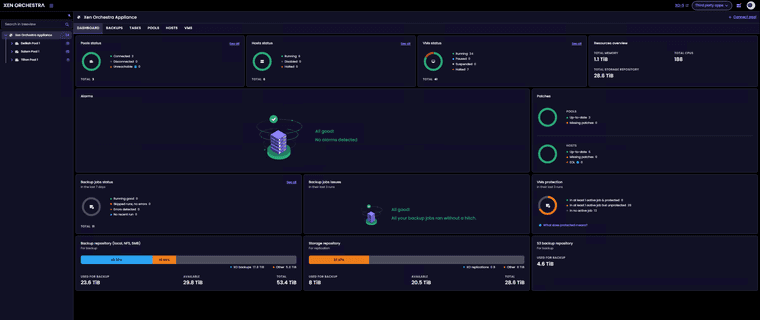

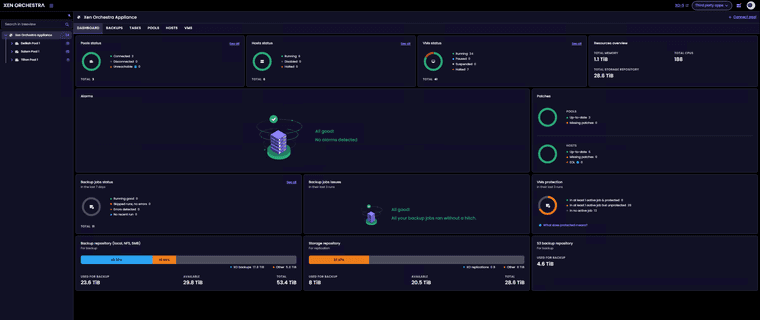

Finally just finished our migration from VMware to XCP-NG.

VMs - 34, a mix of Windows Server and Ubuntu Linux.

Pools - 3

Hosts - 6

Dell R660 - 2

Dell R640 - 4

@rzr Installed the latest update and no issues to report. I don't have any 2 TB+ drives in my VMs. Converting from VHD to qcow2 and backups are all working.

Installed updates, will report back.

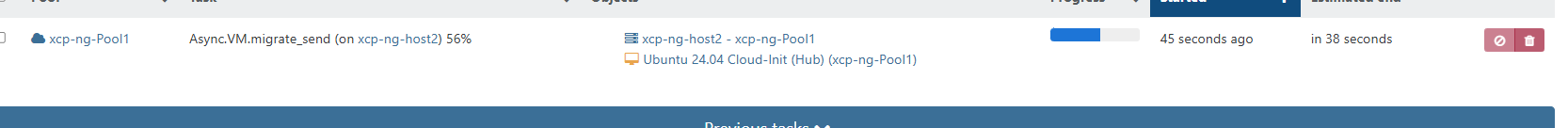

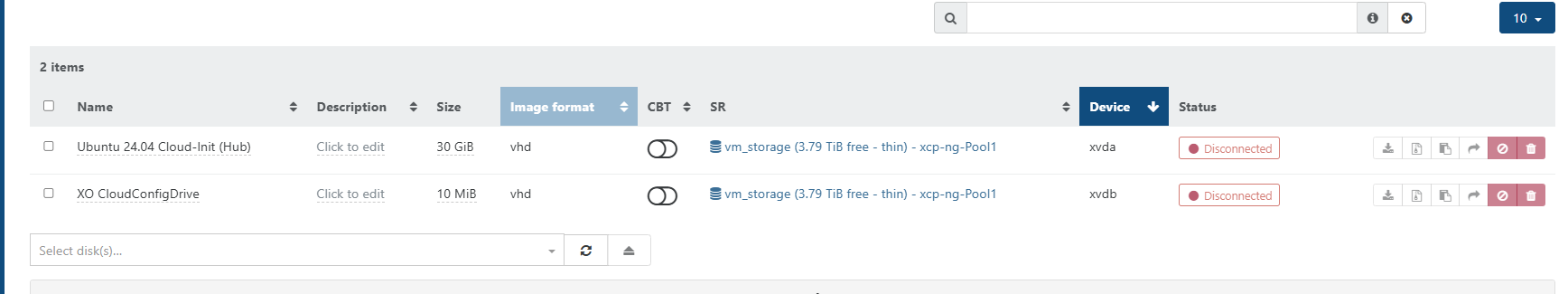

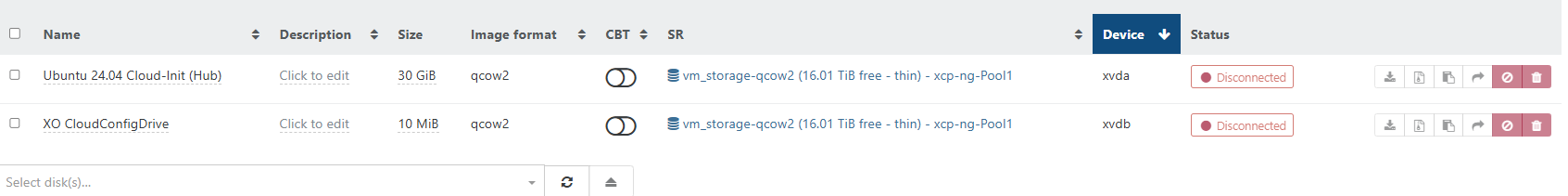

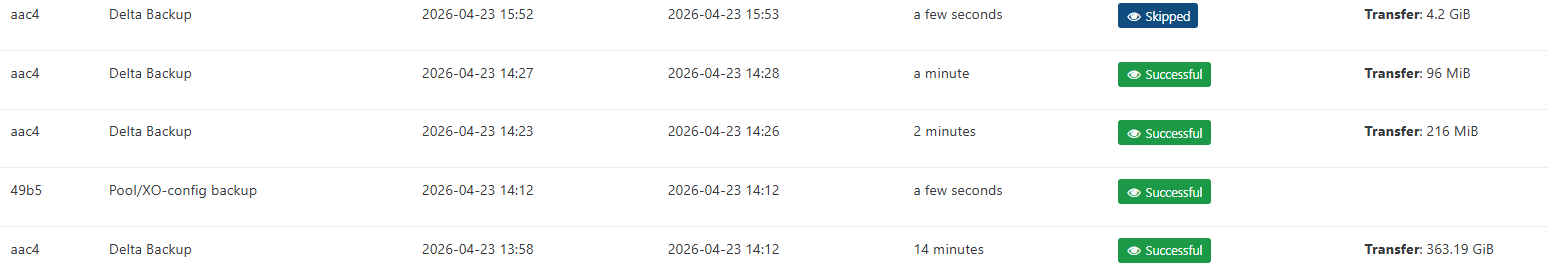

Update - I had migrated the VMs back over to VHD prior to the update release. I have now migrated 2 VMs back over to qcow2 and the initial backup ran successfully. Ran a second delta backup and that was also successful, without issues. Backups happen very quickly now. But it appears the % and progress bar are working.

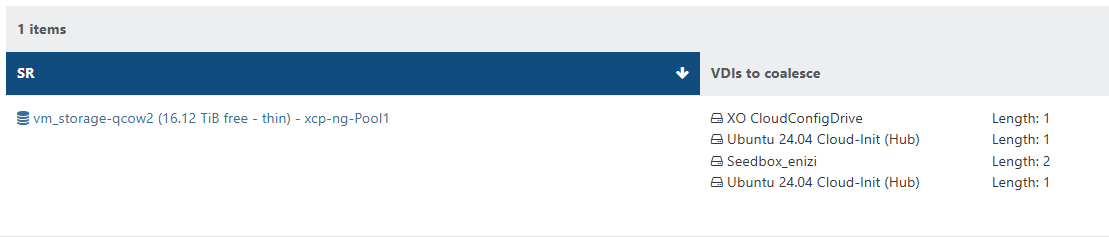

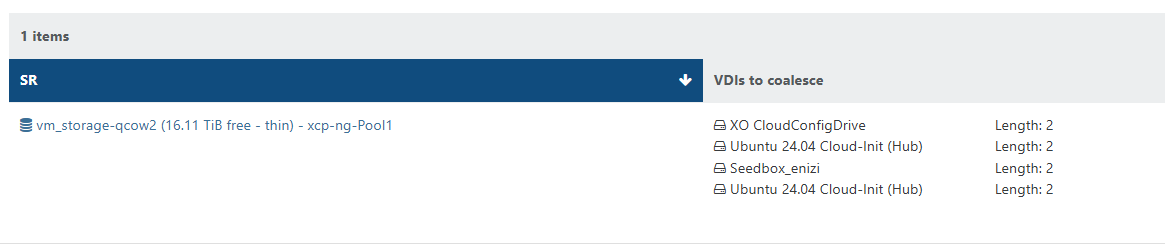

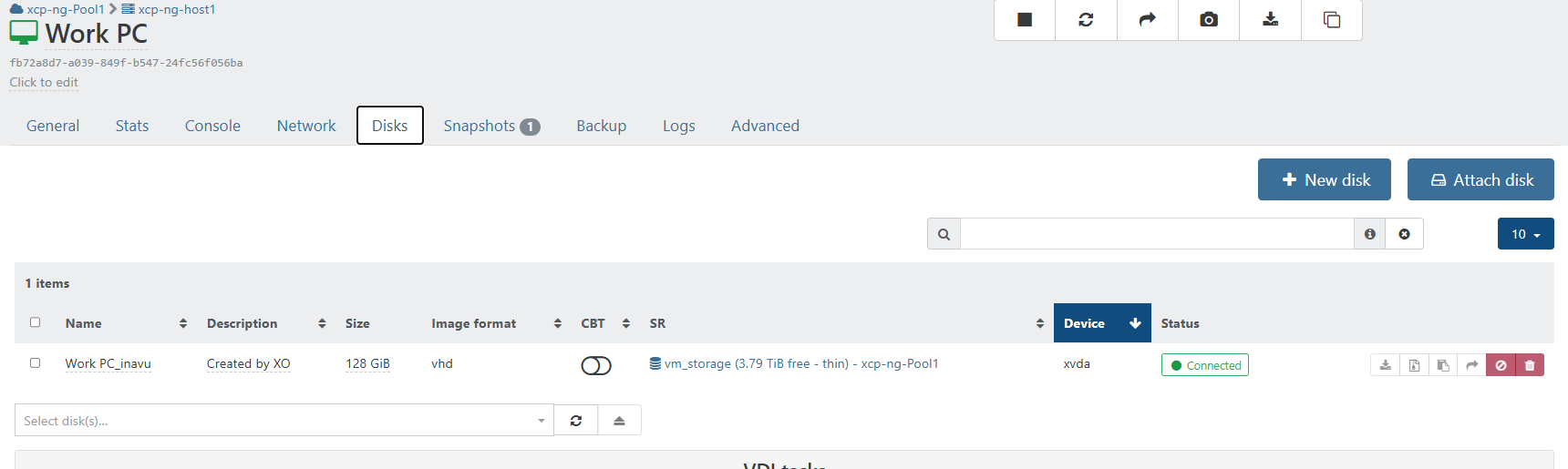

When CBT is enabled on a VM's VDIs, they show up as needing to be coalesced. For VMs without CBT enabled, the VDIs are coalesced.

Will continue to monitor.

Once the coalescence hits 2 for the VM, the VM is skipped from future backups until it is cleared. (Shutting down the VM will allow the coalescence to happen.)

2026-04-23T19_52_34.694Z - backup NG.txt

@olivierlambert Created new topic.

Updated my two AMD Ryzen hosts in my home lab. No issues with the update; will monitor and report back any issues.

OK, that was in XO from sources, and yes, the issue is fixed...

Confirmed it is working in XOA 6.4.1.

While this project is more for myself it is open to others to use. Please use at your own risk. As always review the script before using in a production environment. Please leave any feedback or suggestions. https://github.com/acebmxer/install_xen_orchestra/

https://forums.pozzatech.com - You can read more about this project and other things over in my personal forums.

Automated installation and management of Xen Orchestra from source.

Update 5/15/26 - This update only applies to anyone using an older version of the script. See the note below. Also added an option to Adjust Xen Orchestra Memory Allocation. It looks at the system memory and suggests a setting for XO based on the official documentation.

⚠️ Upgrading from an earlier version of this script? Read this first.

This version bumps the config schema to v2 (adds PUBLIC_URL and ENCRYPT_REDIS_CREDENTIALS) and corrects two config.toml generation bugs. Your xo-config.cfg is migrated automatically and non-destructively, but the corrected /etc/xo-server/config.toml is only written by --reconfigure.

Run --reconfigure once before resuming normal updates:

./install-xen-orchestra.sh --reconfigure

This regenerates config.toml with the fixes (your old file is backed up first; data in /var/lib/xo-server is untouched). It is strongly recommended if you set both REDIRECT_TO_HTTPS=true and REVERSE_PROXY_TRUST — that combination previously produced a duplicate [http] section and silently dropped one of the settings.

Afterwards, run --update as normal for routine XO updates — --update does not need to be preceded by --reconfigure again.

| Function | CLI Flag | Description |
|---|---|---|
| Install | --install | Fresh install of Xen Orchestra |
| Update | --update | Update existing installation (with backup) |
| Restore | --restore | Restore from a previous backup |
| Rebuild | --rebuild | Fresh clone + clean build, preserves settings |
| Reconfigure | --reconfigure | Apply config changes without rebuilding |
| XO Proxy | --proxy | Deploy XO Proxy to a Xen pool master |
| Edit Config | (menu only) | Open xo-config.cfg in your preferred editor |
| Rename Config | (menu only) | Rename sample-xo-config.cfg to xo-config.cfg |

Running without flags launches an interactive menu. All flags also work directly:

./install-xen-orchestra.sh # interactive menu

./install-xen-orchestra.sh --update # run update directly

./install-xen-orchestra.sh --help # show all options

Running the script with no arguments opens a two-column menu with keyboard navigation:

╔══════════════════════════════════════════════════════════════════════════════════╗

║ Install Xen Orchestra from Sources Setup and Update ║

╚══════════════════════════════════════════════════════════════════════════════════╝

Current Script Commit : 693f4 (Branch: main)

Master Script Commit : 693f4 (Branch: main)

Current XO Commit : a1b2c (Branch: master)

Master XO Commit : d4e5f (Branch: master)

Current Node : v24.15.0

──────────────────────────────────────────────────────────────────────────────────

▸ [✓] Install Xen Orchestra [ ] Reconfigure Xen Orchestra

[ ] Update Xen Orchestra [ ] Rebuild Xen Orchestra

[ ] Rename Sample-xo-config.cfg [ ] Edit xo-config.cfg

[ ] Install XO Proxy [ ] Restore Backup

[ ] Adjust Xen Orchestra Memory Allocation

──────────────────────────────────────────────────────────────────────────────────

Selected: 1

↑↓←→ Navigate SPACE Select/Deselect ENTER Confirm Q Quit

Select one or more items with SPACE, then press ENTER to run them.

git clone https://github.com/acebmxer/install_xen_orchestra.git

cd install_xen_orchestra

cp sample-xo-config.cfg xo-config.cfg

nano xo-config.cfg # edit to your liking

./install-xen-orchestra.sh

Do NOT run with sudo. Run as a normal user with sudo privileges; the script handles sudo internally.

If xo-config.cfg doesn't exist, it will be created automatically from the sample.

All settings live in xo-config.cfg. See sample-xo-config.cfg for full documentation of every option.

Key settings:

| Option | Default | Description |
|---|---|---|
| HTTP_PORT | 80 | HTTP port |
| HTTPS_PORT | 443 | HTTPS port |
| INSTALL_DIR | /opt/xen-orchestra | Installation directory |
| GIT_BRANCH | master | Git branch or tag |
| NODE_VERSION | 24.15.0 | Node.js version |
| SERVICE_USER | xo-service | Service user (set to root for VMware V2V import) |
| BACKUP_KEEP | 5 | Number of backups to retain |
| BIND_ADDRESS | 0.0.0.0 | Bind address |
| REVERSE_PROXY_TRUST | false | Trust X-Forwarded headers from proxy IP |

Note on BACKUP_KEEP rotation: The retention policy only applies to backups created by the current version of the script. Backups made by older script versions may use a different naming convention and will not be counted or pruned by the rotation logic. If you are upgrading from an older version, manually review your backup directory (BACKUP_DIR in config, default /var/lib/xo-backups) and remove any legacy-named archives you no longer need.
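The rotation behaviour described in the note can be sketched roughly as follows. This is only an illustration and not the script's actual code: the function name prune_old_backups and the xo-backup-*.tar.gz naming pattern are assumptions made for the example. It shows why an archive with a different (legacy) name is never counted or pruned: it simply does not match the pattern.

```shell
# prune_old_backups DIR KEEP
# Hypothetical sketch: keep the newest KEEP archives matching the assumed
# pattern xo-backup-*.tar.gz in DIR and delete the older ones.
# Files with any other name (e.g. legacy archives) are left untouched.
prune_old_backups() {
    local dir="$1" keep="$2"
    # ls -t lists newest first; tail skips the first KEEP entries,
    # so only the older archives reach the rm loop.
    ls -1t "$dir"/xo-backup-*.tar.gz 2>/dev/null \
        | tail -n +"$((keep + 1))" \
        | while IFS= read -r f; do
            rm -f -- "$f"
        done
}
```

For example, prune_old_backups /var/lib/xo-backups 5 would leave the five most recent matching archives in place.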

After installation, access the web interface at https://your-server-ip.

Default credentials: username admin@admin.net, password admin. Change the default password immediately after first login.

Before applying an update, the script queries the Xen Orchestra REST API for active tasks (e.g. running backups, VM exports). If any are found, the update is aborted to prevent data loss or corruption.

Only admin-level XO accounts can access the REST API. Authentication is resolved in priority order:

| Priority | Method | Source |
|---|---|---|
| 1 | Auth token | XO_TASK_CHECK_TOKEN in xo-config.cfg |
| 2 | Credentials | XO_TASK_CHECK_USER / XO_TASK_CHECK_PASS in xo-config.cfg |
| 3 | Interactive | Prompted at runtime (press Enter to skip) |

It is recommended to create a dedicated XO web UI account solely for the task check (e.g. task-checker@local.net). You are free to use any admin account you choose, but a dedicated account is the safest approach.

Tokens are more secure than storing a password — they can be revoked independently and expire after 30 days by default.

curl -X POST -u 'task-checker@local.net:yourpassword' https://localhost/rest/v0/users/me/authentication_tokens -k

Copy the id field from the response and add it to xo-config.cfg:

XO_TASK_CHECK_TOKEN=UlTBEnFeL12XocK-7Qx-DKvOYbPn0eG7Z2oMvOniNjg

Alternatively, store the account credentials directly:

XO_TASK_CHECK_USER=task-checker@local.net

XO_TASK_CHECK_PASS=changeme

If neither token nor credentials are configured, the script will prompt interactively during each update.
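The priority order above can be pictured with a small sketch. The variable names come from xo-config.cfg; the function name resolve_task_check_auth is made up for this example and the real script may structure this differently.

```shell
# resolve_task_check_auth
# Hypothetical helper: print which authentication method would be used
# for the task check, following the documented priority order.
resolve_task_check_auth() {
    if [ -n "${XO_TASK_CHECK_TOKEN:-}" ]; then
        # Priority 1: a token is configured.
        echo "token"
    elif [ -n "${XO_TASK_CHECK_USER:-}" ] && [ -n "${XO_TASK_CHECK_PASS:-}" ]; then
        # Priority 2: both user and password are configured.
        echo "credentials"
    else
        # Priority 3: nothing usable is configured, so prompt at runtime.
        echo "interactive"
    fi
}
```

Note that in this sketch a user name without a password falls through to the interactive prompt; whether the real script does the same is an assumption.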

| Variable | Description |
|---|---|
| XO_DEBUG=1 | Enable debug mode (set -x) |
| XO_NO_SELF_UPDATE=1 | Skip automatic script self-update |

Check service logs:

sudo journalctl -u xo-server -n 50

If the build is broken, rebuild (takes a backup first):

./install-xen-orchestra.sh --rebuild

The Yarn build is memory-intensive. On hosts with less than 2 GB RAM the Node.js process can be killed by the kernel OOM killer mid-build, leaving an incomplete install.

Add or increase swap to give the build room:

sudo fallocate -l 2G /swapfile

sudo chmod 600 /swapfile

sudo mkswap /swapfile

sudo swapon /swapfile

Re-run the install or --rebuild after the swap is active. To make it permanent across reboots, add /swapfile none swap sw 0 0 to /etc/fstab.
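To decide up front whether a host is likely to need the extra swap, something like the following could be used. It is a rough sketch, not part of the script: the function names are invented here, and the 2 GB threshold is simply taken from the paragraph above and treated as 2 GiB (2097152 kB).

```shell
# total_ram_kb: print MemTotal in kB as reported by /proc/meminfo (Linux only).
total_ram_kb() {
    awk '/^MemTotal:/ {print $2}' /proc/meminfo
}

# is_low_memory KB: succeed (exit 0) if KB is below 2 GiB (2097152 kB).
is_low_memory() {
    [ "$1" -lt 2097152 ]
}
```

On a Linux host, running is_low_memory "$(total_ram_kb)" && echo "consider adding swap before building" would print the hint only on machines under the threshold.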

On hosts without internet access (or with strict egress firewall rules) the NodeSource repository setup script fails because it cannot reach keyserver.ubuntu.com or deb.nodesource.com.

Option A — pre-download and import the key manually, then copy the .deb/.rpm packages to the host.

Option B — set NODE_VERSION to a specific patch version (e.g. 24.15.0) in xo-config.cfg. The script will then download a pre-built binary directly from nodejs.org instead of using the NodeSource package repository.
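For reference when pre-staging files for Option B, nodejs.org publishes its pre-built Linux tarballs under a predictable path. The helper below only builds that URL; the function name is hypothetical, and whether the script downloads this exact file (for example .tar.xz rather than .tar.gz) is an assumption.

```shell
# node_tarball_url VERSION [ARCH]
# Print the nodejs.org download URL for a pre-built Linux tarball.
# VERSION may be given with or without a leading "v"; ARCH defaults to x64.
node_tarball_url() {
    local version="${1#v}"
    local arch="${2:-x64}"
    printf 'https://nodejs.org/dist/v%s/node-v%s-linux-%s.tar.xz\n' \
        "$version" "$version" "$arch"
}
```

For example, node_tarball_url 24.15.0 prints the URL for the x64 build of the default NODE_VERSION.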

git reports "dubious ownership" and exits

Recent versions of Git refuse to operate on a repository owned by a different user than the one running the command. This can happen when sudo is used inconsistently or when the install directory was created by root but the script is run as a normal user.

Fix it by resetting ownership to match your SERVICE_USER:

sudo chown -R xo-service:xo-service /opt/xen-orchestra

Replace xo-service with the value of SERVICE_USER in xo-config.cfg. Re-running the script afterwards will resolve the rest.

On SELinux-enforcing systems the xo-server service may fail to bind ports or access network resources. Check for AVC denials:

sudo ausearch -m avc -ts recent | grep xo-server

If denials are present, generate and apply a local policy module:

sudo ausearch -m avc -ts recent | audit2allow -M xo-server-local

sudo semodule -i xo-server-local.pp

Alternatively, set the service to permissive mode while investigating:

sudo semanage permissive -a xo_server_t

audit2allow and semanage are provided by the policycoreutils-python-utils package on RHEL/Rocky/Alma.

This project is licensed under the MIT License. Xen Orchestra itself is licensed under AGPL-3.0.

[info] Updating Xen Orchestra from 'cb85e44ae' to 'eed3d72f7'

First log is from automatic schedule before latest update.

2026-02-17T18_00_00.012Z - backup NG.txt

Latest log is from after the update. Backup completed successfully.

2026-02-17T18_22_52.199Z - backup NG.txt

Updated the AMD Ryzen pool at home and updated two Intel Dell R660 and R640 pools at work. No issues to report back.

Thanks for the reply. The update was successful. The Windows Server 2025 ISO now installs properly.

At work I was not able to install the default certs for UEFI because one failed to download. I ran these updates and was then able to successfully install the certs to the host.

Installed updates on the home lab. No issues to report initially, other than nslookup still being an issue.

[10:54 xcp-ng-haznrrtw ~]# nslookup vates.com 8.8.8.8

Server: 8.8.8.8

Address: 8.8.8.8#53

Non-authoritative answer:

Name: vates.com

Address: 104.21.52.238

Name: vates.com

Address: 172.67.205.118

openssl_link.c:132: INSIST(dst__memory_pool != ((void *)0)) failed, back trace

#0 0x7f163cd960e7 in ??

#1 0x7f163cd9603a in ??

#2 0x7f163d9a3780 in ??

#3 0x7f163c1aedf6 in ??

#4 0x7f163c1f5464 in ??

#5 0x7f163c1f5732 in ??

#6 0x7f163c1f4b8d in ??

#7 0x7f163a95fbd9 in ??

#8 0x7f163a95fc27 in ??

#9 0x7f163a94844c in ??

#10 0x405818 in ??

Aborted (core dumped)

[12:50 xcp-ng-haznrrtw ~]#

Updated both hosts at home with no issues. Will continue to test.

Dependency Installed:

libtasn1-tools.x86_64 0:4.21.0-1.xcpng8.3

Updated:

blktap.x86_64 0:3.55.5-9.1.xcpng8.3 dmidecode.x86_64 1:3.6-3.xcpng8.3

edk2.x86_64 0:20220801-1.7.11.1.xcpng8.3 forkexecd.x86_64 0:26.1.4-3.2.xcpng8.3

fuse-libs.x86_64 0:2.9.2-10.1.xcpng8.3 ipxe.noarch 0:20121005-1.0.8.xcpng8.3

kernel.x86_64 0:4.19.19-8.0.46.5.xcpng8.3 libtasn1.x86_64 0:4.21.0-1.xcpng8.3

libtasn1-devel.x86_64 0:4.21.0-1.xcpng8.3 message-switch.x86_64 0:26.1.4-3.2.xcpng8.3

openssh.x86_64 0:9.8p1-1.2.4.xcpng8.3 openssh-clients.x86_64 0:9.8p1-1.2.4.xcpng8.3

openssh-server.x86_64 0:9.8p1-1.2.4.xcpng8.3 qcow-stream-tool.x86_64 0:26.1.4-3.2.xcpng8.3

qemu.x86_64 2:4.2.1-5.2.18.1.xcpng8.3 rrdd-plugins.x86_64 0:26.1.4-3.2.xcpng8.3

slang.x86_64 0:2.3.2-11.1.xcpng8.3 sm-cli.x86_64 0:26.1.4-3.2.xcpng8.3

squeezed.x86_64 0:26.1.4-3.2.xcpng8.3 systemtap-runtime.x86_64 0:4.0-5.3.xcpng8.3

varstored.x86_64 0:1.3.2-2.1.xcpng8.3 varstored-guard.x86_64 0:26.1.4-3.2.xcpng8.3

varstored-tools.x86_64 0:1.3.2-2.1.xcpng8.3 vhd-tool.x86_64 0:26.1.4-3.2.xcpng8.3

wsproxy.x86_64 0:26.1.4-3.2.xcpng8.3 xapi-core.x86_64 0:26.1.4-3.2.xcpng8.3

xapi-nbd.x86_64 0:26.1.4-3.2.xcpng8.3 xapi-rrd2csv.x86_64 0:26.1.4-3.2.xcpng8.3

xapi-storage-script.x86_64 0:26.1.4-3.2.xcpng8.3 xapi-tests.x86_64 0:26.1.4-3.2.xcpng8.3

xapi-xe.x86_64 0:26.1.4-3.2.xcpng8.3 xcp-networkd.x86_64 0:26.1.4-3.2.xcpng8.3

xcp-rrdd.x86_64 0:26.1.4-3.2.xcpng8.3 xenopsd.x86_64 0:26.1.4-3.2.xcpng8.3

xenopsd-cli.x86_64 0:26.1.4-3.2.xcpng8.3 xenopsd-xc.x86_64 0:26.1.4-3.2.xcpng8.3

I had an issue with the rolling pool update on 1 of my 3 pools at work. It was the last pool to be updated. Host 1 updated with no issues. VMs then stopped migrating off host 2, so its updates could not complete.

Support ticket opened - Ticket#7758427. Found 1 VM with its CPU stuck at 100% and unresponsive. Force rebooted the VM and proceeded with the updates on host 2.

While updating my host I see 30 updates...

=========================================================================================================================================

Package Arch Version Repository Size

=========================================================================================================================================

Updating:

forkexecd x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 2.5 M

gpumon x86_64 24.1.0-84.1.xcpng8.3 xcp-ng-testing 1.6 M

intel-microcode noarch 20260416-1.xcpng8.3 xcp-ng-testing 14 M

kernel x86_64 4.19.19-8.0.46.3.xcpng8.3 xcp-ng-testing 29 M

message-switch x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 4.6 M

qcow-stream-tool x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 1.4 M

rrdd-plugins x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 9.8 M

sm-cli x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 4.8 M

squeezed x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 1.9 M

varstored-guard x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 4.9 M

vhd-tool x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 4.9 M

wsproxy x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 1.1 M

xapi-core x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 30 M

xapi-nbd x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 3.1 M

xapi-rrd2csv x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 3.0 M

xapi-storage-script x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 2.5 M

xapi-tests x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 5.0 M

xapi-xe x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 1.5 M

xcp-featured x86_64 1.2.1-1.xcpng8.3 xcp-ng-testing 1.5 M

xcp-networkd x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 4.8 M

xcp-rrdd x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 3.6 M

xen-dom0-libs x86_64 4.17.6-8.1.xcpng8.3 xcp-ng-testing 703 k

xen-dom0-tools x86_64 4.17.6-8.1.xcpng8.3 xcp-ng-testing 2.0 M

xen-hypervisor x86_64 4.17.6-8.1.xcpng8.3 xcp-ng-testing 2.4 M

xen-libs x86_64 4.17.6-8.1.xcpng8.3 xcp-ng-testing 65 k

xen-tools x86_64 4.17.6-8.1.xcpng8.3 xcp-ng-testing 46 k

xenopsd x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 1.4 M

xenopsd-cli x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 2.0 M

xenopsd-xc x86_64 26.1.4-3.1.xcpng8.3 xcp-ng-testing 5.3 M

xo-lite noarch 0.21.0-1.xcpng8.3 xcp-ng-testing 2.6 M

Transaction Summary

=========================================================================================================================================

Upgrade 30 Packages

Total download size: 152 M

Well, I just ran a rolling pool update on my 2-host home lab. I wanted to watch to make sure there were no issues. Of course I got distracted, only to come back and find both hosts updated and running. Will continue to monitor.

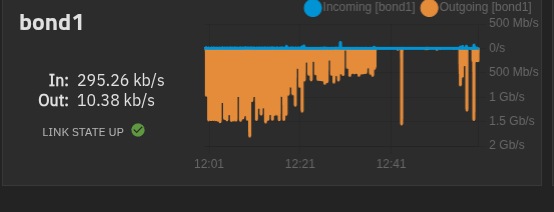

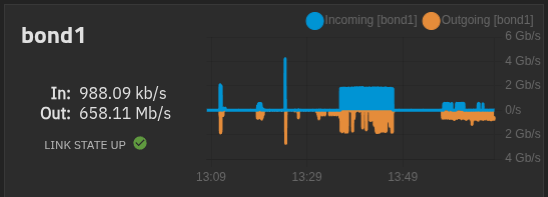

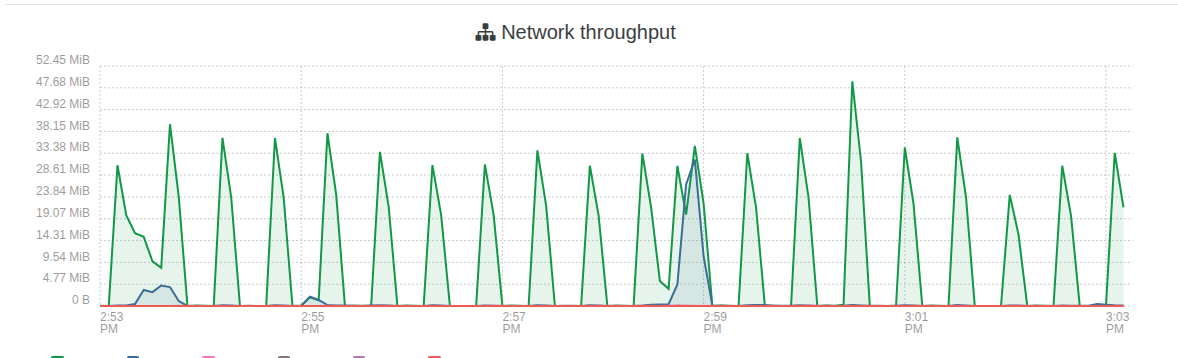

One thing I am noticing after these updates is that I am seeing a lot more traffic on my TrueNAS. The gaps are me shutting down VMs and starting each one to find the problem VMs, but it seems to be any VM. I didn't notice any performance issues; I just noticed the graph in TrueNAS, which is usually a flat line with the occasional spike here and there, not the big mess on the left in the first screenshot. 5 VMs running.

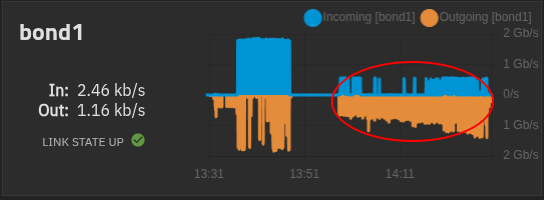

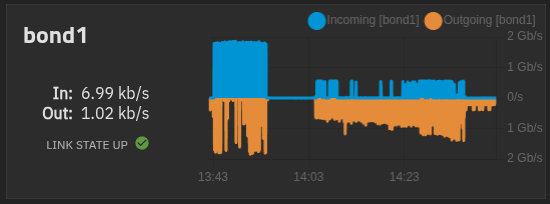

My XOA is on local storage on the master host and was used for these tests.

All VMs are powered off except XOA and XO.

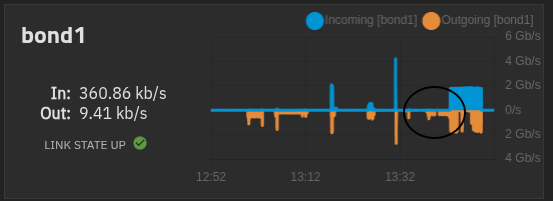

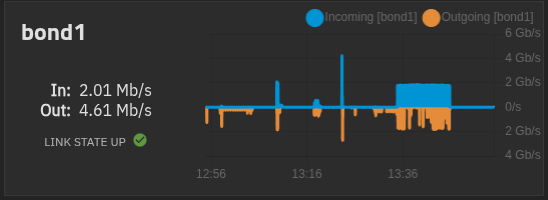

Here I booted the XO VM and left it idle. The spike after is me live migrating it back to the VHD SR, after which it was left idle.

The gap in the middle is XO idle on the VHD-only SR.

Live migrated XO back to the qcow2-only SR.

Migration back to qcow2 completed.

Left XO idle after migration to the qcow2 SR.

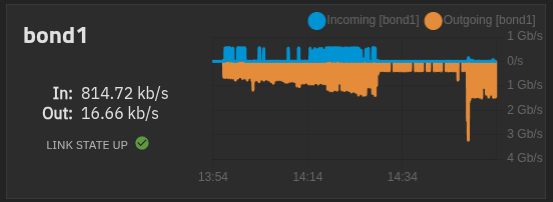

Again, all VMs booted and idle...

From the master host.

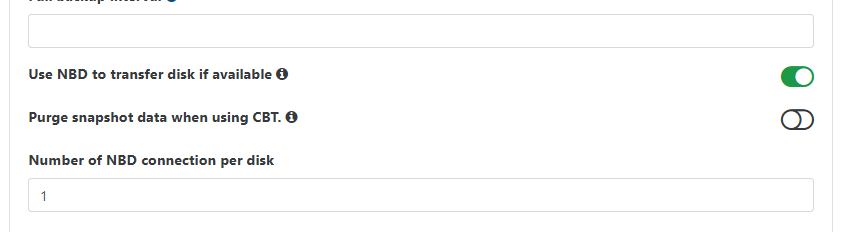

I thought I had pressed save after editing the backup job to enable purging snapshots when using CBT.

After re-enabling it and clicking save, all is good now. No errors and no stuck coalescence after backups.

I have been able to migrate all VMs over to qcow2. I think shutting down the VMs and booting them back up helped. Also, something from this thread might have had an impact: https://xcp-ng.org/forum/topic/12087/backups-with-qcow2-enabled/9

Edit - If @olivierlambert wants to make this post its own thread, I'm OK with that.

Updated the home lab, and live conversion to qcow2 seems to work.

The process was a little long, 30ish minutes, but it worked and did not fail.

Kubuntu 26.04 LTS beta

Tried a Windows VM and got an error - 2026-04-16T13_04_47.720Z - XO.txt

Gives: VDI has CBT enabled...

Windows 11 VM.

From the backup job...

Just tried another VM that was powered off. Regular Ubuntu 24.04, successful...