@manilx yup, same here

we evacuate & roll patches manually because RPU no longer reliably completes a full pool update nowadays

our pools have 3 hosts at most, so it is still easy to do manually

those with 5-6+ hosts must find it more painful

@marcoi perhaps a toolstack restart would clear the phantom task?

but a toolstack restart should already have happened automatically at the end of patching the master...

@Bastien-Nollet Sorry, I had to revert to 6.3.3 and didn't save the logs...

but as far as I remember, it was not a UI issue: even the logs didn't contain any transfer information

@pierrebrunet I'll report back as soon as we test 6.5.1 on this same client's infrastructure

@Milenko if this is a permanent migration path for you, you can force-start the replica VM, no problem; you can even delete the replica snapshot.

Just disable the job on the source side, otherwise it will create a new replica VM

if it is just a test boot of the replica, fast-clone it and start the fast clone (beware of duplicate IP addresses while doing so: either the source VM should be halted, or the replica clone should be started without network)

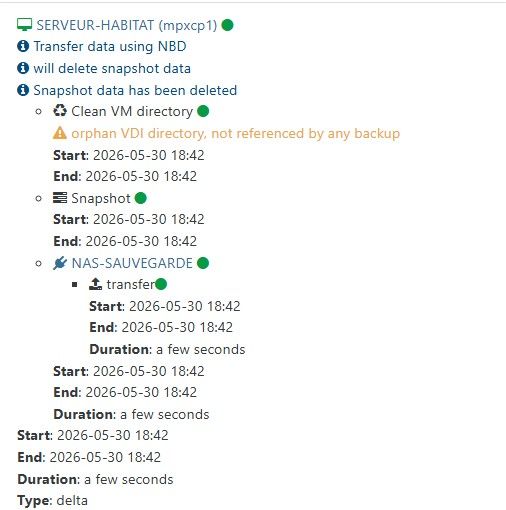

@acebmxer had a combination of errors on some VMs ("fell back to full" and "not referenced by any backup"), but no transfer size either.

can't screenshot it anymore: I rolled back to XOA 6.3 for the time being, using the snapshot taken just before the upgrade

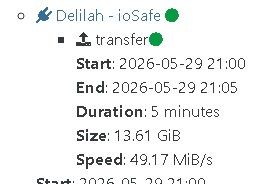

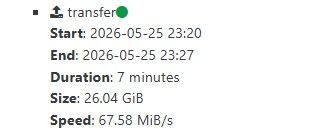

@acebmxer but you have SIZE and SPEED

same error, but I don't even have this information.

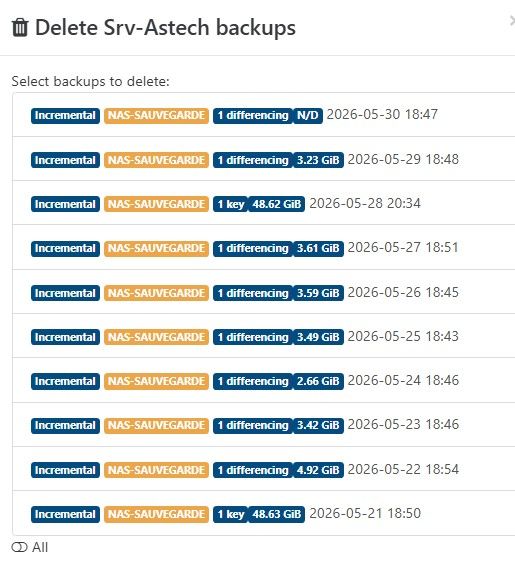

did a full VM restore from an "N/D" backup point, it was successful

so it seems the backup is backing up, buuuuut not reporting the correct transfer size

halp

some VMs do take more than 30 seconds, and do not have the error

still no transfer size

and still no apparent size

is the XO backup working?!

Hi,

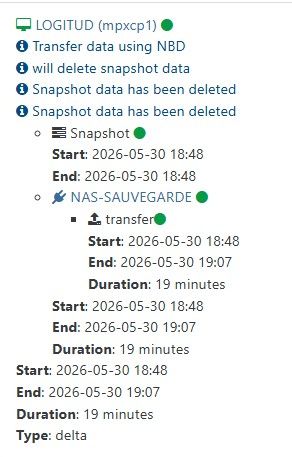

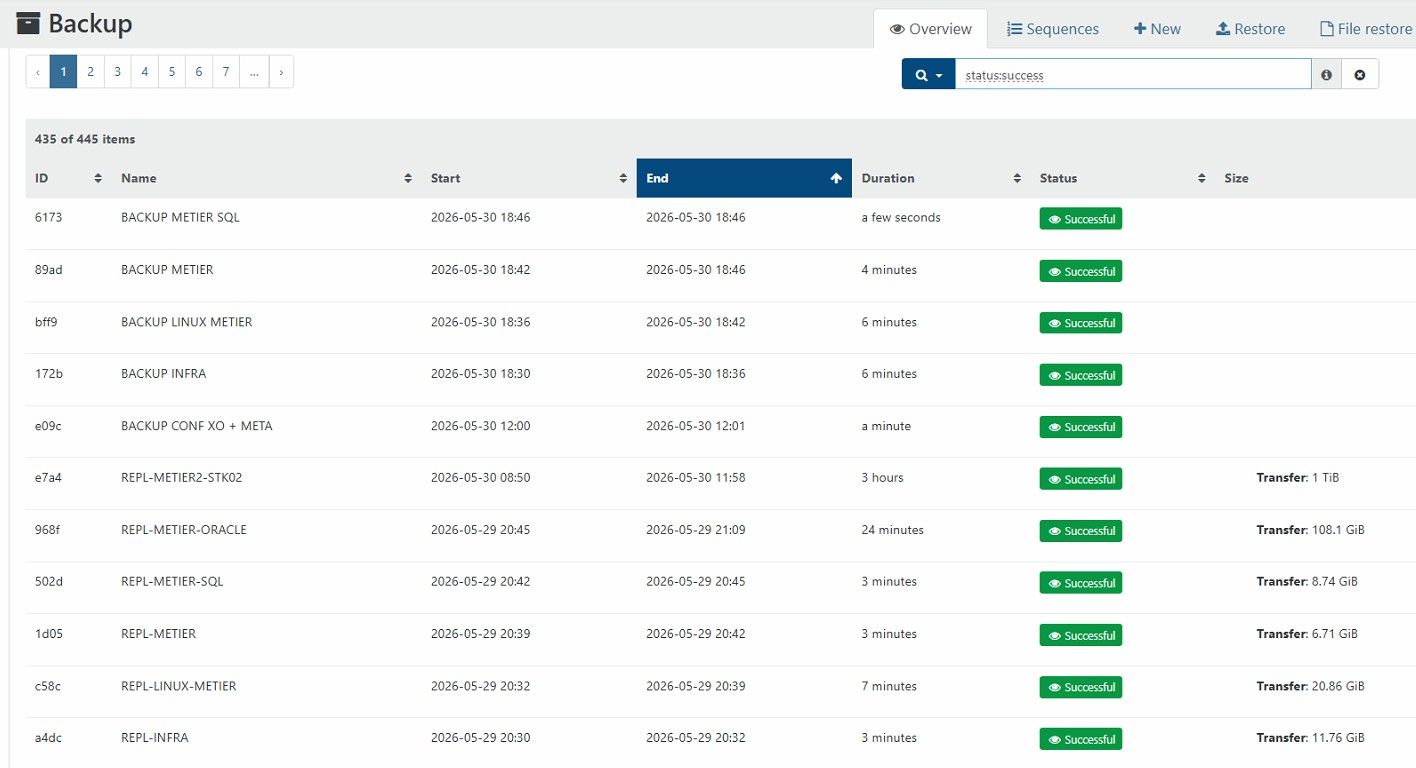

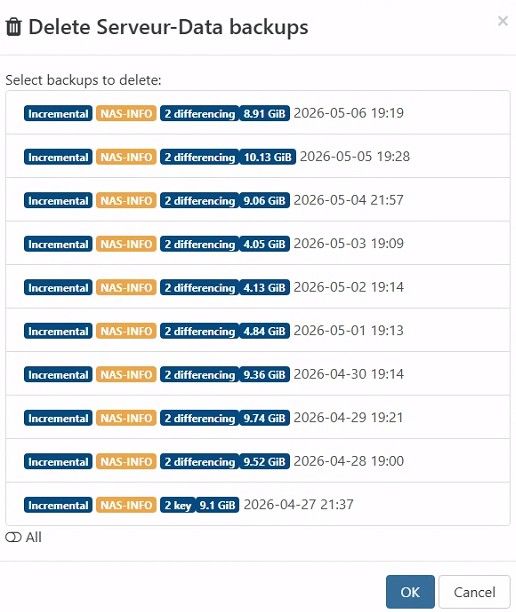

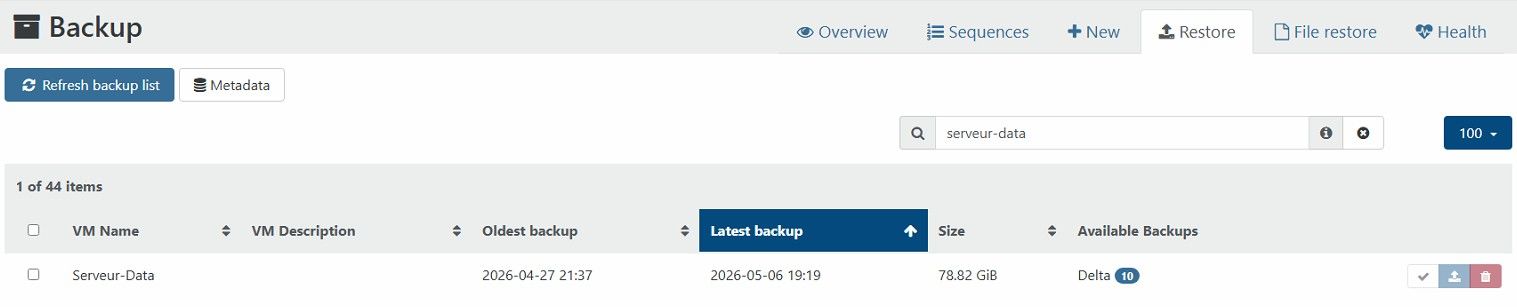

Today we updated from XOA 6.3.3 to 6.5

XCP-ng is fully up to date.

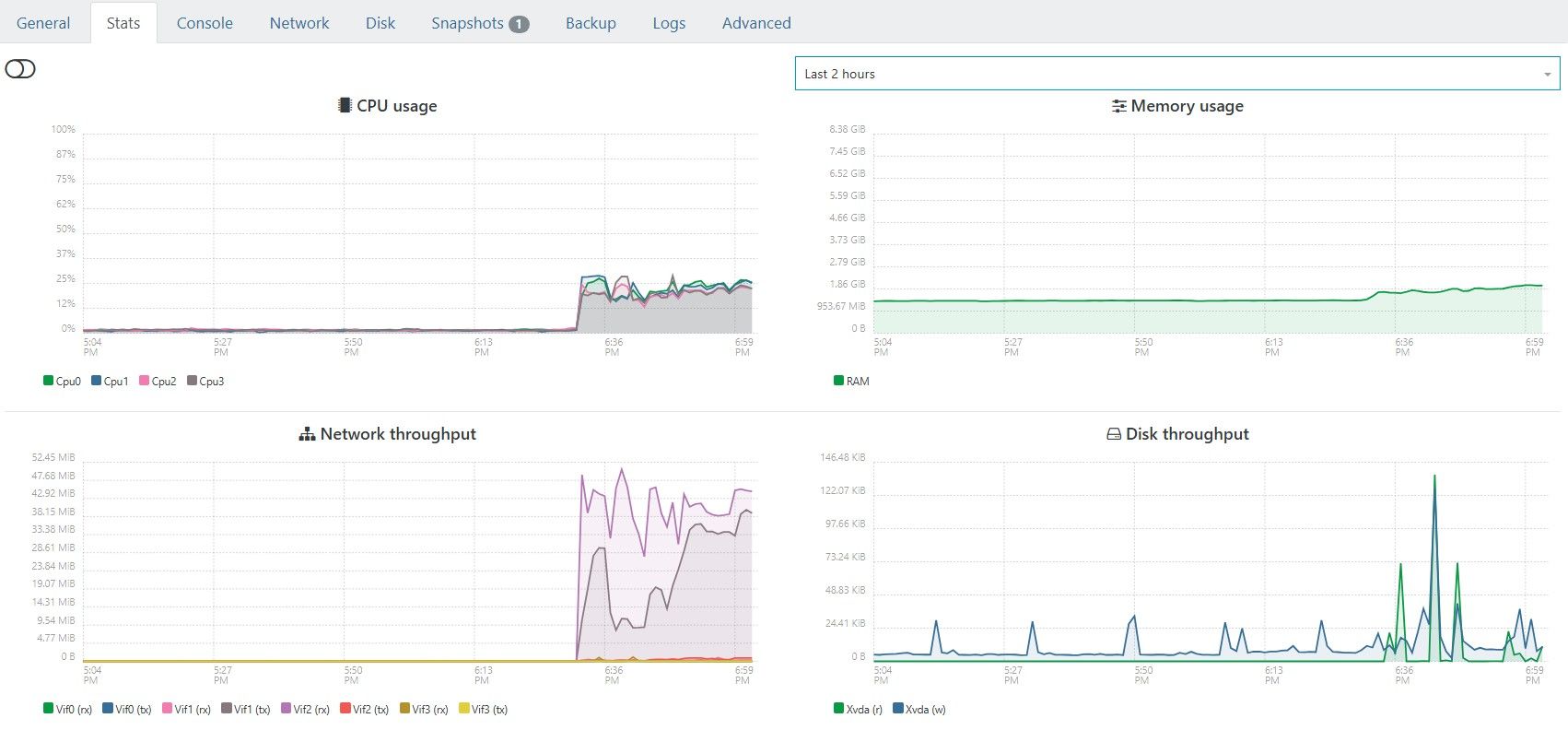

Since then, the backups are successful but do not seem to transfer any data?

Comparison between yesterday and today:

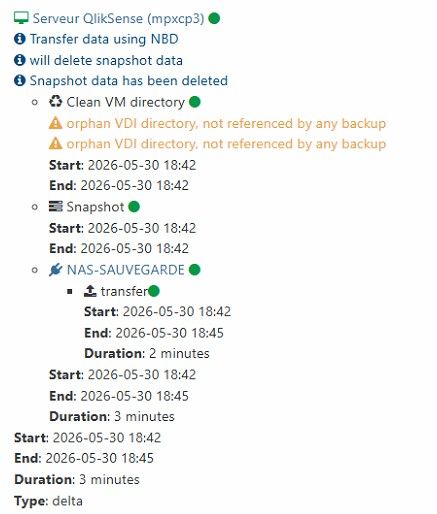

Each job looks like this:

the restore point size is N/D!

XOA seems to be working

any idea @florent @bastien-nollet?

@tsukraw I think the bottleneck is indeed tapdisk and SMAPIv1.

We have a full 10 Gb WDM link between our primary and DR sites, and get the same transfer speeds as you on CR.

@nasheayahu Hi, you could pass through the controller hosting these disks, if it's independent of the disks hosting XCP-ng itself, I guess...

but that's not really recommended? perhaps someone has another solution

@LoTus111 nice, but could be better

you're missing the additional step in the TIPS section here:

https://docs.xen-orchestra.com/xo5/troubleshooting#memory

upgrading XOA to 8 GB of RAM is not enough, you also have to allow the XO services to use this additional RAM.

@johnnezero this could be a plugin in XOA !

@julienXOvates sounds promising !!

@gashorus in the last Veeam webinar they announced that the XCP-ng plugin would be out of beta with Veeam v13.1 (currently 13.0.1)...

date: June 2026.

it could appear when a host is selected in the tree view?

or as a tooltip when the cursor hovers over the name of the VM / host

the size is really 2.17 TB, but the key shows the last incremental's size

I'll wait for the patch, this is really a visual bug, the backup is working okay.

hello there, still XOA 6.3.3 here.

since the last big XO Backup & CR rework, we have an annoying bug

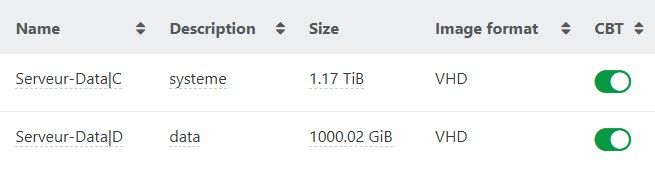

This VM is 2.17 TB

how can the KEY be 9.1 GB?

In fact the KEY size is correct at first, but once the retention of 10 points is reached, merging the last incremental into the first KEY point of the chain erases its size.

So in our restore view, the data sizes are all wrong, since they are based on the sum of this key + 9 incrementals
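To make the arithmetic concrete, here is a small hypothetical model of the behaviour described above, not XO code. It assumes retention is enforced by merging the oldest incremental into the key, and that the bug copies the merged incremental's size onto the key. `RestorePoint`, `build_chain`, `apply_retention` and `restore_view_total` are illustrative names, and the 9.1 GB incremental sizes are made up to match the screenshot.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class RestorePoint:
    label: str
    size_gb: float  # size displayed in the restore view (hypothetical model)
    is_key: bool


def build_chain(key_size_gb: float, inc_sizes_gb: List[float]) -> List[RestorePoint]:
    """One full (key) point followed by incrementals, oldest first."""
    chain = [RestorePoint("key", key_size_gb, True)]
    chain += [RestorePoint(f"inc{i + 1}", s, False) for i, s in enumerate(inc_sizes_gb)]
    return chain


def apply_retention(chain: List[RestorePoint], retention: int, buggy: bool) -> List[RestorePoint]:
    """Merge the oldest incremental into the key until at most `retention` points remain."""
    points = list(chain)  # work on a copy; the input chain is left untouched
    while len(points) > retention:
        key, oldest_inc = points[0], points[1]
        if buggy:
            # Assumed bug: the merged incremental's size overwrites the key's size.
            key = replace(key, size_gb=oldest_inc.size_gb)
        # Otherwise (simplified): the key is still a full image and keeps its full size;
        # blocks added by the merge are ignored in this model.
        points = [key] + points[2:]
    return points


def restore_view_total(points: List[RestorePoint]) -> float:
    """Sum of displayed sizes across the remaining restore points."""
    return sum(p.size_gb for p in points)
```

With `build_chain(2170.0, [9.1] * 10)` and a retention of 10, the expected model totals roughly 2251.9 GB (key + 9 incrementals), while the buggy one totals roughly 91 GB, with the key itself showing 9.1 GB.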

@florent could you do something about that?

@poddingue there is also a notion of appliance (a group of VMs)

https://docs.xcp-ng.org/appendix/cli_reference/#appliance-commands

where you can start/stop a group of VMs. I've never tried it, and there doesn't seem to be a boot order in the vApp either

if you have other SRs, migrating the VDIs/VMs is known to fix the "visual issue"

the bug just sets a metadata field on the VDI that makes the XOA web UI believe it's a snapshot, when it is not...

a dev told us in another thread that they are working on it, but I believe it will be a two-phase remediation: fixing the bug so that it doesn't happen again, and providing a way to script out this metadata on impacted VDIs

these are only guesses

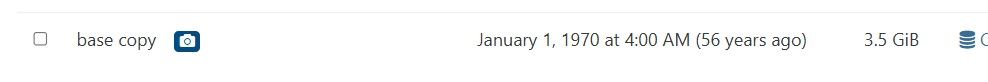

@AlexD2006 you are right, this is dangerous.

I also have them old & dusty base copies from the seventies

do not shoot them.