@probain Hello,

It's likely linked to the "List index out of range" bug.

That bug was linked to the SR scan failing to introduce the CBT metadata VDI into the XAPI database. Could you try running `xe sr-scan uuid=<SR UUID>` and then try disabling CBT again?

If that doesn't work, could you share the `/var/log/SMlog` from around the time you try to disable CBT?

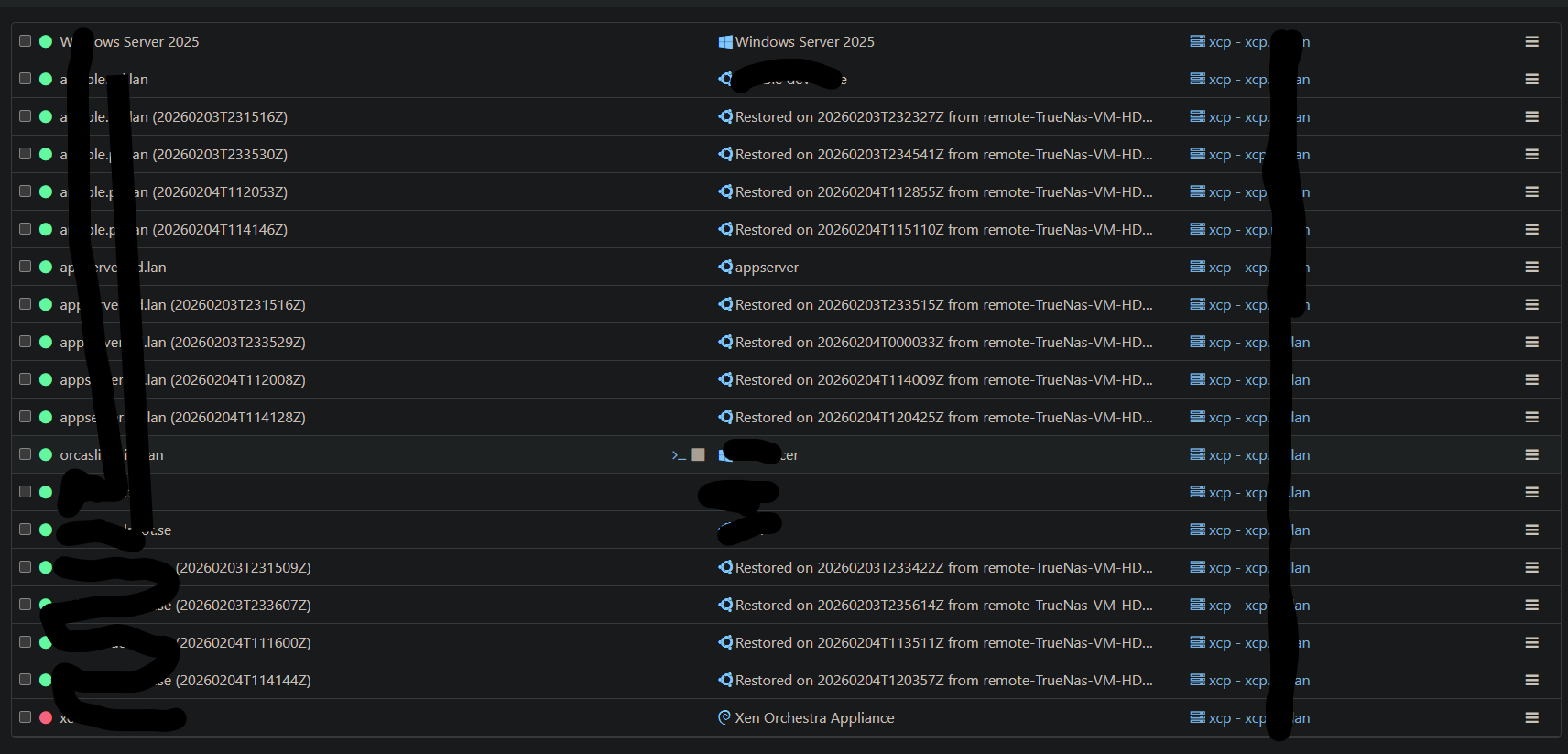

I've sent you a DM to share the logs. Unfortunately, I "solved" the issue by deleting all snapshots related to each VM, including the CBT ones. That made it possible to toggle CBT on the VDIs again.

But I've collected the logs for you.

This also seems like a good time to raise my suggestion: have a place at Vates where we could upload debug details, similar to how TrueNAS does it. I suggested it here: https://feedback.vates.tech/posts/69/suggesting-to-add-a-debug-file-option

I know, unusual, right? It was so stable that we left it as-is, waiting for people to report bugs, and nothing happened… We'll cut a 1.0 in the coming weeks/months.

XO 6: dedicated thread for all your feedback!