@danp I see your response to my concern was to add a block on the script being able to run on 8.2, without an update to the elif bug @anthoineb acknowledged. While I can remove your addition and fix that other bug myself, I just wanted to give you some feedback as to why I expressed the issue in the way I did - it wasn't because I feel an entitlement to anything, just sharing my perceptions to @antoineb

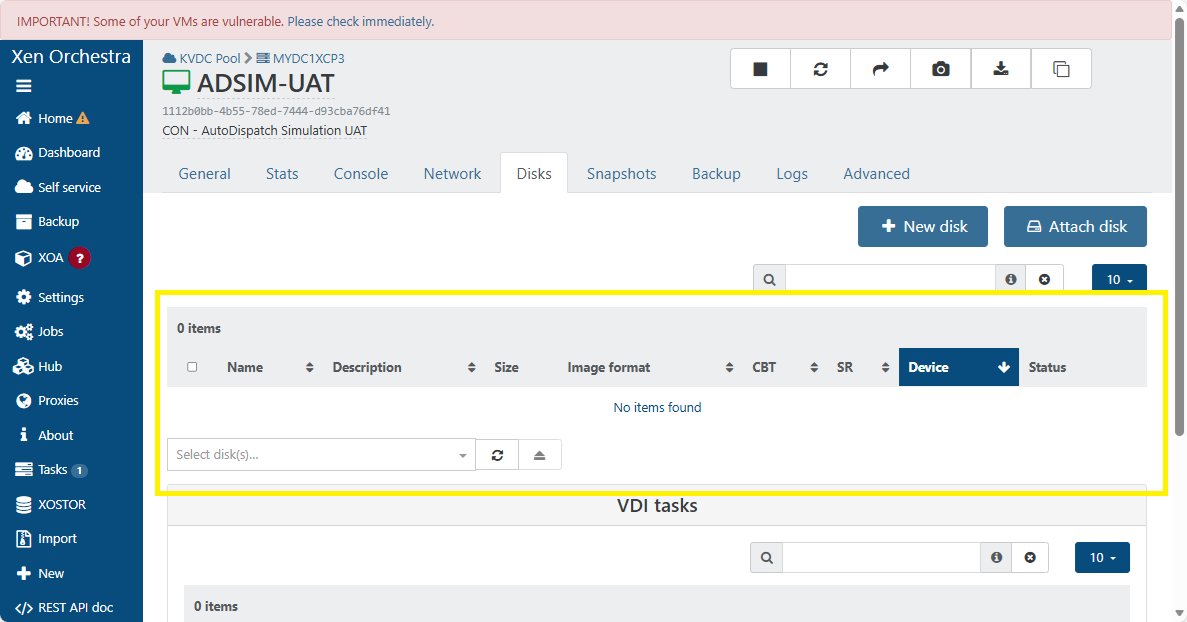

I have been a xen cli user for a couple of decades, give or take. I moved to xcp-ng with a lot of PV legacy. That was a blocker to using 8.3 straight away, otherwise I would have. The workflow I had established to update the VMs to HVM included adding a new volume for /boot and /boot/efi, and then switching boot OFF on the legacy disk. It was worrying when I went to complete the migration to HVM with the announced EOL of 8.2.1, that suddenly the disks I needed to work with, both legacy and new, had disappeared in XO so I couldn't follow my SOP to move the VMs to HVM, and then proceed to upgrade to 8.3.

Given how relatively widespread this issue seems to be, I am surprised that Vates wouldn't treat it as a reputational issue and want to help users to resolve it so that corporates can have confidence to move their cloud to xcp-ng platform, even if they are a startup or in the initial stages of a move and can't yet invest in Pro Support. I note you have a "Pro Support Team" badge, and understand that may not mean you work for Vates, but I gather offer Pro Support. Valuable service.

While I am not encouraging this thread to become a marketing channel, it would speak well for xcp-ng, Vates and the Pro Support community if this important issue was handled differently - even if it was "we have tested on 8.2.1 internally and should be able to fix it for you quickly and cost effectively if you join our X plan or request a quote on Y page."

I appreciate all you've shared and wasn't being dismissive of your recommendation to upgrade to 8.3, just the timing of this issue - arising right before the EOL of 8.2.1 made that a bit of a "chicken and egg problem".

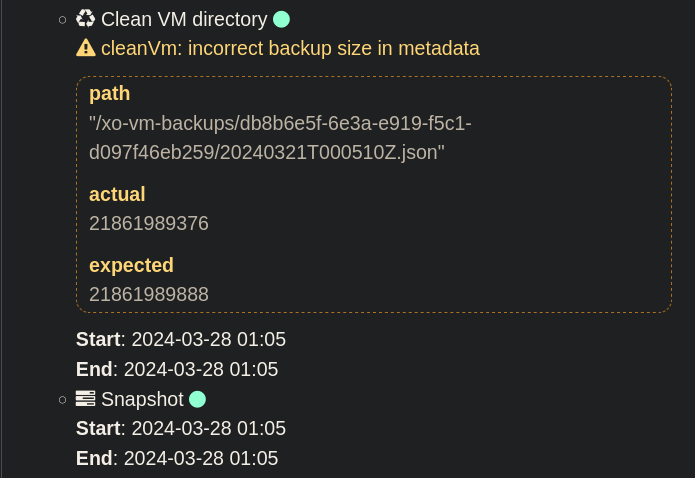

I gather from what you've said on this thread that you don't expect upgrading to 8.3 to be a problem with VDI in this state, so I guess I will just soldier on with creating an xcp-ng CLI SOP to upgrade the remaining PVs to HVM, upgrade to 8.3, and then use the script that has been shared - since you've now made it clear in the code that it shouldn't be run on 8.2.

I am thankful that it was shared as guidance, if not a fix in my situation.