After building a new XO with root and testing further, I have come to this conclusion:

Both things are true, and they're in tension

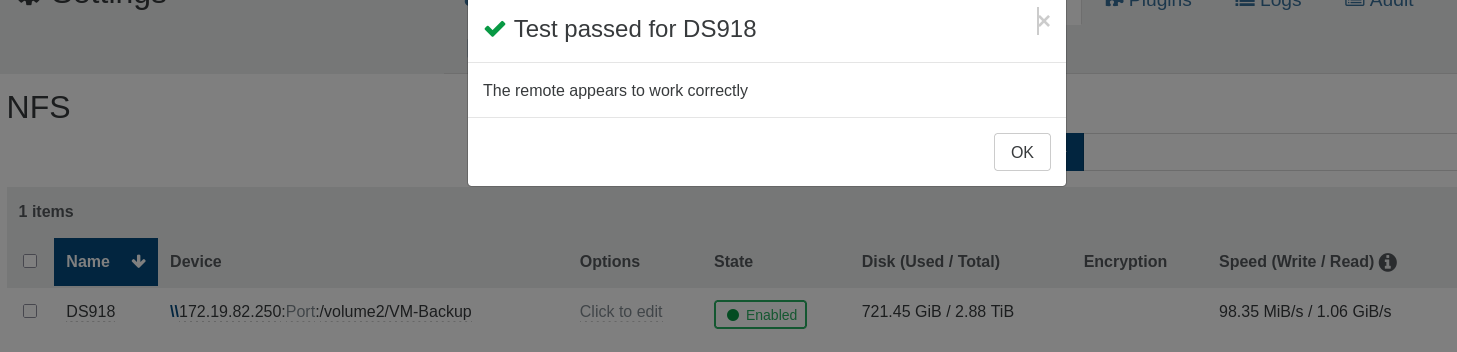

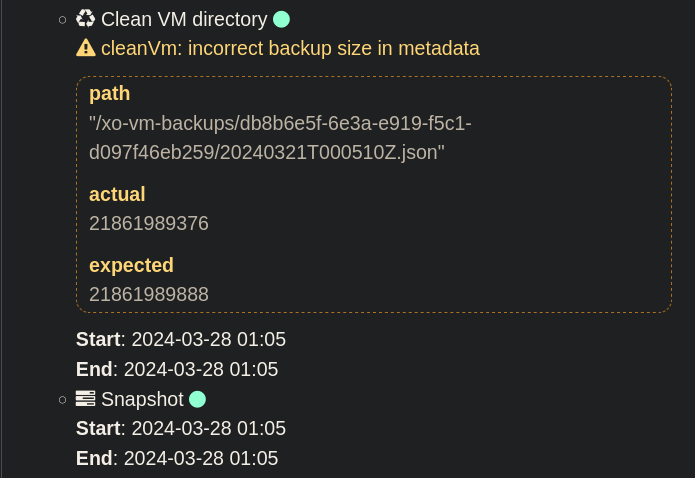

The official docs prefer non-root for the long-running service — that's a least-privilege hardening recommendation for the daemon. Normal XO (UI, backups, hosts, VMs, NFS/CIFS remotes) works fine non-root.

But several XO features assume root anyway. The ESXi/VMware import "install from source" buttons are hard-coded to refuse unless id -u == 0. You already hit this same pattern once before — the credential-encryption/XenStore work (commit 5e8b7fd) existed precisely because non-root broke that too.

So "everything fails non-root" isn't quite it — what fails is the specific subset of features XO wrote assuming it runs as root. Each one needs a separate workaround. The import button is one that cannot be worked around for a non-root process: it's a uid check on the running daemon, full stop.
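To make the "uid check, full stop" point concrete, here is a minimal sketch of what such a gate looks like. It is written in Python purely for illustration and is not XO's actual code (XO is a Node.js application); the function name `can_install_from_source` is hypothetical. It mirrors the `id -u == 0` test by comparing the effective user ID to 0.

```python
import os
from typing import Optional


def can_install_from_source(euid: Optional[int] = None) -> bool:
    """Return True only when the process runs as root (uid 0).

    Hypothetical helper: it mimics an `id -u == 0` style gate and is
    not taken from the XO codebase.
    """
    if euid is None:
        # os.geteuid() reports the effective uid, like `id -u` (Unix only).
        euid = os.geteuid()
    return euid == 0


if __name__ == "__main__":
    print("installer allowed:", can_install_from_source())
```

Because the gate looks only at the uid of the running process, no file permission or group membership granted to a non-root service user changes the result.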

The honest trade-off

You can pick at most two of these three:

1. Service runs non-root (docs' preference)
2. In-app "install nbd from source" button works
3. Script doesn't pre-install packages

The button (#2) requires the daemon to be uid 0. So:

Want the button to work → run that box as SERVICE_USER=root. Simplest, everything XO ships just works, zero manual steps. You give up the non-root hardening.

Want to stay non-root → the button is permanently dead; the only way to get import working is for root to place the binaries once (via the script or by hand). The binaries run fine as non-root; only their installation needs root.
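The two options above can be summarized as a small decision helper. This is a sketch under the assumptions stated in this post only: the button works exactly when the daemon's uid is 0, and import is usable either through the button or when root has already placed the binaries. The names `ImportStatus` and `import_status` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImportStatus:
    button_works: bool      # in-app "install from source" button usable
    import_available: bool  # import can be made to work on this box


def import_status(service_uid: int, binaries_preinstalled: bool) -> ImportStatus:
    """Model the trade-off described above (assumptions from the post)."""
    # The button is gated purely on the daemon running as uid 0.
    button_works = service_uid == 0
    # Import is reachable via the button, or via binaries root placed earlier.
    import_available = button_works or binaries_preinstalled
    return ImportStatus(button_works, import_available)


if __name__ == "__main__":
    scenarios = [
        ("root, nothing pre-installed", 0, False),
        ("non-root, nothing pre-installed", 1000, False),
        ("non-root, binaries placed by root", 1000, True),
    ]
    for label, uid, preinstalled in scenarios:
        print(label, "->", import_status(uid, preinstalled))
```

The uid 1000 used in the scenarios is just a stand-in for whatever non-root account the service runs under.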

My recommendation

Use SERVICE_USER=root on this box. XO's own codebase keeps assuming root (import, and you already saw it with encryption/XenStore), so non-root is a recurring fight against upstream for marginal hardening. Root is fully supported, it's what the official XO appliance ships, and it makes the buttons you want work with no manual package steps. Keep non-root only if hardening that box is a hard requirement and you're fine never using the in-app import installer.